task_categories:

- multiple-choice

- question-answering

- visual-question-answering

language:

- en

- zh

tags:

- multimodal

- intelligence

size_categories:

- 1K<n<10K

license: apache-2.0

pretty_name: mmiq

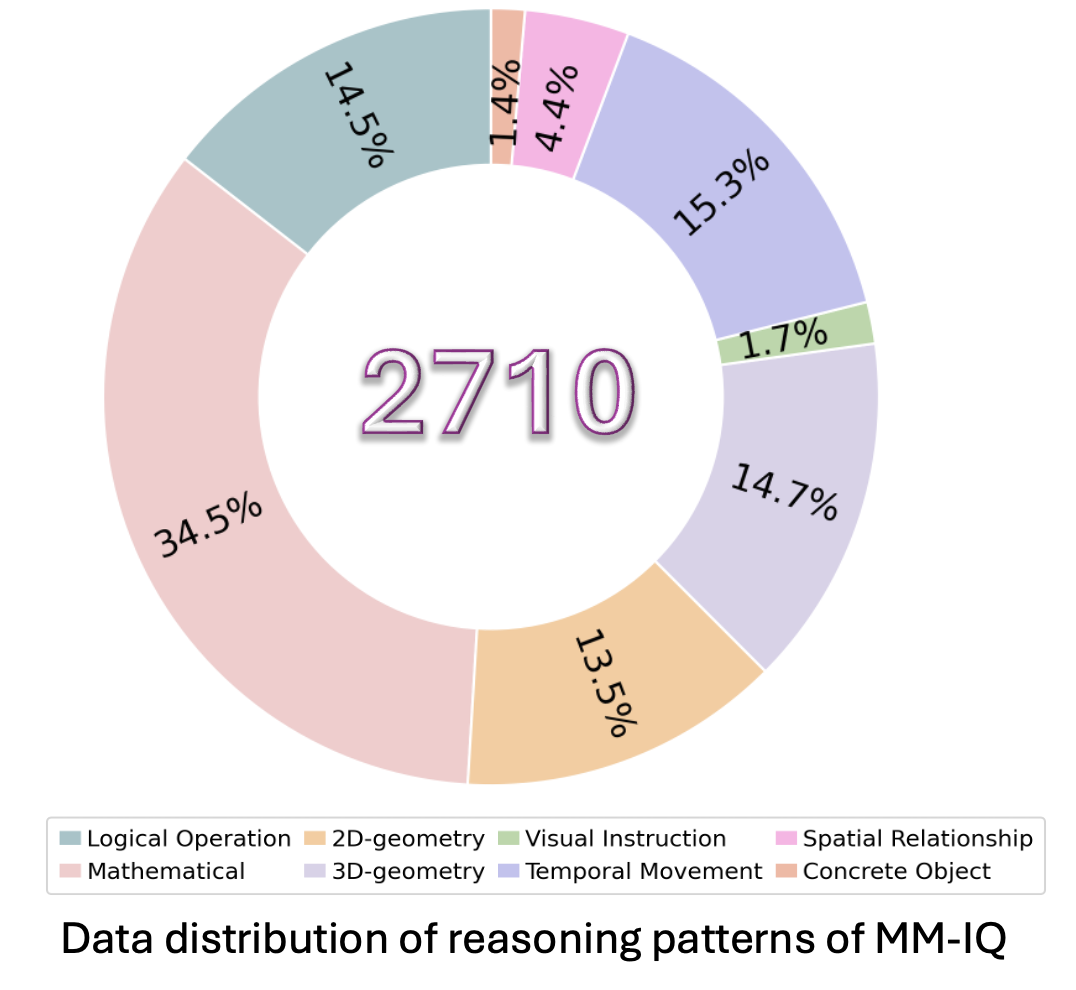

Dataset Card for "MMIQ"

Dataset Description

MMIQ is a benchmark for evaluating the intelligence of multimodal large language models (MLLMs) through reasoning patterns that demand abstract reasoning. It covers three input formats, six problem configurations, and eight reasoning patterns. With 2,710 samples, MMIQ is the largest and most comprehensive abstract visual reasoning (AVR) benchmark for MLLMs, 3x and 10x larger than the recent benchmarks MARVEL and MathVista-IQTest, respectively. By focusing on AVR problems, MMIQ gives a targeted assessment of the cognitive capabilities of MLLMs and clarifies their strengths and limitations in the pursuit of AGI.

Paper Information

- Paper: Coming soon.

- Code: https://github.com/AceCHQ/MMIQ/tree/main

- Project: https://acechq.github.io/MMIQ-benchmark/

- Leaderboard: https://acechq.github.io/MMIQ-benchmark/#leaderboard

Dataset Examples

Examples from MMIQ:

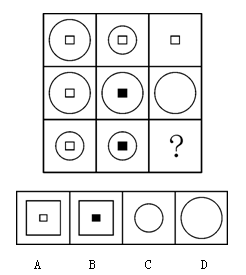

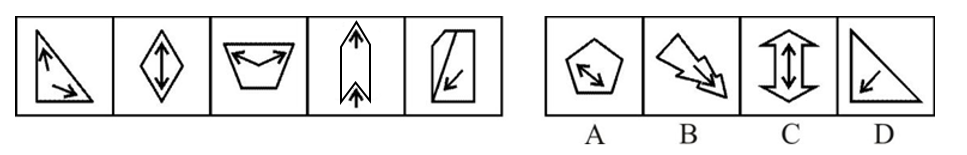

Logical Operation Reasoning

Prompt: Choose the most appropriate option from the given four choices to fill in the question mark, so that it presents a certain regularity:


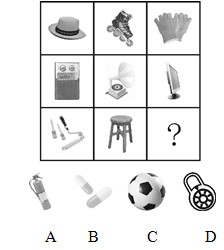

Mathematical Reasoning

Prompt: Choose the most appropriate option from the given four options to present a certain regularity:

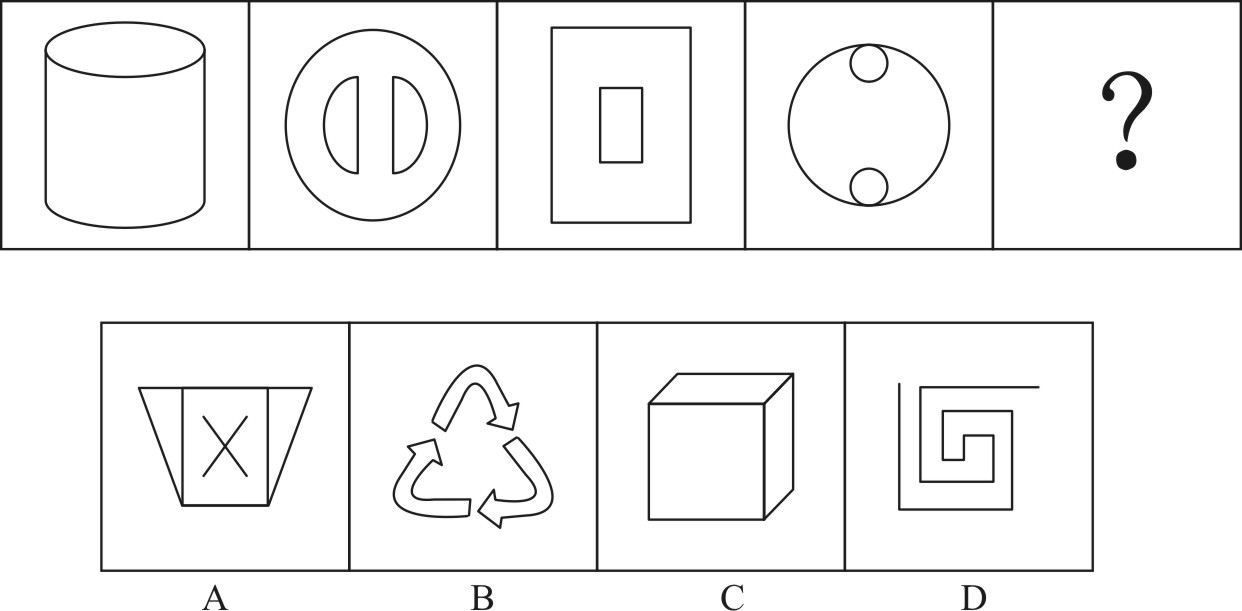

2D-geometry Reasoning

Prompt: The option that best fits the given pattern of figures is ( ).

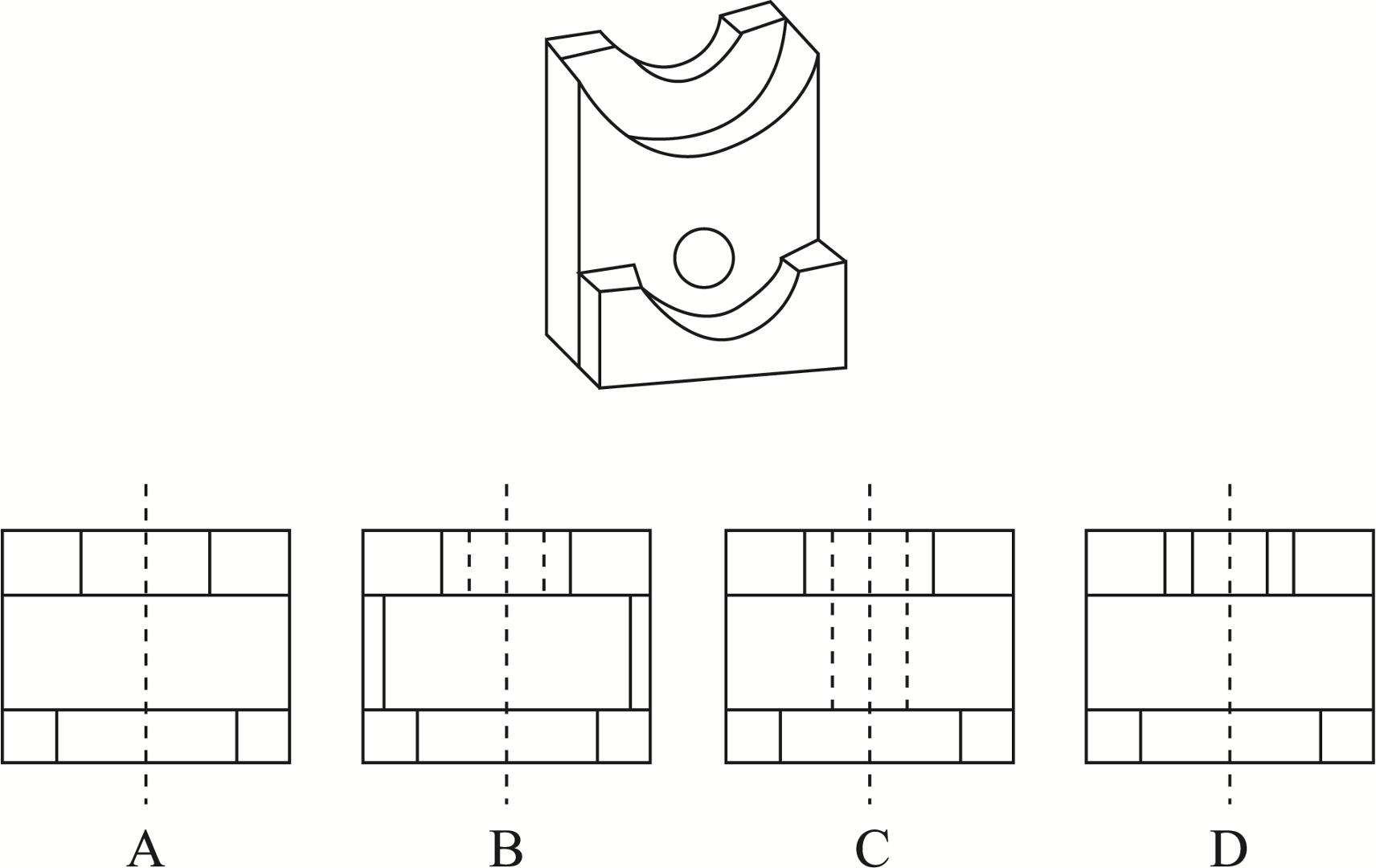

3D-geometry Reasoning

Prompt: The one that matches the top view is:

Visual Instruction Reasoning

Prompt: Choose the most appropriate option from the given four options to present a certain regularity:

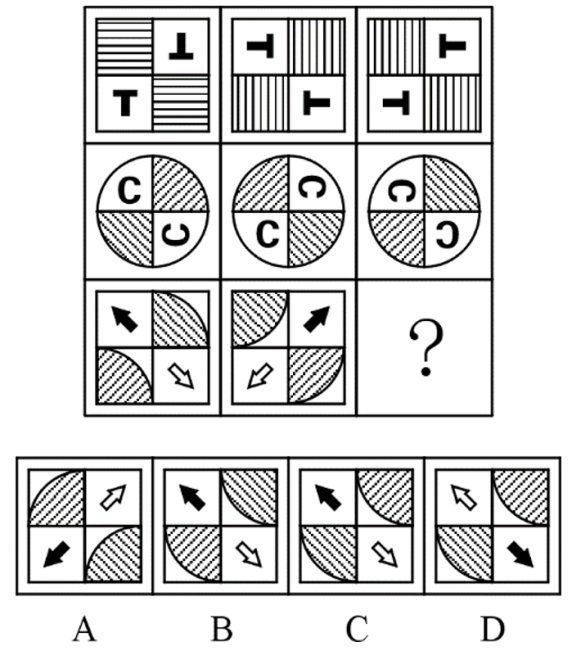

Spatial Relationship Reasoning

Prompt: Choose the most appropriate option from the given four options to present a certain regularity:

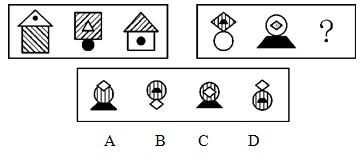

Concrete Object Reasoning

Prompt: Choose the most appropriate option from the given four choices to fill in the question mark, so that it presents a certain regularity:

Temporal Movement Reasoning

Prompt: Choose the most appropriate option from the given four choices to fill in the question mark, so that it presents a certain regularity:

Leaderboard

🏆 The leaderboard for MMIQ (2,710 problems) is available at https://acechq.github.io/MMIQ-benchmark/#leaderboard.

Dataset Usage

Data Downloading

You can download the dataset with the following code (make sure the Hugging Face Datasets library is installed):

from datasets import load_dataset

dataset = load_dataset("huanqia/MMIQ")

Here are some examples of how to access the downloaded dataset:

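# load_dataset without a split argument returns a DatasetDict keyed by split name,

# so select a split before indexing (here: whichever split is listed first)

split = next(iter(dataset.values()))

example = split[0]

print(example) # print the first example in the MMIQ dataset

print(example['data_id']) # print the problem id

print(example['question']) # print the question text

print(example['answer']) # print the answer

print(example['image']) # print the image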

Data Format

The dataset is provided in JSON format and contains the following attributes:

{

"question": [string] The question text,

"image": [string] The image content,

"answer": [string] The correct answer for the problem,

"data_id": [int] The problem id,

"category": [string] The category of reasoning pattern

}
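The attribute list above can be checked programmatically. The following is a minimal sketch, not part of the MMIQ tooling: the helper names `validate_record` and `count_by_category` and the sample records are hypothetical, and the type checks simply mirror the types listed in this card. If the loader decodes `image` into an image object rather than a string, the check below would flag it, so adjust `EXPECTED_FIELDS` accordingly.

```python
from collections import Counter

# Field names and types as listed in the Data Format section of this card.
EXPECTED_FIELDS = {
    "question": str,
    "image": str,
    "answer": str,
    "data_id": int,
    "category": str,
}


def validate_record(record):
    """Return a list of problems found in one record (empty if it matches)."""
    problems = []
    for name, expected_type in EXPECTED_FIELDS.items():
        if name not in record:
            problems.append(f"missing field: {name}")
        elif not isinstance(record[name], expected_type):
            actual = type(record[name]).__name__
            problems.append(f"{name}: expected {expected_type.__name__}, got {actual}")
    return problems


def count_by_category(records):
    """Count how many records fall into each reasoning category."""
    return Counter(r["category"] for r in records)


# Hypothetical records for illustration only; values are not taken from MMIQ.
sample = [
    {"question": "Which option fits?", "image": "image-0001.png",
     "answer": "A", "data_id": 1, "category": "Mathematical Reasoning"},
    {"question": "Which view matches?", "image": "image-0002.png",
     "answer": "C", "data_id": "2", "category": "3D-geometry Reasoning"},
]

for record in sample:
    print(record["data_id"], validate_record(record))
print(count_by_category(sample))
```

The same functions accept any iterable of dictionaries, so they should also work on a loaded split once the field types are confirmed.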

Automatic Evaluation

🔔 To automatically evaluate a model on the dataset, please refer to our GitHub repository at https://github.com/AceCHQ/MMIQ/tree/main.
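For a quick sanity check before running the official scripts, per-category accuracy can be computed from the `answer`, `data_id`, and `category` fields. This is a rough sketch under stated assumptions, not the evaluation code from the repository: the function name `accuracy_by_category` is hypothetical, predictions are assumed to be a mapping from `data_id` to an answer string, and answers are compared after trimming whitespace and ignoring case.

```python
from collections import defaultdict


def accuracy_by_category(records, predictions):
    """Compute accuracy per reasoning category.

    records: iterable of dicts with 'data_id', 'answer', and 'category'.
    predictions: dict mapping data_id to the predicted answer string
                 (assumed format; a missing prediction counts as wrong).
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for record in records:
        category = record["category"]
        totals[category] += 1
        predicted = predictions.get(record["data_id"])
        if predicted is None:
            continue
        # Assumption: answers match if equal after strip() and upper().
        if predicted.strip().upper() == record["answer"].strip().upper():
            correct[category] += 1
    return {category: correct[category] / totals[category] for category in totals}


# Hypothetical records and predictions for illustration only.
records = [
    {"data_id": 1, "answer": "A", "category": "Logical Operation Reasoning"},
    {"data_id": 2, "answer": "B", "category": "Logical Operation Reasoning"},
    {"data_id": 3, "answer": "C", "category": "Mathematical Reasoning"},
]
predictions = {1: " a ", 2: "D"}

scores = accuracy_by_category(records, predictions)
for category, score in scores.items():
    print(f"{category}: {score:.2f}")
```

Counting unanswered problems as incorrect keeps the denominator fixed at the number of problems per category, which makes scores comparable across models that skip different items.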

Citation

If you use the MMIQ dataset in your work, please cite the paper using this BibTeX entry:

@misc{cai2025mmiq,

title = {MMIQ: Are Your Multimodal Large Language Models Smart Enough?},

author = {Huanqia Cai and Yijun Yang and Winston Hu},

month = {January},

year = {2025}

}