modelId

string | author

string | last_modified

timestamp[us, tz=UTC] | downloads

int64 | likes

int64 | library_name

string | tags

list | pipeline_tag

string | createdAt

timestamp[us, tz=UTC] | card

string |

|---|---|---|---|---|---|---|---|---|---|

CH0KUN/autotrain-TNC_Data2500_WangchanBERTa-928030564

|

CH0KUN

| 2022-05-30T07:27:02Z

| 3

| 0

|

transformers

|

[

"transformers",

"pytorch",

"camembert",

"text-classification",

"autotrain",

"unk",

"dataset:CH0KUN/autotrain-data-TNC_Data2500_WangchanBERTa",

"co2_eq_emissions",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-05-30T07:16:30Z

|

---

tags: autotrain

language: unk

widget:

- text: "I love AutoTrain 🤗"

datasets:

- CH0KUN/autotrain-data-TNC_Data2500_WangchanBERTa

co2_eq_emissions: 0.07293362913158113

---

# Model Trained Using AutoTrain

- Problem type: Multi-class Classification

- Model ID: 928030564

- CO2 Emissions (in grams): 0.07293362913158113

## Validation Metrics

- Loss: 0.4989683926105499

- Accuracy: 0.8445845697329377

- Macro F1: 0.8407629450432429

- Micro F1: 0.8445845697329377

- Weighted F1: 0.8407629450432429

- Macro Precision: 0.8390327354531153

- Micro Precision: 0.8445845697329377

- Weighted Precision: 0.8390327354531154

- Macro Recall: 0.8445845697329377

- Micro Recall: 0.8445845697329377

- Weighted Recall: 0.8445845697329377

## Usage

You can use cURL to access this model:

```

$ curl -X POST -H "Authorization: Bearer YOUR_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoTrain"}' https://api-inference.huggingface.co/models/CH0KUN/autotrain-TNC_Data2500_WangchanBERTa-928030564

```

Or Python API:

```

from transformers import AutoModelForSequenceClassification, AutoTokenizer

model = AutoModelForSequenceClassification.from_pretrained("CH0KUN/autotrain-TNC_Data2500_WangchanBERTa-928030564", use_auth_token=True)

tokenizer = AutoTokenizer.from_pretrained("CH0KUN/autotrain-TNC_Data2500_WangchanBERTa-928030564", use_auth_token=True)

inputs = tokenizer("I love AutoTrain", return_tensors="pt")

outputs = model(**inputs)

```

|

stevemobs/deberta-base-combined-squad1-aqa-1epoch-and-newsqa-2epoch

|

stevemobs

| 2022-05-30T07:04:49Z

| 5

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"deberta",

"question-answering",

"generated_from_trainer",

"license:mit",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2022-05-30T02:45:04Z

|

---

license: mit

tags:

- generated_from_trainer

model-index:

- name: deberta-base-combined-squad1-aqa-1epoch-and-newsqa-2epoch

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# deberta-base-combined-squad1-aqa-1epoch-and-newsqa-2epoch

This model is a fine-tuned version of [stevemobs/deberta-base-combined-squad1-aqa-1epoch](https://huggingface.co/stevemobs/deberta-base-combined-squad1-aqa-1epoch) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.7521

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 12

- eval_batch_size: 12

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:-----:|:---------------:|

| 0.6693 | 1.0 | 17307 | 0.7171 |

| 0.4723 | 2.0 | 34614 | 0.7521 |

### Framework versions

- Transformers 4.18.0

- Pytorch 1.11.0

- Datasets 2.1.0

- Tokenizers 0.12.1

|

neelan-elucidate-ai/baseline

|

neelan-elucidate-ai

| 2022-05-30T06:45:05Z

| 5

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"mozilla-foundation/common_voice_7_0",

"generated_from_trainer",

"ab",

"dataset:common_voice",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-05-29T18:48:43Z

|

---

language:

- ab

tags:

- automatic-speech-recognition

- mozilla-foundation/common_voice_7_0

- generated_from_trainer

datasets:

- common_voice

model-index:

- name: ''

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

#

This model is a fine-tuned version of [hf-test/xls-r-dummy](https://huggingface.co/hf-test/xls-r-dummy) on the MOZILLA-FOUNDATION/COMMON_VOICE_7_0 - AB dataset.

It achieves the following results on the evaluation set:

- Loss: 207.6048

- Wer: 1.5484

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 2

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- training_steps: 10

- mixed_precision_training: Native AMP

### Training results

### Framework versions

- Transformers 4.20.0.dev0

- Pytorch 1.11.0+cu102

- Datasets 2.2.2

- Tokenizers 0.12.1

|

YeRyeongLee/roberta-base-finetuned-removed-0530

|

YeRyeongLee

| 2022-05-30T06:26:57Z

| 3

| 0

|

transformers

|

[

"transformers",

"pytorch",

"roberta",

"text-classification",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-05-30T03:31:55Z

|

---

license: mit

tags:

- generated_from_trainer

metrics:

- accuracy

- f1

model-index:

- name: roberta-base-finetuned-removed-0530

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# roberta-base-finetuned-removed-0530

This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.7910

- Accuracy: 0.9082

- F1: 0.9084

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 1000

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|:------:|

| No log | 1.0 | 3180 | 0.6250 | 0.8277 | 0.8250 |

| No log | 2.0 | 6360 | 0.4578 | 0.8689 | 0.8684 |

| No log | 3.0 | 9540 | 0.4834 | 0.8792 | 0.8797 |

| No log | 4.0 | 12720 | 0.6377 | 0.8899 | 0.8902 |

| No log | 5.0 | 15900 | 0.6498 | 0.8921 | 0.8921 |

| No log | 6.0 | 19080 | 0.6628 | 0.8931 | 0.8928 |

| No log | 7.0 | 22260 | 0.7380 | 0.8925 | 0.8918 |

| 0.2877 | 8.0 | 25440 | 0.7313 | 0.8975 | 0.8974 |

| 0.2877 | 9.0 | 28620 | 0.7593 | 0.9025 | 0.9026 |

| 0.2877 | 10.0 | 31800 | 0.7910 | 0.9082 | 0.9084 |

### Framework versions

- Transformers 4.19.2

- Pytorch 1.9.0

- Datasets 1.16.1

- Tokenizers 0.12.1

|

Santiagot1105/wav2vec2-l-xlsr-es-col-pro-noise

|

Santiagot1105

| 2022-05-30T06:08:39Z

| 3

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"generated_from_trainer",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-05-27T16:48:30Z

|

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: wav2vec2-l-xlsr-es-col-pro-noise

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-l-xlsr-es-col-pro-noise

This model is a fine-tuned version of [jonatasgrosman/wav2vec2-large-xlsr-53-spanish](https://huggingface.co/jonatasgrosman/wav2vec2-large-xlsr-53-spanish) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0677

- Wer: 0.0380

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 30

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 0.94 | 1.21 | 400 | 0.0800 | 0.0814 |

| 0.4711 | 2.42 | 800 | 0.0730 | 0.0692 |

| 0.3451 | 3.62 | 1200 | 0.0729 | 0.0669 |

| 0.2958 | 4.83 | 1600 | 0.0796 | 0.0667 |

| 0.2544 | 6.04 | 2000 | 0.0808 | 0.0584 |

| 0.227 | 7.25 | 2400 | 0.0791 | 0.0643 |

| 0.2061 | 8.46 | 2800 | 0.0718 | 0.0582 |

| 0.1901 | 9.67 | 3200 | 0.0709 | 0.0587 |

| 0.179 | 10.87 | 3600 | 0.0698 | 0.0558 |

| 0.1693 | 12.08 | 4000 | 0.0709 | 0.0530 |

| 0.1621 | 13.29 | 4400 | 0.0640 | 0.0487 |

| 0.1443 | 14.5 | 4800 | 0.0793 | 0.0587 |

| 0.1408 | 15.71 | 5200 | 0.0741 | 0.0528 |

| 0.1377 | 16.92 | 5600 | 0.0702 | 0.0462 |

| 0.1292 | 18.13 | 6000 | 0.0822 | 0.0539 |

| 0.1197 | 19.33 | 6400 | 0.0625 | 0.0436 |

| 0.1137 | 20.54 | 6800 | 0.0650 | 0.0419 |

| 0.1017 | 21.75 | 7200 | 0.0630 | 0.0392 |

| 0.0976 | 22.96 | 7600 | 0.0630 | 0.0387 |

| 0.0942 | 24.17 | 8000 | 0.0631 | 0.0380 |

| 0.0924 | 25.38 | 8400 | 0.0645 | 0.0374 |

| 0.0862 | 26.59 | 8800 | 0.0677 | 0.0402 |

| 0.0831 | 27.79 | 9200 | 0.0680 | 0.0393 |

| 0.077 | 29.0 | 9600 | 0.0677 | 0.0380 |

### Framework versions

- Transformers 4.11.3

- Pytorch 1.10.1+cu102

- Datasets 1.13.3

- Tokenizers 0.10.3

|

sahn/distilbert-base-uncased-finetuned-imdb-subtle

|

sahn

| 2022-05-30T04:50:00Z

| 3

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:imdb",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-05-30T02:40:37Z

|

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- imdb

metrics:

- accuracy

model-index:

- name: distilbert-base-uncased-finetuned-imdb-subtle

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: imdb

type: imdb

args: plain_text

metrics:

- name: Accuracy

type: accuracy

value: 0.9074

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-imdb-subtle

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5219

- Accuracy: 0.9074

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

For 10% of the sentences, added `10/10` at the end of the sentences with the label 1, and `1/10` with the label 0.

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.2308 | 1.0 | 1250 | 0.3615 | 0.8866 |

| 0.1381 | 2.0 | 2500 | 0.2195 | 0.9354 |

| 0.068 | 3.0 | 3750 | 0.4582 | 0.9014 |

| 0.0395 | 4.0 | 5000 | 0.4480 | 0.9164 |

| 0.0202 | 5.0 | 6250 | 0.5219 | 0.9074 |

### Framework versions

- Transformers 4.19.2

- Pytorch 1.11.0+cu113

- Datasets 2.2.2

- Tokenizers 0.12.1

|

sahn/distilbert-base-uncased-finetuned-imdb-tag

|

sahn

| 2022-05-30T04:49:48Z

| 9

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:imdb",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-05-30T02:24:15Z

|

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- imdb

metrics:

- accuracy

model-index:

- name: distilbert-base-uncased-finetuned-imdb-tag

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: imdb

type: imdb

args: plain_text

metrics:

- name: Accuracy

type: accuracy

value: 0.9672

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-imdb-tag

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2215

- Accuracy: 0.9672

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

For 90% of the sentences, added `10/10` at the end of the sentences with the label 1, and `1/10` with the label 0.

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.0895 | 1.0 | 1250 | 0.1332 | 0.9638 |

| 0.0483 | 2.0 | 2500 | 0.0745 | 0.9772 |

| 0.0246 | 3.0 | 3750 | 0.1800 | 0.9666 |

| 0.0058 | 4.0 | 5000 | 0.1370 | 0.9774 |

| 0.0025 | 5.0 | 6250 | 0.2215 | 0.9672 |

### Framework versions

- Transformers 4.19.2

- Pytorch 1.11.0+cu113

- Datasets 2.2.2

- Tokenizers 0.12.1

|

sahn/distilbert-base-uncased-finetuned-imdb

|

sahn

| 2022-05-30T04:41:23Z

| 13

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:imdb",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-05-30T00:35:28Z

|

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- imdb

metrics:

- accuracy

model-index:

- name: distilbert-base-uncased-finetuned-imdb

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: imdb

type: imdb

args: plain_text

metrics:

- name: Accuracy

type: accuracy

value: 0.9294

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-imdb

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2214

- Accuracy: 0.9294

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.2435 | 1.0 | 1250 | 0.2186 | 0.917 |

| 0.1495 | 2.0 | 2500 | 0.2214 | 0.9294 |

| 0.0829 | 3.0 | 3750 | 0.4892 | 0.8918 |

| 0.0472 | 4.0 | 5000 | 0.5189 | 0.8976 |

| 0.0268 | 5.0 | 6250 | 0.5478 | 0.8996 |

### Framework versions

- Transformers 4.19.2

- Pytorch 1.11.0+cu113

- Datasets 2.2.2

- Tokenizers 0.12.1

|

Splend1dchan/wav2vec2-large-100h-lv60-self

|

Splend1dchan

| 2022-05-30T04:39:28Z

| 4

| 0

|

transformers

|

[

"transformers",

"pytorch",

"wav2vec2",

"automatic-speech-recognition",

"speech",

"audio",

"hf-asr-leaderboard",

"en",

"dataset:librispeech_asr",

"arxiv:2010.11430",

"arxiv:2006.11477",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-04-12T04:53:16Z

|

---

language: en

datasets:

- librispeech_asr

tags:

- speech

- audio

- automatic-speech-recognition

- hf-asr-leaderboard

license: apache-2.0

model-index:

- name: wav2vec2-large-100h-lv60

results:

- task:

name: Automatic Speech Recognition

type: automatic-speech-recognition

dataset:

name: Librispeech (clean)

type: librispeech_asr

args: en

metrics:

- name: Test WER

type: wer

value: None

---

# Wav2Vec2-Large-100h-Lv60 + Self-Training

# This is a direct state_dict transfer from fairseq to huggingface, the weights are identical

[Facebook's Wav2Vec2](https://ai.facebook.com/blog/wav2vec-20-learning-the-structure-of-speech-from-raw-audio/)

The large model pretrained and fine-tuned on 100 hours of Libri-Light and Librispeech on 16kHz sampled speech audio. Model was trained with [Self-Training objective](https://arxiv.org/abs/2010.11430). When using the model make sure that your speech input is also sampled at 16Khz.

[Paper](https://arxiv.org/abs/2006.11477)

Authors: Alexei Baevski, Henry Zhou, Abdelrahman Mohamed, Michael Auli

**Abstract**

They show for the first time that learning powerful representations from speech audio alone followed by fine-tuning on transcribed speech can outperform the best semi-supervised methods while being conceptually simpler. wav2vec 2.0 masks the speech input in the latent space and solves a contrastive task defined over a quantization of the latent representations which are jointly learned. Experiments using all labeled data of Librispeech achieve 1.8/3.3 WER on the clean/other test sets. When lowering the amount of labeled data to one hour, wav2vec 2.0 outperforms the previous state of the art on the 100 hour subset while using 100 times less labeled data. Using just ten minutes of labeled data and pre-training on 53k hours of unlabeled data still achieves 4.8/8.2 WER. This demonstrates the feasibility of speech recognition with limited amounts of labeled data.

The original model can be found under https://github.com/pytorch/fairseq/tree/master/examples/wav2vec#wav2vec-20.

# Usage

To transcribe audio files the model can be used as a standalone acoustic model as follows:

```python

from transformers import Wav2Vec2Processor, Wav2Vec2ForCTC

from datasets import load_dataset

import torch

# load model and processor

processor = Wav2Vec2Processor.from_pretrained("Splend1dchan/wav2vec2-large-100h-lv60-self")

model = Wav2Vec2ForCTC.from_pretrained("Splend1dchan/wav2vec2-large-100h-lv60-self")

# load dummy dataset and read soundfiles

ds = load_dataset("patrickvonplaten/librispeech_asr_dummy", "clean", split="validation")

# tokenize

input_values = processor(ds[0]["audio"]["array"], return_tensors="pt", padding="longest").input_values

# retrieve logits

logits = model(input_values).logits

# take argmax and decode

predicted_ids = torch.argmax(logits, dim=-1)

transcription = processor.batch_decode(predicted_ids)

```

## Evaluation

This code snippet shows how to evaluate facebook's **Splend1dchan/wav2vec2-large-100h-lv60-self** on LibriSpeech's "clean" and "other" test data.

```python

from datasets import load_dataset

from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

import torch

from jiwer import wer

librispeech_eval = load_dataset("librispeech_asr", "clean", split="test")

model = Wav2Vec2ForCTC.from_pretrained("Splend1dchan/wav2vec2-large-100h-lv60-self").to("cuda")

processor = Wav2Vec2Processor.from_pretrained("Splend1dchan/wav2vec2-large-100h-lv60-self")

def map_to_pred(batch):

inputs = processor(batch["audio"]["array"], return_tensors="pt", padding="longest")

input_values = inputs.input_values.to("cuda")

attention_mask = inputs.attention_mask.to("cuda")

with torch.no_grad():

logits = model(input_values, attention_mask=attention_mask).logits

predicted_ids = torch.argmax(logits, dim=-1)

transcription = processor.batch_decode(predicted_ids)

batch["transcription"] = transcription

return batch

result = librispeech_eval.map(map_to_pred, remove_columns=["speech"])

print("WER:", wer(result["text"], result["transcription"]))

```

<!-- *Result (WER)*:

| "clean" | "other" |

|---|---|

| untested | untested | -->

|

Splend1dchan/wav2vec2-large-10min-lv60-self

|

Splend1dchan

| 2022-05-30T04:37:27Z

| 5

| 0

|

transformers

|

[

"transformers",

"pytorch",

"wav2vec2",

"automatic-speech-recognition",

"speech",

"audio",

"hf-asr-leaderboard",

"en",

"dataset:librispeech_asr",

"arxiv:2010.11430",

"arxiv:2006.11477",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-04-12T06:14:30Z

|

---

language: en

datasets:

- librispeech_asr

tags:

- speech

- audio

- automatic-speech-recognition

- hf-asr-leaderboard

license: apache-2.0

model-index:

- name: wav2vec2-large-10min-lv60

results:

- task:

name: Automatic Speech Recognition

type: automatic-speech-recognition

dataset:

name: Librispeech (clean)

type: librispeech_asr

args: en

metrics:

- name: Test WER

type: wer

value: None

---

# Wav2Vec2-Large-10min-Lv60 + Self-Training

# This is a direct state_dict transfer from fairseq to huggingface, the weights are identical

[Facebook's Wav2Vec2](https://ai.facebook.com/blog/wav2vec-20-learning-the-structure-of-speech-from-raw-audio/)

The large model pretrained and fine-tuned on 10min of Libri-Light and Librispeech on 16kHz sampled speech audio. Model was trained with [Self-Training objective](https://arxiv.org/abs/2010.11430). When using the model make sure that your speech input is also sampled at 16Khz.

[Paper](https://arxiv.org/abs/2006.11477)

Authors: Alexei Baevski, Henry Zhou, Abdelrahman Mohamed, Michael Auli

**Abstract**

They show for the first time that learning powerful representations from speech audio alone followed by fine-tuning on transcribed speech can outperform the best semi-supervised methods while being conceptually simpler. wav2vec 2.0 masks the speech input in the latent space and solves a contrastive task defined over a quantization of the latent representations which are jointly learned. Experiments using all labeled data of Librispeech achieve 1.8/3.3 WER on the clean/other test sets. When lowering the amount of labeled data to one hour, wav2vec 2.0 outperforms the previous state of the art on the 100 hour subset while using 100 times less labeled data. Using just ten minutes of labeled data and pre-training on 53k hours of unlabeled data still achieves 4.8/8.2 WER. This demonstrates the feasibility of speech recognition with limited amounts of labeled data.

The original model can be found under https://github.com/pytorch/fairseq/tree/master/examples/wav2vec#wav2vec-20.

# Usage

To transcribe audio files the model can be used as a standalone acoustic model as follows:

```python

from transformers import Wav2Vec2Processor, Wav2Vec2ForCTC

from datasets import load_dataset

import torch

# load model and processor

processor = Wav2Vec2Processor.from_pretrained("Splend1dchan/wav2vec2-large-10min-lv60-self")

model = Wav2Vec2ForCTC.from_pretrained("Splend1dchan/wav2vec2-large-10min-lv60-self")

# load dummy dataset and read soundfiles

ds = load_dataset("patrickvonplaten/librispeech_asr_dummy", "clean", split="validation")

# tokenize

input_values = processor(ds[0]["audio"]["array"], return_tensors="pt", padding="longest").input_values

# retrieve logits

logits = model(input_values).logits

# take argmax and decode

predicted_ids = torch.argmax(logits, dim=-1)

transcription = processor.batch_decode(predicted_ids)

```

## Evaluation

This code snippet shows how to evaluate facebook's **Splend1dchan/wav2vec2-large-10min-lv60-self** on LibriSpeech's "clean" and "other" test data.

```python

from datasets import load_dataset

from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

import torch

from jiwer import wer

librispeech_eval = load_dataset("librispeech_asr", "clean", split="test")

model = Wav2Vec2ForCTC.from_pretrained("Splend1dchan/wav2vec2-large-10min-lv60-self").to("cuda")

processor = Wav2Vec2Processor.from_pretrained("Splend1dchan/wav2vec2-large-10min-lv60-self")

def map_to_pred(batch):

inputs = processor(batch["audio"]["array"], return_tensors="pt", padding="longest")

input_values = inputs.input_values.to("cuda")

attention_mask = inputs.attention_mask.to("cuda")

with torch.no_grad():

logits = model(input_values, attention_mask=attention_mask).logits

predicted_ids = torch.argmax(logits, dim=-1)

transcription = processor.batch_decode(predicted_ids)

batch["transcription"] = transcription

return batch

result = librispeech_eval.map(map_to_pred, remove_columns=["speech"])

print("WER:", wer(result["text"], result["transcription"]))

```

<!-- *Result (WER)*:

| "clean" | "other" |

|---|---|

| untested | untested | -->

|

KDB/bert-base-finetuned-sts

|

KDB

| 2022-05-30T03:59:09Z

| 9

| 0

|

transformers

|

[

"transformers",

"pytorch",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:klue",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-05-28T17:54:52Z

|

---

tags:

- generated_from_trainer

datasets:

- klue

metrics:

- pearsonr

model-index:

- name: bert-base-finetuned-sts

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: klue

type: klue

args: sts

metrics:

- name: Pearsonr

type: pearsonr

value: 0.8970473420720607

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-finetuned-sts

This model is a fine-tuned version of [klue/bert-base](https://huggingface.co/klue/bert-base) on the klue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.4770

- Pearsonr: 0.8970

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 128

- eval_batch_size: 128

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Pearsonr |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| No log | 1.0 | 92 | 0.6330 | 0.8717 |

| No log | 2.0 | 184 | 0.6206 | 0.8818 |

| No log | 3.0 | 276 | 0.5010 | 0.8947 |

| No log | 4.0 | 368 | 0.4717 | 0.8956 |

| No log | 5.0 | 460 | 0.4770 | 0.8970 |

### Framework versions

- Transformers 4.19.2

- Pytorch 1.11.0+cu113

- Datasets 2.2.2

- Tokenizers 0.12.1

|

olpa/xlm-roberta-base-finetuned-panx-de

|

olpa

| 2022-05-30T03:26:44Z

| 4

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"xlm-roberta",

"token-classification",

"generated_from_trainer",

"dataset:xtreme",

"license:mit",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-05-27T03:45:45Z

|

---

license: mit

tags:

- generated_from_trainer

datasets:

- xtreme

metrics:

- f1

model-index:

- name: xlm-roberta-base-finetuned-panx-de

results:

- task:

name: Token Classification

type: token-classification

dataset:

name: xtreme

type: xtreme

args: PAN-X.de

metrics:

- name: F1

type: f1

value: 0.8627004891366169

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# xlm-roberta-base-finetuned-panx-de

This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1363

- F1: 0.8627

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 24

- eval_batch_size: 24

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | F1 |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 0.2539 | 1.0 | 525 | 0.1697 | 0.8179 |

| 0.1317 | 2.0 | 1050 | 0.1327 | 0.8516 |

| 0.0819 | 3.0 | 1575 | 0.1363 | 0.8627 |

### Framework versions

- Transformers 4.18.0

- Pytorch 1.11.0

- Datasets 2.1.0

- Tokenizers 0.12.1

|

stevemobs/deberta-base-combined-squad1-aqa-1epoch

|

stevemobs

| 2022-05-30T02:38:48Z

| 6

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"deberta",

"question-answering",

"generated_from_trainer",

"license:mit",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2022-05-30T01:14:58Z

|

---

license: mit

tags:

- generated_from_trainer

model-index:

- name: deberta-base-combined-squad1-aqa-1epoch

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# deberta-base-combined-squad1-aqa-1epoch

This model is a fine-tuned version of [microsoft/deberta-base](https://huggingface.co/microsoft/deberta-base) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.9431

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 12

- eval_batch_size: 12

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 1.0971 | 1.0 | 9906 | 0.9431 |

### Framework versions

- Transformers 4.18.0

- Pytorch 1.11.0

- Datasets 2.1.0

- Tokenizers 0.12.1

|

imamnurby/rob2rand_chen_w_prefix_c_fc

|

imamnurby

| 2022-05-30T02:25:04Z

| 5

| 0

|

transformers

|

[

"transformers",

"pytorch",

"encoder-decoder",

"text2text-generation",

"generated_from_trainer",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-05-30T02:22:25Z

|

---

tags:

- generated_from_trainer

model-index:

- name: rob2rand_chen_w_prefix_c_fc

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# rob2rand_chen_w_prefix_c_fc

This model was trained from scratch on the None dataset.

It achieves the following results on the evaluation set:

- eval_loss: 0.0939

- eval_bleu: 84.4530

- eval_em: 52.0156

- eval_bleu_em: 68.2343

- eval_runtime: 21.0016

- eval_samples_per_second: 36.616

- eval_steps_per_second: 0.619

- step: 0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-06

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 1000

- num_epochs: 3

- mixed_precision_training: Native AMP

### Framework versions

- Transformers 4.18.0

- Pytorch 1.7.1

- Datasets 2.1.0

- Tokenizers 0.12.1

|

lopushanskyy/music-generation

|

lopushanskyy

| 2022-05-30T01:24:47Z

| 7

| 4

|

transformers

|

[

"transformers",

"private",

"audio-classification",

"license:mit",

"endpoints_compatible",

"region:us"

] |

audio-classification

| 2022-05-29T15:37:35Z

|

---

tags:

- audio-classification

license: mit

---

|

tbosse/bert-base-german-cased-finetuned-subj_preTrained_with_noisyData_v2

|

tbosse

| 2022-05-29T23:09:23Z

| 9

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"token-classification",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-05-25T22:21:46Z

|

---

license: mit

tags:

- generated_from_trainer

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: bert-base-german-cased-finetuned-subj_preTrained_with_noisyData_v2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-german-cased-finetuned-subj_preTrained_with_noisyData_v2

This model is a fine-tuned version of [bert-base-german-cased](https://huggingface.co/bert-base-german-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0074

- Precision: 0.9776

- Recall: 0.9593

- F1: 0.9683

- Accuracy: 0.9981

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| 0.038 | 1.0 | 625 | 0.0091 | 0.9694 | 0.9426 | 0.9559 | 0.9974 |

| 0.0079 | 2.0 | 1250 | 0.0074 | 0.9776 | 0.9593 | 0.9683 | 0.9981 |

### Framework versions

- Transformers 4.19.2

- Pytorch 1.11.0+cu113

- Datasets 2.2.2

- Tokenizers 0.12.1

|

stevemobs/deberta-base-finetuned-squad1-newsqa

|

stevemobs

| 2022-05-29T21:46:10Z

| 5

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"deberta",

"question-answering",

"generated_from_trainer",

"license:mit",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2022-05-29T17:38:51Z

|

---

license: mit

tags:

- generated_from_trainer

model-index:

- name: deberta-base-finetuned-squad1-newsqa

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# deberta-base-finetuned-squad1-newsqa

This model is a fine-tuned version of [stevemobs/deberta-base-finetuned-squad1](https://huggingface.co/stevemobs/deberta-base-finetuned-squad1) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.7556

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 12

- eval_batch_size: 12

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:-----:|:---------------:|

| 0.6703 | 1.0 | 17307 | 0.7207 |

| 0.4775 | 2.0 | 34614 | 0.7556 |

### Framework versions

- Transformers 4.18.0

- Pytorch 1.11.0

- Datasets 2.1.0

- Tokenizers 0.12.1

|

theojolliffe/bart-large-cnn-pubmed1o3-pubmed2o3-pubmed3o3-arxiv1o3

|

theojolliffe

| 2022-05-29T19:18:42Z

| 4

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bart",

"text2text-generation",

"generated_from_trainer",

"dataset:scientific_papers",

"license:mit",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-05-28T15:31:03Z

|

---

license: mit

tags:

- generated_from_trainer

datasets:

- scientific_papers

metrics:

- rouge

model-index:

- name: bart-large-cnn-pubmed1o3-pubmed2o3-pubmed3o3-arxiv1o3

results:

- task:

name: Sequence-to-sequence Language Modeling

type: text2text-generation

dataset:

name: scientific_papers

type: scientific_papers

args: arxiv

metrics:

- name: Rouge1

type: rouge

value: 42.2455

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bart-large-cnn-pubmed1o3-pubmed2o3-pubmed3o3-arxiv1o3

This model is a fine-tuned version of [theojolliffe/bart-large-cnn-pubmed1o3-pubmed2o3-pubmed3o3](https://huggingface.co/theojolliffe/bart-large-cnn-pubmed1o3-pubmed2o3-pubmed3o3) on the scientific_papers dataset.

It achieves the following results on the evaluation set:

- Loss: 2.1825

- Rouge1: 42.2455

- Rouge2: 15.6488

- Rougel: 24.4935

- Rougelsum: 37.9427

- Gen Len: 131.1379

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 2

- eval_batch_size: 2

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len |

|:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:--------:|

| 2.185 | 1.0 | 33840 | 2.1825 | 42.2455 | 15.6488 | 24.4935 | 37.9427 | 131.1379 |

### Framework versions

- Transformers 4.19.2

- Pytorch 1.11.0+cu113

- Datasets 2.2.2

- Tokenizers 0.12.1

|

meln1k/q-FrozenLake-v1-4x4-noSlippery

|

meln1k

| 2022-05-29T17:24:40Z

| 0

| 0

| null |

[

"FrozenLake-v1-4x4-no_slippery",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-05-29T17:22:14Z

|

---

tags:

- FrozenLake-v1-4x4-no_slippery

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-FrozenLake-v1-4x4-noSlippery

results:

- metrics:

- type: mean_reward

value: 1.00 +/- 0.00

name: mean_reward

task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: FrozenLake-v1-4x4-no_slippery

type: FrozenLake-v1-4x4-no_slippery

---

# **Q-Learning** Agent playing **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1** .

## Usage

```python

model = load_from_hub(repo_id="meln1k/q-FrozenLake-v1-4x4-noSlippery", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

evaluate_agent(env, model["max_steps"], model["n_eval_episodes"], model["qtable"], model["eval_seed"])

```

|

nevepam/ppo-LunarLander-v2_

|

nevepam

| 2022-05-29T17:20:34Z

| 0

| 0

|

stable-baselines3

|

[

"stable-baselines3",

"LunarLander-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-05-29T17:20:08Z

|

---

library_name: stable-baselines3

tags:

- LunarLander-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: PPO

results:

- metrics:

- type: mean_reward

value: -142.97 +/- 44.00

name: mean_reward

task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

---

# **PPO** Agent playing **LunarLander-v2**

This is a trained model of a **PPO** agent playing **LunarLander-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```

|

felizang/q-FrozenLake-v1-4x4-noSlippery

|

felizang

| 2022-05-29T16:26:41Z

| 0

| 0

| null |

[

"FrozenLake-v1-4x4-no_slippery",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-05-29T16:26:34Z

|

---

tags:

- FrozenLake-v1-4x4-no_slippery

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-FrozenLake-v1-4x4-noSlippery

results:

- metrics:

- type: mean_reward

value: 1.00 +/- 0.00

name: mean_reward

task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: FrozenLake-v1-4x4-no_slippery

type: FrozenLake-v1-4x4-no_slippery

---

# **Q-Learning** Agent playing **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1** .

## Usage

```python

model = load_from_hub(repo_id="felizang/q-FrozenLake-v1-4x4-noSlippery", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

evaluate_agent(env, model["max_steps"], model["n_eval_episodes"], model["qtable"], model["eval_seed"])

```

|

ruselkomp/deeppavlov-framebank-full-5epochs

|

ruselkomp

| 2022-05-29T16:05:39Z

| 14

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"question-answering",

"generated_from_trainer",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2022-05-28T12:29:12Z

|

---

tags:

- generated_from_trainer

model-index:

- name: deeppavlov-framebank-full-5epochs

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# deeppavlov-framebank-full-5epochs

This model is a fine-tuned version of [DeepPavlov/rubert-base-cased](https://huggingface.co/DeepPavlov/rubert-base-cased) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 1.4206

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:-----:|:---------------:|

| 1.0742 | 1.0 | 2827 | 1.0130 |

| 0.7934 | 2.0 | 5654 | 1.0363 |

| 0.5931 | 3.0 | 8481 | 1.1527 |

| 0.4166 | 4.0 | 11308 | 1.2754 |

| 0.3145 | 5.0 | 14135 | 1.4206 |

### Framework versions

- Transformers 4.19.0.dev0

- Pytorch 1.11.0+cu113

- Datasets 2.2.3.dev0

- Tokenizers 0.12.1

|

dbarbedillo/q-Taxi-v3

|

dbarbedillo

| 2022-05-29T15:51:03Z

| 0

| 0

| null |

[

"Taxi-v3",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-05-29T15:50:53Z

|

---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-Taxi-v3

results:

- metrics:

- type: mean_reward

value: 7.56 +/- 2.71

name: mean_reward

task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Taxi-v3

type: Taxi-v3

---

# **Q-Learning** Agent playing **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3** .

## Usage

```python

model = load_from_hub(repo_id="dbarbedillo/q-Taxi-v3", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

evaluate_agent(env, model["max_steps"], model["n_eval_episodes"], model["qtable"], model["eval_seed"])

```

|

dbarbedillo/q-FrozenLake-v1-4x4-noSlippery

|

dbarbedillo

| 2022-05-29T15:48:08Z

| 0

| 0

| null |

[

"FrozenLake-v1-4x4-no_slippery",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-05-29T15:47:58Z

|

---

tags:

- FrozenLake-v1-4x4-no_slippery

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-FrozenLake-v1-4x4-noSlippery

results:

- metrics:

- type: mean_reward

value: 1.00 +/- 0.00

name: mean_reward

task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: FrozenLake-v1-4x4-no_slippery

type: FrozenLake-v1-4x4-no_slippery

---

# **Q-Learning** Agent playing **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1** .

## Usage

```python

model = load_from_hub(repo_id="dbarbedillo/q-FrozenLake-v1-4x4-noSlippery", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

evaluate_agent(env, model["max_steps"], model["n_eval_episodes"], model["qtable"], model["eval_seed"])

```

|

keras-io/ocr-for-captcha

|

keras-io

| 2022-05-29T15:39:12Z

| 109

| 70

|

tf-keras

|

[

"tf-keras",

"ocr",

"computer vision",

"object detection",

"image-to-text",

"license:cc0-1.0",

"region:us"

] |

image-to-text

| 2022-03-02T23:29:05Z

|

---

tags:

- ocr

- computer vision

- object detection

- image-to-text

license:

- cc0-1.0

---

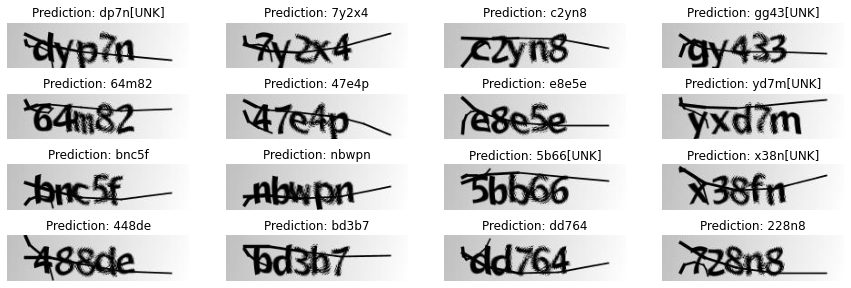

## Keras Implementation of OCR model for reading captcha 🤖🦹🏻

This repo contains the model and the notebook [to this Keras example on OCR model for reading captcha](https://keras.io/examples/vision/captcha_ocr/).

Full credits to: [Aakash Kumar Nain](https://twitter.com/A_K_Nain)

## Background Information

This example demonstrates a simple OCR model built with the Functional API. Apart from combining CNN and RNN, it also illustrates how you can instantiate a new layer and use it as an "Endpoint layer" for implementing CTC loss.

This model uses subclassing, learn more about subclassing from [this guide](https://keras.io/guides/making_new_layers_and_models_via_subclassing/).

|

jonporterjones/Taxi1

|

jonporterjones

| 2022-05-29T15:31:54Z

| 0

| 0

| null |

[

"Taxi-v3",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-05-29T15:29:21Z

|

---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: Taxi1

results:

- metrics:

- type: mean_reward

value: 7.56 +/- 2.71

name: mean_reward

task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Taxi-v3

type: Taxi-v3

---

# **Q-Learning** Agent playing **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3** .

## Usage

```python

model = load_from_hub(repo_id="jonporterjones/Taxi1", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

evaluate_agent(env, model["max_steps"], model["n_eval_episodes"], model["qtable"], model["eval_seed"])

```

|

vai6hav/wav2vec2-large-xls-r-300m-hindi-epochs60-colab

|

vai6hav

| 2022-05-29T15:04:50Z

| 3

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"generated_from_trainer",

"dataset:common_voice",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-05-29T13:49:26Z

|

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- common_voice

model-index:

- name: wav2vec2-large-xls-r-300m-hindi-epochs60-colab

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-large-xls-r-300m-hindi-epochs60-colab

This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset.

It achieves the following results on the evaluation set:

- Loss: 1.7322

- Wer: 0.9188

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 60

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 6.2832 | 44.42 | 400 | 1.7322 | 0.9188 |

### Framework versions

- Transformers 4.11.3

- Pytorch 1.10.0+cu113

- Datasets 1.18.3

- Tokenizers 0.10.3

|

YeRyeongLee/bert-base-uncased-finetuned-removed-0529

|

YeRyeongLee

| 2022-05-29T15:03:49Z

| 3

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-05-29T06:03:05Z

|

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

- f1

model-index:

- name: bert-base-uncased-finetuned-removed-0529

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-finetuned-removed-0529

This model is a fine-tuned version of [YeRyeongLee/bert-base-uncased-finetuned-0505-2](https://huggingface.co/YeRyeongLee/bert-base-uncased-finetuned-0505-2) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.1501

- Accuracy: 0.8767

- F1: 0.8765

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 1000

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|:------:|

| No log | 1.0 | 3180 | 0.5072 | 0.8358 | 0.8373 |

| No log | 2.0 | 6360 | 0.5335 | 0.8566 | 0.8564 |

| No log | 3.0 | 9540 | 0.6317 | 0.8594 | 0.8603 |

| No log | 4.0 | 12720 | 0.6781 | 0.8723 | 0.8727 |

| No log | 5.0 | 15900 | 0.8235 | 0.8679 | 0.8682 |

| No log | 6.0 | 19080 | 0.9205 | 0.8676 | 0.8674 |

| No log | 7.0 | 22260 | 0.9898 | 0.8698 | 0.8695 |

| 0.2348 | 8.0 | 25440 | 1.0756 | 0.8695 | 0.8695 |

| 0.2348 | 9.0 | 28620 | 1.1342 | 0.8739 | 0.8735 |

| 0.2348 | 10.0 | 31800 | 1.1501 | 0.8767 | 0.8765 |

### Framework versions

- Transformers 4.19.2

- Pytorch 1.9.0

- Datasets 1.16.1

- Tokenizers 0.12.1

|

ashesicsis1/xlsr-english

|

ashesicsis1

| 2022-05-29T14:47:54Z

| 4

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"generated_from_trainer",

"dataset:librispeech_asr",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-05-29T06:32:18Z

|

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- librispeech_asr

model-index:

- name: xlsr-english

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# xlsr-english

This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the librispeech_asr dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3098

- Wer: 0.1451

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 16

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 30

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 3.2453 | 2.37 | 400 | 0.5789 | 0.4447 |

| 0.3736 | 4.73 | 800 | 0.3737 | 0.2850 |

| 0.1712 | 7.1 | 1200 | 0.3038 | 0.2136 |

| 0.117 | 9.47 | 1600 | 0.3016 | 0.2072 |

| 0.0897 | 11.83 | 2000 | 0.3158 | 0.1920 |

| 0.074 | 14.2 | 2400 | 0.3137 | 0.1831 |

| 0.0595 | 16.57 | 2800 | 0.2967 | 0.1745 |

| 0.0493 | 18.93 | 3200 | 0.3192 | 0.1670 |

| 0.0413 | 21.3 | 3600 | 0.3176 | 0.1644 |

| 0.0322 | 23.67 | 4000 | 0.3079 | 0.1598 |

| 0.0296 | 26.04 | 4400 | 0.2978 | 0.1511 |

| 0.0235 | 28.4 | 4800 | 0.3098 | 0.1451 |

### Framework versions

- Transformers 4.11.3

- Pytorch 1.10.0+cu113

- Datasets 1.18.3

- Tokenizers 0.10.3

|

nizamudma/bart-finetuned-cnn-3

|

nizamudma

| 2022-05-29T13:54:17Z

| 5

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bart",

"text2text-generation",

"generated_from_trainer",

"dataset:cnn_dailymail",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-05-28T17:30:32Z

|

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- cnn_dailymail

metrics:

- rouge

model-index:

- name: bart-finetuned-cnn-3

results:

- task:

name: Sequence-to-sequence Language Modeling

type: text2text-generation

dataset:

name: cnn_dailymail

type: cnn_dailymail

args: 3.0.0

metrics:

- name: Rouge1

type: rouge

value: 40.201

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bart-finetuned-cnn-3

This model is a fine-tuned version of [sshleifer/distilbart-xsum-12-3](https://huggingface.co/sshleifer/distilbart-xsum-12-3) on the cnn_dailymail dataset.

It achieves the following results on the evaluation set:

- Loss: 2.0751

- Rouge1: 40.201

- Rouge2: 18.8482

- Rougel: 29.4439

- Rougelsum: 37.416

- Gen Len: 56.7545

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len |

|:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:|

| 2.276 | 1.0 | 8883 | 2.1762 | 39.6581 | 18.3333 | 28.7765 | 36.7688 | 58.5386 |

| 2.0806 | 2.0 | 17766 | 2.0909 | 40.0328 | 18.8026 | 29.417 | 37.3508 | 56.6804 |

| 1.9615 | 3.0 | 26649 | 2.0751 | 40.201 | 18.8482 | 29.4439 | 37.416 | 56.7545 |

### Framework versions

- Transformers 4.16.2

- Pytorch 1.10.2+cu102

- Datasets 1.18.3

- Tokenizers 0.11.0

|

sanchit-gandhi/flax-dummy

|

sanchit-gandhi

| 2022-05-29T12:07:43Z

| 4

| 0

|

transformers

|

[

"transformers",

"jax",

"tensorboard",

"speech-encoder-decoder",

"automatic-speech-recognition",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-05-05T18:04:05Z

|

/home/sanchitgandhi/seq2seq-speech/README.md

|

sriiikar/wav2vec2-hindi-3

|

sriiikar

| 2022-05-29T11:42:20Z

| 3

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"generated_from_trainer",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-05-29T05:25:27Z

|

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: wav2vec2-hindi-3

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-hindi-3

This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 2.0900

- Wer: 0.7281

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 40

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 4.609 | 6.41 | 1000 | 1.2290 | 0.7497 |

| 0.3754 | 12.82 | 2000 | 1.5350 | 0.7128 |

| 0.1587 | 19.23 | 3000 | 1.8671 | 0.7322 |

| 0.103 | 25.64 | 4000 | 1.9383 | 0.7300 |

| 0.0761 | 32.05 | 5000 | 2.0767 | 0.7306 |

| 0.0616 | 38.46 | 6000 | 2.0900 | 0.7281 |

### Framework versions

- Transformers 4.20.0.dev0

- Pytorch 1.11.0+cu113

- Datasets 2.2.3.dev0

- Tokenizers 0.12.1

|

vai6hav/wav2vec2-large-xls-r-300m-hindi-epochs40-colab

|

vai6hav

| 2022-05-29T10:06:38Z

| 3

| 0

|

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"generated_from_trainer",

"dataset:common_voice",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-05-29T09:18:24Z

|

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- common_voice

model-index:

- name: wav2vec2-large-xls-r-300m-hindi-epochs40-colab

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-large-xls-r-300m-hindi-epochs40-colab

This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 40

- mixed_precision_training: Native AMP

### Training results

### Framework versions

- Transformers 4.11.3

- Pytorch 1.10.0+cu113

- Datasets 1.18.3

- Tokenizers 0.10.3

|

bigmorning/distilgpt2-lektay2-secondpos

|

bigmorning

| 2022-05-29T08:59:33Z

| 3

| 0

|

transformers

|

[

"transformers",

"tf",

"gpt2",

"text-generation",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-05-29T04:20:12Z

|

---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: distilgpt2-lektay2-secondpos

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# distilgpt2-lektay2-secondpos

This model is a fine-tuned version of [distilgpt2](https://huggingface.co/distilgpt2) on an unknown dataset.

It achieves the following results on the evaluation set:

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': 2e-05, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: float32

### Training results

### Framework versions

- Transformers 4.19.2

- TensorFlow 2.8.0

- Datasets 2.2.2

- Tokenizers 0.12.1

|

everdoubling/byt5-Korean-base

|

everdoubling

| 2022-05-29T08:35:55Z

| 4

| 1

|

transformers

|

[

"transformers",

"pytorch",

"t5",

"text2text-generation",

"dataset:mc4",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-03-27T06:46:11Z

|

---

datasets:

- mc4

license: apache-2.0

---

# ByT5-Korean - base

ByT5-Korean is a Korean specific extension of Google's [ByT5](https://github.com/google-research/byt5).

A Korean syllable has three components (called Jamo): a beginning consonant, a middle vowel, and an optional final consonant; they are like individual characters of alphabet.

While the ByT5's utf-8 encoding allows generic encoding for multiple languages, it is unnatural for Korean because it splits the bits representation of each Jamo in the middle.

ByT5-Korean extends ByT5's utf-8 encoding with special care for Korean syllables; each Jamo is represented with a extra token.

ByT5-Korean was pre-trained on [mC4](https://www.tensorflow.org/datasets/catalog/c4#c4multilingual) with 70% Korean and 30% English.

## Encoding Scheme

```text

id: token

0: <pad>

1: <eos>

2: <unk>

3~258: utf-8 encoding

259~277: beginning consonants(초성), 19개(ㄱㄲㄴㄷㄸㄹㅁㅂㅃㅅㅆㅇㅈㅉㅊㅋㅌㅍㅎ)

278~298: middle vowel(중성), 21개(ㅏㅐㅑㅒㅓㅔㅕㅖㅗㅘㅙㅚㅛㅜㅝㅞㅟㅠㅡㅢㅣ)

299~326: final consonant(종성), 무종성+27개(ㄱㄲㄳㄴㄵㄶㄷㄹㄺㄻㄼㄽㄾㄿㅀㅁㅂㅄㅅㅆㅇㅈㅊㅋㅌㅍㅎ)

327~384: from <extra_id_0> to <extra_id_57>

```

## Example Inference

```python

import torch

from tokenizer import ByT5KoreanTokenizer # https://huggingface.co/everdoubling/byt5-Korean-base/blob/main/tokenizer.py

from transformers import T5ForConditionalGeneration

tokenizer_jamo = ByT5KoreanTokenizer()

model = T5ForConditionalGeneration.from_pretrained('everdoubling/byt5-Korean-base')

input_sentence = '한국어 위키백과(영어: Korean Wikipedia)는 한국어로 운영되는 위키백과의 다언어판 가운데 하나로서, 2002년 10월 11일에 <extra_id_0>. 또한 현재 한국어 위키백과에는 넘겨주기, 토론, 그림 등 페이지로 불리는 모든 문서를 포함하면 총 2,629,860개가 <extra_id_1>되어 있으며, 넘겨주기를 포함한 일반 문서 수는 1,278,560개,[1] 그중 넘겨주기, 막다른 문서를 제외한 일반 문서 수는 573,149개이다.'

input_ids_jamo = tokenizer_jamo(input_sentence).input_ids

outputs_jamo = model_jamo.generate(torch.tensor([input_ids_jamo]))

print(tokenizer_jamo.decode(outputs_jamo[0]))

# <pad><extra_id_0>설립되었다<extra_id_1>đě

```

Additional information coming soon...

|

Sultannn/fashion-gan

|