SmartBERT V3 CodeBERT

Overview

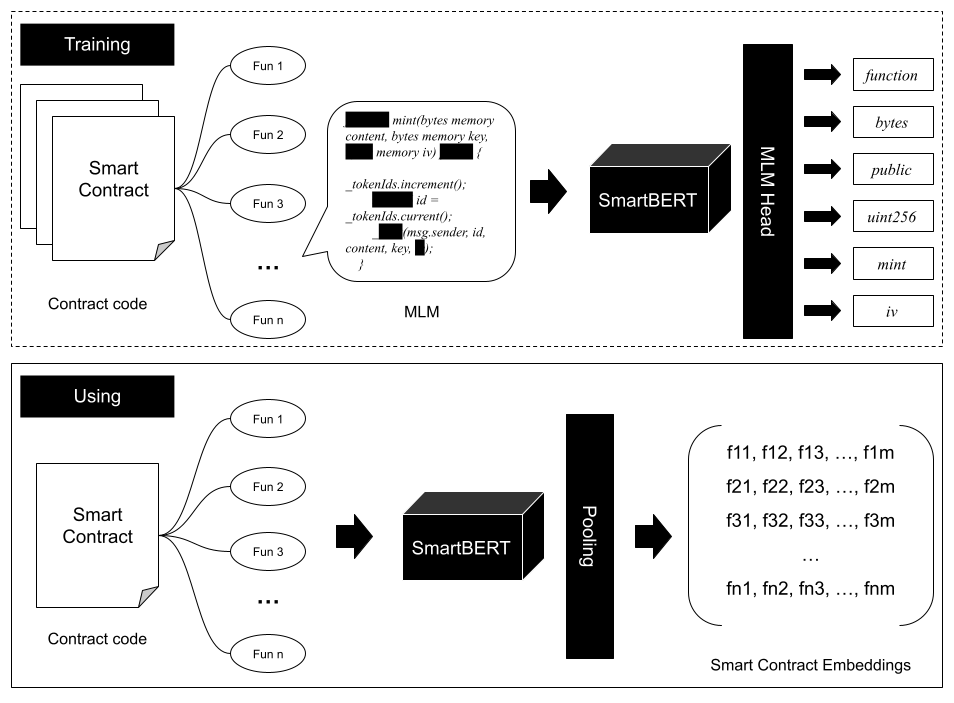

SmartBERT V3 is a pre-trained programming language model initialized from CodeBERT-base-mlm. It continues training from SmartBERT V2 on an additional 64,000 smart contracts to make its function-level representations of smart contract code more robust.

- Training Data: 80,000 smart contracts in total: the 16,000 used for SmartBERT V2 plus 64,000 new contracts (starting from 30001).

- Hardware: 2 NVIDIA A100 80 GB GPUs.

- Training Duration: Over 30 hours.

- Evaluation Data: 1,500 smart contracts (starting from 96425).

Usage

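from transformers import RobertaTokenizer, RobertaForMaskedLM, pipeline

# Load the model and its tokenizer from the Hugging Face Hub.
model = RobertaForMaskedLM.from_pretrained('web3se/SmartBERT-v3')
tokenizer = RobertaTokenizer.from_pretrained('web3se/SmartBERT-v3')

# A Solidity function signature with one token masked out for the model to predict.
code_example = "function totalSupply() external view <mask> (uint256);"

# Build a fill-mask pipeline and print the candidate tokens for <mask>.
fill_mask = pipeline('fill-mask', model=model, tokenizer=tokenizer)
outputs = fill_mask(code_example)
print(outputs)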
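For a single-mask input, the fill-mask pipeline returns a list of dictionaries that include `score` and `token_str` keys. The helper below is a small sketch for reading that list more easily; `top_predictions` is a hypothetical name introduced here for illustration and is not part of the SmartBERT repository.

```python
from typing import Dict, List, Tuple


def top_predictions(outputs: List[Dict], k: int = 3) -> List[Tuple[str, float]]:
    """Return the k highest-scoring (token, score) pairs from fill-mask results."""
    # Sort explicitly by score so the helper does not depend on input order.
    ranked = sorted(outputs, key=lambda item: item["score"], reverse=True)
    # RoBERTa-style tokens often carry a leading space, so strip it for display.
    return [(item["token_str"].strip(), float(item["score"])) for item in ranked[:k]]


# Usage with the `outputs` list produced by the snippet above:
# for token, score in top_predictions(outputs):
#     print(f"{token}\t{score:.4f}")
```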

Preprocessing

All newline (\n) and tab (\t) characters in the function code were replaced with a single space to ensure consistency in the input data format.
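To match this preprocessing at inference time, the same replacement can be applied before tokenizing. The following is a minimal sketch, assuming each newline or tab character is replaced one-for-one (runs of whitespace are not collapsed); the function name `flatten_function_code` is hypothetical and not taken from the SmartBERT codebase.

```python
def flatten_function_code(code: str) -> str:
    """Replace every newline and tab character with a single space."""
    # Assumption: one-for-one replacement, so "\n\t" becomes two spaces.
    return code.replace("\n", " ").replace("\t", " ")


sample = "function totalSupply()\n\texternal view returns (uint256);"
flat = flatten_function_code(sample)
print(flat)
```

If the original training pipeline also collapsed repeated spaces, an extra normalization step would be needed; the description above does not say so.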

Base Model

- Original Model: CodeBERT-base-mlm (microsoft/codebert-base-mlm)

Training Setup

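from transformers import TrainingArguments

# OUTPUT_DIR and checkpoint are not defined in this snippet; set them before running.
training_args = TrainingArguments(
    output_dir=OUTPUT_DIR,
    overwrite_output_dir=True,
    num_train_epochs=20,
    per_device_train_batch_size=64,
    save_steps=10000,
    save_total_limit=2,
    evaluation_strategy="steps",
    eval_steps=10000,
    resume_from_checkpoint=checkpoint
)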
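Together with the two GPUs listed in the Overview, the per-device batch size implies a global batch size per optimizer step. The arithmetic below is a rough sketch, assuming plain data-parallel training across both GPUs and no gradient accumulation (the library default); neither assumption is stated in this card.

```python
num_gpus = 2                      # from the Overview: 2 A100 GPUs
per_device_train_batch_size = 64  # from the training arguments above
gradient_accumulation_steps = 1   # assumption: library default, not stated in the card

# Samples consumed per optimizer step under data-parallel training.
effective_batch_size = (
    num_gpus * per_device_train_batch_size * gradient_accumulation_steps
)
print(effective_batch_size)  # 128
```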

How to Use

To train and deploy the SmartBERT V3 model for Web API services, please refer to our GitHub repository: web3se-lab/SmartBERT.

Citations

@article{huang2025smart,
  title={Smart Contract Intent Detection with Pre-trained Programming Language Model},
  author={Huang, Youwei and Li, Jianwen and Fang, Sen and Li, Yao and Yang, Peng and Hu, Bin},
  journal={arXiv preprint arXiv:2508.20086},
  year={2025}
}

Sponsors

- Institute of Intelligent Computing Technology, Suzhou, CAS

- CAS Mino (中科劢诺)
