QwQwQwQwQwQ and Marigold met at a party and hit it off...

QwQ-32B-Snowdrop-v0

Has's notes: it's actually pretty damn good?!

Severian's notes: R1 at home for RP, literally. Able to handle my cards with gimmicks and subtle tricks in them. With a good reasoning starter+prompt, I'm getting consistently-structured responses that have a good amount of variation across them still while rerolling. Char/scenario portrayal is good despite my focus on writing style, lorebooks are properly referenced at times. Slop doesn't seem to be too much of an issue with thinking enabled. Some user impersonation is rarely observed. Prose is refreshing if you take advantage of what I did (writing style fixation). I know I said Marigold would be my daily driver, but this one is that now, it's that good.

Recommended settings

Context/instruct template: ChatML. Was definitely not tested with ChatML instruct and Mistral v7 template, nuh-uh.

Samplers: temperature at 0.9, min_p at 0.05, top_a at 0.3, TFS at 0.75, repetition_penalty at 1.03, DRY if you have access to it. (or not, see below.)

A virt-io derivative prompt worked best during our testing, but feel free to use what you like.

Master import for ST: https://files.catbox.moe/w812at.png

Reasoning

Feel free to test whichever reasoning setup you're most comfortable with, but here's a recommendation from me. My prompt has a line that says:

Style Preference: Encourage the usage of a Japanese light novel writing style.

Deciding to fixate on that, my reasoning starter is:

<think>Okay, in this scenario, before responding I need to consider the writing style referenced in the prompt, which is

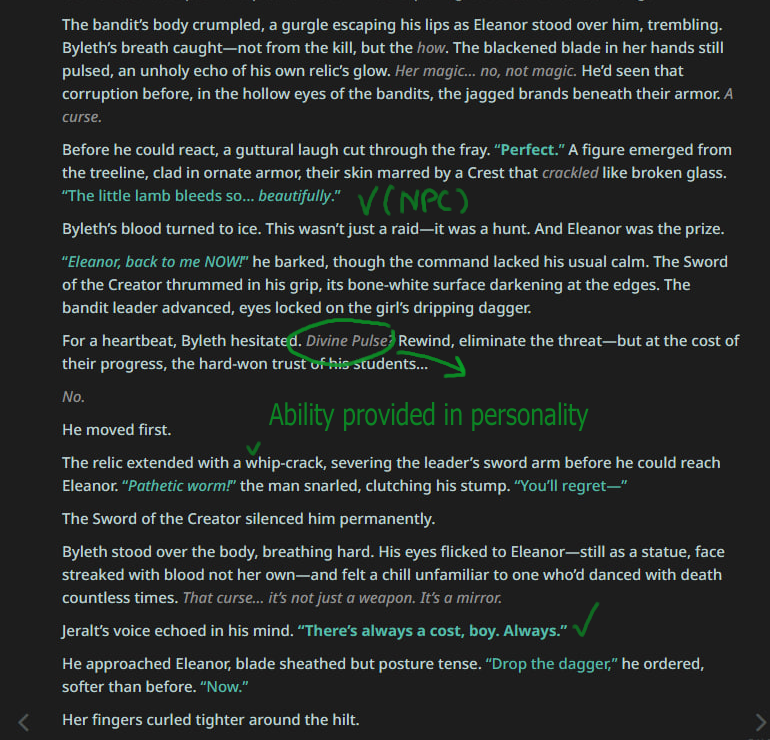

What this did for me, at least during testing is that it gave the reasoning a structure to follow across rerolls, seeking out that part of the prompt consistently. See below:

But the responses were still varied, because the next few paragraphs after these delved into character details, so on and so forth. Might want to experiment and make your own thinking/reasoning starter that focuses on what you hope to get out of the responses for best results.

— Severian

Thank you!

Big thanks to the folks in the trashpanda-org discord for testing and sending over some logs!

Reviews

PROS:

In 10 swipes, had only two minor instances of speaking for {{user}}. (Can probably be fixed with a good prompt, though.)

Creativity: 8/10 swipes provided unique text for 90% of the response, almost no cliché phrases.

Takes personality of characters into account, sticking to it well. Even without a lorebook to support it was able to retain lore-specific terms and actually remember which meant which.

NPCs: In 6/10 swipes NPC characters also partook in action, sticking to bits of information provided about them in opening message. Some of them even had their unique speech patterns. (Certain with a proper lorebook it would cook.)

Unfiltered, graphic descriptions of fight scenes. Magic, physical attacks - everything was taken into account with no holding back.

CONS:

Some swipes were a bit OOC. Some swipes were bland, providing little to no input or any weight on the roleplay context.

Out of all models I've tried recently, this one definitely has most potential. With proper prompting I think this beast would be genuinely one of the best models for unique scenarios.

— Sellvene

It's one of the -maybe THE- best small thinking models right now. It sticks to character really well, slops are almost non-existent though they are still there of course, it proceeds with the story well and listens to the prompt. I LOVE R1 but I love snowdrop even more right now because answers feel more geniune and less agressive compared to R1.

— Carmenta

Writes better than GPT 4.5. Overall, I think censorship is fucking up more unhinged bots and it's too tame for my liking. Another thing I noticed is that, it's sticking too much to being "right" to the character and too afraid to go off the rails.

— Myscell

I'm fainting, the character breakdown in it's thinking is similar like R1 does. Character handling looks amazing. Broo if a merge this good then, I'm looking forward to that QwQ finetune.

— Sam

Negligible slop, no positivity bias which is good though. I like the model so far, R1 at home.

— Raihanbook

Overall, I think this is a real solid model. Cot is great, listens to my prompt extremely well. Number 1 for reasoning, honestly. And the way it portrays the character and persona details? Perfect. Narration, perfect. I have very little complaints about this model, ya'll cooked.

— Moothdragon

On my end, posivity bias isn't really there 🤔 Character and scenario portrayal is good. The prose too, I like it. Between this and Marigold, I feel like I can lean into snowboard (I mean Snowdrop) more. For now though, it is still Marigold.

— Azula

Honestly i am impressed and I like it.

— OMGWTFBBQ

It's pretty damn good. Better than Mullein, I think.

— br

So far, it fucking SLAPS. I don't think it's tried to pull POV once yet.

— Overloke

Just us having fun, don't mind it

Some logs

(After a session started with Gemini)

(After a session started with Gemini)

Merge Details

Merge Method

This model was merged using the TIES merge method using Qwen/Qwen2.5-32B as a base.

Models Merged

The following models were included in the merge:

Configuration

The following YAML configuration was used to produce this model:

models:

- model: trashpanda-org/Qwen2.5-32B-Marigold-v0-exp

parameters:

weight: 1

density: 1

- model: trashpanda-org/Qwen2.5-32B-Marigold-v0

parameters:

weight: 1

density: 1

- model: Qwen/QwQ-32B

parameters:

weight: 0.9

density: 0.9

merge_method: ties

base_model: Qwen/Qwen2.5-32B

parameters:

weight: 0.9

density: 0.9

normalize: true

int8_mask: true

tokenizer_source: Qwen/Qwen2.5-32B-Instruct

dtype: bfloat16

- Downloads last month

- 1,241