Upload folder using huggingface_hub

- .gitattributes +6 -0

- .gitignore +7 -0

- LICENSE +21 -0

- Readme.md +673 -0

- THIRD-PARTY-LICENSES +143 -0

- benchmark-openvino.bat +23 -0

- benchmark.bat +23 -0

- configs/lcm-lora-models.txt +4 -0

- configs/lcm-models.txt +8 -0

- configs/openvino-lcm-models.txt +9 -0

- configs/stable-diffusion-models.txt +7 -0

- controlnet_models/Readme.txt +3 -0

- docs/images/2steps-inference.jpg +0 -0

- docs/images/ARCGPU.png +0 -0

- docs/images/fastcpu-cli.png +0 -0

- docs/images/fastcpu-webui.png +3 -0

- docs/images/fastsdcpu-android-termux-pixel7.png +3 -0

- docs/images/fastsdcpu-api.png +0 -0

- docs/images/fastsdcpu-gui.jpg +3 -0

- docs/images/fastsdcpu-mac-gui.jpg +0 -0

- docs/images/fastsdcpu-screenshot.png +3 -0

- docs/images/fastsdcpu-webui.png +3 -0

- docs/images/fastsdcpu_flux_on_cpu.png +3 -0

- install-mac.sh +31 -0

- install.bat +29 -0

- install.sh +28 -0

- lora_models/HoloEnV2.safetensors +3 -0

- lora_models/Readme.txt +3 -0

- models/gguf/clip/readme.txt +1 -0

- models/gguf/diffusion/readme.txt +1 -0

- models/gguf/t5xxl/readme.txt +1 -0

- models/gguf/vae/readme.txt +1 -0

- requirements.txt +18 -0

- src/__init__.py +0 -0

- src/app.py +535 -0

- src/app_settings.py +124 -0

- src/backend/__init__.py +0 -0

- src/backend/annotators/canny_control.py +15 -0

- src/backend/annotators/control_interface.py +12 -0

- src/backend/annotators/depth_control.py +15 -0

- src/backend/annotators/image_control_factory.py +31 -0

- src/backend/annotators/lineart_control.py +11 -0

- src/backend/annotators/mlsd_control.py +10 -0

- src/backend/annotators/normal_control.py +10 -0

- src/backend/annotators/pose_control.py +10 -0

- src/backend/annotators/shuffle_control.py +10 -0

- src/backend/annotators/softedge_control.py +10 -0

- src/backend/api/models/response.py +16 -0

- src/backend/api/web.py +103 -0

- src/backend/base64_image.py +21 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,9 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text


| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

docs/images/fastcpu-webui.png filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

docs/images/fastsdcpu-android-termux-pixel7.png filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

docs/images/fastsdcpu-gui.jpg filter=lfs diff=lfs merge=lfs -text

|

| 39 |

+

docs/images/fastsdcpu-screenshot.png filter=lfs diff=lfs merge=lfs -text

|

| 40 |

+

docs/images/fastsdcpu-webui.png filter=lfs diff=lfs merge=lfs -text

|

| 41 |

+

docs/images/fastsdcpu_flux_on_cpu.png filter=lfs diff=lfs merge=lfs -text

|

.gitignore

ADDED

|

@@ -0,0 +1,7 @@


| 1 |

+

env

|

| 2 |

+

*.bak

|

| 3 |

+

*.pyc

|

| 4 |

+

__pycache__

|

| 5 |

+

results

|

| 6 |

+

# excluding user settings for the GUI frontend

|

| 7 |

+

configs/settings.yaml

|

LICENSE

ADDED

|

@@ -0,0 +1,21 @@


| 1 |

+

MIT License

|

| 2 |

+

|

| 3 |

+

Copyright (c) 2023 Rupesh Sreeraman

|

| 4 |

+

|

| 5 |

+

Permission is hereby granted, free of charge, to any person obtaining a copy

|

| 6 |

+

of this software and associated documentation files (the "Software"), to deal

|

| 7 |

+

in the Software without restriction, including without limitation the rights

|

| 8 |

+

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

|

| 9 |

+

copies of the Software, and to permit persons to whom the Software is

|

| 10 |

+

furnished to do so, subject to the following conditions:

|

| 11 |

+

|

| 12 |

+

The above copyright notice and this permission notice shall be included in all

|

| 13 |

+

copies or substantial portions of the Software.

|

| 14 |

+

|

| 15 |

+

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

| 16 |

+

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

| 17 |

+

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

| 18 |

+

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

| 19 |

+

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

|

| 20 |

+

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

|

| 21 |

+

SOFTWARE.

|

Readme.md

ADDED

|

@@ -0,0 +1,673 @@


| 1 |

+

# FastSD CPU :sparkles:[](https://github.com/openvinotoolkit/awesome-openvino)

|

| 2 |

+

|

| 3 |

+

<div align="center">

|

| 4 |

+

<a href="https://trendshift.io/repositories/3957" target="_blank"><img src="https://trendshift.io/api/badge/repositories/3957" alt="rupeshs%2Ffastsdcpu | Trendshift" style="width: 250px; height: 55px;" width="250" height="55"/></a>

|

| 5 |

+

</div>

|

| 6 |

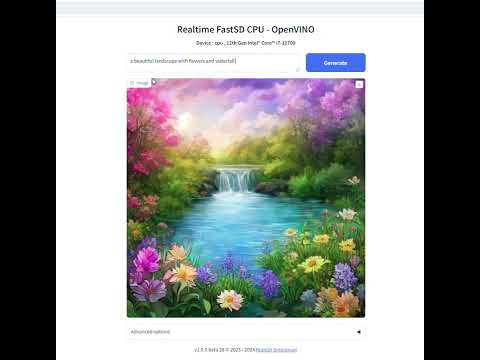

+

|

| 7 |

+

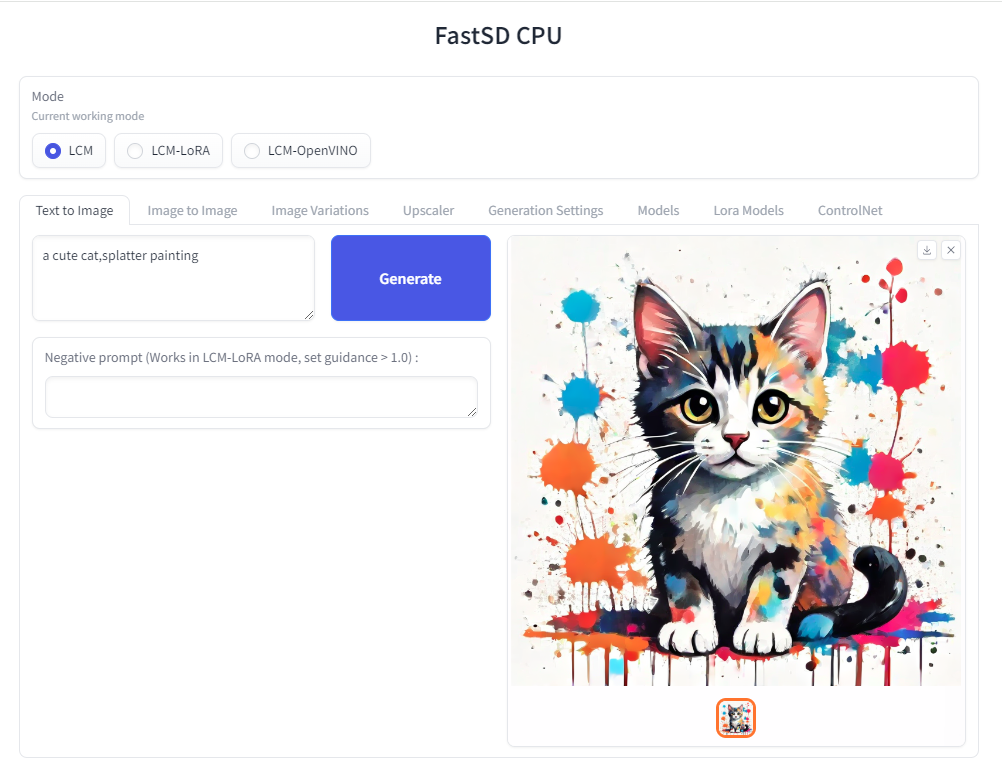

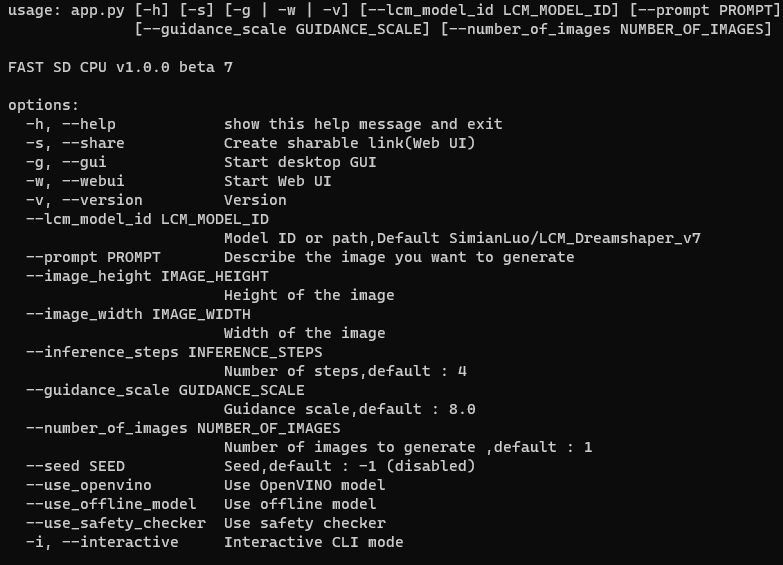

FastSD CPU is a faster version of Stable Diffusion on CPU, based on [Latent Consistency Models](https://github.com/luosiallen/latent-consistency-model) and

|

| 8 |

+

[Adversarial Diffusion Distillation](https://nolowiz.com/fast-stable-diffusion-on-cpu-using-fastsd-cpu-and-openvino/).

|

| 9 |

+

|

| 10 |

+

|

| 11 |

+

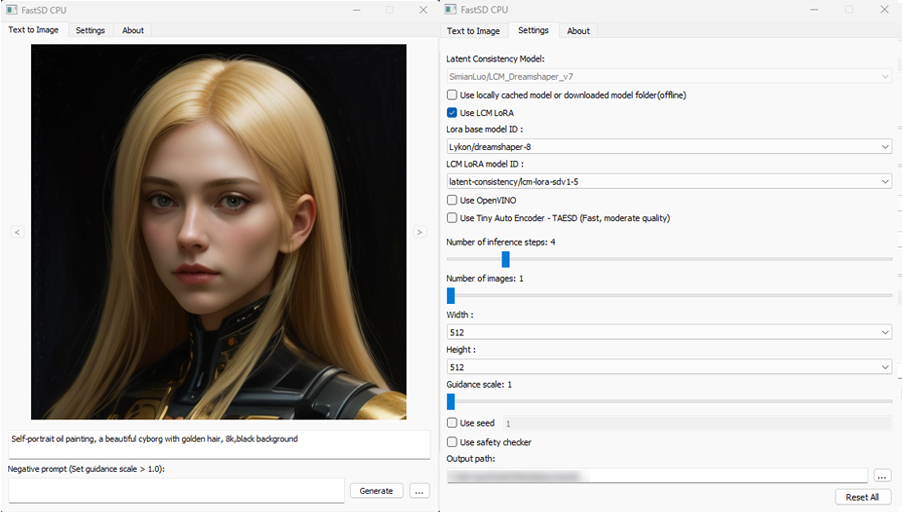

The following interfaces are available:

|

| 12 |

+

|

| 13 |

+

- Desktop GUI, basic text to image generation (Qt, faster)

|

| 14 |

+

- WebUI (advanced features: LoRA, ControlNet, etc.)

|

| 15 |

+

- CLI (command-line interface)

|

| 16 |

+

|

| 17 |

+

🚀 Using __OpenVINO(SDXS-512-0.9)__, it took __0.82 seconds__ (__820 milliseconds__) to create a single 512x512 image on a __Core i7-12700__.

|

| 18 |

+

|

| 19 |

+

## Table of Contents

|

| 20 |

+

|

| 21 |

+

- [Supported Platforms](#supported-platforms)

|

| 22 |

+

- [Dependencies](#dependencies)

|

| 23 |

+

- [Memory requirements](#memory-requirements)

|

| 24 |

+

- [Features](#features)

|

| 25 |

+

- [Benchmarks](#fast-inference-benchmarks)

|

| 26 |

+

- [OpenVINO Support](#openvino)

|

| 27 |

+

- [Installation](#installation)

|

| 28 |

+

- [AI PC Support - OpenVINO](#ai-pc-support)

|

| 29 |

+

- [GGUF support (Flux)](#gguf-support)

|

| 30 |

+

- [Real-time text to image (EXPERIMENTAL)](#real-time-text-to-image)

|

| 31 |

+

- [Models](#models)

|

| 32 |

+

- [How to use Lora models](#useloramodels)

|

| 33 |

+

- [How to use controlnet](#usecontrolnet)

|

| 34 |

+

- [Android](#android)

|

| 35 |

+

- [Raspberry Pi 4](#raspberry)

|

| 36 |

+

- [Orange Pi 5](#orangepi)

|

| 37 |

+

- [API Support](#apisupport)

|

| 38 |

+

- [License](#license)

|

| 39 |

+

- [Contributors](#contributors)

|

| 40 |

+

|

| 41 |

+

## Supported platforms⚡️

|

| 42 |

+

|

| 43 |

+

FastSD CPU works on the following platforms:

|

| 44 |

+

|

| 45 |

+

- Windows

|

| 46 |

+

- Linux

|

| 47 |

+

- Mac

|

| 48 |

+

- Android + Termux

|

| 49 |

+

- Raspberry PI 4

|

| 50 |

+

|

| 51 |

+

## Dependencies

|

| 52 |

+

|

| 53 |

+

- Python 3.10 or Python 3.11 (Please ensure that you have a working Python 3.10 or Python 3.11 installation available on the system)

|

| 54 |

+

|

| 55 |

+

## Memory requirements

|

| 56 |

+

|

| 57 |

+

Minimum system RAM requirement for FastSD CPU.

|

| 58 |

+

|

| 59 |

+

Model (LCM,OpenVINO): SD Turbo, 1 step, 512 x 512

|

| 60 |

+

|

| 61 |

+

Model (LCM-LoRA): Dreamshaper v8, 3 step, 512 x 512

|

| 62 |

+

|

| 63 |

+

| Mode | Min RAM |

|

| 64 |

+

| --------------------- | ------------- |

|

| 65 |

+

| LCM | 2 GB |

|

| 66 |

+

| LCM-LoRA | 4 GB |

|

| 67 |

+

| OpenVINO | 11 GB |

|

| 68 |

+

|

| 69 |

+

Enabling the tiny decoder (TAESD) saves approximately 2 GB of memory; for example, memory usage in OpenVINO mode drops to about 9 GB.

|

| 70 |

+

|

| 71 |

+

:exclamation: Please note that a guidance scale >1 increases RAM usage and slows inference.

|

| 72 |

+

|

| 73 |

+

## Features

|

| 74 |

+

|

| 75 |

+

- Desktop GUI, web UI and CLI

|

| 76 |

+

- Supports 256, 512, 768 and 1024 image sizes

|

| 77 |

+

- Supports Windows, Linux and Mac

|

| 78 |

+

- Saves images and the diffusion settings used to generate them

|

| 79 |

+

- Settings to control steps, guidance and seed

|

| 80 |

+

- Added safety checker setting

|

| 81 |

+

- Maximum inference steps increased to 25

|

| 82 |

+

- Added [OpenVINO](https://github.com/openvinotoolkit/openvino) support

|

| 83 |

+

- Fixed OpenVINO image reproducibility issue

|

| 84 |

+

- Fixed OpenVINO high RAM usage, thanks [deinferno](https://github.com/deinferno)

|

| 85 |

+

- Added multiple image generation support

|

| 86 |

+

- Application settings

|

| 87 |

+

- Added Tiny Auto Encoder for SD (TAESD) support, 1.4x speed boost (fast, moderate quality)

|

| 88 |

+

- Safety checker disabled by default

|

| 89 |

+

- Added SDXL, SSD1B - 1B LCM models

|

| 90 |

+

- Added LCM-LoRA support, works well for fine-tuned Stable Diffusion model 1.5 or SDXL models

|

| 91 |

+

- Added negative prompt support in LCM-LoRA mode

|

| 92 |

+

- LCM-LoRA models can be configured using text configuration file

|

| 93 |

+

- Added support for custom models for OpenVINO (LCM-LoRA baked)

|

| 94 |

+

- OpenVINO models now support negative prompts (set guidance >1.0)

|

| 95 |

+

- Real-time inference support, generates images while you type (experimental)

|

| 96 |

+

- Fast 2 to 3 step inference

|

| 97 |

+

- LCM-LoRA fused models for faster inference

|

| 98 |

+

- Supports integrated GPU (iGPU) using OpenVINO (`export DEVICE=GPU`)

|

| 99 |

+

- 5.7x speed using OpenVINO (steps: 2, tiny autoencoder)

|

| 100 |

+

- Image to Image support (Use Web UI)

|

| 101 |

+

- OpenVINO image to image support

|

| 102 |

+

- Fast 1 step inference (SDXL Turbo)

|

| 103 |

+

- Added SD Turbo support

|

| 104 |

+

- Added image to image support for Turbo models (Pytorch and OpenVINO)

|

| 105 |

+

- Added image variations support

|

| 106 |

+

- Added 2x upscaler (EDSR and Tiled SD upscale (experimental)), thanks [monstruosoft](https://github.com/monstruosoft) for SD upscale

|

| 107 |

+

- Works on Android + Termux + PRoot

|

| 108 |

+

- Added interactive CLI, thanks [monstruosoft](https://github.com/monstruosoft)

|

| 109 |

+

- Added basic LoRA support to CLI and WebUI

|

| 110 |

+

- ONNX EDSR 2x upscale

|

| 111 |

+

- Add SDXL-Lightning support

|

| 112 |

+

- Add SDXL-Lightning OpenVINO support (int8)

|

| 113 |

+

- Add multi-LoRA support, thanks [monstruosoft](https://github.com/monstruosoft)

|

| 114 |

+

- Add basic ControlNet v1.1 support (LCM-LoRA mode), thanks [monstruosoft](https://github.com/monstruosoft)

|

| 115 |

+

- Add ControlNet annotators (Canny, Depth, LineArt, MLSD, NormalBAE, Pose, SoftEdge, Shuffle)

|

| 116 |

+

- Add SDXS-512 0.9 support

|

| 117 |

+

- Add SDXS-512 0.9 OpenVINO, fast 1 step inference (0.8 seconds to generate a 512x512 image)

|

| 118 |

+

- Default model changed to SDXS-512-0.9

|

| 119 |

+

- Faster realtime image generation

|

| 120 |

+

- Add NPU device check

|

| 121 |

+

- Revert default model to SDTurbo

|

| 122 |

+

- Update realtime UI

|

| 123 |

+

- Add hypersd support

|

| 124 |

+

- 1 step fast inference support for SDXL and SD1.5

|

| 125 |

+

- Experimental support for single-file Safetensors SD 1.5 models (Civitai models); simply add the local model path to the `configs/stable-diffusion-models.txt` file.

|

| 126 |

+

- Add REST API support

|

| 127 |

+

- Add Aura SR (4x)/GigaGAN based upscaler support

|

| 128 |

+

- Add Aura SR v2 upscaler support

|

| 129 |

+

- Add FLUX.1 schnell OpenVINO int4 support

|

| 130 |

+

- Add CLIP skip support

|

| 131 |

+

- Add token merging support

|

| 132 |

+

- Add Intel AI PC support

|

| 133 |

+

- AI PC NPU (power-efficient inference using OpenVINO) supports text to image, image to image and image variations

|

| 134 |

+

- Add [TAEF1 (Tiny autoencoder for FLUX.1) openvino](https://huggingface.co/rupeshs/taef1-openvino) support

|

| 135 |

+

- Add Image to Image and Image Variations Qt GUI support, thanks [monstruosoft](https://github.com/monstruosoft)

|

| 136 |

+

|

| 137 |

+

<a id="fast-inference-benchmarks"></a>

|

| 138 |

+

|

| 139 |

+

## Fast Inference Benchmarks

|

| 140 |

+

|

| 141 |

+

### 🚀 Fast 1 step inference with Hyper-SD

|

| 142 |

+

|

| 143 |

+

#### Stable Diffusion 1.5

|

| 144 |

+

|

| 145 |

+

Works with LCM-LoRA mode.

|

| 146 |

+

Fast 1 step inference is supported on the `runwayml/stable-diffusion-v1-5` model; select the `rupeshs/hypersd-sd1-5-1-step-lora` lcm_lora model from the settings.

|

| 147 |

+

|

| 148 |

+

#### Stable Diffusion XL

|

| 149 |

+

|

| 150 |

+

Works with LCM and LCM-OpenVINO mode.

|

| 151 |

+

|

| 152 |

+

- *Hyper-SD SDXL 1 step* - [rupeshs/hyper-sd-sdxl-1-step](https://huggingface.co/rupeshs/hyper-sd-sdxl-1-step)

|

| 153 |

+

|

| 154 |

+

- *Hyper-SD SDXL 1 step OpenVINO* - [rupeshs/hyper-sd-sdxl-1-step-openvino-int8](https://huggingface.co/rupeshs/hyper-sd-sdxl-1-step-openvino-int8)

|

| 155 |

+

|

| 156 |

+

#### Inference Speed

|

| 157 |

+

|

| 158 |

+

Tested on a Core i7-12700, generating a __768x768__ image (1 step).

|

| 159 |

+

|

| 160 |

+

| Diffusion Pipeline | Latency |

|

| 161 |

+

| --------------------- | ------------- |

|

| 162 |

+

| Pytorch | 19s |

|

| 163 |

+

| OpenVINO | 13s |

|

| 164 |

+

| OpenVINO + TAESDXL | 6.3s |

|

| 165 |

+

|

| 166 |

+

### Fastest 1 step inference (SDXS-512-0.9)

|

| 167 |

+

|

| 168 |

+

:exclamation: This is an experimental model; only the text to image workflow is supported.

|

| 169 |

+

|

| 170 |

+

#### Inference Speed

|

| 171 |

+

|

| 172 |

+

Tested on a Core i7-12700, generating a __512x512__ image (1 step).

|

| 173 |

+

|

| 174 |

+

__SDXS-512-0.9__

|

| 175 |

+

|

| 176 |

+

| Diffusion Pipeline | Latency |

|

| 177 |

+

| --------------------- | ------------- |

|

| 178 |

+

| Pytorch | 4.8s |

|

| 179 |

+

| OpenVINO | 3.8s |

|

| 180 |

+

| OpenVINO + TAESD | __0.82s__ |
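
The relative speedups implied by the table above can be checked with a little arithmetic. This is a minimal sketch that uses only the latency figures from the table; the `speedup` helper and the dictionary name are illustrative and not part of FastSD CPU.

```python
# Latencies in seconds, copied from the SDXS-512-0.9 table above.
SDXS_LATENCY_S = {
    "Pytorch": 4.8,
    "OpenVINO": 3.8,
    "OpenVINO + TAESD": 0.82,
}


def speedup(baseline_s: float, candidate_s: float) -> float:
    """Return how many times faster the candidate is than the baseline."""
    return baseline_s / candidate_s


if __name__ == "__main__":
    baseline = SDXS_LATENCY_S["Pytorch"]
    for pipeline, latency in SDXS_LATENCY_S.items():
        ratio = speedup(baseline, latency)
        print(f"{pipeline}: {latency:.2f}s ({ratio:.1f}x vs Pytorch)")
```

With these figures, OpenVINO with TAESD comes out at just under 6x faster than the Pytorch pipeline for this model on the tested CPU.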

|

| 181 |

+

|

| 182 |

+

### 🚀 Fast 1 step inference (SD/SDXL Turbo - Adversarial Diffusion Distillation,ADD)

|

| 183 |

+

|

| 184 |

+

Added support for ultra fast 1 step inference using the [sdxl-turbo](https://huggingface.co/stabilityai/sdxl-turbo) model.

|

| 185 |

+

|

| 186 |

+

:exclamation: These SD Turbo models are intended for research purposes only.

|

| 187 |

+

|

| 188 |

+

#### Inference Speed

|

| 189 |

+

|

| 190 |

+

Tested on a Core i7-12700, generating a __512x512__ image (1 step).

|

| 191 |

+

|

| 192 |

+

__SD Turbo__

|

| 193 |

+

|

| 194 |

+

| Diffusion Pipeline | Latency |

|

| 195 |

+

| --------------------- | ------------- |

|

| 196 |

+

| Pytorch | 7.8s |

|

| 197 |

+

| OpenVINO | 5s |

|

| 198 |

+

| OpenVINO + TAESD | 1.7s |

|

| 199 |

+

|

| 200 |

+

__SDXL Turbo__

|

| 201 |

+

|

| 202 |

+

| Diffusion Pipeline | Latency |

|

| 203 |

+

| --------------------- | ------------- |

|

| 204 |

+

| Pytorch | 10s |

|

| 205 |

+

| OpenVINO | 5.6s |

|

| 206 |

+

| OpenVINO + TAESDXL | 2.5s |

|

| 207 |

+

|

| 208 |

+

### 🚀 Fast 2 step inference (SDXL-Lightning - Adversarial Diffusion Distillation)

|

| 209 |

+

|

| 210 |

+

SDXL-Lightning works with LCM and LCM-OpenVINO mode. You can select these models from the app settings.

|

| 211 |

+

|

| 212 |

+

Tested on a Core i7-12700, generating a __768x768__ image (2 steps).

|

| 213 |

+

|

| 214 |

+

| Diffusion Pipeline | Latency |

|

| 215 |

+

| --------------------- | ------------- |

|

| 216 |

+

| Pytorch | 18s |

|

| 217 |

+

| OpenVINO | 12s |

|

| 218 |

+

| OpenVINO + TAESDXL | 10s |

|

| 219 |

+

|

| 220 |

+

- *SDXL-Lightning* - [rupeshs/SDXL-Lightning-2steps](https://huggingface.co/rupeshs/SDXL-Lightning-2steps)

|

| 221 |

+

|

| 222 |

+

- *SDXL-Lightning OpenVINO* - [rupeshs/SDXL-Lightning-2steps-openvino-int8](https://huggingface.co/rupeshs/SDXL-Lightning-2steps-openvino-int8)

|

| 223 |

+

|

| 224 |

+

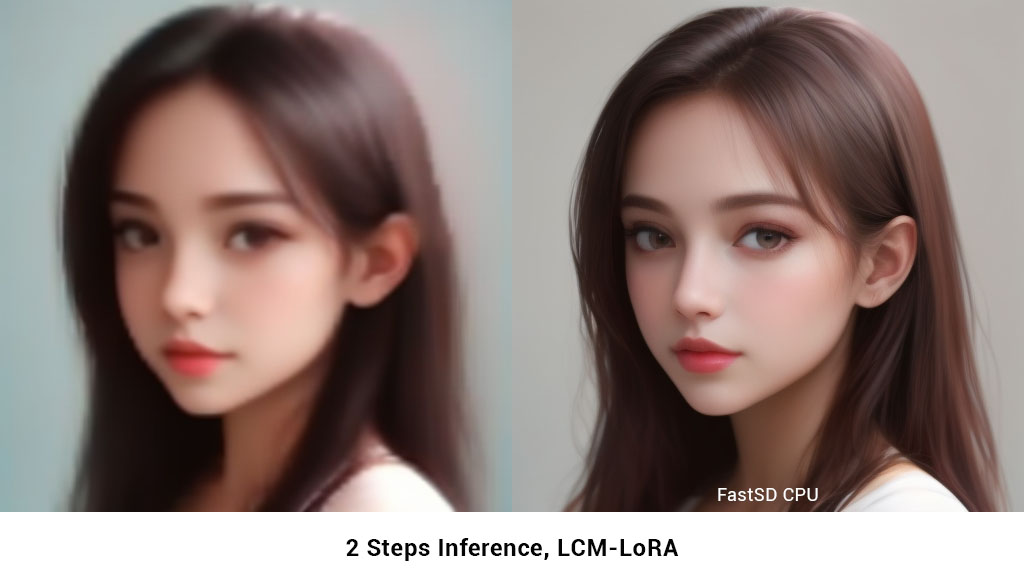

### 2 Steps fast inference (LCM)

|

| 225 |

+

|

| 226 |

+

FastSD CPU supports fast 2 to 3 step inference using the LCM-LoRA workflow. It works well with SD 1.5 models.

|

| 227 |

+

|

| 228 |

+

|

| 229 |

+

|

| 230 |

+

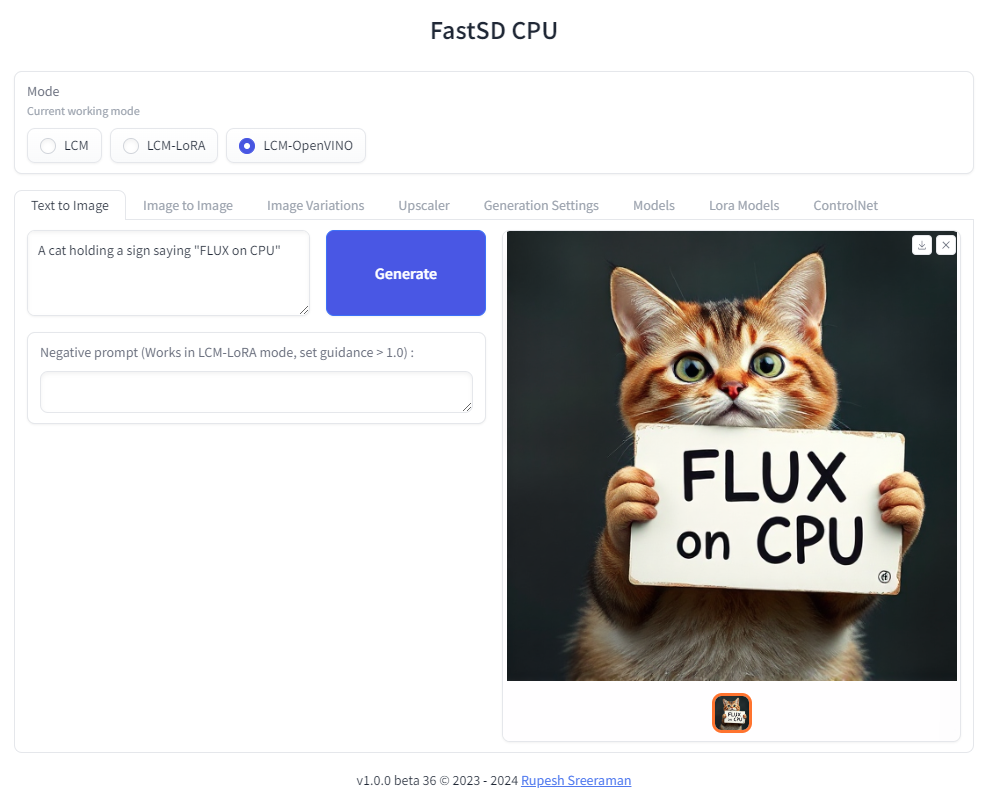

### FLUX.1-schnell OpenVINO support

|

| 231 |

+

|

| 232 |

+

|

| 233 |

+

|

| 234 |

+

:exclamation: Important - Please note the following points with FLUX workflow

|

| 235 |

+

|

| 236 |

+

- As of now only text to image generation mode is supported

|

| 237 |

+

- Use OpenVINO mode

|

| 238 |

+

- Use int4 model - *rupeshs/FLUX.1-schnell-openvino-int4*

|

| 239 |

+

- 512x512 image generation needs around __30GB__ system RAM

|

| 240 |

+

|

| 241 |

+

Tested on an Intel Core i7-12700, generating a __512x512__ image (3 steps).

|

| 242 |

+

|

| 243 |

+

| Diffusion Pipeline | Latency |

|

| 244 |

+

| --------------------- | ------------- |

|

| 245 |

+

| OpenVINO | 4 min 30sec |

|

| 246 |

+

|

| 247 |

+

### Benchmark scripts

|

| 248 |

+

|

| 249 |

+

To benchmark, run one of the following batch files on Windows:

|

| 250 |

+

|

| 251 |

+

- `benchmark.bat` - To benchmark Pytorch

|

| 252 |

+

- `benchmark-openvino.bat` - To benchmark OpenVINO

|

| 253 |

+

|

| 254 |

+

Alternatively, you can run benchmarks by passing the `-b` command-line argument in CLI mode.

|

| 255 |

+

<a id="openvino"></a>

|

| 256 |

+

|

| 257 |

+

## OpenVINO support

|

| 258 |

+

|

| 259 |

+

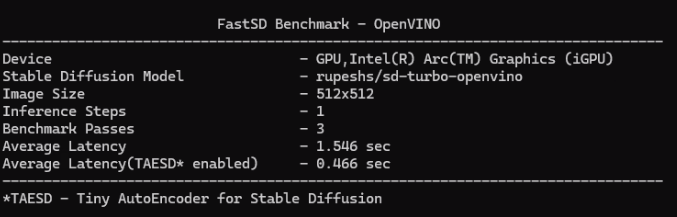

FastSD CPU uses [OpenVINO](https://www.intel.com/content/www/us/en/developer/tools/openvino-toolkit/overview.html) to speed up inference.

|

| 260 |

+

Thanks [deinferno](https://github.com/deinferno) for the OpenVINO model contribution.

|

| 261 |

+

We can get a 2x speed improvement when using OpenVINO.

|

| 262 |

+

Thanks [Disty0](https://github.com/Disty0) for the conversion script.

|

| 263 |

+

|

| 264 |

+

### OpenVINO SDXL models

|

| 265 |

+

|

| 266 |

+

These models are converted so that they can be used directly with FastSD CPU. They are compressed to int8 using [NNCF](https://github.com/openvinotoolkit/nncf) to reduce the file size (10 GB to 4.4 GB).

|

| 267 |

+

|

| 268 |

+

- Hyper-SD SDXL 1 step - [rupeshs/hyper-sd-sdxl-1-step-openvino-int8](https://huggingface.co/rupeshs/hyper-sd-sdxl-1-step-openvino-int8)

|

| 269 |

+

- SDXL Lightning 2 steps - [rupeshs/SDXL-Lightning-2steps-openvino-int8](https://huggingface.co/rupeshs/SDXL-Lightning-2steps-openvino-int8)

|

| 270 |

+

|

| 271 |

+

### OpenVINO SD Turbo models

|

| 272 |

+

|

| 273 |

+

We have converted the SD/SDXL Turbo models to OpenVINO for fast inference on CPU. These models are intended for research purposes only. We also converted the TAESDXL model to OpenVINO.

|

| 274 |

+

|

| 275 |

+

- *SD Turbo OpenVINO* - [rupeshs/sd-turbo-openvino](https://huggingface.co/rupeshs/sd-turbo-openvino)

|

| 276 |

+

- *SDXL Turbo OpenVINO int8* - [rupeshs/sdxl-turbo-openvino-int8](https://huggingface.co/rupeshs/sdxl-turbo-openvino-int8)

|

| 277 |

+

- *TAESDXL OpenVINO* - [rupeshs/taesdxl-openvino](https://huggingface.co/rupeshs/taesdxl-openvino)

|

| 278 |

+

|

| 279 |

+

You can directly use these models in FastSD CPU.

|

| 280 |

+

|

| 281 |

+

### Convert SD 1.5 models to OpenVINO LCM-LoRA fused models

|

| 282 |

+

|

| 283 |

+

We first create an LCM-LoRA baked-in model, replace the scheduler with LCM, and then convert it into an OpenVINO model. For more details, check [LCM OpenVINO Converter](https://github.com/rupeshs/lcm-openvino-converter); you can use this tool to convert any fine-tuned Stable Diffusion 1.5 model to OpenVINO.

|

| 284 |

+

|

| 285 |

+

<a id="ai-pc-support"></a>

|

| 286 |

+

|

| 287 |

+

## Intel AI PC support - OpenVINO (CPU, GPU, NPU)

|

| 288 |

+

|

| 289 |

+

Fast SD now supports AI PC with Intel® Core™ Ultra Processors. [To learn more about AI PC and OpenVINO](https://nolowiz.com/ai-pc-and-openvino-quick-and-simple-guide/).

|

| 290 |

+

|

| 291 |

+

### GPU

|

| 292 |

+

|

| 293 |

+

For GPU mode, run `set device=GPU` and start the web UI. The FastSD GPU benchmark on an AI PC is shown below.

|

| 294 |

+

|

| 295 |

+

|

| 296 |

+

|

| 297 |

+

### NPU

|

| 298 |

+

|

| 299 |

+

FastSD CPU now supports the power-efficient NPU (Neural Processing Unit) that comes with Intel Core Ultra processors.

|

| 300 |

+

|

| 301 |

+

FastSD has been tested with the NPUs of the following Intel processors:

|

| 302 |

+

|

| 303 |

+

- Intel Core Ultra Series 1 (Meteor Lake)

|

| 304 |

+

- Intel Core Ultra Series 2 (Lunar Lake)

|

| 305 |

+

|

| 306 |

+

Currently, FastSD supports this model on the NPU: [rupeshs/sd15-lcm-square-openvino-int8](https://huggingface.co/rupeshs/sd15-lcm-square-openvino-int8).

|

| 307 |

+

|

| 308 |

+

The following modes are supported on the NPU:

|

| 309 |

+

|

| 310 |

+

- Text to image

|

| 311 |

+

- Image to image

|

| 312 |

+

- Image variations

|

| 313 |

+

|

| 314 |

+

To run the model on the NPU, follow these steps (please make sure that your AI PC's NPU driver is the latest):

|

| 315 |

+

|

| 316 |

+

- Start webui

|

| 317 |

+

- Select LCM-OpenVINO mode

|

| 318 |

+

- Select the models settings tab and select OpenVINO model `rupeshs/sd15-lcm-square-openvino-int8`

|

| 319 |

+

- Set the device environment variable: `set DEVICE=NPU`

|

| 320 |

+

- Now it will run on the NPU
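
The device selection in the steps above relies on an environment variable. The sketch below shows one way a Python program could read such a variable. The `resolve_device` helper, the fallback to `CPU`, and the set of accepted values are assumptions for illustration, not FastSD CPU's actual code.

```python
import os

# Device names mentioned in this README; treating them as the full set is an assumption.
KNOWN_DEVICES = {"CPU", "GPU", "NPU"}


def resolve_device(env=None, default="CPU"):
    """Return the device named by the DEVICE variable, or the default if it is unset."""
    env = os.environ if env is None else env
    device = env.get("DEVICE", default).strip().upper()
    if device not in KNOWN_DEVICES:
        raise ValueError(f"Unsupported DEVICE value: {device!r}")
    return device


if __name__ == "__main__":
    print(f"Selected device: {resolve_device()}")
```

Passing a plain dictionary instead of `os.environ` keeps the helper easy to test without touching the real environment.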

|

| 321 |

+

|

| 322 |

+

This is heterogeneous computing, since the text encoder and UNet use the NPU while the VAE uses the GPU for processing, thanks to OpenVINO.

|

| 323 |

+

|

| 324 |

+

Please note that the tiny autoencoder will not work in NPU mode.

|

| 325 |

+

|

| 326 |

+

*Thanks to Intel for providing the AI PC dev kit and Tiber cloud access to test FastSD, with special thanks to [Pooja Baraskar](https://github.com/Pooja-B) and [Dmitriy Pastushenkov](https://github.com/DimaPastushenkov).*

|

| 327 |

+

|

| 328 |

+

<a id="gguf-support"></a>

|

| 329 |

+

|

| 330 |

+

## GGUF support - Flux

|

| 331 |

+

|

| 332 |

+

[GGUF](https://github.com/ggerganov/ggml/blob/master/docs/gguf.md) Flux models are supported via the [stablediffusion.cpp](https://github.com/leejet/stable-diffusion.cpp) shared library. Currently the Flux Schnell model is supported.

|

| 333 |

+

|

| 334 |

+

To use a GGUF model, start the web UI and select GGUF mode.

|

| 335 |

+

|

| 336 |

+

Tested on Windows and Linux.

|

| 337 |

+

|

| 338 |

+

:exclamation: The main advantage here is that the minimum system RAM required for the Flux workflow is reduced to around __12 GB__.

|

| 339 |

+

|

| 340 |

+

Supported mode - Text to image

|

| 341 |

+

|

| 342 |

+

### How to run Flux GGUF model

|

| 343 |

+

|

| 344 |

+

- Download the prebuilt stablediffusion.cpp shared library and place it inside the fastsdcpu folder

|

| 345 |

+

For Windows users, download [stable-diffusion.dll](https://huggingface.co/rupeshs/FastSD-Flux-GGUF/blob/main/stable-diffusion.dll)

|

| 346 |

+

|

| 347 |

+

For Linux users download [libstable-diffusion.so](https://huggingface.co/rupeshs/FastSD-Flux-GGUF/blob/main/libstable-diffusion.so)

|

| 348 |

+

|

| 349 |

+

You can also build the library manually by following the guide *"Build stablediffusion.cpp shared library for GGUF flux model support"*

|

| 350 |

+

|

| 351 |

+

- Download __diffusion model__ from [flux1-schnell-q4_0.gguf](https://huggingface.co/rupeshs/FastSD-Flux-GGUF/blob/main/flux1-schnell-q4_0.gguf) and place it inside `models/gguf/diffusion` directory

|

| 352 |

+

- Download __clip model__ from [clip_l_q4_0.gguf](https://huggingface.co/rupeshs/FastSD-Flux-GGUF/blob/main/clip_l_q4_0.gguf) and place it inside `models/gguf/clip` directory

|

| 353 |

+

- Download __T5-XXL model__ from [t5xxl_q4_0.gguf](https://huggingface.co/rupeshs/FastSD-Flux-GGUF/blob/main/t5xxl_q4_0.gguf) and place it inside `models/gguf/t5xxl` directory

|

| 354 |

+

- Download __VAE model__ from [ae.safetensors](https://huggingface.co/black-forest-labs/FLUX.1-schnell/blob/main/ae.safetensors) and place it inside `models/gguf/vae` directory

|

| 355 |

+

- Start web UI and select GGUF mode

|

| 356 |

+

- Select the models settings tab and select the GGUF diffusion, clip_l, t5xxl and VAE models.

|

| 357 |

+

- Enter your prompt and generate image
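
Before starting the web UI, it can help to confirm that each downloaded file sits in the directory named in the steps above. The following is a minimal sketch of such a check. The `missing_gguf_files` helper is hypothetical and not part of FastSD CPU, and it assumes the files keep the names used in the download links.

```python
from pathlib import Path

# Directory -> file name, as listed in the steps above.
EXPECTED_GGUF_FILES = {
    "models/gguf/diffusion": "flux1-schnell-q4_0.gguf",
    "models/gguf/clip": "clip_l_q4_0.gguf",
    "models/gguf/t5xxl": "t5xxl_q4_0.gguf",
    "models/gguf/vae": "ae.safetensors",
}


def missing_gguf_files(root="."):
    """Return the expected model files that are not present under root."""
    root = Path(root)
    return [
        str(Path(directory) / name)
        for directory, name in EXPECTED_GGUF_FILES.items()
        if not (root / directory / name).is_file()
    ]


if __name__ == "__main__":
    missing = missing_gguf_files()
    if missing:
        print("Missing model files:")
        for path in missing:
            print(f"  {path}")
    else:
        print("All Flux GGUF model files are in place.")
```

Run it from the fastsdcpu folder, or pass that folder as `root`.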

|

| 358 |

+

|

| 359 |

+

### Build stablediffusion.cpp shared library for GGUF flux model support(Optional)

|

| 360 |

+

|

| 361 |

+

To build the stablediffusion.cpp library, follow these steps:

|

| 362 |

+

|

| 363 |

+

- `git clone https://github.com/leejet/stable-diffusion.cpp`

|

| 364 |

+

- `cd stable-diffusion.cpp`

|

| 365 |

+

- `git pull origin master`

|

| 366 |

+

- `git submodule init`

|

| 367 |

+

- `git submodule update`

|

| 368 |

+

- `git checkout 14206fd48832ab600d9db75f15acb5062ae2c296`

|

| 369 |

+

- `cmake . -DSD_BUILD_SHARED_LIBS=ON`

|

| 370 |

+

- `cmake --build . --config Release`

|

| 371 |

+

- Copy the built shared library (`stable-diffusion.dll` or `libstable-diffusion.so`) to the fastsdcpu folder
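
The build commands above can also be driven from a script. The sketch below mirrors them in the same order and defaults to a dry run that only prints each step. The `build_plan` and `run_build` helpers are hypothetical, and the script assumes `git` and `cmake` are already on the PATH when the dry run is turned off.

```python
import subprocess
from pathlib import Path

REPO_URL = "https://github.com/leejet/stable-diffusion.cpp"
PINNED_COMMIT = "14206fd48832ab600d9db75f15acb5062ae2c296"


def build_plan(workdir="."):
    """Return (argv, cwd) pairs mirroring the listed commands, in the same order."""
    workdir = Path(workdir)
    repo = workdir / "stable-diffusion.cpp"
    return [
        (["git", "clone", REPO_URL], workdir),
        (["git", "pull", "origin", "master"], repo),
        (["git", "submodule", "init"], repo),
        (["git", "submodule", "update"], repo),
        (["git", "checkout", PINNED_COMMIT], repo),
        (["cmake", ".", "-DSD_BUILD_SHARED_LIBS=ON"], repo),
        (["cmake", "--build", ".", "--config", "Release"], repo),
    ]


def run_build(workdir=".", dry_run=True):
    """Print each step; execute it only when dry_run is False."""
    for argv, cwd in build_plan(workdir):
        print(f"[{cwd}] {' '.join(argv)}")
        if not dry_run:
            # check=True stops the build at the first failing command.
            subprocess.run(argv, cwd=cwd, check=True)


if __name__ == "__main__":
    run_build(dry_run=True)
```

Copying the resulting library into the fastsdcpu folder is left as a manual step, since the output location depends on the platform and CMake generator.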

|

| 372 |

+

|

| 373 |

+

<a id="real-time-text-to-image"></a>

|

| 374 |

+

|

| 375 |

+

## Real-time text to image (EXPERIMENTAL)

|

| 376 |

+

|

| 377 |

+

FastSD CPU can generate images from text in near real time.

|

| 378 |

+

|

| 379 |

+

__CPU (OpenVINO)__

|

| 380 |

+

|

| 381 |

+

For near real-time inference on CPU using OpenVINO, run the `start-realtime.bat` batch file and open the link in a browser (resolution: 512x512, latency: 0.82s on Intel Core i7).

|

| 382 |

+

|

| 383 |

+

Watch the [YouTube video](https://www.youtube.com/watch?v=0XMiLc_vsyI).

|

| 386 |

+

|

| 387 |

+

## Models

|

| 388 |

+

|

| 389 |

+

To use single-file [Safetensors](https://huggingface.co/docs/safetensors/en/index) SD 1.5 models (Civit AI), follow this [YouTube tutorial](https://www.youtube.com/watch?v=zZTfUZnXJVk). Use LCM-LoRA mode for single-file safetensors.

|

| 390 |

+

|

| 391 |

+

Fast SD supports LCM models and LCM-LoRA models.

|

| 392 |

+

|

| 393 |

+

### LCM Models

|

| 394 |

+

|

| 395 |

+

These models can be configured in `configs/lcm-models.txt` file.

|

| 396 |

+

|

| 397 |

+

### OpenVINO models

|

| 398 |

+

|

| 399 |

+

These are models with LCM-LoRA baked in. They can be configured in the `configs/openvino-lcm-models.txt` file.

|

| 400 |

+

|

| 401 |

+

### LCM-LoRA models

|

| 402 |

+

|

| 403 |

+

These models can be configured in `configs/lcm-lora-models.txt` file.

|

| 404 |

+

|

| 405 |

+

- *lcm-lora-sdv1-5* - Distilled consistency adapter for [runwayml/stable-diffusion-v1-5](https://huggingface.co/runwayml/stable-diffusion-v1-5)

|

| 406 |

+

- *lcm-lora-sdxl* - Distilled consistency adapter for [stable-diffusion-xl-base-1.0](https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0)

|

| 407 |

+

- *lcm-lora-ssd-1b* - Distilled consistency adapter for [segmind/SSD-1B](https://huggingface.co/segmind/SSD-1B)

|

| 408 |

+

|

| 409 |

+

These adapters are used together with the Stable Diffusion base models listed in `configs/stable-diffusion-models.txt`.

|

| 410 |

+

|

| 411 |

+

:exclamation: OpenVINO LCM-LoRA models are currently not supported.

|

| 412 |

+

|

| 413 |

+

### How to add new LCM-LoRA models

|

| 414 |

+

|

| 415 |

+

To add a new model, follow the steps below.

As an example we will add `wavymulder/collage-diffusion`; any model fine-tuned from Stable Diffusion 1.5, SDXL or SSD-1B can be used.

|

| 417 |

+

|

| 418 |

+

1. Open the `configs/stable-diffusion-models.txt` file in a text editor.

2. Add the model ID `wavymulder/collage-diffusion` or the path to a locally cloned copy.

|

| 420 |

+

|

| 421 |

+

The updated file looks like this:

|

| 422 |

+

|

| 423 |

+

```
Lykon/dreamshaper-8

|

| 424 |

+

Fictiverse/Stable_Diffusion_PaperCut_Model

|

| 425 |

+

stabilityai/stable-diffusion-xl-base-1.0

|

| 426 |

+

runwayml/stable-diffusion-v1-5

|

| 427 |

+

segmind/SSD-1B

|

| 428 |

+

stablediffusionapi/anything-v5

|

| 429 |

+

wavymulder/collage-diffusion

|

| 430 |

+

```
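The same edit can be scripted. This is a minimal sketch under the assumption that the config file holds one model ID or path per line, as the listing above shows; `add_model_id` is a hypothetical helper and not part of FastSD CPU.

```python
from pathlib import Path


def add_model_id(config_path: Path, model_id: str) -> bool:
    """Append model_id to the config file unless it is already listed.

    Returns True when the file was changed.
    """
    existing = config_path.read_text(encoding="utf-8") if config_path.exists() else ""
    if model_id in (line.strip() for line in existing.splitlines()):
        return False
    # Make sure the new entry starts on its own line.
    separator = "" if existing == "" or existing.endswith("\n") else "\n"
    with config_path.open("a", encoding="utf-8") as handle:
        handle.write(f"{separator}{model_id}\n")
    return True


if __name__ == "__main__":
    config = Path("configs/stable-diffusion-models.txt")
    if config.exists():
        add_model_id(config, "wavymulder/collage-diffusion")
```

Calling the helper twice with the same ID leaves the file unchanged the second time, so it is safe to re-run.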

|

| 431 |

+

|

| 432 |

+

Similarly, you can add an LCM-LoRA model ID to the `configs/lcm-lora-models.txt` file.

|

| 433 |

+

|

| 434 |

+

### How to use LCM-LoRA models offline

|

| 435 |

+

|

| 436 |

+

Follow these steps to run LCM-LoRA models offline:

|

| 437 |

+

|

| 438 |

+

- In the settings, ensure that the "Use locally cached model" option is ticked.

- Download the model, for example `latent-consistency/lcm-lora-sdv1-5`, by running the following commands:

|

| 441 |

+

|

| 442 |

+

```

|

| 443 |

+

git lfs install

|

| 444 |

+

git clone https://huggingface.co/latent-consistency/lcm-lora-sdv1-5

|

| 445 |

+

```

|

| 446 |

+

|

| 447 |

+

Copy the path of the cloned model folder, for example `D:\demo\lcm-lora-sdv1-5`, and update the `configs/lcm-lora-models.txt` file as shown below:

|

| 448 |

+

|

| 449 |

+

```

|

| 450 |

+

D:\demo\lcm-lora-sdv1-5

|

| 451 |

+

latent-consistency/lcm-lora-sdxl

|

| 452 |

+

latent-consistency/lcm-lora-ssd-1b

|

| 453 |

+

```

|

| 454 |

+

|

| 455 |

+

- Open the app and select the newly added local folder in the combo box menu.

|

| 456 |

+

- That's all!
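The config change in the steps above amounts to swapping a Hugging Face model ID for a local folder path. A minimal sketch, assuming one entry per line as in the example file; `use_local_model` is a hypothetical helper, not something FastSD CPU provides.

```python
from pathlib import Path


def use_local_model(config_path: Path, hub_id: str, local_path: str) -> bool:
    """Replace every line equal to hub_id with local_path.

    Returns True when at least one line was replaced.
    """
    lines = config_path.read_text(encoding="utf-8").splitlines()
    changed = False
    for index, line in enumerate(lines):
        if line.strip() == hub_id:
            lines[index] = local_path
            changed = True
    if changed:
        config_path.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return changed


if __name__ == "__main__":
    config = Path("configs/lcm-lora-models.txt")
    if config.exists():
        # The local path is the example folder used in the steps above.
        use_local_model(
            config,
            "latent-consistency/lcm-lora-sdv1-5",
            r"D:\demo\lcm-lora-sdv1-5",
        )
```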

|

| 457 |

+

<a id="useloramodels"></a>

|

| 458 |

+

|

| 459 |

+

## How to use LoRA models

|

| 460 |

+

|

| 461 |

+

Place your LoRA models in the `lora_models` folder and use LCM or LCM-LoRA mode.

You can download LoRA models (`.safetensors` files) from [Civitai](https://civitai.com/) or [Hugging Face](https://huggingface.co/).

For example: [cutecartoonredmond](https://civitai.com/models/207984/cutecartoonredmond-15v-cute-cartoon-lora-for-liberteredmond-sd-15?modelVersionId=234192)

|

| 464 |

+

<a id="usecontrolnet"></a>

|

| 465 |

+

|

| 466 |

+

## ControlNet support

|

| 467 |

+

|

| 468 |

+

We can use ControlNet in LCM-LoRA mode.

|

| 469 |

+

|

| 470 |

+

Download ControlNet models from [ControlNet-v1-1](https://huggingface.co/comfyanonymous/ControlNet-v1-1_fp16_safetensors/tree/main) and place them in the `controlnet_models` folder.

|

| 471 |

+

|

| 472 |

+

Use the medium-sized models (723 MB), for example <https://huggingface.co/comfyanonymous/ControlNet-v1-1_fp16_safetensors/blob/main/control_v11p_sd15_canny_fp16.safetensors>.

|

| 473 |

+

|

| 474 |

+

## Installation

|

| 475 |

+

|

| 476 |

+

### FastSD CPU on Windows

|

| 477 |

+

|

| 478 |

+

|

| 479 |

+

|

| 480 |

+

:exclamation:__You must have a working Python installation (recommended: Python 3.10 or 3.11).__

|

| 481 |

+

|

| 482 |

+

To install FastSD CPU on Windows, follow these steps:

|

| 483 |

+

|

| 484 |

+

- Clone/download this repo or download [release](https://github.com/rupeshs/fastsdcpu/releases).

|

| 485 |

+

- Double-click `install.bat` (installation will take some time, depending on your internet speed).

|

| 486 |

+

- You can run in desktop GUI mode or web UI mode.

|

| 487 |

+

|

| 488 |

+

#### Desktop GUI

|

| 489 |

+

|

| 490 |

+

- To start the desktop GUI, double-click `start.bat`

|

| 491 |

+

|

| 492 |

+

#### Web UI

|

| 493 |

+

|

| 494 |

+

- To start the web UI, double-click `start-webui.bat`

|

| 495 |

+

|

| 496 |

+

### FastSD CPU on Linux

|

| 497 |

+

|

| 498 |

+

:exclamation:__Ensure that you have Python 3.9, 3.10 or 3.11 installed.__

|

| 499 |

+

|

| 500 |

+

- Clone/download this repo or download [release](https://github.com/rupeshs/fastsdcpu/releases).

|

| 501 |

+

- In the terminal, change into the fastsdcpu directory

- Run the following commands

|

| 503 |

+

|

| 504 |

+

`chmod +x install.sh`

|

| 505 |

+

|

| 506 |

+

`./install.sh`

|

| 507 |

+

|

| 508 |

+

#### To start Desktop GUI

|

| 509 |

+

|

| 510 |

+

`./start.sh`

|

| 511 |

+

|

| 512 |

+

#### To start Web UI

|

| 513 |

+

|

| 514 |

+

`./start-webui.sh`

|

| 515 |

+

|

| 516 |

+

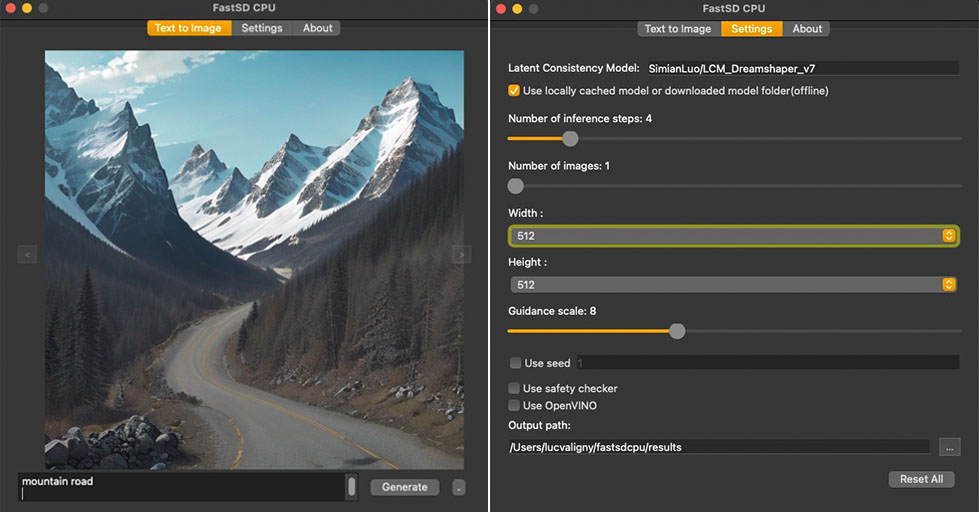

### FastSD CPU on Mac

|

| 517 |

+

|

| 518 |

+

|

| 519 |

+

|

| 520 |

+

:exclamation:__Ensure that you have Python 3.9, 3.10 or 3.11 installed.__

|

| 521 |

+

|

| 522 |

+

Follow these steps to install FastSD CPU on Mac:

|

| 523 |

+

|

| 524 |

+

- Clone/download this repo or download [release](https://github.com/rupeshs/fastsdcpu/releases).

|

| 525 |

+

- In the terminal, change into the fastsdcpu directory

- Run the following commands

|

| 527 |

+

|

| 528 |

+

`chmod +x install-mac.sh`

|

| 529 |

+

|

| 530 |

+

`./install-mac.sh`

|

| 531 |

+

|

| 532 |

+

#### To start Desktop GUI

|

| 533 |

+

|

| 534 |

+

`./start.sh`

|

| 535 |

+

|

| 536 |

+

#### To start Web UI

|

| 537 |

+

|

| 538 |

+

`./start-webui.sh`

|

| 539 |

+

|

| 540 |

+

Thanks to [Autantpourmoi](https://github.com/Autantpourmoi) for Mac testing.

|

| 541 |

+

|

| 542 |

+

:exclamation:OpenVINO is not supported on Macs with M1/M2/M3 chips, but it *does* work on Intel Macs.

|

| 543 |

+

|

| 544 |

+

To increase image generation speed on Mac (M1/M2 chip), set the `DEVICE` environment variable before starting the app:

`export DEVICE=mps` and then run `./start.sh`

|

| 547 |

+

|

| 548 |

+

#### Web UI screenshot

|

| 549 |

+

|

| 550 |

+

|

| 551 |

+

|

| 552 |

+

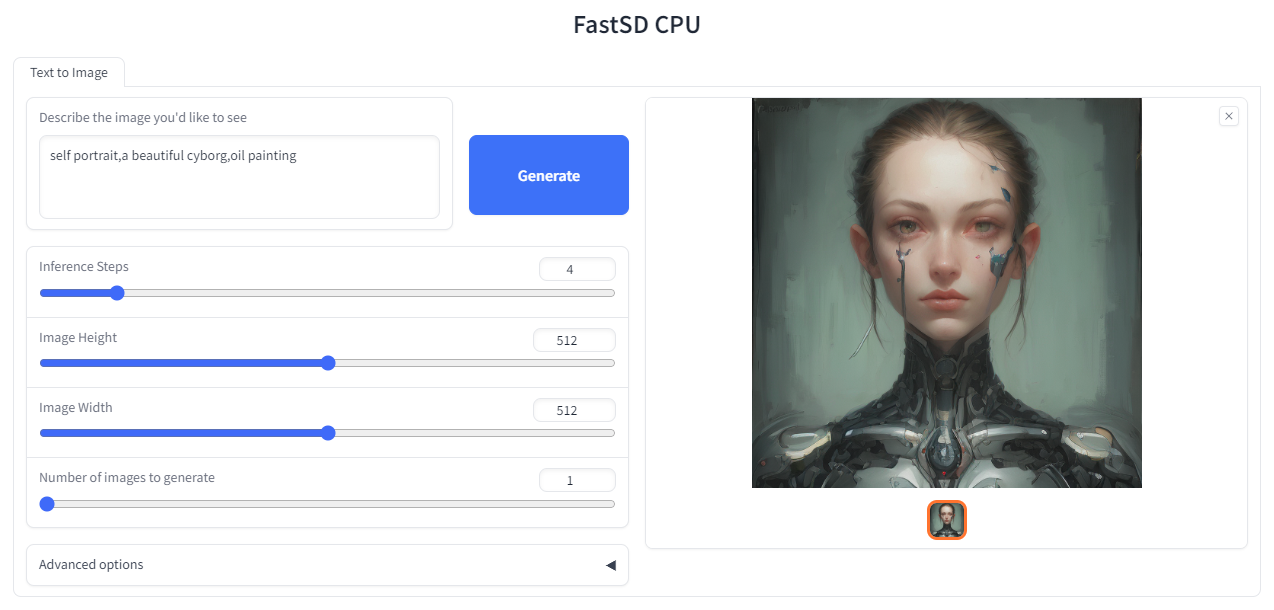

### Google Colab

|

| 553 |

+

|

| 554 |

+

Due to the limitations of using CPU/OpenVINO inside Colab, the Colab notebook uses a GPU.

[Open in Colab](https://colab.research.google.com/drive/1SuAqskB-_gjWLYNRFENAkIXZ1aoyINqL?usp=sharing)

|

| 556 |

+

|

| 557 |

+

### CLI mode (Advanced users)

|

| 558 |

+

|

| 559 |

+

|

| 560 |

+

|

| 561 |

+

Open a terminal and change into the fastsdcpu folder.

Activate the virtual environment using the command for your platform:

|

| 563 |

+

|

| 564 |

+

##### Windows users

|

| 565 |

+

|

| 566 |

+

(Assuming FastSD CPU is located in the directory `D:\fastsdcpu`)

|

| 567 |

+

`D:\fastsdcpu\env\Scripts\activate.bat`

|

| 568 |

+

|

| 569 |

+

##### Linux users

|

| 570 |

+

|

| 571 |

+

`source env/bin/activate`

|

| 572 |

+

|

| 573 |

+

Start the CLI with `python src/app.py -h` to list the available options.

|

| 574 |

+

|

| 575 |

+

<a id="android"></a>

|

| 576 |

+

|

| 577 |

+

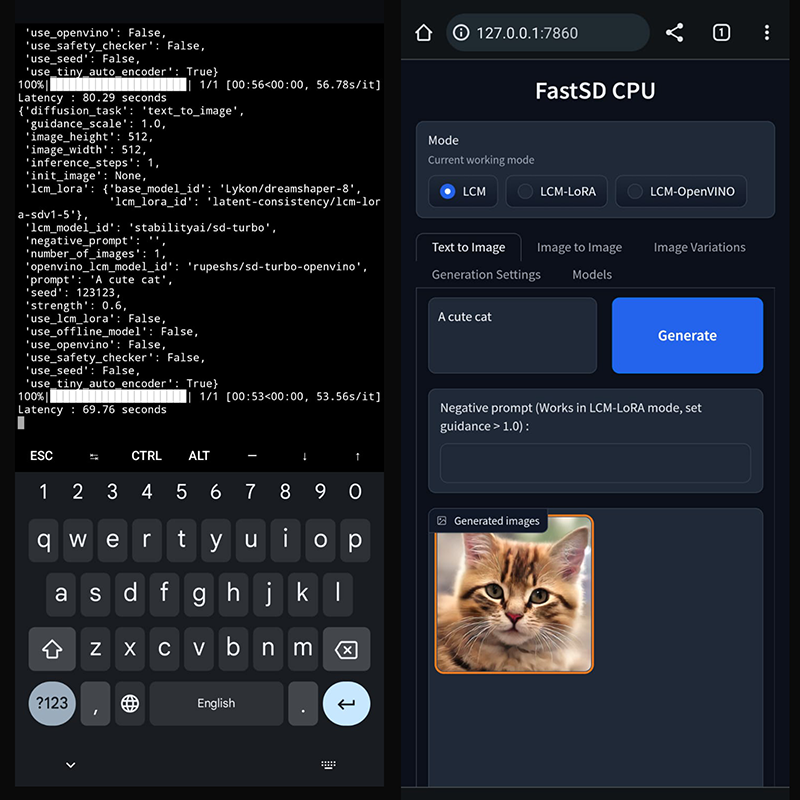

## Android (Termux + PRoot)

|

| 578 |

+

|

| 579 |

+

FastSD CPU running on Google Pixel 7 Pro.

|

| 580 |

+

|

| 581 |

+

|

| 582 |

+

|

| 583 |

+

### 1. Prerequisites

|

| 584 |

+

|

| 585 |

+

First you have to [install Termux](https://wiki.termux.com/wiki/Installing_from_F-Droid) and [install PRoot](https://wiki.termux.com/wiki/PRoot). Then install Ubuntu in PRoot and log in to it.

|

| 586 |

+

|

| 587 |

+

### 2. Install FastSD CPU

|

| 588 |

+

|

| 589 |

+

Run the following commands to install without the Qt GUI.

|

| 590 |

+

|

| 591 |

+

`proot-distro login ubuntu`

|

| 592 |

+

|

| 593 |

+

`./install.sh --disable-gui`

|

| 594 |

+

|

| 595 |

+

After the installation, you can use the web UI:

|

| 596 |

+

|

| 597 |

+

`./start-webui.sh`

|

| 598 |

+

|

| 599 |

+

Note: If you get a `libgl.so.1` import error, run `apt-get install ffmpeg`.

|

| 600 |

+

|

| 601 |

+

Thanks to [patientx](https://github.com/patientx) for this guide: [Step by step guide to installing FASTSDCPU on ANDROID](https://github.com/rupeshs/fastsdcpu/discussions/123)

|

| 602 |

+

|

| 603 |

+

Another step by step guide to run FastSD on Android is [here](https://nolowiz.com/how-to-install-and-run-fastsd-cpu-on-android-temux-step-by-step-guide/)

|

| 604 |

+

|

| 605 |

+

<a id="raspberry"></a>

|

| 606 |

+

|

| 607 |

+

## Raspberry Pi 4 support

|

| 608 |

+

|

| 609 |

+

Thanks to [WGNW_MGM] for Raspberry Pi 4 testing. FastSD CPU worked without problems.

System configuration: Raspberry Pi 4 with 4GB RAM and 8GB of swap memory.

|

| 611 |

+

|

| 612 |

+

<a id="orangepi"></a>

|

| 613 |

+

|

| 614 |

+

## Orange Pi 5 support

|

| 615 |

+

|

| 616 |

+

Thanks to [khanumballz](https://github.com/khanumballz) for testing FastSD CPU with Orange Pi 5.

|

| 617 |

+

[Here is a video of FastSD CPU running on Orange Pi 5](https://www.youtube.com/watch?v=KEJiCU0aK8o).

|

| 618 |

+

|

| 619 |

+

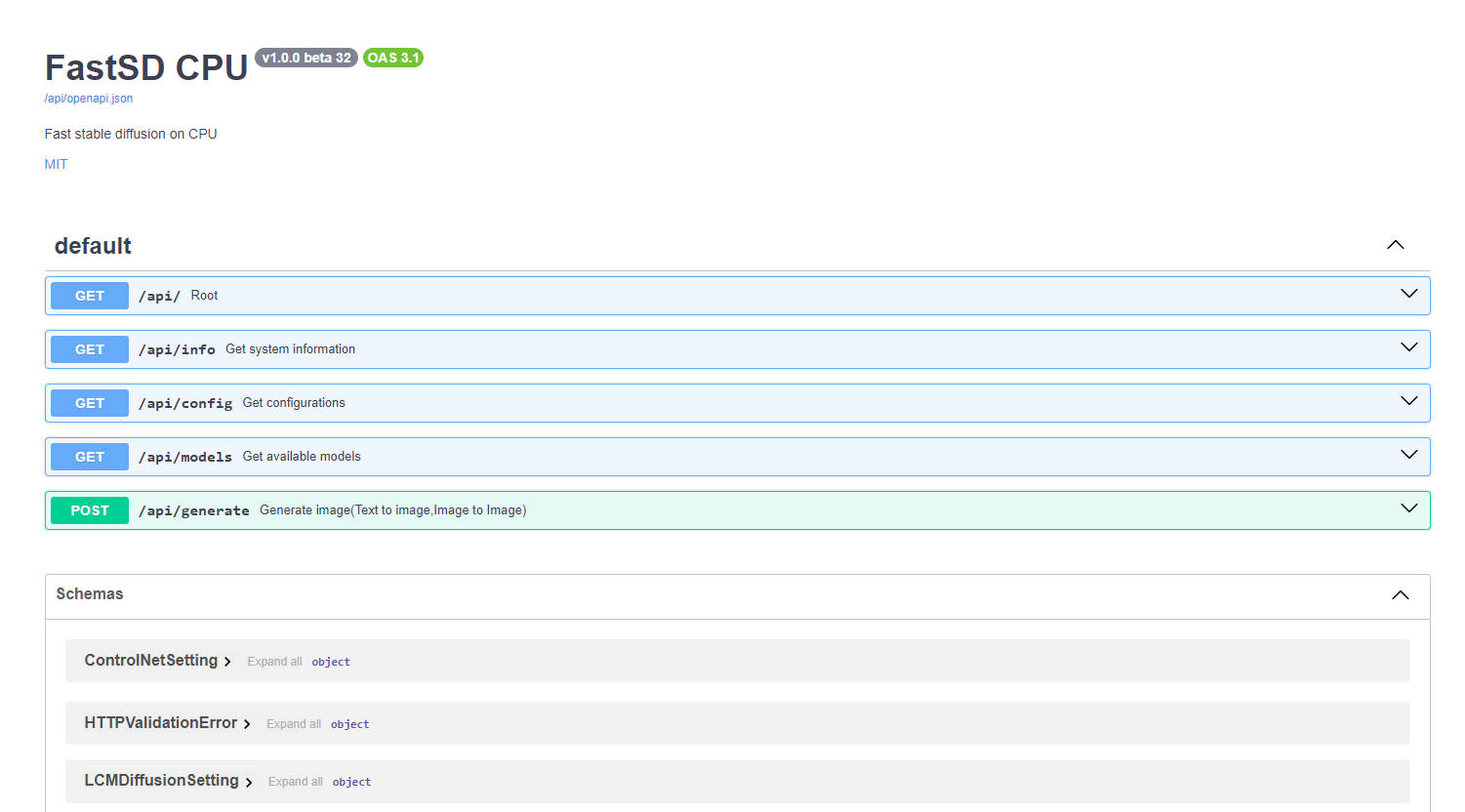

<a id="apisupport"></a>

|

| 620 |

+

|

| 621 |

+

## API support

|

| 622 |

+

|

| 623 |

+

|

| 624 |

+

|

| 625 |

+

FastSD CPU supports basic API endpoints. The following API endpoints are available:

|

| 626 |

+

|

| 627 |

+

- `/api/info` - Get system information

- `/api/config` - Get configuration

- `/api/models` - List all available models

- `/api/generate` - Generate images (text to image, image to image)

|

| 631 |

+

|

| 632 |

+

To start the FastAPI web server, run:

|

| 633 |

+

``python src/app.py --api``

|

| 634 |

+

|

| 635 |

+

or use `start-webserver.sh` for Linux and `start-webserver.bat` for Windows.

|

| 636 |

+

|

| 637 |

+

Access the API documentation locally at <http://localhost:8000/api/docs>.
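The read-only endpoints can be queried from a short script. This is a minimal sketch under two assumptions that are not stated in this README: that the server listens on `localhost:8000` (taken from the documentation URL) and that `/api/info`, `/api/config` and `/api/models` answer plain GET requests with JSON. `endpoint_url` and `fetch_json` are hypothetical helper names.

```python
import json
import urllib.request

# Assumed from the documentation URL; change it if the server runs elsewhere.
API_BASE = "http://localhost:8000"
READ_ONLY_ENDPOINTS = ("info", "config", "models")


def endpoint_url(name: str, base_url: str = API_BASE) -> str:
    """Build the URL of one of the read-only endpoints listed in this README."""
    if name not in READ_ONLY_ENDPOINTS:
        raise ValueError(f"unknown endpoint: {name}")
    return f"{base_url.rstrip('/')}/api/{name}"


def fetch_json(name: str, base_url: str = API_BASE, timeout: float = 10.0):
    """GET an endpoint and parse the body as JSON (assumes a JSON response)."""
    with urllib.request.urlopen(endpoint_url(name, base_url), timeout=timeout) as response:
        return json.load(response)
```

With the web server running, `fetch_json("models")` would return whatever the server reports for `/api/models`; check `/api/docs` for the actual response shape.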

|

| 638 |

+

|

| 639 |

+

The generated image is a JPEG image encoded as a base64 string.

In image-to-image mode, the input image should also be encoded as a base64 string.

|

| 641 |

+

|

| 642 |

+

To generate an image, send a minimal `POST /api/generate` request with the body:

|

| 643 |

+

|

| 644 |

+

```

|

| 645 |

+

{

|

| 646 |

+

"prompt": "a cute cat",

|

| 647 |

+

"use_openvino": true

|

| 648 |

+

}

|

| 649 |

+

```
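The request above can be sent from Python with the standard library only. This is a minimal sketch: the base URL is assumed from the documentation link, the helper names are hypothetical, and the names of the response fields are not given in this README, so look them up in `/api/docs` before reading the result.

```python
import base64
import json
import urllib.request

# Assumed from the documentation URL; change it if the server runs elsewhere.
API_BASE = "http://localhost:8000"


def build_request_body(prompt: str, use_openvino: bool = True) -> bytes:
    """Serialize the minimal request body shown above as UTF-8 JSON."""
    return json.dumps({"prompt": prompt, "use_openvino": use_openvino}).encode("utf-8")


def encode_image(raw: bytes) -> str:
    """Base64-encode an input image for image-to-image requests."""
    return base64.b64encode(raw).decode("ascii")


def decode_image(image_b64: str) -> bytes:
    """Turn a base64 string from the response into raw JPEG bytes."""
    return base64.b64decode(image_b64)


def generate(prompt: str, base_url: str = API_BASE) -> dict:
    """POST the prompt to /api/generate and return the parsed JSON response."""
    request = urllib.request.Request(
        f"{base_url}/api/generate",
        data=build_request_body(prompt),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

Once you know which response field holds the image, pass its value to `decode_image` and write the returned bytes to a `.jpg` file.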

|

| 650 |

+

|

| 651 |

+

## Known issues

|

| 652 |

+

|

| 653 |

+

- TAESD does not work with the OpenVINO image-to-image workflow

|

| 654 |

+

|

| 655 |

+

## License

|

| 656 |

+

|

| 657 |

+

The fastsdcpu project is available as open source under the terms of the [MIT license](https://github.com/rupeshs/fastsdcpu/blob/main/LICENSE).

|

| 658 |

+

|

| 659 |

+

## Disclaimer

|

| 660 |

+

|

| 661 |

+

Users are granted the freedom to create images using this tool, but they are obligated to comply with local laws and utilize it responsibly. The developers will not assume any responsibility for potential misuse by users.

|

| 662 |

+

|

| 663 |

+

<a id="contributors"></a>

|

| 664 |

+

|

| 665 |

+

## Thanks to all our contributors

|

| 666 |

+

|

| 667 |

+

Original Author & Maintainer - [Rupesh Sreeraman](https://github.com/rupeshs)

|

| 668 |

+

|

| 669 |

+

We thank all contributors for their time and hard work!

|

| 670 |

+

|

| 671 |

+

<a href="https://github.com/rupeshs/fastsdcpu/graphs/contributors">

|

| 672 |

+

<img src="https://contrib.rocks/image?repo=rupeshs/fastsdcpu" />

|

| 673 |

+

</a>

|

THIRD-PARTY-LICENSES

ADDED

|

@@ -0,0 +1,143 @@

|

| 1 |

+

stablediffusion.cpp - MIT

|

| 2 |

+

|

| 3 |

+

OpenVINO stablediffusion engine - Apache 2

|

| 4 |

+

|

| 5 |

+

SD Turbo - STABILITY AI NON-COMMERCIAL RESEARCH COMMUNITY LICENSE AGREEMENT

|

| 6 |

+

|

| 7 |

+

MIT License

|

| 8 |

+

|

| 9 |

+

Copyright (c) 2023 leejet

|

| 10 |

+

|

| 11 |

+

Permission is hereby granted, free of charge, to any person obtaining a copy

|

| 12 |

+

of this software and associated documentation files (the "Software"), to deal

|

| 13 |

+

in the Software without restriction, including without limitation the rights

|

| 14 |

+

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

|

| 15 |

+

copies of the Software, and to permit persons to whom the Software is

|

| 16 |

+

furnished to do so, subject to the following conditions:

|

| 17 |

+

|

| 18 |

+

The above copyright notice and this permission notice shall be included in all

|

| 19 |

+

copies or substantial portions of the Software.

|

| 20 |

+

|

| 21 |

+

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

| 22 |

+

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

| 23 |

+

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

| 24 |

+

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

| 25 |

+

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

|

| 26 |

+

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

|

| 27 |

+

SOFTWARE.

|

| 28 |

+

|

| 29 |

+

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

| 30 |

+

|

| 31 |

+

Definitions.

|

| 32 |

+

|

| 33 |

+

"License" shall mean the terms and conditions for use, reproduction, and distribution as defined by Sections 1 through 9 of this document.

|

| 34 |

+

|

| 35 |

+

"Licensor" shall mean the copyright owner or entity authorized by the copyright owner that is granting the License.

|

| 36 |

+

|

| 37 |

+