url stringlengths 61 61 | repository_url stringclasses 1 value | labels_url stringlengths 75 75 | comments_url stringlengths 70 70 | events_url stringlengths 68 68 | html_url stringlengths 49 51 | id int64 986M 1.61B | node_id stringlengths 18 32 | number int64 2.87k 5.61k | title stringlengths 1 290 | user dict | labels list | state stringclasses 2 values | locked bool 1 class | assignee dict | assignees list | milestone dict | comments sequence | created_at timestamp[s] | updated_at timestamp[s] | closed_at timestamp[s] | author_association stringclasses 3 values | active_lock_reason null | body stringlengths 2 36.2k ⌀ | reactions dict | timeline_url stringlengths 70 70 | performed_via_github_app null | state_reason stringclasses 3 values | draft bool 2 classes | pull_request dict | is_pull_request bool 2 classes |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/5609 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5609/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5609/comments | https://api.github.com/repos/huggingface/datasets/issues/5609/events | https://github.com/huggingface/datasets/issues/5609 | 1,610,062,862 | I_kwDODunzps5f95wO | 5,609 | `load_from_disk` vs `load_dataset` performance. | {

"login": "davidgilbertson",

"id": 4443482,

"node_id": "MDQ6VXNlcjQ0NDM0ODI=",

"avatar_url": "https://avatars.githubusercontent.com/u/4443482?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/davidgilbertson",

"html_url": "https://github.com/davidgilbertson",

"followers_url": "https://api.github.com/users/davidgilbertson/followers",

"following_url": "https://api.github.com/users/davidgilbertson/following{/other_user}",

"gists_url": "https://api.github.com/users/davidgilbertson/gists{/gist_id}",

"starred_url": "https://api.github.com/users/davidgilbertson/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/davidgilbertson/subscriptions",

"organizations_url": "https://api.github.com/users/davidgilbertson/orgs",

"repos_url": "https://api.github.com/users/davidgilbertson/repos",

"events_url": "https://api.github.com/users/davidgilbertson/events{/privacy}",

"received_events_url": "https://api.github.com/users/davidgilbertson/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [] | 2023-03-05T05:27:15 | 2023-03-05T05:27:15 | null | NONE | null | ### Describe the bug

I have downloaded `openwebtext` (~12GB) and filtered out a small amount of junk (it's still huge). Now, I would like to use this filtered version for future work. It seems I have two choices:

1. Use `load_dataset` each time, relying on the cache mechanism, and re-run my filtering.

2. `save_to_disk` and then use `load_from_disk` to load the filtered version.

The performance of these two approaches is wildly different:

* Using `load_dataset` takes about 20 seconds to load the dataset, and a few seconds to re-filter (thanks to the brilliant filter/map caching).

* Using `load_from_disk` takes 14 minutes! And the second time I tried, the session just crashed (on a machine with 32 GB of RAM).

I don't know if you'd call this a bug, but it seems like there shouldn't need to be two methods for loading from disk; or, if there are two, they shouldn't take such wildly different amounts of time, and neither should crash. Alternatively, the docs could offer some guidance on when to pick which method, why two methods exist, or simply how most people do it.

Something I couldn't work out from the docs: can I modify a dataset from the Hub, save it locally, and then use `load_dataset` to load it? This [post seemed to suggest that the answer is no](https://discuss.huggingface.co/t/save-and-load-datasets/9260).

### Steps to reproduce the bug

See above

### Expected behavior

Load times should be about the same.

### Environment info

- `datasets` version: 2.9.0

- Platform: Linux-5.10.102.1-microsoft-standard-WSL2-x86_64-with-glibc2.31

- Python version: 3.10.8

- PyArrow version: 11.0.0

- Pandas version: 1.5.3 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5609/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5609/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/5608 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5608/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5608/comments | https://api.github.com/repos/huggingface/datasets/issues/5608/events | https://github.com/huggingface/datasets/issues/5608 | 1,609,996,563 | I_kwDODunzps5f9pkT | 5,608 | audiofolder only creates dataset of 13 rows (files) when the data folder it's reading from has 20,000 mp3 files. | {

"login": "jcho19",

"id": 107211437,

"node_id": "U_kgDOBmPqrQ",

"avatar_url": "https://avatars.githubusercontent.com/u/107211437?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/jcho19",

"html_url": "https://github.com/jcho19",

"followers_url": "https://api.github.com/users/jcho19/followers",

"following_url": "https://api.github.com/users/jcho19/following{/other_user}",

"gists_url": "https://api.github.com/users/jcho19/gists{/gist_id}",

"starred_url": "https://api.github.com/users/jcho19/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/jcho19/subscriptions",

"organizations_url": "https://api.github.com/users/jcho19/orgs",

"repos_url": "https://api.github.com/users/jcho19/repos",

"events_url": "https://api.github.com/users/jcho19/events{/privacy}",

"received_events_url": "https://api.github.com/users/jcho19/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [] | 2023-03-05T00:14:45 | 2023-03-05T00:14:45 | null | NONE | null | ### Describe the bug

`x = load_dataset("audiofolder", data_dir="x")`

When running this, `x` is a dataset of 13 rows (files), but it should have 20,000 rows, since the data_dir `"x"` contains 20,000 mp3 files. Does anyone know what could cause this (the naming convention of the mp3 files, etc.)?
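One quick way to test the naming-convention hypothesis is to tally the file extensions actually present under `data_dir`, on the assumption that folder-based loaders pick up files by their extension. `count_extensions` below is a hypothetical stdlib-only helper, not part of `datasets`; it lower-cases extensions and walks subdirectories recursively.

```python
from collections import Counter
from pathlib import Path


def count_extensions(data_dir: str) -> Counter:
    """Tally lower-cased file extensions of every file under data_dir, recursively.

    Hypothetical debugging helper; not part of the `datasets` API.
    """
    counts: Counter = Counter()
    for path in Path(data_dir).rglob("*"):
        if path.is_file():
            # Files with no extension are counted under the empty string "".
            counts[path.suffix.lower()] += 1
    return counts
```

For example, `print(count_extensions("x").most_common())` would show whether all 20,000 files really end in `.mp3`, or whether most of them use some other extension.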

### Steps to reproduce the bug

`x = load_dataset("audiofolder", data_dir="x")`

### Expected behavior

`x = load_dataset("audiofolder", data_dir="x")` should create a dataset of 20,000 rows (files).

### Environment info

- `datasets` version: 2.9.0

- Platform: Linux-3.10.0-1160.80.1.el7.x86_64-x86_64-with-glibc2.17

- Python version: 3.9.16

- PyArrow version: 11.0.0

- Pandas version: 1.5.3 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5608/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5608/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/5607 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5607/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5607/comments | https://api.github.com/repos/huggingface/datasets/issues/5607/events | https://github.com/huggingface/datasets/pull/5607 | 1,609,166,035 | PR_kwDODunzps5LQPbG | 5,607 | Don't save dataset info to cache dir when skipping verifications | {

"login": "polinaeterna",

"id": 16348744,

"node_id": "MDQ6VXNlcjE2MzQ4NzQ0",

"avatar_url": "https://avatars.githubusercontent.com/u/16348744?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/polinaeterna",

"html_url": "https://github.com/polinaeterna",

"followers_url": "https://api.github.com/users/polinaeterna/followers",

"following_url": "https://api.github.com/users/polinaeterna/following{/other_user}",

"gists_url": "https://api.github.com/users/polinaeterna/gists{/gist_id}",

"starred_url": "https://api.github.com/users/polinaeterna/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/polinaeterna/subscriptions",

"organizations_url": "https://api.github.com/users/polinaeterna/orgs",

"repos_url": "https://api.github.com/users/polinaeterna/repos",

"events_url": "https://api.github.com/users/polinaeterna/events{/privacy}",

"received_events_url": "https://api.github.com/users/polinaeterna/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_5607). All of your documentation changes will be reflected on that endpoint."

] | 2023-03-03T19:50:29 | 2023-03-03T20:13:36 | null | CONTRIBUTOR | null | I think it makes sense not to save the `dataset_info.json` file to the dataset cache directory when loading a dataset with `verification_mode="no_checks"`. Otherwise, the next time the dataset is loaded **without** `verification_mode="no_checks"`, it will load successfully even though some values in the info might not match the ones in the repo, which was the reason for using `verification_mode="no_checks"` in the first place. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5607/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5607/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5607",

"html_url": "https://github.com/huggingface/datasets/pull/5607",

"diff_url": "https://github.com/huggingface/datasets/pull/5607.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5607.patch",

"merged_at": null

} | true |

https://api.github.com/repos/huggingface/datasets/issues/5606 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5606/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5606/comments | https://api.github.com/repos/huggingface/datasets/issues/5606/events | https://github.com/huggingface/datasets/issues/5606 | 1,608,911,632 | I_kwDODunzps5f5gsQ | 5,606 | Add `Dataset.to_list` to the API | {

"login": "mariosasko",

"id": 47462742,

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/mariosasko",

"html_url": "https://github.com/mariosasko",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

},

{

"id": 1935892877,

"node_id": "MDU6... | open | false | null | [] | null | [

"Hello, I have an interest in this issue.\r\nIs the `Dataset.to_dict` you are describing correct in the code here?\r\n\r\nhttps://github.com/huggingface/datasets/blob/35b789e8f6826b6b5a6b48fcc2416c890a1f326a/src/datasets/arrow_dataset.py#L4633-L4667"

] | 2023-03-03T16:17:10 | 2023-03-04T06:27:08 | null | CONTRIBUTOR | null | Since there is `Dataset.from_list` in the API, we should also add `Dataset.to_list` to be consistent.
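For context, `Dataset.to_dict` returns a column-oriented mapping (one list per column), whereas a `to_list` that mirrors `from_list` would return one dict per row. The pure-Python sketch below shows that conversion; `columns_to_rows` is a hypothetical illustration that does not use pyarrow, whose `Table.to_pylist` performs the equivalent conversion natively.

```python
from typing import Any, Dict, List


def columns_to_rows(columns: Dict[str, List[Any]]) -> List[Dict[str, Any]]:
    """Convert a column-oriented dict (like `to_dict` output) into a list of row dicts.

    Illustrative sketch only, not the `datasets` implementation.
    """
    lengths = {len(values) for values in columns.values()}
    if len(lengths) > 1:
        raise ValueError(f"columns have different lengths: {sorted(lengths)}")
    num_rows = lengths.pop() if lengths else 0
    # Build one dict per row, keeping the original column order.
    return [{name: values[i] for name, values in columns.items()} for i in range(num_rows)]


print(columns_to_rows({"text": ["a", "b"], "label": [0, 1]}))
# [{'text': 'a', 'label': 0}, {'text': 'b', 'label': 1}]
```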

Regarding the implementation, we can re-use `Dataset.to_dict`'s code and replace the `to_pydict` calls with `to_pylist`. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5606/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5606/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/5605 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5605/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5605/comments | https://api.github.com/repos/huggingface/datasets/issues/5605/events | https://github.com/huggingface/datasets/pull/5605 | 1,608,865,460 | PR_kwDODunzps5LPPf5 | 5,605 | Update README logo | {

"login": "gary149",

"id": 3841370,

"node_id": "MDQ6VXNlcjM4NDEzNzA=",

"avatar_url": "https://avatars.githubusercontent.com/u/3841370?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/gary149",

"html_url": "https://github.com/gary149",

"followers_url": "https://api.github.com/users/gary149/followers",

"following_url": "https://api.github.com/users/gary149/following{/other_user}",

"gists_url": "https://api.github.com/users/gary149/gists{/gist_id}",

"starred_url": "https://api.github.com/users/gary149/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/gary149/subscriptions",

"organizations_url": "https://api.github.com/users/gary149/orgs",

"repos_url": "https://api.github.com/users/gary149/repos",

"events_url": "https://api.github.com/users/gary149/events{/privacy}",

"received_events_url": "https://api.github.com/users/gary149/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._",

"Are you sure it's safe to remove? https://github.com/huggingface/datasets/pull/3866",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benc... | 2023-03-03T15:46:31 | 2023-03-03T21:57:18 | 2023-03-03T21:50:17 | CONTRIBUTOR | null | null | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5605/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5605/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5605",

"html_url": "https://github.com/huggingface/datasets/pull/5605",

"diff_url": "https://github.com/huggingface/datasets/pull/5605.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5605.patch",

"merged_at": "2023-03-03T21:50:17"

} | true |

https://api.github.com/repos/huggingface/datasets/issues/5604 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5604/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5604/comments | https://api.github.com/repos/huggingface/datasets/issues/5604/events | https://github.com/huggingface/datasets/issues/5604 | 1,608,304,775 | I_kwDODunzps5f3MiH | 5,604 | Problems with downloading The Pile | {

"login": "sentialx",

"id": 11065386,

"node_id": "MDQ6VXNlcjExMDY1Mzg2",

"avatar_url": "https://avatars.githubusercontent.com/u/11065386?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/sentialx",

"html_url": "https://github.com/sentialx",

"followers_url": "https://api.github.com/users/sentialx/followers",

"following_url": "https://api.github.com/users/sentialx/following{/other_user}",

"gists_url": "https://api.github.com/users/sentialx/gists{/gist_id}",

"starred_url": "https://api.github.com/users/sentialx/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/sentialx/subscriptions",

"organizations_url": "https://api.github.com/users/sentialx/orgs",

"repos_url": "https://api.github.com/users/sentialx/repos",

"events_url": "https://api.github.com/users/sentialx/events{/privacy}",

"received_events_url": "https://api.github.com/users/sentialx/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [] | 2023-03-03T09:52:08 | 2023-03-03T09:52:08 | null | NONE | null | ### Describe the bug

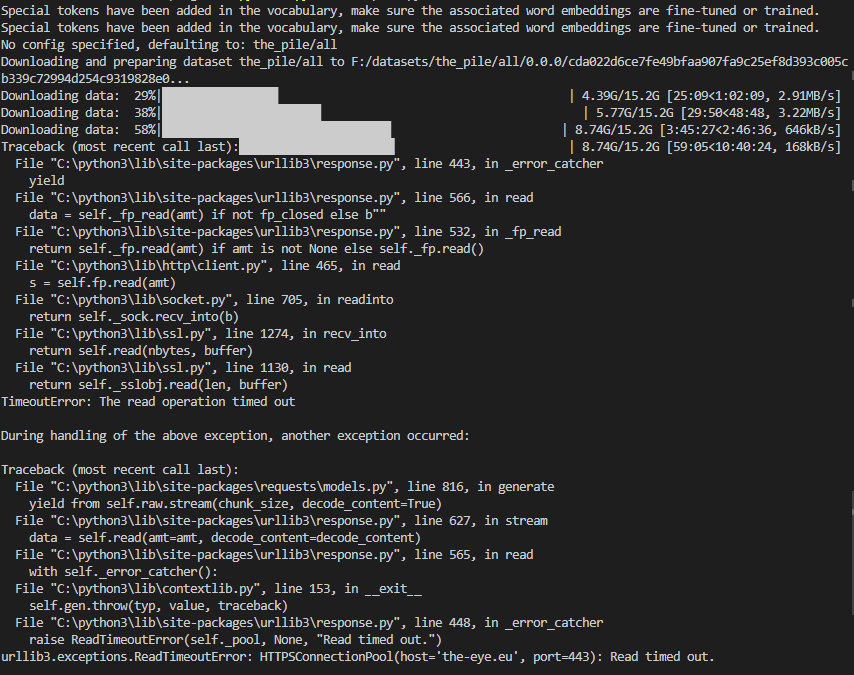

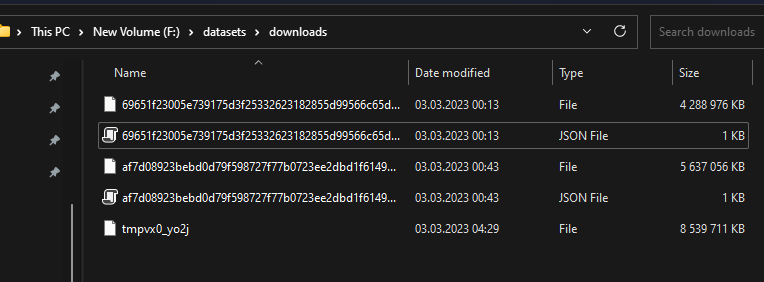

The downloads in the screenshot seem to be interrupted after some time and the last download throws a "Read timed out" error.

Here are the downloaded files:

They should all be 14 GB, like the files here (https://the-eye.eu/public/AI/pile/train/).

Alternatively, can I download the files myself and then run the dataset's preparation script on them?

### Steps to reproduce the bug

`dataset = load_dataset('the_pile', split='train', cache_dir=r'F:\datasets')`

### Expected behavior

The files should be downloaded correctly.

### Environment info

- `datasets` version: 2.10.1

- Platform: Windows-10-10.0.22623-SP0

- Python version: 3.10.5

- PyArrow version: 9.0.0

- Pandas version: 1.4.2 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5604/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5604/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/5603 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5603/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5603/comments | https://api.github.com/repos/huggingface/datasets/issues/5603/events | https://github.com/huggingface/datasets/pull/5603 | 1,607,143,509 | PR_kwDODunzps5LJZzG | 5,603 | Don't compute checksums if not necessary in `datasets-cli test` | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | read_batch_formatted_as_numpy after write_array2d | rea... | 2023-03-02T16:42:39 | 2023-03-03T15:45:32 | 2023-03-03T15:38:28 | MEMBER | null | we only need them if there exists a `dataset_infos.json` | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5603/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5603/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5603",

"html_url": "https://github.com/huggingface/datasets/pull/5603",

"diff_url": "https://github.com/huggingface/datasets/pull/5603.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5603.patch",

"merged_at": "2023-03-03T15:38:28"

} | true |

https://api.github.com/repos/huggingface/datasets/issues/5602 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5602/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5602/comments | https://api.github.com/repos/huggingface/datasets/issues/5602/events | https://github.com/huggingface/datasets/pull/5602 | 1,607,054,110 | PR_kwDODunzps5LJGfa | 5,602 | Return dict structure if columns are lists - to_tf_dataset | {

"login": "amyeroberts",

"id": 22614925,

"node_id": "MDQ6VXNlcjIyNjE0OTI1",

"avatar_url": "https://avatars.githubusercontent.com/u/22614925?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/amyeroberts",

"html_url": "https://github.com/amyeroberts",

"followers_url": "https://api.github.com/users/amyeroberts/followers",

"following_url": "https://api.github.com/users/amyeroberts/following{/other_user}",

"gists_url": "https://api.github.com/users/amyeroberts/gists{/gist_id}",

"starred_url": "https://api.github.com/users/amyeroberts/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/amyeroberts/subscriptions",

"organizations_url": "https://api.github.com/users/amyeroberts/orgs",

"repos_url": "https://api.github.com/users/amyeroberts/repos",

"events_url": "https://api.github.com/users/amyeroberts/events{/privacy}",

"received_events_url": "https://api.github.com/users/amyeroberts/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_5602). All of your documentation changes will be reflected on that endpoint.",

"This is a great PR! Thinking about the UX though, maybe we could do it without the extra argument? Before this PR, the logic in `to_tf_dataset` was... | 2023-03-02T15:51:12 | 2023-03-03T21:21:39 | null | CONTRIBUTOR | null | This PR introduces new logic to `to_tf_dataset` affecting the returned data structure, enabling a dictionary structure to be returned, even if only one feature column is selected.

If `columns` or `label_cols` is passed to `to_tf_dataset` as a list, the corresponding features or labels are returned as a dictionary keyed by column name. If it is passed as a string, the bare tensor is returned.

An outline of the behaviour:

```

dataset.to_tf_dataset(columns=["col_1"], label_cols="col_2")
# ({'col_1': col_1}, col_2)

dataset.to_tf_dataset(columns="col_1", label_cols="col_2")
# (col_1, col_2)

dataset.to_tf_dataset(columns="col_1")
# col_1

dataset.to_tf_dataset(columns=["col_1"], label_cols=["col_2"])
# ({'col_1': col_1}, {'col_2': col_2})

dataset.to_tf_dataset(columns="col_1", label_cols=["col_2"])
# (col_1, {'col_2': col_2})

```
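The outline above boils down to one rule applied independently to `columns` and `label_cols`: a string selects a bare value, and a list selects a dict keyed by column name. The sketch below illustrates that rule on a plain Python dict standing in for a batch. `select` and `structure_batch` are hypothetical helpers, not the actual `to_tf_dataset` implementation, and plain values stand in for tensors.

```python
from typing import Any, Dict, List, Optional, Union

Columns = Union[str, List[str]]


def select(batch: Dict[str, Any], cols: Columns) -> Any:
    """A string selects the bare value; a list selects a dict keyed by column name."""
    if isinstance(cols, str):
        return batch[cols]
    return {name: batch[name] for name in cols}


def structure_batch(batch: Dict[str, Any], columns: Columns, label_cols: Optional[Columns] = None) -> Any:
    """Hypothetical sketch of the output structure described in this PR."""
    features = select(batch, columns)
    if label_cols is None:
        # No labels: return the features alone (a bare value or a dict).
        return features
    # With labels: return a (features, labels) pair, each shaped by the same rule.
    return features, select(batch, label_cols)


batch = {"col_1": 1, "col_2": 2}
print(structure_batch(batch, ["col_1"], "col_2"))  # ({'col_1': 1}, 2)
print(structure_batch(batch, "col_1"))  # 1
```

Under this rule, `columns=["pixel_values"]` with no labels yields `{"pixel_values": ...}`, which is the structure a label-free `model.fit` call expects.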

## Motivation

Currently, when calling `to_tf_dataset` with a single feature column, the returned dataset yields a bare tensor rather than a dictionary (see the previous behaviour in the example below). This can cause issues when calling `model.fit` on models which train without labels, e.g. [TFViTMAEForPreTraining](https://github.com/huggingface/transformers/blob/b6f47b539377ac1fd845c7adb4ccaa5eb514e126/src/transformers/models/vit_mae/modeling_vit_mae.py#L849), specifically at [this line](https://github.com/huggingface/transformers/blob/d9e28d91a8b2d09b51a33155d3a03ad9fcfcbd1f/src/transformers/modeling_tf_utils.py#L1521), where the input `x` is assumed to be a dictionary if there is no label.

## Example

Previous behaviour

```python

In [1]: import tensorflow as tf
   ...: from datasets import load_dataset
   ...:
   ...:
   ...: def transform(batch):
   ...:     def _transform_img(img):
   ...:         img = img.convert("RGB")
   ...:         img = tf.keras.utils.img_to_array(img)
   ...:         img = tf.image.resize(img, (224, 224))
   ...:         img /= 255.0
   ...:         img = tf.transpose(img, perm=[2, 0, 1])
   ...:         return img
   ...:     batch['pixel_values'] = [_transform_img(pil_img) for pil_img in batch['img']]
   ...:     return batch
   ...:
   ...:
   ...: def collate_fn(examples):
   ...:     pixel_values = tf.stack([example["pixel_values"] for example in examples])
   ...:     return {"pixel_values": pixel_values}
   ...:
   ...:
   ...: dataset = load_dataset('cifar10')['train']
   ...: dataset = dataset.with_transform(transform)
   ...: dataset.to_tf_dataset(batch_size=8, columns=['pixel_values'], collate_fn=collate_fn)

Out[1]: <PrefetchDataset element_spec=TensorSpec(shape=(None, 3, 224, 224), dtype=tf.float32, name=None)>

```

New behaviour

```python

In [1]: import tensorflow as tf
   ...: from datasets import load_dataset
   ...:
   ...:
   ...: def transform(batch):
   ...:     def _transform_img(img):
   ...:         img = img.convert("RGB")
   ...:         img = tf.keras.utils.img_to_array(img)
   ...:         img = tf.image.resize(img, (224, 224))
   ...:         img /= 255.0
   ...:         img = tf.transpose(img, perm=[2, 0, 1])
   ...:         return img
   ...:     batch['pixel_values'] = [_transform_img(pil_img) for pil_img in batch['img']]
   ...:     return batch
   ...:
   ...:
   ...: def collate_fn(examples):
   ...:     pixel_values = tf.stack([example["pixel_values"] for example in examples])
   ...:     return {"pixel_values": pixel_values}
   ...:
   ...:
   ...: dataset = load_dataset('cifar10')['train']
   ...: dataset = dataset.with_transform(transform)
   ...: dataset.to_tf_dataset(batch_size=8, columns=['pixel_values'], collate_fn=collate_fn)

Out[1]: <PrefetchDataset element_spec={'pixel_values': TensorSpec(shape=(None, 3, 224, 224), dtype=tf.float32, name=None)}>

``` | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5602/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5602/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5602",

"html_url": "https://github.com/huggingface/datasets/pull/5602",

"diff_url": "https://github.com/huggingface/datasets/pull/5602.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5602.patch",

"merged_at": null

} | true |

https://api.github.com/repos/huggingface/datasets/issues/5601 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5601/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5601/comments | https://api.github.com/repos/huggingface/datasets/issues/5601/events | https://github.com/huggingface/datasets/issues/5601 | 1,606,685,976 | I_kwDODunzps5fxBUY | 5,601 | Authorization error | {

"login": "OleksandrKorovii",

"id": 107404835,

"node_id": "U_kgDOBmbeIw",

"avatar_url": "https://avatars.githubusercontent.com/u/107404835?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/OleksandrKorovii",

"html_url": "https://github.com/OleksandrKorovii",

"followers_url": "https://api.github.com/users/OleksandrKorovii/followers",

"following_url": "https://api.github.com/users/OleksandrKorovii/following{/other_user}",

"gists_url": "https://api.github.com/users/OleksandrKorovii/gists{/gist_id}",

"starred_url": "https://api.github.com/users/OleksandrKorovii/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/OleksandrKorovii/subscriptions",

"organizations_url": "https://api.github.com/users/OleksandrKorovii/orgs",

"repos_url": "https://api.github.com/users/OleksandrKorovii/repos",

"events_url": "https://api.github.com/users/OleksandrKorovii/events{/privacy}",

"received_events_url": "https://api.github.com/users/OleksandrKorovii/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [] | 2023-03-02T12:08:39 | 2023-03-03T07:32:54 | null | NONE | null | ### Describe the bug

I get an `Authorization error` when trying to push data to the Hugging Face datasets Hub.

### Steps to reproduce the bug

I followed all the steps in the [tutorial](https://huggingface.co/docs/datasets/share):

1. `huggingface-cli login` with WRITE token

2. `git lfs install`

3. `git clone https://huggingface.co/datasets/namespace/your_dataset_name`

4.

```

cp /somewhere/data/*.json .

git lfs track "*.json"

git add .gitattributes

git add *.json

git commit -m "add json files"

```

But when I run `git push`, I get this error:

```

Uploading LFS objects: 0% (0/1), 0 B | 0 B/s, done.

batch response: Authorization error.

error: failed to push some refs to 'https://huggingface.co/datasets/zeusfsx/ukrainian-news'

```

The data is ~100 GB in total, split across five JSON files.

### Expected behavior

All my data is pushed to the Hub.

### Environment info

- `datasets` version: 2.10.1

- Platform: macOS-13.2.1-arm64-arm-64bit

- Python version: 3.10.10

- PyArrow version: 11.0.0

- Pandas version: 1.5.3 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5601/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5601/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/5600 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5600/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5600/comments | https://api.github.com/repos/huggingface/datasets/issues/5600/events | https://github.com/huggingface/datasets/issues/5600 | 1,606,585,596 | I_kwDODunzps5fwoz8 | 5,600 | Dataloader getitem not working for DreamboothDatasets | {

"login": "salahiguiliz",

"id": 76955987,

"node_id": "MDQ6VXNlcjc2OTU1OTg3",

"avatar_url": "https://avatars.githubusercontent.com/u/76955987?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/salahiguiliz",

"html_url": "https://github.com/salahiguiliz",

"followers_url": "https://api.github.com/users/salahiguiliz/followers",

"following_url": "https://api.github.com/users/salahiguiliz/following{/other_user}",

"gists_url": "https://api.github.com/users/salahiguiliz/gists{/gist_id}",

"starred_url": "https://api.github.com/users/salahiguiliz/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/salahiguiliz/subscriptions",

"organizations_url": "https://api.github.com/users/salahiguiliz/orgs",

"repos_url": "https://api.github.com/users/salahiguiliz/repos",

"events_url": "https://api.github.com/users/salahiguiliz/events{/privacy}",

"received_events_url": "https://api.github.com/users/salahiguiliz/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [] | 2023-03-02T11:00:27 | 2023-03-02T11:00:27 | null | NONE | null | ### Describe the bug

The Dataloader `getitem` no longer works as it did before (see the DreamBoothDataset example).

Switching to 2.8.0 solved the issue.

### Steps to reproduce the bug

1. Use DreamBoothDataset to load some images.

2. After loading, an error occurs when trying to visualise the images.

### Expected behavior

I expected to get a NumPy array of the image.

### Environment info

- Platform: Linux-5.10.147+-x86_64-with-glibc2.29

- Python version: 3.8.10

- PyArrow version: 9.0.0

- Pandas version: 1.3.5 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5600/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5600/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/5598 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5598/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5598/comments | https://api.github.com/repos/huggingface/datasets/issues/5598/events | https://github.com/huggingface/datasets/pull/5598 | 1,605,018,478 | PR_kwDODunzps5LCMiX | 5,598 | Fix push_to_hub with no dataset_infos | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | read_batch_formatted_as_numpy after write_array2d | rea... | 2023-03-01T13:54:06 | 2023-03-02T13:47:13 | 2023-03-02T13:40:17 | MEMBER | null | As reported in https://github.com/vijaydwivedi75/lrgb/issues/10, `push_to_hub` fails if the remote repository already exists and has a README.md without `dataset_info` in the YAML tags

cc @clefourrier | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5598/reactions",

"total_count": 1,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 1,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5598/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5598",

"html_url": "https://github.com/huggingface/datasets/pull/5598",

"diff_url": "https://github.com/huggingface/datasets/pull/5598.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5598.patch",

"merged_at": "2023-03-02T13:40:17"

} | true |

https://api.github.com/repos/huggingface/datasets/issues/5597 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5597/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5597/comments | https://api.github.com/repos/huggingface/datasets/issues/5597/events | https://github.com/huggingface/datasets/issues/5597 | 1,604,928,721 | I_kwDODunzps5fqUTR | 5,597 | in-place dataset update | {

"login": "speedcell4",

"id": 3585459,

"node_id": "MDQ6VXNlcjM1ODU0NTk=",

"avatar_url": "https://avatars.githubusercontent.com/u/3585459?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/speedcell4",

"html_url": "https://github.com/speedcell4",

"followers_url": "https://api.github.com/users/speedcell4/followers",

"following_url": "https://api.github.com/users/speedcell4/following{/other_user}",

"gists_url": "https://api.github.com/users/speedcell4/gists{/gist_id}",

"starred_url": "https://api.github.com/users/speedcell4/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/speedcell4/subscriptions",

"organizations_url": "https://api.github.com/users/speedcell4/orgs",

"repos_url": "https://api.github.com/users/speedcell4/repos",

"events_url": "https://api.github.com/users/speedcell4/events{/privacy}",

"received_events_url": "https://api.github.com/users/speedcell4/received_events",

"type": "User",

"site_admin": false

} | [

{

"id": 1935892913,

"node_id": "MDU6TGFiZWwxOTM1ODkyOTEz",

"url": "https://api.github.com/repos/huggingface/datasets/labels/wontfix",

"name": "wontfix",

"color": "ffffff",

"default": true,

"description": "This will not be worked on"

}

] | closed | false | null | [] | null | [

"We won't support in-place modifications since `datasets` is based on the Apache Arrow format which doesn't support in-place modifications.\r\n\r\nIn your case the old dataset is garbage collected pretty quickly so you won't have memory issues.\r\n\r\nNote that datasets loaded from disk (memory mapped) are not load... | 2023-03-01T12:58:18 | 2023-03-02T13:30:41 | 2023-03-02T03:47:00 | NONE | null | ### Motivation

When I create an empty `Dataset` and keep appending new rows to it, I found that each call creates a new dataset. This looks quite memory-consuming. I wonder if there is a more efficient way to do this.

```python

from datasets import Dataset

ds = Dataset.from_list([])

ds.add_item({'a': [1, 2, 3], 'b': 4})

print(ds)

>>> Dataset({

>>> features: [],

>>> num_rows: 0

>>> })

ds = ds.add_item({'a': [1, 2, 3], 'b': 4})

print(ds)

>>> Dataset({

>>> features: ['a', 'b'],

>>> num_rows: 1

>>> })

```

### Feature request

Add in-place dataset update functions that modify the existing `Dataset` without creating a new copy. The interface should follow the PyTorch convention, where the in-place version of `function` is named `function_`. For example, the in-place version of `add_item`, i.e. `add_item_`, would update the `Dataset` immediately.

```python

from datasets import Dataset

ds = Dataset.from_list([])

ds.add_item({'a': [1, 2, 3], 'b': 4})

print(ds)

>>> Dataset({

>>> features: [],

>>> num_rows: 0

>>> })

ds.add_item_({'a': [1, 2, 3], 'b': 4})

print(ds)

>>> Dataset({

>>> features: ['a', 'b'],

>>> num_rows: 1

>>> })

```

### Related Functions

* `.map`

* `.filter`

* `.add_item` | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5597/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5597/timeline | null | completed | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/5596 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5596/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5596/comments | https://api.github.com/repos/huggingface/datasets/issues/5596/events | https://github.com/huggingface/datasets/issues/5596 | 1,604,919,993 | I_kwDODunzps5fqSK5 | 5,596 | [TypeError: Couldn't cast array of type] Can only load a subset of the dataset | {

"login": "loubnabnl",

"id": 44069155,

"node_id": "MDQ6VXNlcjQ0MDY5MTU1",

"avatar_url": "https://avatars.githubusercontent.com/u/44069155?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/loubnabnl",

"html_url": "https://github.com/loubnabnl",

"followers_url": "https://api.github.com/users/loubnabnl/followers",

"following_url": "https://api.github.com/users/loubnabnl/following{/other_user}",

"gists_url": "https://api.github.com/users/loubnabnl/gists{/gist_id}",

"starred_url": "https://api.github.com/users/loubnabnl/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/loubnabnl/subscriptions",

"organizations_url": "https://api.github.com/users/loubnabnl/orgs",

"repos_url": "https://api.github.com/users/loubnabnl/repos",

"events_url": "https://api.github.com/users/loubnabnl/events{/privacy}",

"received_events_url": "https://api.github.com/users/loubnabnl/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"Apparently some JSON objects have a `\"labels\"` field. Since this field is not present in every object, you must specify all the fields types in the README.md\r\n\r\nEDIT: actually specifying the feature types doesn’t solve the issue, it raises an error because “labels” is missing in the data",

"We've updated t... | 2023-03-01T12:53:08 | 2023-03-02T11:12:11 | 2023-03-02T11:12:11 | NONE | null | ### Describe the bug

I'm trying to load this [dataset](https://huggingface.co/datasets/bigcode-data/the-stack-gh-issues) which consists of jsonl files and I get the following error:

```

casted_values = _c(array.values, feature[0])

File "/opt/conda/lib/python3.7/site-packages/datasets/table.py", line 1839, in wrapper

return func(array, *args, **kwargs)

File "/opt/conda/lib/python3.7/site-packages/datasets/table.py", line 2132, in cast_array_to_feature

raise TypeError(f"Couldn't cast array of type\n{array.type}\nto\n{feature}")

TypeError: Couldn't cast array of type

struct<type: string, action: string, datetime: timestamp[s], author: string, title: string, description: string, comment_id: int64, comment: string, labels: list<item: string>>

to

{'type': Value(dtype='string', id=None), 'action': Value(dtype='string', id=None), 'datetime': Value(dtype='timestamp[s]', id=None), 'author': Value(dtype='string', id=None), 'title': Value(dtype='string', id=None), 'description': Value(dtype='string', id=None), 'comment_id': Value(dtype='int64', id=None), 'comment': Value(dtype='string', id=None)}

```

But I can successfully load a subset of the dataset. For example, this works:

```python

ds = load_dataset('bigcode-data/the-stack-gh-issues', split="train", data_files=[f"data/data-{x}.jsonl" for x in range(10)])

```

and `ds.features` returns:

```

{'repo': Value(dtype='string', id=None),

'org': Value(dtype='string', id=None),

'issue_id': Value(dtype='int64', id=None),

'issue_number': Value(dtype='int64', id=None),

'pull_request': {'user_login': Value(dtype='string', id=None),

'repo': Value(dtype='string', id=None),

'number': Value(dtype='int64', id=None)},

'events': [{'type': Value(dtype='string', id=None),

'action': Value(dtype='string', id=None),

'datetime': Value(dtype='timestamp[s]', id=None),

'author': Value(dtype='string', id=None),

'title': Value(dtype='string', id=None),

'description': Value(dtype='string', id=None),

'comment_id': Value(dtype='int64', id=None),

'comment': Value(dtype='string', id=None)}]}

```

So I'm not sure if there's an issue with just some of the files. Grateful if you have any suggestions to fix the issue.

Side note:

I saw this related [issue](https://github.com/huggingface/datasets/issues/3637) and tried to write a loading script to have `events` as a `Sequence` and not `list` [here](https://huggingface.co/datasets/bigcode-data/the-stack-gh-issues/blob/main/loading.py) (the script was renamed). It worked with a subset locally, but it fails for the remote dataset because it can't find https://huggingface.co/datasets/bigcode-data/the-stack-gh-issues/resolve/main/data.

### Steps to reproduce the bug

```python

from datasets import load_dataset

ds = load_dataset('bigcode-data/the-stack-gh-issues', split="train")

```

### Expected behavior

Load the entire dataset successfully.

### Environment info

- `datasets` version: 2.10.1

- Platform: Linux-4.19.0-23-cloud-amd64-x86_64-with-debian-10.13

- Python version: 3.7.12

- PyArrow version: 9.0.0

- Pandas version: 1.3.4 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5596/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5596/timeline | null | completed | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/5595 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5595/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5595/comments | https://api.github.com/repos/huggingface/datasets/issues/5595/events | https://github.com/huggingface/datasets/pull/5595 | 1,604,070,629 | PR_kwDODunzps5K--V9 | 5,595 | Unpins sqlAlchemy | {

"login": "lazarust",

"id": 46943923,

"node_id": "MDQ6VXNlcjQ2OTQzOTIz",

"avatar_url": "https://avatars.githubusercontent.com/u/46943923?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lazarust",

"html_url": "https://github.com/lazarust",

"followers_url": "https://api.github.com/users/lazarust/followers",

"following_url": "https://api.github.com/users/lazarust/following{/other_user}",

"gists_url": "https://api.github.com/users/lazarust/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lazarust/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lazarust/subscriptions",

"organizations_url": "https://api.github.com/users/lazarust/orgs",

"repos_url": "https://api.github.com/users/lazarust/repos",

"events_url": "https://api.github.com/users/lazarust/events{/privacy}",

"received_events_url": "https://api.github.com/users/lazarust/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_5595). All of your documentation changes will be reflected on that endpoint."

] | 2023-03-01T01:33:45 | 2023-03-03T16:44:09 | null | NONE | null | Closes #5477 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5595/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5595/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5595",

"html_url": "https://github.com/huggingface/datasets/pull/5595",

"diff_url": "https://github.com/huggingface/datasets/pull/5595.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5595.patch",

"merged_at": null

} | true |

https://api.github.com/repos/huggingface/datasets/issues/5594 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5594/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5594/comments | https://api.github.com/repos/huggingface/datasets/issues/5594/events | https://github.com/huggingface/datasets/issues/5594 | 1,603,980,995 | I_kwDODunzps5fms7D | 5,594 | Error while downloading the xtreme udpos dataset | {

"login": "simran-khanuja",

"id": 24687672,

"node_id": "MDQ6VXNlcjI0Njg3Njcy",

"avatar_url": "https://avatars.githubusercontent.com/u/24687672?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/simran-khanuja",

"html_url": "https://github.com/simran-khanuja",

"followers_url": "https://api.github.com/users/simran-khanuja/followers",

"following_url": "https://api.github.com/users/simran-khanuja/following{/other_user}",

"gists_url": "https://api.github.com/users/simran-khanuja/gists{/gist_id}",

"starred_url": "https://api.github.com/users/simran-khanuja/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/simran-khanuja/subscriptions",

"organizations_url": "https://api.github.com/users/simran-khanuja/orgs",

"repos_url": "https://api.github.com/users/simran-khanuja/repos",

"events_url": "https://api.github.com/users/simran-khanuja/events{/privacy}",

"received_events_url": "https://api.github.com/users/simran-khanuja/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"Hi! I cannot reproduce this error on my machine.\r\n\r\nThe raised error could mean that one of the downloaded files is corrupted. To verify this is not the case, you can run `load_dataset` as follows:\r\n```python\r\ntrain_dataset = load_dataset('xtreme', 'udpos.English', split=\"train\", cache_dir=args.cache_dir... | 2023-02-28T23:40:53 | 2023-03-01T22:07:07 | null | NONE | null | ### Describe the bug

Hi,

I am facing an error while downloading the xtreme udpos dataset using load_dataset. I have datasets 2.10.1 installed

```

Downloading and preparing dataset xtreme/udpos.Arabic to /compute/tir-1-18/skhanuja/multilingual_ft/cache/data/xtreme/udpos.Arabic/1.0.0/29f5d57a48779f37ccb75cb8708d1095448aad0713b425bdc1ff9a4a128a56e4...

Downloading data: 16%|██████████████▏ | 56.9M/355M [03:11<16:43, 297kB/s]

Generating train split: 0%| | 0/6075 [00:00<?, ? examples/s]Traceback (most recent call last):

File "/home/skhanuja/miniconda3/envs/multilingual_ft/lib/python3.10/site-packages/datasets/builder.py", line 1608, in _prepare_split_single

for key, record in generator:

File "/home/skhanuja/.cache/huggingface/modules/datasets_modules/datasets/xtreme/29f5d57a48779f37ccb75cb8708d1095448aad0713b425bdc1ff9a4a128a56e4/xtreme.py", line 732, in _generate_examples

yield from UdposParser.generate_examples(config=self.config, filepath=filepath, **kwargs)

File "/home/skhanuja/.cache/huggingface/modules/datasets_modules/datasets/xtreme/29f5d57a48779f37ccb75cb8708d1095448aad0713b425bdc1ff9a4a128a56e4/xtreme.py", line 921, in generate_examples

for path, file in filepath:

File "/home/skhanuja/miniconda3/envs/multilingual_ft/lib/python3.10/site-packages/datasets/download/download_manager.py", line 158, in __iter__

yield from self.generator(*self.args, **self.kwargs)

File "/home/skhanuja/miniconda3/envs/multilingual_ft/lib/python3.10/site-packages/datasets/download/download_manager.py", line 211, in _iter_from_path

yield from cls._iter_tar(f)

File "/home/skhanuja/miniconda3/envs/multilingual_ft/lib/python3.10/site-packages/datasets/download/download_manager.py", line 167, in _iter_tar

for tarinfo in stream:

File "/home/skhanuja/miniconda3/envs/multilingual_ft/lib/python3.10/tarfile.py", line 2475, in __iter__

tarinfo = self.next()

File "/home/skhanuja/miniconda3/envs/multilingual_ft/lib/python3.10/tarfile.py", line 2344, in next

raise ReadError("unexpected end of data")

tarfile.ReadError: unexpected end of data

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/home/skhanuja/Optimal-Resource-Allocation-for-Multilingual-Finetuning/src/train_al.py", line 855, in <module>

main()

File "/home/skhanuja/Optimal-Resource-Allocation-for-Multilingual-Finetuning/src/train_al.py", line 487, in main

train_dataset = load_dataset(dataset_name, source_language, split="train", cache_dir=args.cache_dir, download_mode="force_redownload")

File "/home/skhanuja/miniconda3/envs/multilingual_ft/lib/python3.10/site-packages/datasets/load.py", line 1782, in load_dataset

builder_instance.download_and_prepare(

File "/home/skhanuja/miniconda3/envs/multilingual_ft/lib/python3.10/site-packages/datasets/builder.py", line 872, in download_and_prepare

self._download_and_prepare(

File "/home/skhanuja/miniconda3/envs/multilingual_ft/lib/python3.10/site-packages/datasets/builder.py", line 1649, in _download_and_prepare

super()._download_and_prepare(

File "/home/skhanuja/miniconda3/envs/multilingual_ft/lib/python3.10/site-packages/datasets/builder.py", line 967, in _download_and_prepare

self._prepare_split(split_generator, **prepare_split_kwargs)

File "/home/skhanuja/miniconda3/envs/multilingual_ft/lib/python3.10/site-packages/datasets/builder.py", line 1488, in _prepare_split

for job_id, done, content in self._prepare_split_single(

File "/home/skhanuja/miniconda3/envs/multilingual_ft/lib/python3.10/site-packages/datasets/builder.py", line 1644, in _prepare_split_single

raise DatasetGenerationError("An error occurred while generating the dataset") from e

datasets.builder.DatasetGenerationError: An error occurred while generating the dataset

```

### Steps to reproduce the bug

```

train_dataset = load_dataset('xtreme', 'udpos.English', split="train", cache_dir=args.cache_dir, download_mode="force_redownload")

```

### Expected behavior

Download the udpos dataset

### Environment info

- `datasets` version: 2.10.1

- Platform: Linux-3.10.0-957.1.3.el7.x86_64-x86_64-with-glibc2.17

- Python version: 3.10.8

- PyArrow version: 10.0.1

- Pandas version: 1.5.2 | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5594/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5594/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/5592 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5592/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5592/comments | https://api.github.com/repos/huggingface/datasets/issues/5592/events | https://github.com/huggingface/datasets/pull/5592 | 1,603,619,124 | PR_kwDODunzps5K9dWr | 5,592 | Fix docstring example | {

"login": "stevhliu",

"id": 59462357,

"node_id": "MDQ6VXNlcjU5NDYyMzU3",

"avatar_url": "https://avatars.githubusercontent.com/u/59462357?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/stevhliu",

"html_url": "https://github.com/stevhliu",

"followers_url": "https://api.github.com/users/stevhliu/followers",

"following_url": "https://api.github.com/users/stevhliu/following{/other_user}",

"gists_url": "https://api.github.com/users/stevhliu/gists{/gist_id}",

"starred_url": "https://api.github.com/users/stevhliu/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/stevhliu/subscriptions",

"organizations_url": "https://api.github.com/users/stevhliu/orgs",

"repos_url": "https://api.github.com/users/stevhliu/repos",

"events_url": "https://api.github.com/users/stevhliu/events{/privacy}",

"received_events_url": "https://api.github.com/users/stevhliu/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | read_batch_formatted_as_numpy after write_array2d | rea... | 2023-02-28T18:42:37 | 2023-02-28T19:26:33 | 2023-02-28T19:19:15 | MEMBER | null | Fixes #5581 to use the correct output for the `set_format` method. | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5592/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5592/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5592",

"html_url": "https://github.com/huggingface/datasets/pull/5592",

"diff_url": "https://github.com/huggingface/datasets/pull/5592.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5592.patch",

"merged_at": "2023-02-28T19:19:15"

} | true |

https://api.github.com/repos/huggingface/datasets/issues/5591 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5591/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5591/comments | https://api.github.com/repos/huggingface/datasets/issues/5591/events | https://github.com/huggingface/datasets/pull/5591 | 1,603,571,407 | PR_kwDODunzps5K9S79 | 5,591 | set dev version | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_5591). All of your documentation changes will be reflected on that endpoint.",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchma... | 2023-02-28T18:09:05 | 2023-02-28T18:16:31 | 2023-02-28T18:09:15 | MEMBER | null | null | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5591/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5591/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5591",

"html_url": "https://github.com/huggingface/datasets/pull/5591",

"diff_url": "https://github.com/huggingface/datasets/pull/5591.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5591.patch",

"merged_at": "2023-02-28T18:09:15"

} | true |

https://api.github.com/repos/huggingface/datasets/issues/5590 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5590/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5590/comments | https://api.github.com/repos/huggingface/datasets/issues/5590/events | https://github.com/huggingface/datasets/pull/5590 | 1,603,549,504 | PR_kwDODunzps5K9N_H | 5,590 | Release: 2.10.1 | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | closed | false | null | [] | null | [

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | read_batch_formatted_as_numpy after write_array2d | read_batch_formatted_as_numpy after write_flattened_sequence | read_batch_formatted_a... | 2023-02-28T17:58:11 | 2023-02-28T18:16:27 | 2023-02-28T18:06:08 | MEMBER | null | null | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5590/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5590/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5590",

"html_url": "https://github.com/huggingface/datasets/pull/5590",

"diff_url": "https://github.com/huggingface/datasets/pull/5590.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5590.patch",

"merged_at": "2023-02-28T18:06:08"

} | true |

https://api.github.com/repos/huggingface/datasets/issues/5589 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5589/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5589/comments | https://api.github.com/repos/huggingface/datasets/issues/5589/events | https://github.com/huggingface/datasets/pull/5589 | 1,603,535,704 | PR_kwDODunzps5K9K1i | 5,589 | Revert "pass the dataset features to the IterableDataset.from_generator" | {

"login": "lhoestq",

"id": 42851186,

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/lhoestq",

"html_url": "https://github.com/lhoestq",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"type": "User",

"site_admin": false

} | [] | open | false | null | [] | null | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_5589). All of your documentation changes will be reflected on that endpoint.",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchma... | 2023-02-28T17:52:04 | 2023-03-03T16:52:24 | null | MEMBER | null | This reverts commit b91070b9c09673e2e148eec458036ab6a62ac042 (temporarily)

It hurts iterable dataset performance a lot (e.g. x4 slower because it encodes+decodes images unnecessarily). I think we need to fix this before re-adding it

cc @mariosasko @Hubert-Bonisseur | {

"url": "https://api.github.com/repos/huggingface/datasets/issues/5589/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

} | https://api.github.com/repos/huggingface/datasets/issues/5589/timeline | null | null | false | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5589",

"html_url": "https://github.com/huggingface/datasets/pull/5589",

"diff_url": "https://github.com/huggingface/datasets/pull/5589.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/5589.patch",

"merged_at": null

} | true |
