Sophia Vincoff

commited on

Commit

·

bae913a

1

Parent(s):

8d9d9da

caid benchmark

Browse filesThis view is limited to 50 files because it contains too many changes.

See raw diff

- fuson_plm/benchmarking/caid/README.md +294 -0

- fuson_plm/benchmarking/caid/__init__.py +0 -0

- fuson_plm/benchmarking/caid/analyze_fusion_preds.py +158 -0

- fuson_plm/benchmarking/caid/clean.py +671 -0

- fuson_plm/benchmarking/caid/color_disordered_residues.ipynb +849 -0

- fuson_plm/benchmarking/caid/config.py +14 -0

- fuson_plm/benchmarking/caid/disorder_coloring_data/normalized_disorder_propensities_source_data.csv +3 -0

- fuson_plm/benchmarking/caid/model.py +26 -0

- fuson_plm/benchmarking/caid/plot.py +1030 -0

- fuson_plm/benchmarking/caid/process_fusion_structures.py +799 -0

- fuson_plm/benchmarking/caid/processed_data/CAID-2_Disorder_NOX_Processed.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/IDP-CRF_Training_Dataset.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/caid2_competition_results/AlphaFold-disorder_CAID-2_Disorder_NOX.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/caid2_competition_results/AlphaFold-rsa_CAID-2_Disorder_NOX.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/caid2_competition_results/DISOPRED3-diso_CAID-2_Disorder_NOX.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/caid2_competition_results/DeepIDP-2L_CAID-2_Disorder_NOX.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/caid2_competition_results/DisoPred_CAID-2_Disorder_NOX.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/caid2_competition_results/Dispredict3_CAID-2_Disorder_NOX.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/caid2_competition_results/ESpritz-D_CAID-2_Disorder_NOX.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/caid2_competition_results/IDP-Fusion_CAID-2_Disorder_NOX.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/caid2_competition_results/IUPred3_CAID-2_Disorder_NOX.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/caid2_competition_results/disomine_CAID-2_Disorder_NOX.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/caid2_competition_results/flDPlr2_CAID-2_Disorder_NOX.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/caid2_competition_results/flDPnn2_CAID-2_Disorder_NOX.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/caid2_competition_results/flDPnn_CAID-2_Disorder_NOX.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/caid2_competition_results/flDPtr_CAID-2_Disorder_NOX.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/figures/fusion_disorder/plddt_sequence_EML4-ALK.png +0 -0

- fuson_plm/benchmarking/caid/processed_data/figures/fusion_disorder/plddt_sequence_EML4::ALK_source_data.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/figures/fusion_disorder/plddt_sequence_EWSR1-FLI1.png +0 -0

- fuson_plm/benchmarking/caid/processed_data/figures/fusion_disorder/plddt_sequence_EWSR1::FLI1_source_data.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/figures/fusion_disorder/plddt_sequence_PAX3-FOXO1.png +0 -0

- fuson_plm/benchmarking/caid/processed_data/figures/fusion_disorder/plddt_sequence_PAX3::FOXO1_source_data.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/figures/fusion_disorder/plddt_sequence_SS18-SSX1.png +0 -0

- fuson_plm/benchmarking/caid/processed_data/figures/fusion_disorder/plddt_sequence_SS18::SSX1_source_data.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/figures/histograms/disorder_nox_histogram.png +0 -0

- fuson_plm/benchmarking/caid/processed_data/figures/histograms/disorder_nox_histogram_source_data.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/figures/histograms/fusions_histogram.png +0 -0

- fuson_plm/benchmarking/caid/processed_data/figures/histograms/fusions_histogram_source_data.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/figures/histograms/heads_histogram.png +0 -0

- fuson_plm/benchmarking/caid/processed_data/figures/histograms/heads_histogram_source_data.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/figures/histograms/tails_histogram.png +0 -0

- fuson_plm/benchmarking/caid/processed_data/figures/histograms/tails_histogram_source_data.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/flDPnn_Training_Dataset.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/flDPnn_Validation_Dataset.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/fusionpdb/FusionPDB_level2-3_cleaned_FusionGID_info.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/fusionpdb/FusionPDB_level2-3_cleaned_structure_info.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/fusionpdb/fusion_heads_and_tails.csv +3 -0

- fuson_plm/benchmarking/caid/processed_data/fusionpdb/heads_tails_structural_data.csv +3 -0

- fuson_plm/benchmarking/caid/raw_data/caid2_competition_results/AlphaFold-disorder.caid +0 -0

- fuson_plm/benchmarking/caid/raw_data/caid2_competition_results/AlphaFold-rsa.caid +0 -0

fuson_plm/benchmarking/caid/README.md

ADDED

|

@@ -0,0 +1,294 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

## CAID Benchmark

|

| 2 |

+

|

| 3 |

+

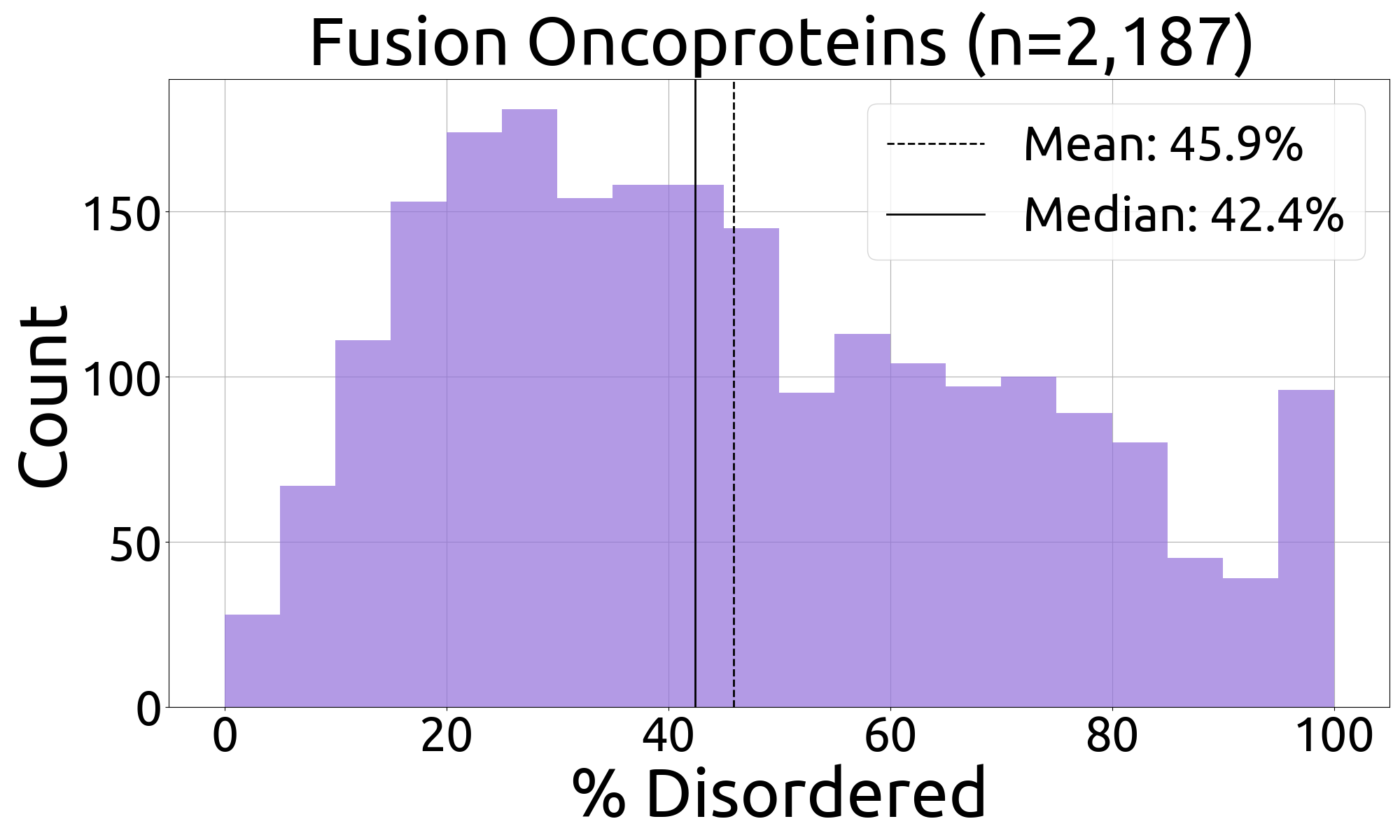

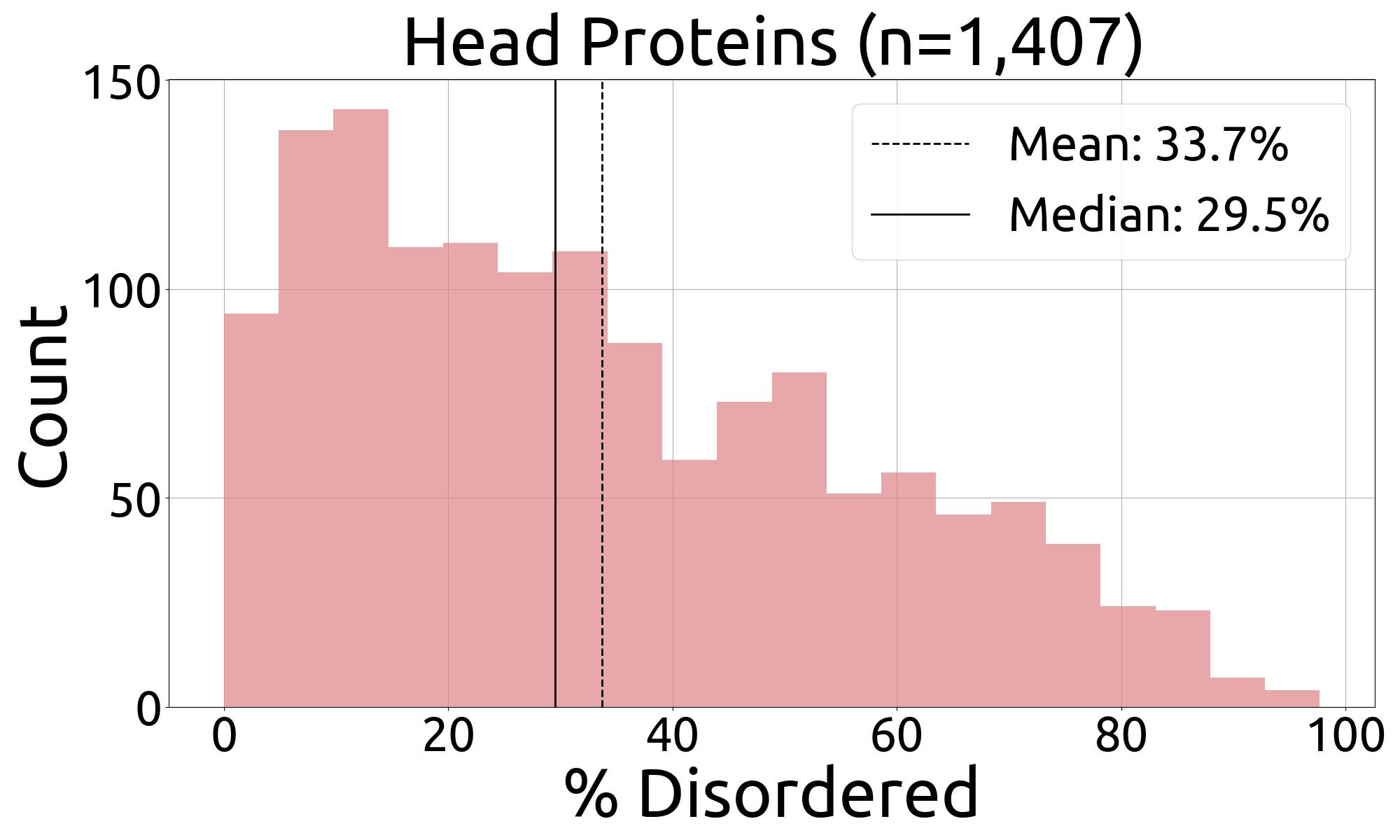

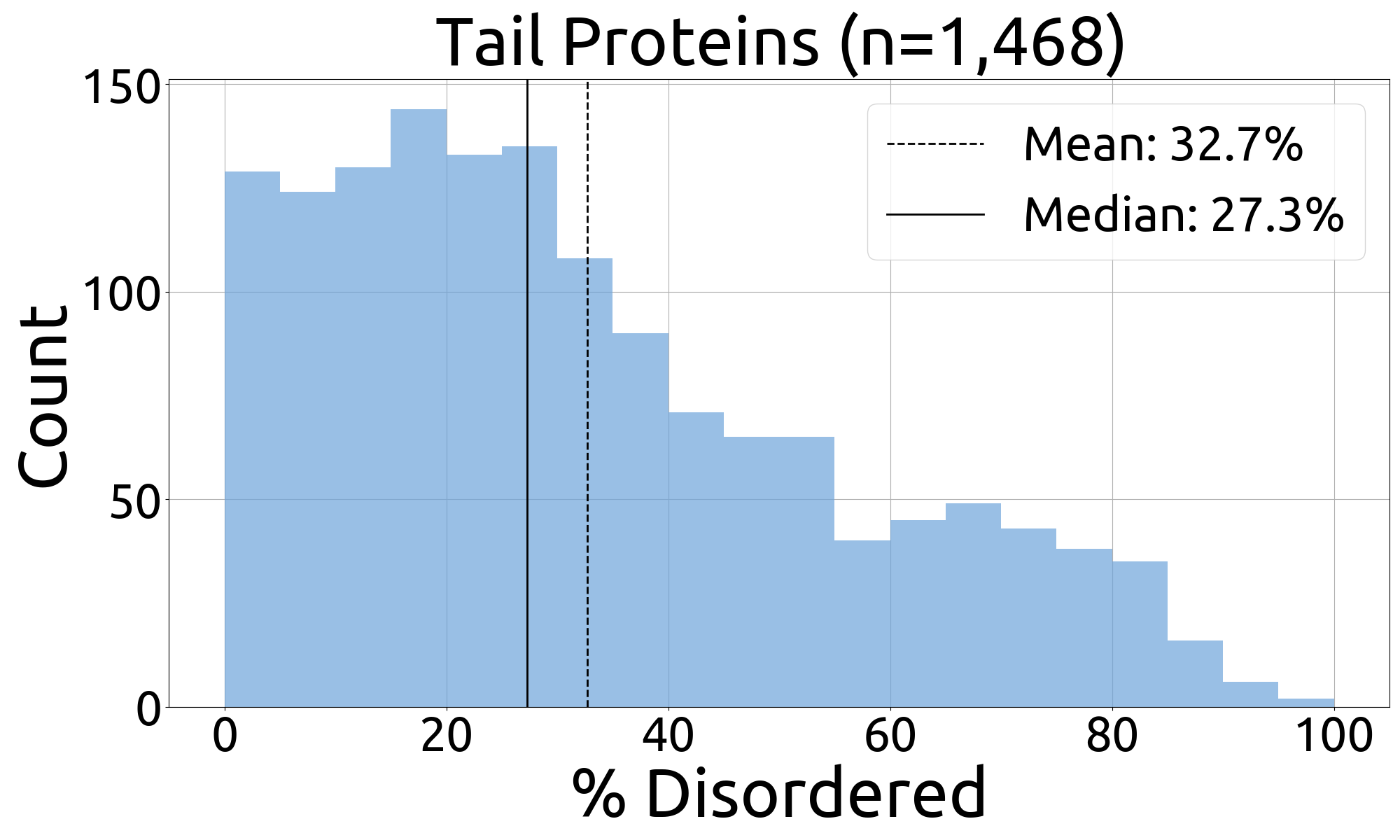

This folder contains all the data and code needed to perform the **CAID benchmark**, where FusOn-pLM-Diso (a classifier built on FusOn-pLM embeddings) is used to predict per-residue disorder propensities (Figure 4C-F) and plot disorder properties (Figure 1C-1D, S1)

|

| 4 |

+

|

| 5 |

+

### TL;DR

|

| 6 |

+

The order in which to run the scripts:

|

| 7 |

+

|

| 8 |

+

```

|

| 9 |

+

python scrape_fusionpdb.py # pull FusionPDB structures

|

| 10 |

+

python process_fusion_structures.py # process FusionPDB structures, and head/tail protein structures

|

| 11 |

+

python clean.py # clean disorder data and structure data. Assemble train/test/benchmark splits

|

| 12 |

+

python train.py # train models

|

| 13 |

+

python analyze_fusion_preds.py # make box chart and line plot of model performance on fusion proteins

|

| 14 |

+

python plot.py # plot AUROC of model performance, and additional figures based on disorder data

|

| 15 |

+

```

|

| 16 |

+

|

| 17 |

+

Additional notes:

|

| 18 |

+

|

| 19 |

+

* `color_disorder_residues.ipynb` is used to plot fusion structures with pLDDT or disorder prediction color overlays.

|

| 20 |

+

* We recommend using `nohup` to run longer scripts like `scrape_fusionpdb.py`, `process_fusion_structures.py`, `clean.py`, and `train.py`

|

| 21 |

+

|

| 22 |

+

### Downloading raw disorder data

|

| 23 |

+

Per-residue disorder predictions were used to train and test FusOn-pLM-Diso.

|

| 24 |

+

|

| 25 |

+

1. **flDPnn** ([Hu et al. 2021](https://doi.org/10.1038/s41467-021-24773-7))

|

| 26 |

+

1. At this [link](http://biomine.cs.vcu.edu/servers/flDPnn/?fbclid=IwZXh0bgNhZW0CMTEAAR0KO5CkNdkGC9e5O32S0QoG3BWOw6_egbnioXQNBSv3UC-m_b_dxh70Nnk_aem_z285WFCHdBLw3vOj7LL37A), scroll down to the bottom to find links to the [training](http://biomine.cs.vcu.edu/servers/flDPnn/data/flDPnn_Training_Annotation.txt) and [validation](http://biomine.cs.vcu.edu/servers/flDPnn/data/flDPnn_Validation_Annotation.txt) sets.

|

| 27 |

+

2. **IDP-CRF** ([Liu et al. 2018](https://doi.org/10.3390/ijms19092483))

|

| 28 |

+

1. Download zipped data from [this link](https://www.ncbi.nlm.nih.gov/pmc/articles/PMC6164615/bin/ijms-19-02483-s001.zip), remove header and footer, and save as a FASTA file

|

| 29 |

+

3. **CAID2-Disorder-NOX** ([Del Conte et al. 2023](https://doi.org/10.1002/prot.26582))

|

| 30 |

+

1. Go to [CAID Round 2 Results](https://caid.idpcentral.org/challenge/results?fbclid=IwZXh0bgNhZW0CMTEAAR12dKaA0KywcT71FnyXIrrNS91pwGREsLiq5c2RmfdYl7L0VdUNG7jYai8_aem_tW6Wm9_11ZuiI_GKzbNZjA). Scroll to "Here you can download the references used in the CAID-2 challenge" and you'll find the following links.

|

| 31 |

+

1. [disorder_nox.fasta](https://caid.idpcentral.org/assets/sections/challenge/static/references/2/disorder_nox.fasta)

|

| 32 |

+

2. [predictions](https://caid.idpcentral.org/assets/sections/challenge/static/predictions/2/predictions.zip) made by all CAID2 participants; AUROC curves can be reconstructed from these

|

| 33 |

+

|

| 34 |

+

Raw disorder data are stored in `caid/raw_data`

|

| 35 |

+

```

|

| 36 |

+

benchmarking/

|

| 37 |

+

└── caid/

|

| 38 |

+

└── raw_data/

|

| 39 |

+

└── caid2_competition_results/...

|

| 40 |

+

└── caid2_train_and_test_data/

|

| 41 |

+

├── CAID-2_Disorder_NOX_Testing_Sequences.fasta

|

| 42 |

+

├── flDPnn_Training_Dataset.txt

|

| 43 |

+

├── flDPnn_Validation_Annotation.txt

|

| 44 |

+

├── IDP-CRF_Training_Dataset.txt

|

| 45 |

+

```

|

| 46 |

+

- 📁 **`raw_data/caid2_competition_results/`**: folder containing raw predictions from CAID2 competitors, downloaded directly from the CAID2 website. Models: AlphaFold-disorder, AlphaFOld-rsa, DeepIDP-2L, disomine, DisoPred, DISOPRED3-diso, Dispredict3, ESpritz-D, flDPlr2, flDPnn, flDPnn2, flDPtr, IDP-Fusion, IUPred3.

|

| 47 |

+

- **`raw_data/caid2_train_and_test_data/CAID-2_Disorder_NOX_Testing_Sequences.fasta`**: Disorder-NOX dataset (used as the test set in this benchmark)

|

| 48 |

+

- **`raw_data/caid2_train_and_test_data/flDPnn_Training_Dataset.txt`**: training set for flDPnn

|

| 49 |

+

- **`raw_data/caid2_train_and_test_dataflDPnn_Validation_Dataset.txt`**: validation set for flDPnn

|

| 50 |

+

- **`raw_data/IDP-CRF_Training_Dataset.txt`**: training set for IDP-CRF

|

| 51 |

+

|

| 52 |

+

### Processing disorder data

|

| 53 |

+

|

| 54 |

+

```

|

| 55 |

+

benchmarking/

|

| 56 |

+

└── caid/

|

| 57 |

+

└── processed_data/

|

| 58 |

+

└── caid2_competition_results/...

|

| 59 |

+

├── CAID-2_Disorder_NOX_Processed.csv

|

| 60 |

+

├── flDPnn_Training_Dataset.csv

|

| 61 |

+

├── flDPnn_Validation_Dataset.csv

|

| 62 |

+

├── IDP-CRF_Training_Dataset.csv

|

| 63 |

+

└── splits/

|

| 64 |

+

├── splits.csv

|

| 65 |

+

├── train_df.csv

|

| 66 |

+

├── test_df.csv

|

| 67 |

+

├── fusion_bench_df.csv

|

| 68 |

+

```

|

| 69 |

+

|

| 70 |

+

The **`clean.py`** processes and combines the raw data files, generating the following files in 📁`processed_data/`:

|

| 71 |

+

- 📁 **`caid2_competition_results/`**: a folder with table versions of all the files in 📁 `raw_data/caid2_competition_results/`

|

| 72 |

+

- **`CAID-2_Disorder_NOX_Processed.csv`**: a table of test data, made by parsing `raw_data/caid2_train_and_test_data/CAID-2_Disorder_NOX_Testing_Sequences.fasta`

|

| 73 |

+

- **`flDPnn_Training_Dataset.csv`**: a table of flDPnn's training data, made by parsing `raw_data/caid2_train_and_test_data/flDPnn_Training_Dataset.txt`

|

| 74 |

+

- **`flDPnn_Validation_Dataset.csv`**: a table of flDPnn's validation data, made by parsing `raw_data/caid2_train_and_test_data/flDPnn_Validation_Dataset.txt`

|

| 75 |

+

- **`IDP-CRF_Training_Dataset.csv`**: a table of IDP-CRF's training data, made by parsing `raw_data/caid2_train_and_test_data/CRF_Training_Dataset.txt`

|

| 76 |

+

|

| 77 |

+

`clean.py` also generates **the final train-test splits and fusion oncoprotein benchmarking file used to train and evaluate the disorder predictors.** These are stored in 📁`splits/`

|

| 78 |

+

- **`splits.csv`**: sequences, IDs, split (either "Train", "Test", or "Fusion_Benchmark"), andpper-residue disorder labels based on AlphaFold-pLDDT (1 (disordered) if pLDDT< 68.8, 0 (ordered) if >=68.8)

|

| 79 |

+

- **`train_df.csv`**: just the Train set portion of `splits.csv`

|

| 80 |

+

- **`test_df.csv`**: just the Test set portion of `splits.csv`

|

| 81 |

+

- **`fusion_bench_df.csv`**: just the Fusion_Benchmark portion of `splits.csv`. Includes 524 fusion oncoproteins from the FusOn-pLM test set whose structures were collected from FusionPDB (see "Downloading and Processing FusionPDB data

|

| 82 |

+

|

| 83 |

+

|

| 84 |

+

### Downloading and Processing FusionPDB data

|

| 85 |

+

The structures of fusion oncoproteins from the FusionPDB database were used to evaluate FusOn-pLM-Diso's performance on fusion oncoproteins. This data was collected by running `scrape_fusionpdb.py`, followed by `process_fusion_structures.py`. These scripts populated the `raw_data` and `processed_data` files simultaneously.

|

| 86 |

+

|

| 87 |

+

Listed below are all the relevant files:

|

| 88 |

+

|

| 89 |

+

```

|

| 90 |

+

benchmarking/

|

| 91 |

+

└── caid/

|

| 92 |

+

└── raw_data/

|

| 93 |

+

└── fusionpdb/

|

| 94 |

+

└── structures/... # created by scrape_fusionpdb.py (folder not included in repo)

|

| 95 |

+

└── head_tail_af2db_structures/... # created by process_fusion_structures.py (folder not included in repo)

|

| 96 |

+

├── FusionPDB_level2_curated_09_05_2024.csv

|

| 97 |

+

├── FusionPDB_level2_fusion_structure_links.csv

|

| 98 |

+

├── FusionPDB_level3_curated_09_05_2024.csv

|

| 99 |

+

├── FusionPDB_level3_fusion_structure_links.csv

|

| 100 |

+

├── fusionpdb_structureless_ids.txt

|

| 101 |

+

├── hgene_tgene_uniprot_idmap_07_10_2024.txt

|

| 102 |

+

├── level2_head_tail_info.txt

|

| 103 |

+

├── level3_head_tail_info.txt

|

| 104 |

+

├── not_in_afdb_idmap.txt

|

| 105 |

+

└── processed_data/

|

| 106 |

+

└── fusion_pdb/

|

| 107 |

+

└── intermediates/

|

| 108 |

+

├── giant_level_2-3_fusion_protein_head_tail_info.csv

|

| 109 |

+

├── giant_level2-3_fusion_protein_structure_links.csv

|

| 110 |

+

├── giant_level2-3_fusion_protein_structures_processed.csv

|

| 111 |

+

├── uniprotids_not_in_afdb.txt

|

| 112 |

+

├── unmapped_parts.tt

|

| 113 |

+

├── fusion_heads_and_tails.csv

|

| 114 |

+

├── FusionPDB_level2-3_cleaned_FusionGID_info.csv

|

| 115 |

+

├── FusionPDB_level2-3_cleaned_structure_info.csv

|

| 116 |

+

├── heads_tails_structural_data.csv

|

| 117 |

+

```

|

| 118 |

+

|

| 119 |

+

#### ⚙️ Pipeline

|

| 120 |

+

|

| 121 |

+

Here we describe what each script does and which files each script creates.

|

| 122 |

+

1. 🐍 **`scrape_fusionpdb.py`**

|

| 123 |

+

1. Scrapes metadata for FusionPDB Level 2 and Level 3

|

| 124 |

+

1. Pulls the online tables for [Level 2](https://compbio.uth.edu/FusionPDB/gene_search_result_0.cgi?type=chooseLevel&chooseLevel=level2) and [Level 3](https://compbio.uth.edu/FusionPDB/gene_search_result_0.cgi?type=chooseLevel&chooseLevel=level3), saving results to `raw_data/FusionPDB_level2_curated_09_05_2024.csv` and `raw_data/FusionPDB_level3_curated_09_05_2024.csv` respectively.

|

| 125 |

+

2. Retrieves structure links

|

| 126 |

+

1. Using the tables collected in step (i), visits the page for each fusion oncoprotein (FO) in FusionPDB Level 2 and 3, and downloads all AlphaFold2 structure links for each FO.

|

| 127 |

+

2. Saves results directly to `raw_data/FusionPDB_level2_fusion_structure_links.csv` and `raw_data/FusionPDB_level3_fusion_structure_links.csv`, respectively

|

| 128 |

+

3. Retrieves FO head gene and tail gene info

|

| 129 |

+

1. Using the tables collected in step (i), visits the page for each fusion oncoprotein (FO) in FusionPDB Level 2 and 3 to download head/tail info. Collects HGID and TGID (GeneIDs for head and tail) and UniProt accessions for each.

|

| 130 |

+

2. Saves results directly to `raw_data/level2_head_tail_info.txt` and `raw_data/level3_head_tail_info.txt`, respectively.

|

| 131 |

+

4. Combines Level 2 and 3 head/tail data

|

| 132 |

+

1. Merges `raw_data/level2_head_tail_info.txt` and `raw_data/level3_head_tail_info.txt` into a dataframe.

|

| 133 |

+

2. Saves result at `processed_data/fusionpdb/fusion_heads_and_tails.csv` (columns="FusionGID","HGID","TGID","HGUniProtAcc","TGUniProtAcc")

|

| 134 |

+

5. Combines Level 2 and 3 structure link data

|

| 135 |

+

1. Joins structure link data with metadata for each of levels 2 and 3, then combines the result.

|

| 136 |

+

2. Saves result at `processed_data/fusionpdb/intermediates/giant_level2-3_fusion_protein_structure_links.csv`

|

| 137 |

+

6. Combines structure link data and metadata (result of step (v)) with head and tail data (result of step (iv)), and resolves any missing head/tail UniProt IDs.

|

| 138 |

+

1. Merges the data

|

| 139 |

+

2. Checks how many rows have either missing or wrong UniProt accessions for the head or tail gene, and compiles the gene symbols for online quering in the UniProt ID Mapping tool (`processed_data/fusionpdb/intermediates/unmapped_parts.txt`)

|

| 140 |

+

3. Reads the UniProt ID Mapping result. Combines this data with FusionPDB-scraped data by matching FusionPDB's HGID (GeneID for head) and TGID (GeneID for tail) with the GeneID returned by UniProt.

|

| 141 |

+

4. For any FO where FusionPDB lacked a UniProt ID for the head/tail, this ID is filled in from the UniProt ID Mapping result.

|

| 142 |

+

5. Saves result to `processed_data/fusionpdb/intermediates/giant_level2-3_fusion_protein_head_tail_info.csv`. Columns: "FusionGID","FusionGene","Hgene","Tgene","URL","HGID","TGID","HGUniProtAcc","TGUniProtAcc","HGUniProtAcc_Source","TGUniProtAcc_Source", where the "_Source" columns indicate whether the UniProt ID came from FusionPDB, or from the ID Map.

|

| 143 |

+

7. Downloads AlphaFold2 structures of FOs from FusionPDB.

|

| 144 |

+

1. Using structure links from `processed_data/fusionpdb/intermediates/giant_level2-3_fusion_protein_structure_links.csv` (step (v)), directly downloads `.pdb` and `.cif` files.

|

| 145 |

+

2. Saves results in 📁`raw_data/fusionpdb/structures`

|

| 146 |

+

|

| 147 |

+

<br>

|

| 148 |

+

|

| 149 |

+

2. 🐍 **`process_fusion_structures.py`**

|

| 150 |

+

1. Determines pLDDT(s) for each FO structure.

|

| 151 |

+

1. For each structure in 📁`raw_data/fusionpdb_structures/`, determines amino acid sequence, per-residue pLDDT, and average pLDDT from the AlphaFold2 structure.

|

| 152 |

+

2. Saves results in `processed_data/fusionpdb/intermediates/giant_level2-3_fusion_protein_structures_processed.csv`.

|

| 153 |

+

2. Downloads AlphaFold2 structures for all head and tail proteins

|

| 154 |

+

1. Reads `processed_data/fusionpdb/intermediates/giant_level2-3_fusion_protein_head_tail_info.csv` and collects all unique UniProt IDs for all head/tail proteins.

|

| 155 |

+

2. For each UniProt ID, queries the AlphaFoldDB, downloads the AlphaFold2 structure (if available), and saves it to 📁`raw_data/fusionpdb/head_tail_af2db_structures/`. Saves files converted from PDB to CIF format in `mmcif_converted_files`. Then, extracts the sequence, per-residue pLDDT, and average pLDDT from the file.

|

| 156 |

+

3. Saves any UniProt IDs that did not have structures in the AlphaFoldDB to: `processed_data/fusionpdb/intermediates/uniprotids_not_in_afdb.txt`. Most of these were very long, but the shorter ones were folded and their average pLDDTs were manually inputted. These were put back into the AlphaFold ID map to look for alternative UniProt IDs, and their results are in `not_in_afdb_idmap.txt`.

|

| 157 |

+

4. Saves results to `processed_data/fusionpdb/heads_tails_structural_data.csv`

|

| 158 |

+

3. Cleans the dataase of level 2&3 structural info

|

| 159 |

+

1. Drops rows where no structure was successfully downloaded

|

| 160 |

+

2. Drops rows where the FO sequence from FusionPDB does not match the FO sequence from its own AlphaFold2 structure file

|

| 161 |

+

3. ⭐️Saves **two final, cleaned databases**⭐️:

|

| 162 |

+

1. ⭐️ **`FusionPDB_level2-3_cleaned_FusionGID_info.csv`**: includes ful IDs and structural information for the Hgene and Tgene of each FO. Columns="FusionGID","FusionGene","Hgene","Tgene","URL","HGID","TGID","HGUniProtAcc","TGUniProtAcc","HGUniProtAcc_Source","TGUniProtAcc_Source","HG_pLDDT","HG_AA_pLDDTs","HG_Seq","TG_pLDDT","TG_AA_pLDDTs","TG_Seq".

|

| 163 |

+

2. ⭐️ **`FusionPDB_level2-3_cleaned_structure_info.csv`**: includes full structural information for each FO. Columns= "FusionGID","FusionGene","Fusion_Seq","Fusion_Length","Hgene","Hchr","Hbp","Hstrand","Tgene","Tchr","Tbp","Tstrand","Level","Fusion_Structure_Link","Fusion_Structure_Type","Fusion_pLDDT","Fusion_AA_pLDDTs","Fusion_Seq_Source"

|

| 164 |

+

|

| 165 |

+

|

| 166 |

+

### Training

|

| 167 |

+

|

| 168 |

+

The model is defined in `model.py` and `utils.py`. Training configs can be provided in `config.py`:

|

| 169 |

+

|

| 170 |

+

```

|

| 171 |

+

# Which models to benchmark

|

| 172 |

+

BENCHMARK_FUSONPLM = True

|

| 173 |

+

# FUSONPLM_CKPTS. If you've traiend your own model, this is a dictionary: key = run name, values = epochs

|

| 174 |

+

# If you want to use the trained FusOn-pLM, instead FUSONPLM_CKPTS="FusOn-pLM"

|

| 175 |

+

FUSONPLM_CKPTS= "FusOn-pLM"

|

| 176 |

+

|

| 177 |

+

BENCHMARK_ESM = True

|

| 178 |

+

|

| 179 |

+

# GPU configs

|

| 180 |

+

CUDA_VISIBLE_DEVICES="0"

|

| 181 |

+

|

| 182 |

+

# Overwriting configs

|

| 183 |

+

PERMISSION_TO_OVERWRITE_EMBEDDINGS = False # if False, script will halt if it believes these embeddings have already been made.

|

| 184 |

+

PERMISSION_TO_OVERWRITE_MODELS = False # if False, script will halt if it believes these embeddings have already been made.

|

| 185 |

+

```

|

| 186 |

+

|

| 187 |

+

<br>

|

| 188 |

+

|

| 189 |

+

`train.py` trains the models using embeddings indicated in `config.py`. It also performs a hyperparameter screen. Model raw outputs (probabilities) and performance metrics are saved in `trained_models`. For example, FusOn-pLM-Diso raw outputs (ESM-2-650M-Diso has a folder in the same format, and future trained models will as well):

|

| 190 |

+

|

| 191 |

+

```

|

| 192 |

+

benchmarking/

|

| 193 |

+

└── caid/

|

| 194 |

+

└── trained_models/

|

| 195 |

+

└── esm2_t33_650M_UR50D/best/

|

| 196 |

+

└── fuson_plm/best/

|

| 197 |

+

├── caid_hyperparam_screen_fusion_benchmark_metrics.csv

|

| 198 |

+

├── caid_hyperparam_screen_fusion_benchmark_probs.csv

|

| 199 |

+

├── caid_hyperparam_screen_test_metrics.csv

|

| 200 |

+

├── caid_hyperparam_screen_test_probs.csv

|

| 201 |

+

├── caid_train_losses.csv

|

| 202 |

+

├── params.txt

|

| 203 |

+

```

|

| 204 |

+

|

| 205 |

+

- **`caid_hyperparam_screen_fusion_benchmark_metrics.csv`**: performance metrics (Accuracy, Precision, Recall, F1 Score, AUROC) for the top model on the fusion benchmark set (`splits/fusion_bench_df.csv`)

|

| 206 |

+

- **`caid_hyperparam_screen_fusion_benchmark_probs.csv`**: for the fusion benchmark, raw probabilities of class 1 (disorder), threshold used to assign 0/1 based on maximized F1 score, prediction labels based on probabilities and threshold

|

| 207 |

+

- **`caid_hyperparam_screen_test_metrics.csv`**: same as `caid_hyperparam_screen_fusion_benchmark_metrics.csv`, but for CAID2 Disorder-NOX (`splits/test_df.csv`)

|

| 208 |

+

- **`caid_hyperparam_screen_test_probs.csv`**: same as `caid_hyperparam_screen_fusion_benchmark_probs`, but for CAID2 Disorder-NOX

|

| 209 |

+

- **`caid_train_losses.csv`**: train losses over the 2 training epochs for top-performing model

|

| 210 |

+

- **`params.txt`**: hyperparameters of top performing model

|

| 211 |

+

|

| 212 |

+

The training script also populates the `results` directory. Results from the FusOn-pLM manuscript are found in `results/final`. A few extra data files and plots are added by `analyze_fus`

|

| 213 |

+

|

| 214 |

+

```

|

| 215 |

+

benchmarking/

|

| 216 |

+

└── caid/

|

| 217 |

+

└── results/final

|

| 218 |

+

├── best_caid_model_results.csv

|

| 219 |

+

├── caid_hyperparam_screen_test_metrics.csv

|

| 220 |

+

├── caid_hyperparam_screen_fusion_benchmark_metrics.csv

|

| 221 |

+

├── caid_hyperparam_screen_train_losses.csv

|

| 222 |

+

├── fusion_disorder_boxplots.png

|

| 223 |

+

├── fusion_pred_disorder_r2.png

|

| 224 |

+

├── fusion_disorder_boxplots_source_data.csv

|

| 225 |

+

├── fusion_pred_disorder_r2_source_data.csv

|

| 226 |

+

├── CAID2_FusOn-pLM-Diso_with_ESM_AUROC_curve.png

|

| 227 |

+

├── CAID_fpr_tpr_source_data.csv

|

| 228 |

+

├── CAID_prediction_source_data.csv

|

| 229 |

+

```

|

| 230 |

+

|

| 231 |

+

- **`best_caid_model_results.csv`**: Summary file of hyperparameters, test set statistics, and fusion benchmark statistics for the best model of each type screened (ESM-2-650M, FusOn-pLM)

|

| 232 |

+

- **`caid_hyperparam_screen_fusion_benchmark_metrics.csv`**: Fusion benchmark set statistics for full hyperparameter screen

|

| 233 |

+

- **`caid_hyperparam_screen_fusion_benchmark_metrics.csv`**: Test set statistics for full hyperparameter screen

|

| 234 |

+

- **`caid_hyperparam_screen_train_losses.csv`**: Train losses for full hyperparameter screen

|

| 235 |

+

- 📊 **`fusion_disorder_boxplots.png`**: Fig. 4E, left (data directly used to produce the plot at `fusion_disorder_boxplots_source_data.csv`)

|

| 236 |

+

- 📊 **`fusion_pred_disorder_r2_source_data.csv`**: Fig. 4E, right (data directly used to produce the plot at `fusion_pred_disorder_r2_source_data.csv`)

|

| 237 |

+

- 📊 **`CAID2_FusOn-pLM-Diso_with_ESM_AUROC_curve.png`**: Fig. 4D (probabilities used at `CAID_prediction_source_data.csv`, FPR/TPR relationships directly used to make the plot at `CAID_fpr_tpr_source_data.csv`)

|

| 238 |

+

|

| 239 |

+

To run the training script, use

|

| 240 |

+

|

| 241 |

+

```

|

| 242 |

+

nohup python train.py > train.out 2> train.err &

|

| 243 |

+

```

|

| 244 |

+

|

| 245 |

+

### Plotting

|

| 246 |

+

|

| 247 |

+

The `plot.py` script generates many figures from the paper, alongside the formatted data directly used for plotting.

|

| 248 |

+

|

| 249 |

+

```

|

| 250 |

+

benchmarking/

|

| 251 |

+

└── caid/

|

| 252 |

+

└── results/final/

|

| 253 |

+

├── CAID2_FusOn-pLM-Diso_with_ESM_AUROC_curve.png

|

| 254 |

+

└── processed_data/

|

| 255 |

+

└── figures/

|

| 256 |

+

└── fusion_disorder/

|

| 257 |

+

├── plddt_sequence_EML4-ALK.png

|

| 258 |

+

├── plddt_sequence_EML4::ALK_source_data.csv

|

| 259 |

+

├── plddt_sequence_EWSR1-FLI1.png

|

| 260 |

+

├── plddt_sequence_EWSR1::FLI1_source_data.csv

|

| 261 |

+

├── plddt_sequence_PAX3-FOXO1.png

|

| 262 |

+

├── plddt_sequence_PAX3::FOXO1_source_data.csv

|

| 263 |

+

├── plddt_sequence_SS18-SSX1.png

|

| 264 |

+

├── plddt_sequence_SS18::SSX1_source_data.csv

|

| 265 |

+

└── histograms/

|

| 266 |

+

├── disorder_nox_histogram.png

|

| 267 |

+

├── disorder_nox_histogram_source_data.csv

|

| 268 |

+

├── fusions_histogram.png

|

| 269 |

+

├── fusions_histogram_source_data.csv

|

| 270 |

+

├── heads_histogram.png

|

| 271 |

+

├── heads_histogram_source_data.csv

|

| 272 |

+

├── tails_histogram.png

|

| 273 |

+

├── tails_histogram_source_data.csv

|

| 274 |

+

```

|

| 275 |

+

|

| 276 |

+

- Plots in `fusion_disorder` are from Fig. 1C

|

| 277 |

+

- Plots in `hisograms` are from Fig. 1D and Fig. S1

|

| 278 |

+

|

| 279 |

+

To regenerate these plots and source data, run:

|

| 280 |

+

|

| 281 |

+

```

|

| 282 |

+

python plot.py

|

| 283 |

+

```

|

| 284 |

+

|

| 285 |

+

### Colored structure images

|

| 286 |

+

`color_disorder_residues.ipynb` is used to plot fusion structures with pLDDT or disorder prediction color overlays. By running certain (or all) of its cells, you will recreate images from Fig. 1C and 4F, as well as the following file:

|

| 287 |

+

|

| 288 |

+

```

|

| 289 |

+

benchmarking/

|

| 290 |

+

└── caid/

|

| 291 |

+

└── disorder_coloring_data

|

| 292 |

+

├── normalized_disorder_propensities_source_data.csv

|

| 293 |

+

```

|

| 294 |

+

- **`normalized_disorder_propensities_source_data.csv`**: the normalized disorder propensities that were visualized on fusion structures in Fig. 4F

|

fuson_plm/benchmarking/caid/__init__.py

ADDED

|

File without changes

|

fuson_plm/benchmarking/caid/analyze_fusion_preds.py

ADDED

|

@@ -0,0 +1,158 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

|

| 2 |

+

import torch

|

| 3 |

+

import torch.nn as nn

|

| 4 |

+

import os

|

| 5 |

+

import pickle

|

| 6 |

+

import pandas as pd

|

| 7 |

+

import numpy as np

|

| 8 |

+

|

| 9 |

+

from sklearn.metrics import accuracy_score, precision_score, recall_score, f1_score, roc_auc_score, precision_recall_curve, average_precision_score

|

| 10 |

+

from fuson_plm.utils.logging import log_update, open_logfile

|

| 11 |

+

from fuson_plm.benchmarking.caid.plot import plot_fusion_stats_boxplots, plot_fusion_frac_disorder_r2

|

| 12 |

+

|

| 13 |

+

# calculate AUROC and AUPRC for each sequence

|

| 14 |

+

def calc_metrics(row):

|

| 15 |

+

probs = row['prob_1']

|

| 16 |

+

probs = [float(y) for y in probs.split(',')]

|

| 17 |

+

true_labels = row['Label']

|

| 18 |

+

true_labels = [int(y) for y in list(true_labels)]

|

| 19 |

+

pred_labels = row['pred_labels']

|

| 20 |

+

pred_labels = [int(y) for y in list(pred_labels)]

|

| 21 |

+

|

| 22 |

+

# Calculate AUROC

|

| 23 |

+

# Calculate AUPRC

|

| 24 |

+

# Calculate all the other stats based on the predicted labels

|

| 25 |

+

|

| 26 |

+

flat_binary_preds = np.array(pred_labels)

|

| 27 |

+

flat_prob_preds = np.array(probs)

|

| 28 |

+

flat_labels = np.array(true_labels)

|

| 29 |

+

|

| 30 |

+

accuracy = accuracy_score(flat_labels, flat_binary_preds)

|

| 31 |

+

precision = precision_score(flat_labels, flat_binary_preds)

|

| 32 |

+

recall = recall_score(flat_labels, flat_binary_preds)

|

| 33 |

+

f1 = f1_score(flat_labels, flat_binary_preds)

|

| 34 |

+

try:

|

| 35 |

+

roc_auc = roc_auc_score(flat_labels, flat_prob_preds)

|

| 36 |

+

except:

|

| 37 |

+

roc_auc = np.nan

|

| 38 |

+

|

| 39 |

+

try:

|

| 40 |

+

auprc = average_precision_score(flat_labels, flat_prob_preds)

|

| 41 |

+

except:

|

| 42 |

+

auprc = np.nan

|

| 43 |

+

|

| 44 |

+

return pd.Series({

|

| 45 |

+

'Accuracy': round(accuracy,3),

|

| 46 |

+

'Precision': round(precision,3),

|

| 47 |

+

'Recall': round(recall,3),

|

| 48 |

+

'F1': round(f1,3),

|

| 49 |

+

'AUROC': round(roc_auc,3) if not(np.isnan(roc_auc)) else roc_auc,

|

| 50 |

+

'AUPRC': round(auprc,3) if not(np.isnan(auprc)) else auprc,

|

| 51 |

+

})

|

| 52 |

+

|

| 53 |

+

def get_model_preds_with_metrics(path_to_model_predictions):

|

| 54 |

+

# Define paths and dataframes that we will need

|

| 55 |

+

fusion_benchmark_set = pd.read_csv('splits/fusion_bench_df.csv')

|

| 56 |

+

model_predictions = pd.read_csv(path_to_model_predictions)

|

| 57 |

+

fusion_structure_data = pd.read_csv('processed_data/fusionpdb/FusionPDB_level2-3_cleaned_structure_info.csv')

|

| 58 |

+

fusion_structure_data['Fusion_Structure_Link'] = fusion_structure_data['Fusion_Structure_Link'].apply(lambda x: x.split('/')[-1])

|

| 59 |

+

|

| 60 |

+

# merge fusion data with seq ids

|

| 61 |

+

fuson_db = pd.read_csv('../../data/fuson_db.csv')

|

| 62 |

+

fuson_db = fuson_db[['aa_seq','seq_id']].rename(columns={'aa_seq':'Fusion_Seq'})

|

| 63 |

+

fusion_structure_data = pd.merge(

|

| 64 |

+

fusion_structure_data,

|

| 65 |

+

fuson_db,

|

| 66 |

+

on='Fusion_Seq',

|

| 67 |

+

how='inner'

|

| 68 |

+

)

|

| 69 |

+

|

| 70 |

+

# merge fusion structure data with top swissprot alignments

|

| 71 |

+

swissprot_top_alignments = pd.read_csv("../../data/blast/blast_outputs/swissprot_top_alignments.csv")

|

| 72 |

+

fusion_structure_data = pd.merge(

|

| 73 |

+

fusion_structure_data,

|

| 74 |

+

swissprot_top_alignments,

|

| 75 |

+

on="seq_id",

|

| 76 |

+

how="left"

|

| 77 |

+

)

|

| 78 |

+

|

| 79 |

+

model_predictions_labeled = pd.merge(model_predictions,fusion_benchmark_set.rename(columns={'Sequence':'sequence'}),on='sequence',how='inner')

|

| 80 |

+

model_predictions_labeled = pd.merge(model_predictions_labeled,

|

| 81 |

+

fusion_structure_data[['FusionGene','Fusion_Seq','Fusion_Structure_Link','Fusion_pLDDT','Fusion_AA_pLDDTs',

|

| 82 |

+

'top_hg_UniProtID', 'top_hg_UniProt_isoform', 'top_hg_UniProt_fus_indices', 'top_tg_UniProtID', 'top_tg_UniProt_isoform',

|

| 83 |

+

'top_tg_UniProt_fus_indices', 'top_UniProtID', 'top_UniProt_isoform', 'top_UniProt_fus_indices', 'top_UniProt_nIdentities',

|

| 84 |

+

'top_UniProt_nPositives']].rename(

|

| 85 |

+

columns={'Fusion_Seq': 'sequence'}

|

| 86 |

+

),

|

| 87 |

+

on='sequence',

|

| 88 |

+

how='left')

|

| 89 |

+

model_predictions_labeled['length'] = model_predictions_labeled['sequence'].str.len()

|

| 90 |

+

model_predictions_labeled['Fusion_Structure_Link'] = model_predictions_labeled['Fusion_Structure_Link'].apply(lambda x: x.split('/')[-1])

|

| 91 |

+

|

| 92 |

+

model_predictions_labeled[['Accuracy','Precision','Recall','F1','AUROC','AUPRC']] = model_predictions_labeled.apply(lambda row: calc_metrics(row),axis=1)

|

| 93 |

+

model_predictions_labeled = model_predictions_labeled.sort_values(by=['AUROC','F1','AUPRC','Accuracy','Precision','Recall'],ascending=[False,False,False,False,False,False]).reset_index(drop=True)

|

| 94 |

+

model_predictions_labeled['pcnt_disordered'] = round(100*model_predictions_labeled['Label'].apply(lambda x: sum([int(y) for y in list(x)]))/model_predictions_labeled['sequence'].str.len(),2)

|

| 95 |

+

model_predictions_labeled['pred_pcnt_disordered'] = round(100*model_predictions_labeled['pred_labels'].apply(lambda x: sum([int(y) for y in list(x)]))/model_predictions_labeled['sequence'].str.len(),2)

|

| 96 |

+

log_update(f"Model predictions for {len(model_predictions_labeled)} fusion oncoproteins. Preview:")

|

| 97 |

+

log_update(

|

| 98 |

+

model_predictions_labeled[['sequence','length','FusionGene','Fusion_pLDDT','pcnt_disordered','pred_pcnt_disordered','AUROC','F1','AUPRC','Accuracy','Precision','Recall']].head()

|

| 99 |

+

)

|

| 100 |

+

cols_str = '\n\t'+ '\n\t'.join(list(model_predictions_labeled.columns))

|

| 101 |

+

log_update(f"Columns in model predictions stats database: {cols_str}")

|

| 102 |

+

|

| 103 |

+

# There is one duplicate row

|

| 104 |

+

duplicate_sequences = model_predictions_labeled.loc[model_predictions_labeled['sequence'].duplicated()]['sequence'].unique().tolist()

|

| 105 |

+

log_update(f"\nTotal duplicate sequences: {len(duplicate_sequences)}")

|

| 106 |

+

gb = model_predictions_labeled.groupby('sequence').agg(

|

| 107 |

+

pred_labels=("pred_labels", list),

|

| 108 |

+

)

|

| 109 |

+

gb['pred_labels'] = gb['pred_labels'].apply(lambda x: list(set(x)))

|

| 110 |

+

gb['unique_label_vectors'] = gb['pred_labels'].apply(lambda x: len(x))

|

| 111 |

+

log_update(f"Duplicate entries for sequences have the exact same label vector: {(gb['unique_label_vectors']==1).all()}")

|

| 112 |

+

log_update("\tSince above statement is true, randomly dropping duplicate sequence rows - should make no difference to prediction.")

|

| 113 |

+

|

| 114 |

+

model_predictions_labeled = model_predictions_labeled.drop_duplicates('sequence').reset_index(drop=True)

|

| 115 |

+

#os.makedirs("processed_data/fusion_predictions",exist_ok=True)

|

| 116 |

+

return model_predictions_labeled

|

| 117 |

+

|

| 118 |

+

def calc_average_stats(model_pred_stats):

|

| 119 |

+

# cols: Accuracy Precision Recall F1 AUROC AUPRC pcnt_disordered pred_pcnt_disordered

|

| 120 |

+

averages = model_pred_stats[[

|

| 121 |

+

'Accuracy', 'Precision', 'Recall', 'F1', 'AUROC', 'AUPRC'

|

| 122 |

+

]].mean()

|

| 123 |

+

averages

|

| 124 |

+

|

| 125 |

+

def main():

|

| 126 |

+

with open_logfile("analyze_fusion_preds.txt"):

|

| 127 |

+

## Put path to model predictions you'd like to use for benchmarking

|

| 128 |

+

path_to_model_predictions = "trained_models/fuson_plm/best/caid_hyperparam_screen_fusion_benchmark_probs.csv"

|

| 129 |

+

save_dir = "results/final"

|

| 130 |

+

preds_with_metrics_save_path = f"{save_dir}/model_preds_with_metrics.csv"

|

| 131 |

+

boxplot_save_path = f"{save_dir}/fusion_disorder_boxplots.png"

|

| 132 |

+

r2_save_path = "results/final/fusion_pred_disorder_r2.png"

|

| 133 |

+

|

| 134 |

+

# Additional benchmarking on fusion predictions

|

| 135 |

+

fuson_db = pd.read_csv("../../data/fuson_db.csv")

|

| 136 |

+

seq_id_dict = dict(zip(fuson_db['aa_seq'],fuson_db['seq_id']))

|

| 137 |

+

model_preds_with_metrics = get_model_preds_with_metrics(path_to_model_predictions)

|

| 138 |

+

model_preds_with_metrics['seq_id'] = model_preds_with_metrics['sequence'].map(seq_id_dict)

|

| 139 |

+

model_preds_with_metrics.to_csv(preds_with_metrics_save_path,index=False)

|

| 140 |

+

print(model_preds_with_metrics.columns)

|

| 141 |

+

|

| 142 |

+

# Box plot

|

| 143 |

+

boxplot_model_preds = model_preds_with_metrics[['seq_id','FusionGene',

|

| 144 |

+

'Accuracy', 'Precision', 'Recall', 'F1', 'AUROC'

|

| 145 |

+

]]

|

| 146 |

+

|

| 147 |

+

boxplot_model_preds.to_csv(boxplot_save_path.replace(".png","_source_data.csv"),index=False)

|

| 148 |

+

plot_fusion_stats_boxplots(boxplot_model_preds[['Accuracy', 'Precision', 'Recall', 'F1', 'AUROC'

|

| 149 |

+

]], save_path=boxplot_save_path)

|

| 150 |

+

|

| 151 |

+

# R2 plot

|

| 152 |

+

r2_model_preds = model_preds_with_metrics[['seq_id','FusionGene','pcnt_disordered','pred_pcnt_disordered']]

|

| 153 |

+

r2_model_preds.to_csv(r2_save_path.replace(".png","_source_data.csv"),index=False)

|

| 154 |

+

plot_fusion_frac_disorder_r2(r2_model_preds['pcnt_disordered'], r2_model_preds['pred_pcnt_disordered'], save_path=r2_save_path)

|

| 155 |

+

calc_average_stats(model_preds_with_metrics)

|

| 156 |

+

|

| 157 |

+

if __name__ == "__main__":

|

| 158 |

+

main()

|

fuson_plm/benchmarking/caid/clean.py

ADDED

|

@@ -0,0 +1,671 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import os

|

| 2 |

+

import numpy as np

|

| 3 |

+

import re

|

| 4 |

+

import pandas as pd

|

| 5 |

+

import requests

|

| 6 |

+

|

| 7 |

+

from fuson_plm.utils.logging import open_logfile, log_update

|

| 8 |

+

from fuson_plm.utils.constants import DELIMITERS, VALID_AAS

|

| 9 |

+

from fuson_plm.utils.data_cleaning import check_columns_for_listlike, find_invalid_chars

|

| 10 |

+

|

| 11 |

+

from fuson_plm.benchmarking.caid.scrape_fusionpdb import scrape_fusionpdb_level_2_3

|

| 12 |

+

from fuson_plm.benchmarking.caid.process_fusion_structures import process_fusions_and_hts

|

| 13 |

+

|

| 14 |

+

def download_fasta(uniprotid, includeIsoform, output_file):

|

| 15 |

+

try:

|

| 16 |

+

url = f"https://rest.uniprot.org/uniprotkb/search?format=fasta&includeIsoform={includeIsoform}&query=accession%3A{uniprotid}&size=500&sort=accession+asc"

|

| 17 |

+

# Send a GET request to the URL

|

| 18 |

+

response = requests.get(url)

|

| 19 |

+

|

| 20 |

+

# Raise an exception if the request was unsuccessful

|

| 21 |

+

response.raise_for_status()

|

| 22 |

+

|

| 23 |

+

# Write the content to a file in text mode