Add files using upload-large-folder tool

- .gitattributes +7 -0

- README.md +173 -3

- assets/.DS_Store +0 -0

- assets/dcvae.png +3 -0

- assets/dit.png +3 -0

- assets/dpo_pipeline.png +3 -0

- assets/logo.png +0 -0

- assets/model_architecture.png +3 -0

- hunyuan_clip/clip_text_encoder/config.json +34 -0

- hunyuan_clip/clip_text_encoder/pytorch_model.bin +3 -0

- hunyuan_clip/tokenizer/special_tokens_map.json +7 -0

- hunyuan_clip/tokenizer/tokenizer_config.json +16 -0

- hunyuan_clip/tokenizer/vocab.txt +0 -0

- hunyuan_clip/tokenizer/vocab_org.txt +0 -0

- lib/liboptimus_ths-torch2.2-cu121.cpython-310-x86_64-linux-gnu.so +3 -0

- lib/liboptimus_ths-torch2.3-cu121.cpython-310-x86_64-linux-gnu.so +3 -0

- lib/liboptimus_ths-torch2.5-cu124.cpython-310-x86_64-linux-gnu.so +3 -0

- model_index.json +12 -0

- scheduler/scheduler_config.json +8 -0

- step_llm/config.json +32 -0

- step_llm/model-00001-of-00009.safetensors +3 -0

- step_llm/model-00002-of-00009.safetensors +3 -0

- step_llm/model-00003-of-00009.safetensors +3 -0

- step_llm/model-00004-of-00009.safetensors +3 -0

- step_llm/model-00005-of-00009.safetensors +3 -0

- step_llm/model-00006-of-00009.safetensors +3 -0

- step_llm/model-00007-of-00009.safetensors +3 -0

- step_llm/model-00008-of-00009.safetensors +3 -0

- step_llm/model-00009-of-00009.safetensors +3 -0

- step_llm/model.safetensors.index.json +296 -0

- step_llm/step1_chat_tokenizer.model +3 -0

- transformer/config.json +20 -0

- transformer/diffusion_pytorch_model-00001-of-00006.safetensors +3 -0

- transformer/diffusion_pytorch_model-00002-of-00006.safetensors +3 -0

- transformer/diffusion_pytorch_model-00003-of-00006.safetensors +3 -0

- transformer/diffusion_pytorch_model-00004-of-00006.safetensors +3 -0

- transformer/diffusion_pytorch_model-00005-of-00006.safetensors +3 -0

- transformer/diffusion_pytorch_model-00006-of-00006.safetensors +3 -0

- transformer/diffusion_pytorch_model.safetensors.index.json +796 -0

- vae/vae.safetensors +3 -0

- vae/vae_v2.safetensors +3 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,10 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

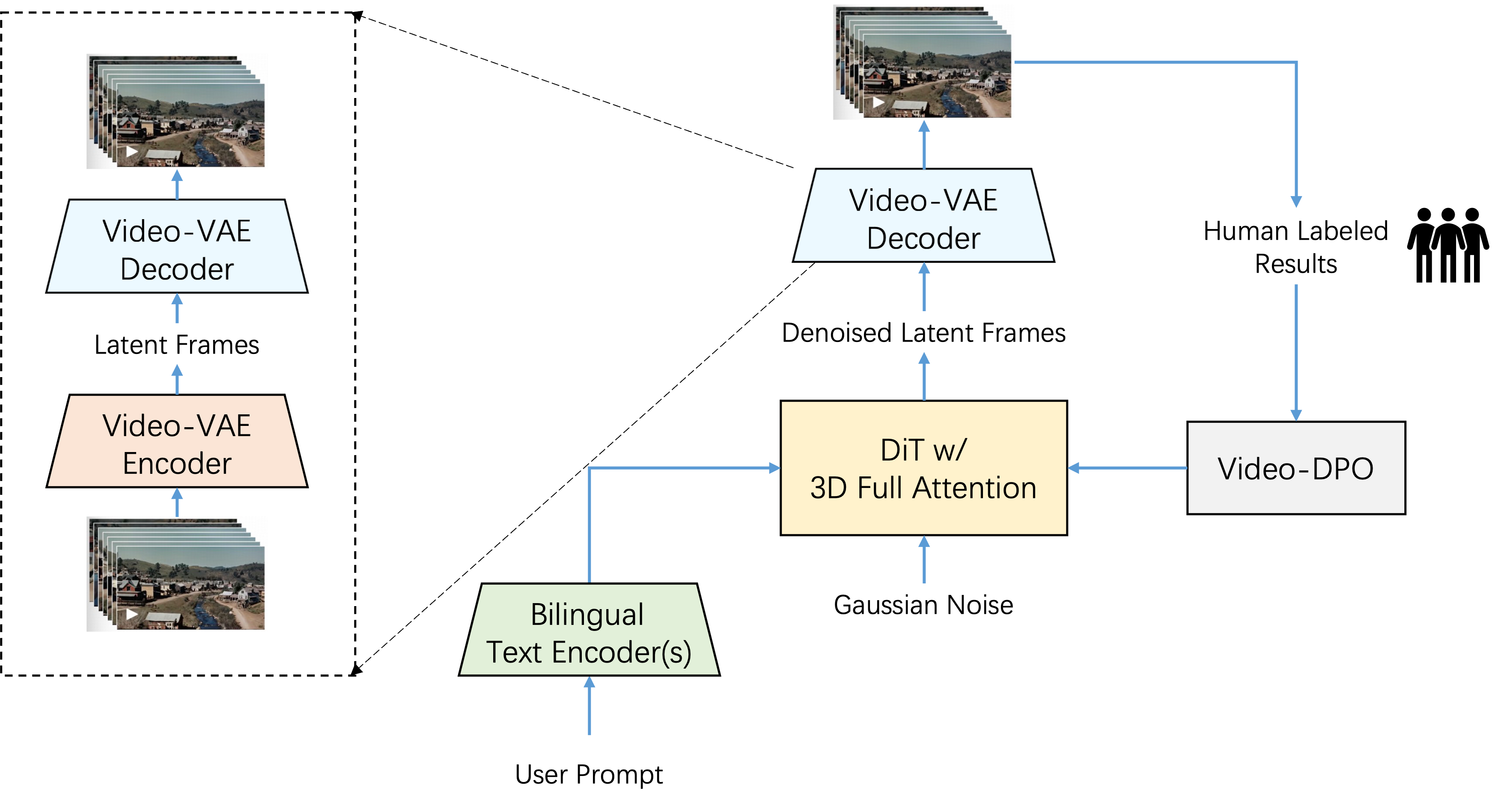

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

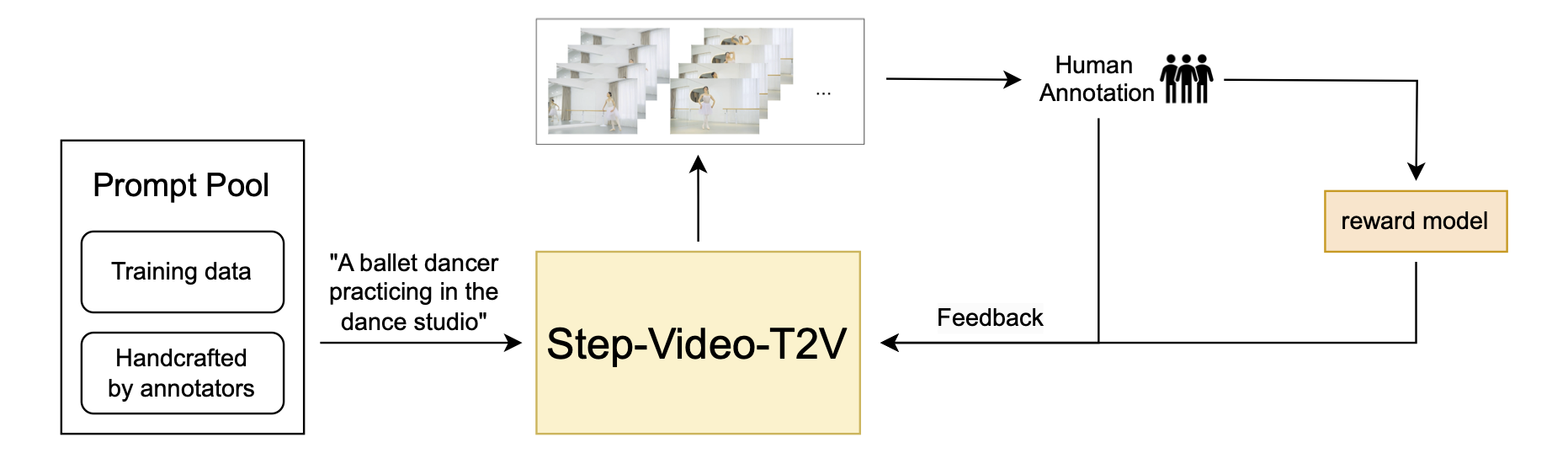

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

lib/liboptimus_ths-torch2.2-cu121.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

lib/liboptimus_ths-torch2.3-cu121.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

lib/liboptimus_ths-torch2.5-cu124.cpython-310-x86_64-linux-gnu.so filter=lfs diff=lfs merge=lfs -text

|

| 39 |

+

assets/dcvae.png filter=lfs diff=lfs merge=lfs -text

|

| 40 |

+

assets/dit.png filter=lfs diff=lfs merge=lfs -text

|

| 41 |

+

assets/dpo_pipeline.png filter=lfs diff=lfs merge=lfs -text

|

| 42 |

+

assets/model_architecture.png filter=lfs diff=lfs merge=lfs -text

|

README.md

CHANGED

|

@@ -1,3 +1,173 @@

|

|

| 1 |

-

---

|

| 2 |

-

license: mit

|

| 3 |

-

|

| 1 |

+

---

|

| 2 |

+

license: mit

|

| 3 |

+

library_name: diffusers

|

| 4 |

+

pipeline_tag: text-to-video

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

<p align="center">

|

| 8 |

+

<img src="assets/logo.png" height=100>

|

| 9 |

+

</p>

|

| 10 |

+

<div align="center">

|

| 11 |

+

<a href="https://yuewen.cn/videos"><img src="https://img.shields.io/static/v1?label=Step-Video&message=Web&color=green"></a>

|

| 12 |

+

<a href="https://arxiv.org/abs/2502.10248"><img src="https://img.shields.io/static/v1?label=Tech Report&message=Arxiv&color=red"></a>

|

| 13 |

+

<a href="https://x.com/StepFun_ai"><img src="https://img.shields.io/static/v1?label=X.com&message=Web&color=blue"></a>

|

| 14 |

+

</div>

|

| 15 |

+

|

| 16 |

+

<div align="center">

|

| 17 |

+

<a href="https://huggingface.co/stepfun-ai/stepvideo-t2v"><img src="https://img.shields.io/static/v1?label=Step-Video-T2V&message=HuggingFace&color=yellow"></a>

|

| 18 |

+

<a href="https://huggingface.co/stepfun-ai/stepvideo-t2v-turbo"><img src="https://img.shields.io/static/v1?label=Step-Video-T2V-Turbo&message=HuggingFace&color=yellow"></a>

|

| 19 |

+

<a href="https://github.com/stepfun-ai/Step-Video-T2V"><img src="https://img.shields.io/static/v1?label=Code&message=Github&color=black"></a>

|

| 20 |

+

</div>

|

| 21 |

+

|

| 22 |

+

## 🔥🔥🔥 News!!

|

| 23 |

+

* Feb 17, 2025: 👋 We release the inference code and model weights of Step-Video-T2V. [Download](https://huggingface.co/stepfun-ai/stepvideo-t2v)

|

| 24 |

+

* Feb 17, 2025: 👋 We release the inference code and model weights of Step-Video-T2V-Turbo. [Download](https://huggingface.co/stepfun-ai/stepvideo-t2v-turbo)

|

| 25 |

+

* Feb 17, 2025: 🎉 Our technical report is now publicly available. [Read](https://arxiv.org/abs/2502.10248)

|

| 26 |

+

|

| 27 |

+

## Video Demos

|

| 28 |

+

|

| 29 |

+

<table border="0" style="width: 100%; text-align: center; margin-top: 1px;">

|

| 30 |

+

<tr>

|

| 31 |

+

<td><video src="https://github.com/user-attachments/assets/9274b351-595d-41fb-aba3-f58e6e91603a" width="100%" controls autoplay loop muted></video></td>

|

| 32 |

+

<td><video src="https://github.com/user-attachments/assets/2f6b3ad5-e93b-436b-98bc-4701182d8652" width="100%" controls autoplay loop muted></video></td>

|

| 33 |

+

<td><video src="https://github.com/user-attachments/assets/67d20ee7-ad78-4b8f-80f6-3fdb00fb52d8" width="100%" controls autoplay loop muted></video></td>

|

| 34 |

+

</tr>

|

| 35 |

+

<tr>

|

| 36 |

+

<td><video src="https://github.com/user-attachments/assets/9abce409-105d-4a8a-ad13-104a98cc8a0b" width="100%" controls autoplay loop muted></video></td>

|

| 37 |

+

<td><video src="https://github.com/user-attachments/assets/8d1e1a47-048a-49ce-85f6-9d013f2d8e89" width="100%" controls autoplay loop muted></video></td>

|

| 38 |

+

<td><video src="https://github.com/user-attachments/assets/32cf4bd1-ec1f-4f77-a488-cd0284aa81bb" width="100%" controls autoplay loop muted></video></td>

|

| 39 |

+

</tr>

|

| 40 |

+

<tr>

|

| 41 |

+

<td><video src="https://github.com/user-attachments/assets/f95a7a49-032a-44ea-a10f-553d4e5d21c6" width="100%" controls autoplay loop muted></video></td>

|

| 42 |

+

<td><video src="https://github.com/user-attachments/assets/3534072e-87d9-4128-a87f-28fcb5d951e0" width="100%" controls autoplay loop muted></video></td>

|

| 43 |

+

<td><video src="https://github.com/user-attachments/assets/6d893dad-556d-4527-a882-666cba3d10e9" width="100%" controls autoplay loop muted></video></td>

|

| 44 |

+

</tr>

|

| 45 |

+

|

| 46 |

+

</table>

|

| 47 |

+

|

| 48 |

+

## Table of Contents

|

| 49 |

+

|

| 50 |

+

1. [Introduction](#1-introduction)

|

| 51 |

+

2. [Model Summary](#2-model-summary)

|

| 52 |

+

3. [Model Download](#3-model-download)

|

| 53 |

+

4. [Model Usage](#4-model-usage)

|

| 54 |

+

5. [Benchmark](#5-benchmark)

|

| 55 |

+

6. [Online Engine](#6-online-engine)

|

| 56 |

+

7. [Citation](#7-citation)

|

| 57 |

+

8. [Acknowledgement](#8-acknowledgement)

|

| 58 |

+

|

| 59 |

+

## 1. Introduction

|

| 60 |

+

We present **Step-Video-T2V**, a state-of-the-art (SoTA) text-to-video pre-trained model with 30 billion parameters and the capability to generate videos up to 204 frames. To enhance both training and inference efficiency, we propose a deep compression VAE for videos, achieving 16x16 spatial and 8x temporal compression ratios. Direct Preference Optimization (DPO) is applied in the final stage to further enhance the visual quality of the generated videos. Step-Video-T2V's performance is evaluated on a novel video generation benchmark, **Step-Video-T2V-Eval**, demonstrating its SoTA text-to-video quality compared to both open-source and commercial engines.

|

| 61 |

+

|

| 62 |

+

## 2. Model Summary

|

| 63 |

+

In Step-Video-T2V, videos are represented by a high-compression Video-VAE, achieving 16x16 spatial and 8x temporal compression ratios. User prompts are encoded using two bilingual pre-trained text encoders to handle both English and Chinese. A DiT with 3D full attention is trained using Flow Matching and is employed to denoise input noise into latent frames, with text embeddings and timesteps serving as conditioning factors. To further enhance the visual quality of the generated videos, a video-based DPO approach is applied, which effectively reduces artifacts and ensures smoother, more realistic video outputs.

|

| 64 |

+

|

| 65 |

+

<p align="center">

|

| 66 |

+

<img width="80%" src="assets/model_architecture.png">

|

| 67 |

+

</p>

|

| 68 |

+

|

| 69 |

+

### 2.1. Video-VAE

|

| 70 |

+

A deep compression Variational Autoencoder (VideoVAE) is designed for video generation tasks, achieving 16x16 spatial and 8x temporal compression ratios while maintaining exceptional video reconstruction quality. This compression not only accelerates training and inference but also aligns with the diffusion process's preference for condensed representations.
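The compression ratios above translate directly into latent tensor sizes. The following is a minimal sketch of that arithmetic, not the VAE's actual code: `latent_shape` is a hypothetical helper, and rounding up for frame counts that are not a multiple of 8 is an assumption, since the text does not say how such clips are padded.

```python
import math


def latent_shape(height: int, width: int, frames: int,
                 spatial: int = 16, temporal: int = 8) -> tuple[int, int, int]:
    """Return (latent_frames, latent_height, latent_width).

    Assumption: sizes that do not divide evenly are rounded up.
    """
    return (
        math.ceil(frames / temporal),
        math.ceil(height / spatial),
        math.ceil(width / spatial),
    )


# 544 x 992 video with 136 frames, one of the tested configurations.
print(latent_shape(544, 992, 136))
```

Under these assumptions the number of latent positions is 16 × 16 × 8 = 2048 times smaller than the number of pixel positions, which is where the training and inference savings come from.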

|

| 71 |

+

|

| 72 |

+

<p align="center">

|

| 73 |

+

<img width="70%" src="assets/dcvae.png">

|

| 74 |

+

</p>

|

| 75 |

+

|

| 76 |

+

### 2.2. DiT w/ 3D Full Attention

|

| 77 |

+

Step-Video-T2V is built on the DiT architecture, which has 48 layers, each containing 48 attention heads, with each head’s dimension set to 128. AdaLN-Single is leveraged to incorporate the timestep condition, while QK-Norm in the self-attention mechanism is introduced to ensure training stability. Additionally, 3D RoPE is employed, playing a critical role in handling sequences of varying video lengths and resolutions.
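As a small illustration of the numbers in this paragraph, the sketch below derives the model width from the head count and head dimension. `DiTShape` is a hypothetical container, and the relation width = heads × head dimension is the usual multi-head attention layout, assumed here rather than confirmed by the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DiTShape:
    """Hypothetical summary of the DiT sizes quoted in the README."""
    num_layers: int = 48
    num_heads: int = 48
    head_dim: int = 128

    @property
    def hidden_size(self) -> int:
        # Assumption: standard multi-head layout, width = heads * head_dim.
        return self.num_heads * self.head_dim


shape = DiTShape()
print(shape.num_layers, shape.hidden_size)
```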

|

| 78 |

+

|

| 79 |

+

<p align="center">

|

| 80 |

+

<img width="80%" src="assets/dit.png">

|

| 81 |

+

</p>

|

| 82 |

+

|

| 83 |

+

### 2.3. Video-DPO

|

| 84 |

+

In Step-Video-T2V, we incorporate human feedback through Direct Preference Optimization (DPO) to further enhance the visual quality of the generated videos. DPO leverages human preference data to fine-tune the model, ensuring that the generated content aligns more closely with human expectations. The overall DPO pipeline is shown below, highlighting its critical role in improving both the consistency and quality of the video generation process.

|

| 85 |

+

|

| 86 |

+

<p align="center">

|

| 87 |

+

<img width="100%" src="assets/dpo_pipeline.png">

|

| 88 |

+

</p>

|

| 89 |

+

|

| 90 |

+

|

| 91 |

+

|

| 92 |

+

## 3. Model Download

|

| 93 |

+

| Models | 🤗Huggingface | 🤖Modelscope |

|

| 94 |

+

|:-------:|:-------:|:-------:|

|

| 95 |

+

| Step-Video-T2V | [download](https://huggingface.co/stepfun-ai/stepvideo-t2v) | [download](https://www.modelscope.cn/models/stepfun-ai/stepvideo-t2v) |

|

| 96 |

+

| Step-Video-T2V-Turbo (Inference Step Distillation) | [download](https://huggingface.co/stepfun-ai/stepvideo-t2v-turbo) | [download](https://www.modelscope.cn/models/stepfun-ai/stepvideo-t2v-turbo) |

|

| 97 |

+

|

| 98 |

+

|

| 99 |

+

## 4. Model Usage

|

| 100 |

+

### 📜 4.1 Requirements

|

| 101 |

+

|

| 102 |

+

The following table shows the requirements for running the Step-Video-T2V model (batch size = 1, without CFG distillation) to generate videos:

|

| 103 |

+

|

| 104 |

+

| Model | Height × width × frames | Peak GPU memory | 50 steps with flash-attn | 50 steps without flash-attn |

|

| 105 |

+

|:------------:|:------------:|:------------:|:------------:|:------------:|

|

| 106 |

+

| Step-Video-T2V | 544px × 992px × 204f | 77.64 GB | 743 s | 1232 s |

|

| 107 |

+

| Step-Video-T2V | 544px × 992px × 136f | 72.48 GB | 408 s | 605 s |

|

| 108 |

+

|

| 109 |

+

* An NVIDIA GPU with CUDA support is required.

|

| 110 |

+

* The model is tested on four GPUs.

|

| 111 |

+

* **Recommended**: Use GPUs with 80 GB of memory for better generation quality.

|

| 112 |

+

* Tested operating system: Linux

|

| 113 |

+

* The self-attention in the text encoder (step_llm) supports only CUDA compute capabilities sm_80, sm_86, and sm_90.
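
The compute-capability requirement in the last bullet can be checked before launching anything. This is a minimal sketch, not part of the repository: `is_supported` and `sm_name` are hypothetical helpers, and the PyTorch query runs only if `torch` is installed and a CUDA device is visible.

```python
# Capabilities listed in the requirements: sm_80, sm_86, sm_90.
SUPPORTED_CAPABILITIES = {(8, 0), (8, 6), (9, 0)}


def is_supported(capability: tuple[int, int]) -> bool:
    """True if a (major, minor) compute capability is in the listed set."""
    return tuple(capability) in SUPPORTED_CAPABILITIES


def sm_name(capability: tuple[int, int]) -> str:
    """Format (8, 6) as 'sm_86'."""
    major, minor = capability
    return f"sm_{major}{minor}"


if __name__ == "__main__":
    try:
        import torch
    except ImportError:
        torch = None
    if torch is not None and torch.cuda.is_available():
        cap = torch.cuda.get_device_capability(0)
        status = "supported" if is_supported(cap) else "not supported"
        print(f"GPU 0 is {sm_name(cap)}: {status} by the step_llm attention kernel")
    else:
        print("No CUDA device visible; nothing to check.")
```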

|

| 114 |

+

|

| 115 |

+

### 🔧 4.2 Dependencies and Installation

|

| 116 |

+

- Python >= 3.10.0 (we recommend [Anaconda](https://www.anaconda.com/download/#linux) or [Miniconda](https://docs.conda.io/en/latest/miniconda.html))

|

| 117 |

+

- [PyTorch >= 2.3-cu121](https://pytorch.org/)

|

| 118 |

+

- [CUDA Toolkit](https://developer.nvidia.com/cuda-downloads)

|

| 119 |

+

- [FFmpeg](https://www.ffmpeg.org/)

|

| 120 |

+

```bash

|

| 121 |

+

git clone https://github.com/stepfun-ai/Step-Video-T2V.git

|

| 122 |

+

conda create -n stepvideo python=3.10

|

| 123 |

+

conda activate stepvideo

|

| 124 |

+

|

| 125 |

+

cd Step-Video-T2V

|

| 126 |

+

pip install -e .

|

| 127 |

+

pip install flash-attn --no-build-isolation ## flash-attn is optional

|

| 128 |

+

```

|

| 129 |

+

|

| 130 |

+

### 🚀 4.3 Inference Scripts

|

| 131 |

+

- We decouple the text encoder, VAE decoding, and the DiT so that the DiT can make full use of its GPUs. As a result, a dedicated GPU is needed to serve the APIs for the text encoder's embeddings and VAE decoding.

|

| 132 |

+

```bash

|

| 133 |

+

python api/call_remote_server.py --model_dir where_you_download_dir & ## We assume you have more than 4 GPUs available. This command will return the URL for both the caption API and the VAE API. Please use the returned URL in the following command.

|

| 134 |

+

|

| 135 |

+

parallel=4 # or parallel=8

|

| 136 |

+

url='127.0.0.1'

|

| 137 |

+

model_dir=where_you_download_dir

|

| 138 |

+

|

| 139 |

+

torchrun --nproc_per_node $parallel run_parallel.py --model_dir $model_dir --vae_url $url --caption_url $url --ulysses_degree $parallel --prompt "一名宇航员在月球上发现一块石碑,上面印有“stepfun”字样,闪闪发光" --infer_steps 50 --cfg_scale 9.0 --time_shift 13.0

|

| 140 |

+

```

|

| 141 |

+

|

| 142 |

+

### 🚀 4.4 Best-Practice Inference Settings

|

| 143 |

+

Step-Video-T2V exhibits robust performance in inference settings, consistently generating high-fidelity and dynamic videos. However, our experiments reveal that variations in inference hyperparameters can have a substantial effect on the trade-off between video fidelity and dynamics. To achieve optimal results, we recommend the following best practices for tuning inference parameters:

|

| 144 |

+

|

| 145 |

+

| Models | infer_steps | cfg_scale | time_shift | num_frames |

|

| 146 |

+

|:-------:|:-------:|:-------:|:-------:|:-------:|

|

| 147 |

+

| Step-Video-T2V | 30-50 | 9.0 | 13.0 | 204 |

|

| 148 |

+

| Step-Video-T2V-Turbo (Inference Step Distillation) | 10-15 | 5.0 | 17.0 | 204 |
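
The recommended settings above map onto the sampling flags used in the inference command of section 4.3. The sketch below shows one way to keep them in a single place. `RECOMMENDED` and `sampling_args` are hypothetical names, only flags that appear in that command are emitted, and taking the upper end of each `infer_steps` range is an illustrative choice rather than a recommendation from the authors.

```python
# Values copied from the table; infer_steps uses the top of each range.
RECOMMENDED = {
    "Step-Video-T2V": {"infer_steps": 50, "cfg_scale": 9.0, "time_shift": 13.0},
    "Step-Video-T2V-Turbo": {"infer_steps": 15, "cfg_scale": 5.0, "time_shift": 17.0},
}


def sampling_args(model: str) -> list[str]:
    """Build the sampling-related command-line arguments for a model."""
    settings = RECOMMENDED[model]
    return [
        "--infer_steps", str(settings["infer_steps"]),
        "--cfg_scale", str(settings["cfg_scale"]),
        "--time_shift", str(settings["time_shift"]),
    ]


print(" ".join(sampling_args("Step-Video-T2V-Turbo")))
```

The resulting list can be appended to the `torchrun ... run_parallel.py` invocation in place of the hard-coded values.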

|

| 149 |

+

|

| 150 |

+

|

| 151 |

+

## 5. Benchmark

|

| 152 |

+

We are releasing [Step-Video-T2V Eval](https://github.com/stepfun-ai/Step-Video-T2V/blob/main/benchmark/Step-Video-T2V-Eval) as a new benchmark, featuring 128 Chinese prompts sourced from real users. This benchmark is designed to evaluate the quality of generated videos across 11 distinct categories: Sports, Food, Scenery, Animals, Festivals, Combination Concepts, Surreal, People, 3D Animation, Cinematography, and Style.

|

| 153 |

+

|

| 154 |

+

## 6. Online Engine

|

| 155 |

+

The online version of Step-Video-T2V is available on [跃问视频](https://yuewen.cn/videos), where you can also explore some impressive examples.

|

| 156 |

+

|

| 157 |

+

## 7. Citation

|

| 158 |

+

```

|

| 159 |

+

@misc{ma2025stepvideot2vtechnicalreportpractice,

|

| 160 |

+

title={Step-Video-T2V Technical Report: The Practice, Challenges, and Future of Video Foundation Model},

|

| 161 |

+

author={Guoqing Ma and Haoyang Huang and Kun Yan and Liangyu Chen and Nan Duan and Shengming Yin and Changyi Wan and Ranchen Ming and Xiaoniu Song and Xing Chen and Yu Zhou and Deshan Sun and Deyu Zhou and Jian Zhou and Kaijun Tan and Kang An and Mei Chen and Wei Ji and Qiling Wu and Wen Sun and Xin Han and Yanan Wei and Zheng Ge and Aojie Li and Bin Wang and Bizhu Huang and Bo Wang and Brian Li and Changxing Miao and Chen Xu and Chenfei Wu and Chenguang Yu and Dapeng Shi and Dingyuan Hu and Enle Liu and Gang Yu and Ge Yang and Guanzhe Huang and Gulin Yan and Haiyang Feng and Hao Nie and Haonan Jia and Hanpeng Hu and Hanqi Chen and Haolong Yan and Heng Wang and Hongcheng Guo and Huilin Xiong and Huixin Xiong and Jiahao Gong and Jianchang Wu and Jiaoren Wu and Jie Wu and Jie Yang and Jiashuai Liu and Jiashuo Li and Jingyang Zhang and Junjing Guo and Junzhe Lin and Kaixiang Li and Lei Liu and Lei Xia and Liang Zhao and Liguo Tan and Liwen Huang and Liying Shi and Ming Li and Mingliang Li and Muhua Cheng and Na Wang and Qiaohui Chen and Qinglin He and Qiuyan Liang and Quan Sun and Ran Sun and Rui Wang and Shaoliang Pang and Shiliang Yang and Sitong Liu and Siqi Liu and Shuli Gao and Tiancheng Cao and Tianyu Wang and Weipeng Ming and Wenqing He and Xu Zhao and Xuelin Zhang and Xianfang Zeng and Xiaojia Liu and Xuan Yang and Yaqi Dai and Yanbo Yu and Yang Li and Yineng Deng and Yingming Wang and Yilei Wang and Yuanwei Lu and Yu Chen and Yu Luo and Yuchu Luo and Yuhe Yin and Yuheng Feng and Yuxiang Yang and Zecheng Tang and Zekai Zhang and Zidong Yang and Binxing Jiao and Jiansheng Chen and Jing Li and Shuchang Zhou and Xiangyu Zhang and Xinhao Zhang and Yibo Zhu and Heung-Yeung Shum and Daxin Jiang},

|

| 162 |

+

year={2025},

|

| 163 |

+

eprint={2502.10248},

|

| 164 |

+

archivePrefix={arXiv},

|

| 165 |

+

primaryClass={cs.CV},

|

| 166 |

+

url={https://arxiv.org/abs/2502.10248},

|

| 167 |

+

}

|

| 168 |

+

```

|

| 169 |

+

|

| 170 |

+

## 8. Acknowledgement

|

| 171 |

+

- We would like to express our sincere thanks to the [xDiT](https://github.com/xdit-project/xDiT) team for their invaluable support and parallelization strategy.

|

| 172 |

+

- Our code will be integrated into the official repository of [Huggingface/Diffusers](https://github.com/huggingface/diffusers).

|

| 173 |

+

- We thank the [FastVideo](https://github.com/hao-ai-lab/FastVideo) team for their continued collaboration and look forward to launching inference acceleration solutions together in the near future.

|

assets/.DS_Store

ADDED

|

Binary file (6.15 kB).

|

|

|

assets/dcvae.png

ADDED

|

Git LFS Details

|

assets/dit.png

ADDED

|

Git LFS Details

|

assets/dpo_pipeline.png

ADDED

|

Git LFS Details

|

assets/logo.png

ADDED

|

assets/model_architecture.png

ADDED

|

Git LFS Details

|

hunyuan_clip/clip_text_encoder/config.json

ADDED

|

@@ -0,0 +1,34 @@

|

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "hfl/chinese-roberta-wwm-ext-large",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"BertModel"

|

| 5 |

+

],

|

| 6 |

+

"attention_probs_dropout_prob": 0.1,

|

| 7 |

+

"bos_token_id": 0,

|

| 8 |

+

"classifier_dropout": null,

|

| 9 |

+

"directionality": "bidi",

|

| 10 |

+

"eos_token_id": 2,

|

| 11 |

+

"hidden_act": "gelu",

|

| 12 |

+

"hidden_dropout_prob": 0.1,

|

| 13 |

+

"hidden_size": 1024,

|

| 14 |

+

"initializer_range": 0.02,

|

| 15 |

+

"intermediate_size": 4096,

|

| 16 |

+

"layer_norm_eps": 1e-12,

|

| 17 |

+

"max_position_embeddings": 512,

|

| 18 |

+

"model_type": "bert",

|

| 19 |

+

"num_attention_heads": 16,

|

| 20 |

+

"num_hidden_layers": 24,

|

| 21 |

+

"output_past": true,

|

| 22 |

+

"pad_token_id": 0,

|

| 23 |

+

"pooler_fc_size": 768,

|

| 24 |

+

"pooler_num_attention_heads": 12,

|

| 25 |

+

"pooler_num_fc_layers": 3,

|

| 26 |

+

"pooler_size_per_head": 128,

|

| 27 |

+

"pooler_type": "first_token_transform",

|

| 28 |

+

"position_embedding_type": "absolute",

|

| 29 |

+

"torch_dtype": "float32",

|

| 30 |

+

"transformers_version": "4.22.1",

|

| 31 |

+

"type_vocab_size": 2,

|

| 32 |

+

"use_cache": true,

|

| 33 |

+

"vocab_size": 47020

|

| 34 |

+

}

|

hunyuan_clip/clip_text_encoder/pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:77fd65c751513310fe575e0ef59963743f89a4955ee5c79d7468157b27e83c51

|

| 3 |

+

size 3936679395
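
The three added lines above are a Git LFS pointer: the repository stores this small text stub, while the real binary lives in LFS storage. As a hedged illustration, the sketch below parses such a pointer into its fields. `parse_lfs_pointer` is a hypothetical helper and handles only the three keys shown in this diff.

```python
def parse_lfs_pointer(text: str) -> dict:
    """Parse a Git LFS pointer with 'version', 'oid', and 'size' lines."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    hash_algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "hash_algo": hash_algo,
        "digest": digest,
        "size": int(fields["size"]),  # size of the real file, in bytes
    }


pointer = """version https://git-lfs.github.com/spec/v1
oid sha256:77fd65c751513310fe575e0ef59963743f89a4955ee5c79d7468157b27e83c51
size 3936679395
"""

info = parse_lfs_pointer(pointer)
print(f"{info['hash_algo']} digest, {info['size'] / 1e9:.2f} GB")
```

The `oid` digest can be compared against a locally computed SHA-256 of the downloaded file to confirm the download is intact.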

|

hunyuan_clip/tokenizer/special_tokens_map.json

ADDED

|

@@ -0,0 +1,7 @@

|

| 1 |

+

{

|

| 2 |

+

"cls_token": "[CLS]",

|

| 3 |

+

"mask_token": "[MASK]",

|

| 4 |

+

"pad_token": "[PAD]",

|

| 5 |

+

"sep_token": "[SEP]",

|

| 6 |

+

"unk_token": "[UNK]"

|

| 7 |

+

}

|

hunyuan_clip/tokenizer/tokenizer_config.json

ADDED

|

@@ -0,0 +1,16 @@

|

| 1 |

+

{

|

| 2 |

+

"cls_token": "[CLS]",

|

| 3 |

+

"do_basic_tokenize": true,

|

| 4 |

+

"do_lower_case": true,

|

| 5 |

+

"mask_token": "[MASK]",

|

| 6 |

+

"name_or_path": "hfl/chinese-roberta-wwm-ext",

|

| 7 |

+

"never_split": null,

|

| 8 |

+

"pad_token": "[PAD]",

|

| 9 |

+

"sep_token": "[SEP]",

|

| 10 |

+

"special_tokens_map_file": "/home/chenweifeng/.cache/huggingface/hub/models--hfl--chinese-roberta-wwm-ext/snapshots/5c58d0b8ec1d9014354d691c538661bf00bfdb44/special_tokens_map.json",

|

| 11 |

+

"strip_accents": null,

|

| 12 |

+

"tokenize_chinese_chars": true,

|

| 13 |

+

"tokenizer_class": "BertTokenizer",

|

| 14 |

+

"unk_token": "[UNK]",

|

| 15 |

+

"model_max_length": 77

|

| 16 |

+

}

|

hunyuan_clip/tokenizer/vocab.txt

ADDED

|

The diff for this file is too large to render.

|

|

|

hunyuan_clip/tokenizer/vocab_org.txt

ADDED

|

The diff for this file is too large to render.

|

|

|

lib/liboptimus_ths-torch2.2-cu121.cpython-310-x86_64-linux-gnu.so

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2201371a93b103f7a5bc87dc8b9f1d781a81fc272d5a8ac8c1a82877aea98661

|

| 3 |

+

size 3994424

|

lib/liboptimus_ths-torch2.3-cu121.cpython-310-x86_64-linux-gnu.so

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:a43a22318a90192fe37e167689bbfda9321b7eeb004f8cbafe671fe99204f1fe

|

| 3 |

+

size 3998552

|

lib/liboptimus_ths-torch2.5-cu124.cpython-310-x86_64-linux-gnu.so

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:ab934f8ca508ec04b252674d3654628b1eea39ce7834f004a71c6fae399b9ce6

|

| 3 |

+

size 4002768

|

model_index.json

ADDED

|

@@ -0,0 +1,12 @@

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "StepVideoPipeline",

|

| 3 |

+

"_diffusers_version": "0.31.0",

|

| 4 |

+

"scheduler": [

|

| 5 |

+

"stepvideo.diffusion.scheduler",

|

| 6 |

+

"FlowMatchDiscreteScheduler"

|

| 7 |

+

],

|

| 8 |

+

"transformer": [

|

| 9 |

+

"stepvideo.modules.model",

|

| 10 |

+

"StepVideoModel"

|

| 11 |

+

]

|

| 12 |

+

}
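
In a Diffusers-style `model_index.json`, each non-underscore key names a pipeline component and maps to a `[module, class]` pair. The sketch below lists the components declared in the file above. `list_components` is a hypothetical helper, and the JSON text is copied from this diff rather than read from disk.

```python
import json

MODEL_INDEX = """
{
  "_class_name": "StepVideoPipeline",
  "_diffusers_version": "0.31.0",
  "scheduler": ["stepvideo.diffusion.scheduler", "FlowMatchDiscreteScheduler"],
  "transformer": ["stepvideo.modules.model", "StepVideoModel"]
}
"""


def list_components(index: dict) -> dict:
    """Map component name -> (module, class), skipping '_' metadata keys."""
    return {
        name: tuple(value)
        for name, value in index.items()
        if not name.startswith("_") and isinstance(value, list) and len(value) == 2
    }


components = list_components(json.loads(MODEL_INDEX))
for name, (module, cls) in components.items():
    print(f"{name}: {module}.{cls}")
```

Note that the text encoders and the VAE do not appear here, which is consistent with the README's description of serving them separately from the DiT.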

|

scheduler/scheduler_config.json

ADDED

|

@@ -0,0 +1,8 @@

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "FlowMatchDiscreteScheduler",

|

| 3 |

+

"_diffusers_version": "0.31.0",

|

| 4 |

+

"device": null,

|

| 5 |

+

"num_train_timesteps": 1000,

|

| 6 |

+

"reverse": false,

|

| 7 |

+

"solver": "euler"

|

| 8 |

+

}
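
The config above names a flow-matching scheduler with an Euler solver. As a rough illustration only, and not the repository's implementation, the sketch below shows what Euler sampling over a shifted noise schedule can look like. `shifted_sigmas` and `euler_step` are hypothetical helpers, and the shift formula is one common choice in flow-matching samplers; that `--time_shift` is applied in exactly this way is an assumption.

```python
def shifted_sigmas(num_steps: int, shift: float) -> list[float]:
    """Noise levels from 1.0 down to 0.0, warped by a time-shift factor.

    Assumption: sigma' = shift * sigma / (1 + (shift - 1) * sigma).
    A larger shift spends more of the steps at high noise levels.
    """
    sigmas = [1.0 - i / num_steps for i in range(num_steps + 1)]
    return [shift * s / (1.0 + (shift - 1.0) * s) for s in sigmas]


def euler_step(x: list[float], velocity: list[float],
               sigma: float, sigma_next: float) -> list[float]:
    """One explicit Euler update along the predicted velocity."""
    return [xi + (sigma_next - sigma) * vi for xi, vi in zip(x, velocity)]


# Toy check: with the exact constant velocity (noise - data), integrating
# from sigma = 1 to sigma = 0 moves the noise sample onto the data sample.
data, noise = [2.0], [-1.0]
velocity = [n - d for n, d in zip(noise, data)]
sigmas = shifted_sigmas(num_steps=4, shift=13.0)
x = noise
for sigma, sigma_next in zip(sigmas[:-1], sigmas[1:]):
    x = euler_step(x, velocity, sigma, sigma_next)
print(x)
```

In a real sampler the velocity would come from the DiT at every step instead of being constant, so the number of steps and the shift both affect the result.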

|

step_llm/config.json

ADDED

|

@@ -0,0 +1,32 @@

|

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "/mnt/shared-storage/tenant/opensource/step_llm",

|

| 3 |

+

"allow_transformer_engine": false,

|

| 4 |

+

"architectures": [

|

| 5 |

+

"Step1Model"

|

| 6 |

+

],

|

| 7 |

+

"attention_dropout": 0.0,

|

| 8 |

+

"attention_impl": "GQA",

|

| 9 |

+

"base_batch_size": 128,

|

| 10 |

+

"embedding_weights_in_fp32": false,

|

| 11 |

+

"ffn_hidden_size": 16896,

|

| 12 |

+

"fp32_residual_connection": false,

|

| 13 |

+

"hidden_dropout": 0.0,

|

| 14 |

+

"hidden_size": 6144,

|

| 15 |

+

"kv_channels": 128,

|

| 16 |

+

"layernorm_epsilon": 1e-05,

|

| 17 |

+

"max_position_embeddings": 16384,

|

| 18 |

+

"num_attention_groups": 8,

|

| 19 |

+

"num_attention_heads": 48,

|

| 20 |

+

"num_layers": 48,

|

| 21 |

+

"orig_vocab_size": 65536,

|

| 22 |

+

"overlap_p2p_comm": true,

|

| 23 |

+

"padded_vocab_size": 65536,

|

| 24 |

+

"params_dtype": "torch.bfloat16",

|

| 25 |

+

"seq_length": 16384,

|

| 26 |

+

"swiglu_recompute_silu_dot": true,

|

| 27 |

+

"tokens_to_generate": 512,

|

| 28 |

+

"torch_dtype": "bfloat16",

|

| 29 |

+

"transformers_version": "4.48.3",

|

| 30 |

+

"use_flash_attn": true,

|

| 31 |

+

"virtual_pipeline_model_parallel_size": 3

|

| 32 |

+

}
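
The attention fields in this config describe grouped-query attention (`"attention_impl": "GQA"`). The sketch below works out the projection widths they imply. It assumes that `num_attention_groups` is the number of key/value heads, that `kv_channels` is the per-head dimension, and that `wqkv` is a single fused projection; `gqa_shapes` is a hypothetical helper.

```python
# Values copied from step_llm/config.json.
CONFIG = {
    "hidden_size": 6144,
    "num_attention_heads": 48,
    "num_attention_groups": 8,
    "kv_channels": 128,
}


def gqa_shapes(cfg: dict) -> dict:
    """Derive attention widths under the stated GQA assumptions."""
    heads = cfg["num_attention_heads"]
    groups = cfg["num_attention_groups"]  # assumed: number of KV heads
    head_dim = cfg["kv_channels"]         # assumed: per-head dimension
    return {
        "query_heads_per_kv_head": heads // groups,
        "q_width": heads * head_dim,
        "kv_width": groups * head_dim,
        # Assumed fused layout: all Q heads, then K and V for each group.
        "fused_qkv_width": (heads + 2 * groups) * head_dim,
    }


shapes = gqa_shapes(CONFIG)
print(shapes)
```

Under these assumptions the query width equals `hidden_size`, while keys and values are six times narrower, which reduces the size of the attention cache accordingly.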

|

step_llm/model-00001-of-00009.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0dc79bad96b5f9fdd56106864c56fdc7955627062de4d023ee99944ddac0c930

|

| 3 |

+

size 4976668520

|

step_llm/model-00002-of-00009.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e2112d1a56602599f5cd9c8f3e80b47a9df5861b5a03c565fbd29f4cbc8b7acc

|

| 3 |

+

size 4794241224

|

step_llm/model-00003-of-00009.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:3a7bd774bd5c30b51ae342ae92ae9d2706d631bf4ee817fd0997ccc1b0b651c3

|

| 3 |

+

size 4794241248

|

step_llm/model-00004-of-00009.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:88d9d688d310ef2098b9f219d7fd84245d13a46b969497de5c111cdd3196a86e

|

| 3 |

+

size 4794241248

|

step_llm/model-00005-of-00009.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:bfe129667571adf93917a54839a7af52f702bd7c7e63aee34912228507d6c1dc

|

| 3 |

+

size 4794241248

|

step_llm/model-00006-of-00009.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:3b4f01bd89d7e047de600579071e6e5e17dfff7aaf8143323ab2989ec840db26

|

| 3 |

+

size 4794241248

|

step_llm/model-00007-of-00009.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:50e9ba842a20b0a0fe9bc00646135b5c82cf544c6fba83aaa3630748f9c9ada9

|

| 3 |

+

size 4794241248

|

step_llm/model-00008-of-00009.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:40c5fd462b5422bdb8665fcb2e4c461cbdbd39f92c9b5e677f87a77044c259af

|

| 3 |

+

size 4794241248

|

step_llm/model-00009-of-00009.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:d707aa08cd795a137e6b18558658e65bd9969a27a8aca34d201e3a1b1a68fa4e

|

| 3 |

+

size 622879216
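
The nine `step_llm` shard sizes can be cross-checked against the `total_size` recorded in `step_llm/model.safetensors.index.json` in this same commit. The sketch below does that arithmetic with the values copied from the LFS pointers. Two things are assumptions rather than stated facts: that the small gap is the per-file safetensors header overhead, and that all tensors are stored as 2-byte bfloat16 (taken from `torch_dtype` in the config).

```python
# Sizes in bytes, copied from the nine LFS pointers for step_llm/model-0000N-of-00009.
SHARD_SIZES = [
    4976668520,
    4794241224,
    4794241248,
    4794241248,
    4794241248,
    4794241248,
    4794241248,
    4794241248,
    622879216,
]

# "total_size" from step_llm/model.safetensors.index.json.
INDEX_TOTAL_SIZE = 39159201792

on_disk = sum(SHARD_SIZES)
overhead = on_disk - INDEX_TOTAL_SIZE

# Assumption: every tensor is bfloat16, i.e. 2 bytes per parameter.
approx_params = INDEX_TOTAL_SIZE // 2

print(f"on disk: {on_disk} bytes")
print(f"non-tensor overhead: {overhead} bytes across {len(SHARD_SIZES)} files")
print(f"approx. parameters: {approx_params / 1e9:.1f}B")
```

A download whose shard sizes do not add up to slightly more than `total_size` is likely incomplete.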

|

step_llm/model.safetensors.index.json

ADDED

|

@@ -0,0 +1,296 @@

|

|

+{
+  "metadata": {
+    "total_size": 39159201792
+  },
+  "weight_map": {
+    "tok_embeddings.word_embeddings.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.0.attention.wo.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.0.attention.wqkv.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.0.attention_norm.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.0.feed_forward.w1.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.0.feed_forward.w2.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.0.ffn_norm.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.1.attention.wo.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.1.attention.wqkv.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.1.attention_norm.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.1.feed_forward.w1.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.1.feed_forward.w2.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.1.ffn_norm.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.10.attention.wo.weight": "model-00002-of-00009.safetensors",
+    "transformer.layers.10.attention.wqkv.weight": "model-00002-of-00009.safetensors",
+    "transformer.layers.10.attention_norm.weight": "model-00002-of-00009.safetensors",
+    "transformer.layers.10.feed_forward.w1.weight": "model-00002-of-00009.safetensors",
+    "transformer.layers.10.feed_forward.w2.weight": "model-00002-of-00009.safetensors",
+    "transformer.layers.10.ffn_norm.weight": "model-00002-of-00009.safetensors",
+    "transformer.layers.11.attention.wo.weight": "model-00002-of-00009.safetensors",
+    "transformer.layers.11.attention.wqkv.weight": "model-00002-of-00009.safetensors",
+    "transformer.layers.11.attention_norm.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.11.feed_forward.w1.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.11.feed_forward.w2.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.11.ffn_norm.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.12.attention.wo.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.12.attention.wqkv.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.12.attention_norm.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.12.feed_forward.w1.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.12.feed_forward.w2.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.12.ffn_norm.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.13.attention.wo.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.13.attention.wqkv.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.13.attention_norm.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.13.feed_forward.w1.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.13.feed_forward.w2.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.13.ffn_norm.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.14.attention.wo.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.14.attention.wqkv.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.14.attention_norm.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.14.feed_forward.w1.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.14.feed_forward.w2.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.14.ffn_norm.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.15.attention.wo.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.15.attention.wqkv.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.15.attention_norm.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.15.feed_forward.w1.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.15.feed_forward.w2.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.15.ffn_norm.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.16.attention.wo.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.16.attention.wqkv.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.16.attention_norm.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.16.feed_forward.w1.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.16.feed_forward.w2.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.16.ffn_norm.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.17.attention.wo.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.17.attention.wqkv.weight": "model-00003-of-00009.safetensors",
+    "transformer.layers.17.attention_norm.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.17.feed_forward.w1.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.17.feed_forward.w2.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.17.ffn_norm.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.18.attention.wo.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.18.attention.wqkv.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.18.attention_norm.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.18.feed_forward.w1.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.18.feed_forward.w2.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.18.ffn_norm.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.19.attention.wo.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.19.attention.wqkv.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.19.attention_norm.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.19.feed_forward.w1.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.19.feed_forward.w2.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.19.ffn_norm.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.2.attention.wo.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.2.attention.wqkv.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.2.attention_norm.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.2.feed_forward.w1.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.2.feed_forward.w2.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.2.ffn_norm.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.20.attention.wo.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.20.attention.wqkv.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.20.attention_norm.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.20.feed_forward.w1.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.20.feed_forward.w2.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.20.ffn_norm.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.21.attention.wo.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.21.attention.wqkv.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.21.attention_norm.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.21.feed_forward.w1.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.21.feed_forward.w2.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.21.ffn_norm.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.22.attention.wo.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.22.attention.wqkv.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.22.attention_norm.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.22.feed_forward.w1.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.22.feed_forward.w2.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.22.ffn_norm.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.23.attention.wo.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.23.attention.wqkv.weight": "model-00004-of-00009.safetensors",
+    "transformer.layers.23.attention_norm.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.23.feed_forward.w1.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.23.feed_forward.w2.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.23.ffn_norm.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.24.attention.wo.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.24.attention.wqkv.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.24.attention_norm.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.24.feed_forward.w1.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.24.feed_forward.w2.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.24.ffn_norm.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.25.attention.wo.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.25.attention.wqkv.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.25.attention_norm.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.25.feed_forward.w1.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.25.feed_forward.w2.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.25.ffn_norm.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.26.attention.wo.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.26.attention.wqkv.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.26.attention_norm.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.26.feed_forward.w1.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.26.feed_forward.w2.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.26.ffn_norm.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.27.attention.wo.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.27.attention.wqkv.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.27.attention_norm.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.27.feed_forward.w1.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.27.feed_forward.w2.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.27.ffn_norm.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.28.attention.wo.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.28.attention.wqkv.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.28.attention_norm.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.28.feed_forward.w1.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.28.feed_forward.w2.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.28.ffn_norm.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.29.attention.wo.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.29.attention.wqkv.weight": "model-00005-of-00009.safetensors",
+    "transformer.layers.29.attention_norm.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.29.feed_forward.w1.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.29.feed_forward.w2.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.29.ffn_norm.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.3.attention.wo.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.3.attention.wqkv.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.3.attention_norm.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.3.feed_forward.w1.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.3.feed_forward.w2.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.3.ffn_norm.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.30.attention.wo.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.30.attention.wqkv.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.30.attention_norm.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.30.feed_forward.w1.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.30.feed_forward.w2.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.30.ffn_norm.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.31.attention.wo.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.31.attention.wqkv.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.31.attention_norm.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.31.feed_forward.w1.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.31.feed_forward.w2.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.31.ffn_norm.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.32.attention.wo.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.32.attention.wqkv.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.32.attention_norm.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.32.feed_forward.w1.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.32.feed_forward.w2.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.32.ffn_norm.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.33.attention.wo.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.33.attention.wqkv.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.33.attention_norm.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.33.feed_forward.w1.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.33.feed_forward.w2.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.33.ffn_norm.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.34.attention.wo.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.34.attention.wqkv.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.34.attention_norm.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.34.feed_forward.w1.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.34.feed_forward.w2.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.34.ffn_norm.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.35.attention.wo.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.35.attention.wqkv.weight": "model-00006-of-00009.safetensors",
+    "transformer.layers.35.attention_norm.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.35.feed_forward.w1.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.35.feed_forward.w2.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.35.ffn_norm.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.36.attention.wo.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.36.attention.wqkv.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.36.attention_norm.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.36.feed_forward.w1.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.36.feed_forward.w2.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.36.ffn_norm.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.37.attention.wo.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.37.attention.wqkv.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.37.attention_norm.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.37.feed_forward.w1.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.37.feed_forward.w2.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.37.ffn_norm.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.38.attention.wo.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.38.attention.wqkv.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.38.attention_norm.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.38.feed_forward.w1.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.38.feed_forward.w2.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.38.ffn_norm.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.39.attention.wo.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.39.attention.wqkv.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.39.attention_norm.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.39.feed_forward.w1.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.39.feed_forward.w2.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.39.ffn_norm.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.4.attention.wo.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.4.attention.wqkv.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.4.attention_norm.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.4.feed_forward.w1.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.4.feed_forward.w2.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.4.ffn_norm.weight": "model-00001-of-00009.safetensors",
+    "transformer.layers.40.attention.wo.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.40.attention.wqkv.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.40.attention_norm.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.40.feed_forward.w1.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.40.feed_forward.w2.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.40.ffn_norm.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.41.attention.wo.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.41.attention.wqkv.weight": "model-00007-of-00009.safetensors",
+    "transformer.layers.41.attention_norm.weight": "model-00008-of-00009.safetensors",
+    "transformer.layers.41.feed_forward.w1.weight": "model-00008-of-00009.safetensors",
+    "transformer.layers.41.feed_forward.w2.weight": "model-00008-of-00009.safetensors",
+    "transformer.layers.41.ffn_norm.weight": "model-00008-of-00009.safetensors",
+    "transformer.layers.42.attention.wo.weight": "model-00008-of-00009.safetensors",
+    "transformer.layers.42.attention.wqkv.weight": "model-00008-of-00009.safetensors",

|

| 231 |

+