
Commit 022b5a1

Parent(s): 7ec27e8

Working with most parameters

- app.py +115 -18
- examples/bsZTs.jpg +0 -0
- examples/cans.png +0 -0
- examples/gboriginal.jpg +0 -0
- examples/pose.png +3 -0
- examples/singlemarkerssource.jpg +0 -0

app.py CHANGED

Old version:

```diff
@@ -5,9 +5,35 @@ import cv2
 import gradio as gr
 import os

-dict_list = ['DICT_4X4_50',

-def inference(image_path, dict_name):
     if not dict_name:
         raise gr.Error("No model selected. Please select a model.")
     if not image_path:
@@ -17,17 +43,47 @@ def inference(image_path, dict_name):
     aruco_dict = cv2.aruco.getPredefinedDictionary(dict_index)

     aruco_params = cv2.aruco.DetectorParameters()
     detector = cv2.aruco.ArucoDetector(aruco_dict, aruco_params)
     image = cv2.imread(image_path)

     corners, ids, rejectedImgPoints = detector.detectMarkers(image)
     image = cv2.aruco.drawDetectedMarkers(image, corners, ids, borderColor=(0, 255, 0))
     for corner in corners:
         cv2.polylines(image, [corner.astype(int)], isClosed=True, color=(0, 255, 0), thickness=3)
     cv2.imwrite("output.jpg", image)
     output_image = cv2.cvtColor(cv2.imread("output.jpg"), cv2.COLOR_BGR2RGB)
-
-    return output_image

 def get_aruco_dict():
     #PREDEFINED_DICTIONARY_NAME
@@ -35,22 +91,63 @@ def get_aruco_dict():

 aruco_dict = get_aruco_dict()

-image_paths= [['examples/cans.png', 'DICT_4X4_50',
-              ['examples/image4k.png', 'DICT_4X4_50',
 ]

-
-
-
-
-

-
-
-
-
-    examples=image_paths,
-
-

 demo.launch()
```

New version:

```diff
@@ -5,9 +5,35 @@ import cv2
 import gradio as gr
 import os

+dict_list = ['DICT_4X4_50',
+             'DICT_4X4_100',
+             'DICT_4X4_250',
+             'DICT_4X4_1000',
+             'DICT_5X5_50',
+             'DICT_5X5_100',
+             'DICT_5X5_250',
+             'DICT_5X5_1000',
+             'DICT_6X6_50',
+             'DICT_6X6_100',
+             'DICT_6X6_250',
+             'DICT_6X6_1000',
+             'DICT_7X7_50',
+             'DICT_7X7_100',
+             'DICT_7X7_250',
+             'DICT_7X7_1000',
+             'DICT_ARUCO_ORIGINAL',
+             'DICT_APRILTAG_16h5',
+             'DICT_APRILTAG_25h9',
+             'DICT_APRILTAG_36h10',
+             'DICT_APRILTAG_36h11',
+             'DICT_ARUCO_MIP_36h12']

+def inference(image_path, dict_name, draw_rejects,
+              adaptiveThreshWinSizeMin, adaptiveThreshWinSizeMax, adaptiveThreshWinSizeStep, adaptiveThreshConstant,
+              minMarkerPerimeterRate, maxMarkerPerimeterRate,
+              polygonalApproxAccuracyRate, minCornerDistanceRate, minDistanceToBorder, minMarkerDistanceRate,
+              cornerRefinementMethod, cornerRefinementWinSize, cornerRefinementMaxIterations, cornerRefinementMinAccuracy,
+              markerBoderBits, perspectiveRemovePixelPerCell, perspectiveRemoveIgnoredMarginPerCell, maxErroneousBitsInBorderRate, minOtsuStdDev, errorCorrectionRate):
     if not dict_name:
         raise gr.Error("No model selected. Please select a model.")
     if not image_path:
```

```diff
@@ -17,17 +43,47 @@ def inference(image_path, dict_name):
     aruco_dict = cv2.aruco.getPredefinedDictionary(dict_index)

     aruco_params = cv2.aruco.DetectorParameters()
+
+    aruco_params.adaptiveThreshWinSizeMin = int(adaptiveThreshWinSizeMin)
+    aruco_params.adaptiveThreshWinSizeMax = int(adaptiveThreshWinSizeMax)
+    aruco_params.adaptiveThreshWinSizeStep = int(adaptiveThreshWinSizeStep)
+    aruco_params.adaptiveThreshConstant = int(adaptiveThreshConstant)
+    aruco_params.minMarkerPerimeterRate = minMarkerPerimeterRate
+    aruco_params.maxMarkerPerimeterRate = maxMarkerPerimeterRate
+    aruco_params.polygonalApproxAccuracyRate = polygonalApproxAccuracyRate
+    aruco_params.minCornerDistanceRate = minCornerDistanceRate
+    aruco_params.minDistanceToBorder = minDistanceToBorder
+    aruco_params.minMarkerDistanceRate = minMarkerDistanceRate
+
+
+    aruco_params.cornerRefinementMethod = cornerRefinementMethods.index(cornerRefinementMethod)
+    aruco_params.cornerRefinementWinSize = int(cornerRefinementWinSize)
+    aruco_params.cornerRefinementMaxIterations = int(cornerRefinementMaxIterations)
+    aruco_params.cornerRefinementMinAccuracy = cornerRefinementMinAccuracy
+
+    aruco_params.markerBorderBits = int(markerBoderBits)
+    aruco_params.perspectiveRemovePixelPerCell = int(perspectiveRemovePixelPerCell)
+    aruco_params.perspectiveRemoveIgnoredMarginPerCell = perspectiveRemoveIgnoredMarginPerCell
+    aruco_params.maxErroneousBitsInBorderRate = maxErroneousBitsInBorderRate
+    aruco_params.minOtsuStdDev = minOtsuStdDev
+    aruco_params.errorCorrectionRate = errorCorrectionRate
+
+
```
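The thresholding and contour-filter parameters assigned above are converted to pixel quantities inside the detector. The sketch below reproduces that arithmetic as I understand it from OpenCV's ArUco source; the function names are hypothetical and not part of OpenCV. The assumption is that the detector thresholds the image once per window size, going from `adaptiveThreshWinSizeMin` to `adaptiveThreshWinSizeMax` in steps of `adaptiveThreshWinSizeStep` and bumping even sizes to the next odd number. It then keeps only contours whose perimeter lies between the two perimeter rates multiplied by the longer image side.

```python
# Sketch of the detector's parameter arithmetic.
# ASSUMPTION: mirrors my reading of OpenCV's aruco detector source; not an OpenCV API.

def threshold_window_sizes(win_min, win_max, win_step):
    """Window sizes tried by the multi-scale adaptive threshold (each made odd)."""
    win_min, win_max, win_step = int(win_min), int(win_max), int(win_step)
    if win_min < 3 or win_max < 3:
        raise ValueError("adaptive threshold window sizes must be >= 3")
    if win_max < win_min:
        raise ValueError("adaptiveThreshWinSizeMax must be >= adaptiveThreshWinSizeMin")
    if win_step <= 0:
        raise ValueError("adaptiveThreshWinSizeStep must be > 0")
    n_scales = (win_max - win_min) // win_step + 1
    sizes = []
    for i in range(n_scales):
        size = win_min + i * win_step
        # adaptiveThreshold needs an odd block size, so even sizes are bumped up
        sizes.append(size + 1 if size % 2 == 0 else size)
    return sizes


def perimeter_bounds(width, height, min_rate, max_rate):
    """Contour perimeter limits in pixels, relative to the longer image side."""
    longest = max(width, height)
    return int(min_rate * longest), int(max_rate * longest)
```

With the values in `image_paths` (min 3, max 23, step 4), this gives six thresholding passes with windows 3, 7, 11, 15, 19 and 23. `thresh_max_slider` allows values down to 0, which the validation here rejects; I believe OpenCV asserts the same condition, but treat that as an assumption.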

```diff
     detector = cv2.aruco.ArucoDetector(aruco_dict, aruco_params)
     image = cv2.imread(image_path)

     corners, ids, rejectedImgPoints = detector.detectMarkers(image)
+    thresh_image = cv2.adaptiveThreshold(cv2.cvtColor(image, cv2.COLOR_BGR2GRAY), 255, cv2.ADAPTIVE_THRESH_GAUSSIAN_C, cv2.THRESH_BINARY, int(adaptiveThreshConstant), 2)
     image = cv2.aruco.drawDetectedMarkers(image, corners, ids, borderColor=(0, 255, 0))
+    if draw_rejects:
+        image = cv2.aruco.drawDetectedMarkers(image, rejectedImgPoints, borderColor=(0, 0, 255))
+
```
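The added `thresh_image` preview does not show what the detector actually thresholds. In `cv2.adaptiveThreshold(src, maxValue, adaptiveMethod, thresholdType, blockSize, C)` the fifth argument is the block size. The committed call therefore uses `adaptiveThreshConstant` as the window size and a fixed `2` as the constant, and an even slider value would raise an error because the block size must be odd. Below is a minimal sketch of a closer preview. It assumes the detector uses `ADAPTIVE_THRESH_MEAN_C` with `THRESH_BINARY_INV`, an odd window size, and `adaptiveThreshConstant` as C; this is my reading of the OpenCV source, not a documented guarantee. `preview_threshold_args` and `threshold_preview` are hypothetical helpers.

```python
# Hypothetical helpers for a threshold preview closer to one detector pass.
# ASSUMPTION: the ArUco detector uses ADAPTIVE_THRESH_MEAN_C + THRESH_BINARY_INV,
# blockSize = window size (made odd) and C = adaptiveThreshConstant.

def preview_threshold_args(win_size, constant):
    """Arguments for cv2.adaptiveThreshold that match one detector scale."""
    block_size = int(win_size)
    if block_size % 2 == 0:
        block_size += 1  # adaptiveThreshold requires an odd block size
    if block_size < 3:
        raise ValueError("block size must be >= 3")
    return {"maxValue": 255, "blockSize": block_size, "C": float(constant)}


def threshold_preview(image_bgr, win_size, constant):
    """Binary image for a single window size (needs OpenCV at call time)."""
    import cv2  # imported lazily so preview_threshold_args works without OpenCV

    gray = cv2.cvtColor(image_bgr, cv2.COLOR_BGR2GRAY)
    args = preview_threshold_args(win_size, constant)
    return cv2.adaptiveThreshold(
        gray,
        args["maxValue"],
        cv2.ADAPTIVE_THRESH_MEAN_C,
        cv2.THRESH_BINARY_INV,
        args["blockSize"],
        args["C"],
    )
```

Inside `inference`, `threshold_preview(image, adaptiveThreshWinSizeMin, adaptiveThreshConstant)` could replace the committed call. It must run before the markers are drawn onto `image`, as the committed preview does.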

```diff
     for corner in corners:
         cv2.polylines(image, [corner.astype(int)], isClosed=True, color=(0, 255, 0), thickness=3)
     cv2.imwrite("output.jpg", image)
     output_image = cv2.cvtColor(cv2.imread("output.jpg"), cv2.COLOR_BGR2RGB)
+    # TODO make a gif going through the thresh win size
+    return output_image, thresh_image

 def get_aruco_dict():
     #PREDEFINED_DICTIONARY_NAME
```
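The function still writes the annotated frame to `output.jpg` and reads it back only to convert BGR to RGB. JPEG is lossy, so this round trip adds compression artifacts, and a fixed file name could be overwritten if two requests run at the same time. Calling `cv2.cvtColor(image, cv2.COLOR_BGR2RGB)` on the in-memory array avoids the disk entirely. For a 3-channel image, that conversion amounts to reversing the channel axis, as this small NumPy sketch (hypothetical helper `bgr_to_rgb`) shows.

```python
import numpy as np


def bgr_to_rgb(image_bgr):
    """Reverse the channel axis of an H x W x 3 BGR array, without touching disk."""
    if image_bgr.ndim != 3 or image_bgr.shape[2] != 3:
        raise ValueError("expected an H x W x 3 image")
    # ascontiguousarray turns the reversed view into a normal C-ordered copy
    return np.ascontiguousarray(image_bgr[..., ::-1])
```

In `inference`, the `imwrite`/`imread` pair would then reduce to `output_image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)`.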

|

|

|

|

| 91 |

|

| 92 |

aruco_dict = get_aruco_dict()

|

| 93 |

|

| 94 |

+

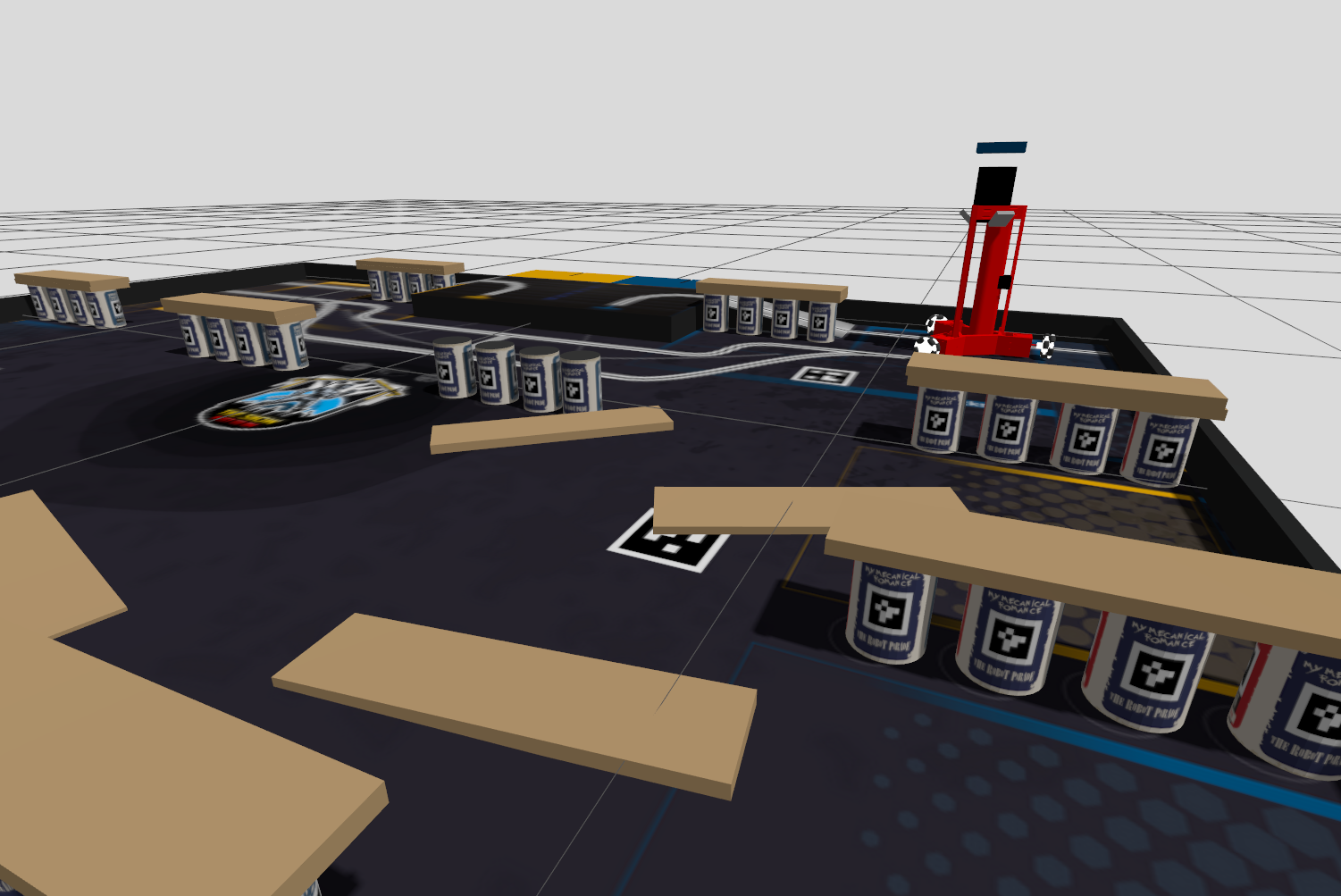

image_paths= [['examples/cans.png', 'DICT_4X4_50', 3, 23, 4, 7],

|

| 95 |

+

['examples/image4k.png', 'DICT_4X4_50', 3, 23, 4, 7],

|

| 96 |

+

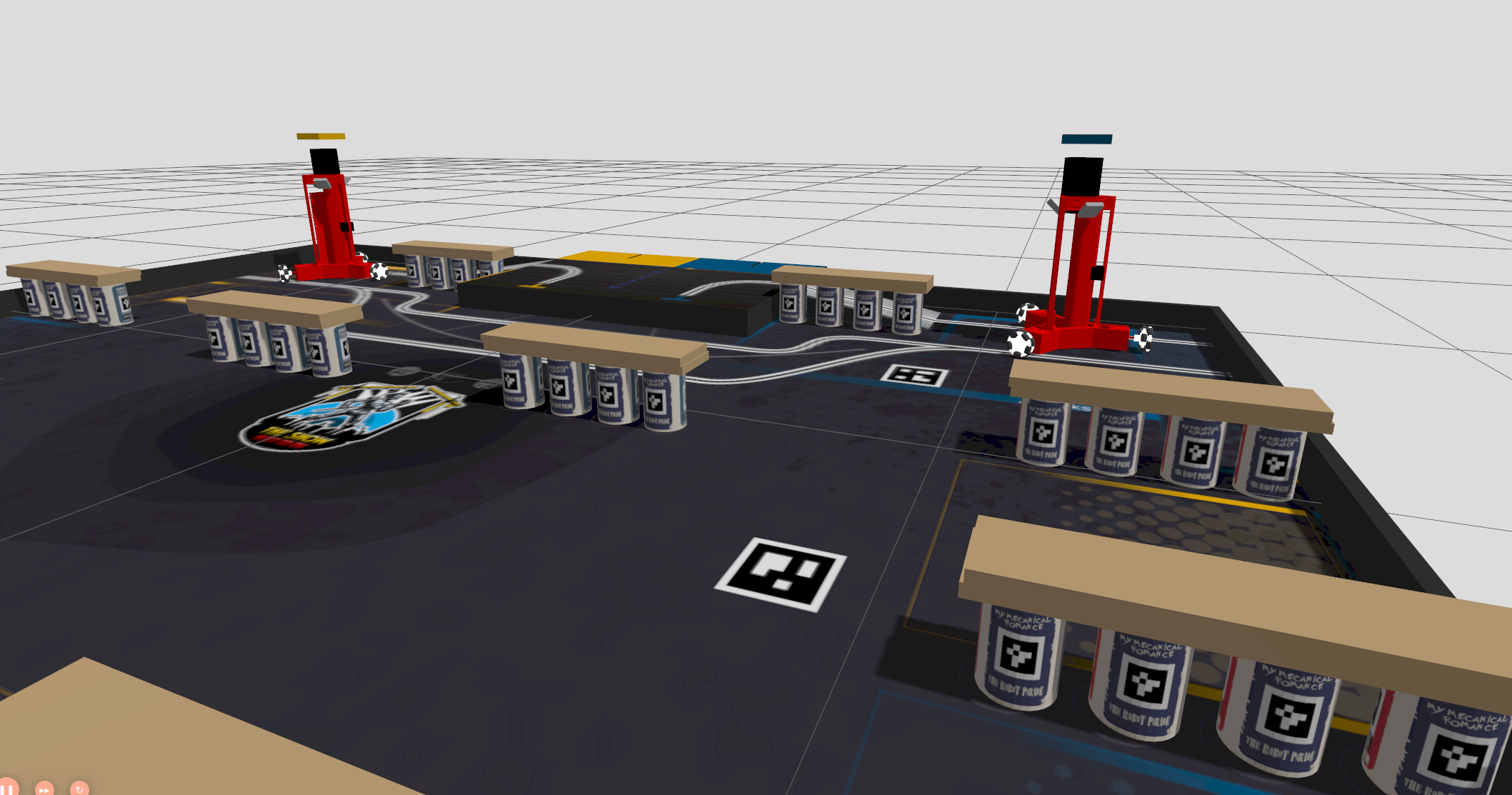

['examples/pose.png', 'DICT_5X5_1000', 3, 23, 4, 7],

|

| 97 |

+

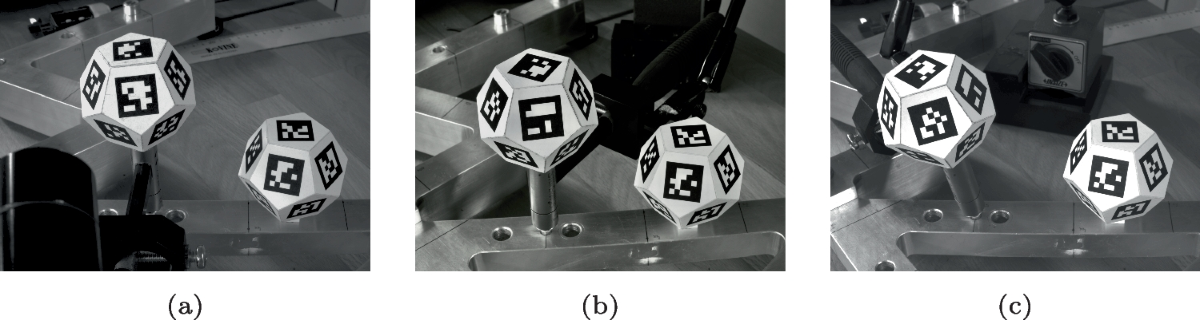

['examples/singlemarkerssource.jpg', 'DICT_6X6_250', 3, 23, 4, 7],

|

| 98 |

]

|

| 99 |

|

| 100 |

+

cornerRefinementMethods = ["CORNER_REFINE_NONE", "CORNER_REFINE_SUBPIX", "CORNER_REFINE_CONTOUR", "CORNER_REFINE_APRILTAG"]

|
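`aruco_params.cornerRefinementMethod = cornerRefinementMethods.index(cornerRefinementMethod)` relies on this list matching OpenCV's enum values, which I believe are `CORNER_REFINE_NONE` = 0, `CORNER_REFINE_SUBPIX` = 1, `CORNER_REFINE_CONTOUR` = 2 and `CORNER_REFINE_APRILTAG` = 3. An explicit lookup makes that assumption visible and fails with a clear message on an unknown label. `corner_refine_id` is a hypothetical helper; passing `cv2.aruco` reads the constant from OpenCV instead.

```python
# Hypothetical lookup from the radio-button label to OpenCV's enum value.
# ASSUMPTION: cv2.aruco.CORNER_REFINE_NONE/SUBPIX/CONTOUR/APRILTAG are 0/1/2/3,
# which is what cornerRefinementMethods.index(...) also assumes.
CORNER_REFINE_IDS = {
    "CORNER_REFINE_NONE": 0,
    "CORNER_REFINE_SUBPIX": 1,
    "CORNER_REFINE_CONTOUR": 2,
    "CORNER_REFINE_APRILTAG": 3,
}


def corner_refine_id(label, aruco_module=None):
    """Resolve a refinement-method label, preferring the library's own constant."""
    if aruco_module is not None:
        return getattr(aruco_module, label)
    try:
        return CORNER_REFINE_IDS[label]
    except KeyError:
        raise ValueError(f"unknown corner refinement method: {label!r}") from None
```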

| 101 |

+

|

| 102 |

+

|

| 103 |

+

with gr.Blocks() as demo:

|

| 104 |

+

gr.Markdown("# Aruco tag detection\nSelect the aruco library, upload an image, and detect the aruco tags.")

|

| 105 |

+

|

| 106 |

+

with gr.Row():

|

| 107 |

+

with gr.Column():

|

| 108 |

+

image_input = gr.Image(type="filepath", label="Upload Image")

|

| 109 |

+

dict_dropdown = gr.Dropdown(choices=aruco_dict, label="Select aruco library")

|

| 110 |

+

advanced_params = gr.Accordion("Advanced Parameters", open=False)

|

| 111 |

+

with advanced_params:

|

| 112 |

+

rejects_radio = gr.Checkbox(label="Show Rejects", value=False)

|

| 113 |

+

thresh_min_slider = gr.Slider(minimum=3, maximum=100, step=1, value=3, label="adapatativeThreshWinSizeMin")

|

| 114 |

+

thresh_max_slider = gr.Slider(minimum=0, maximum=100, step=1, value=23, label="adapatativeThreshWinSizeMax")

|

| 115 |

+

thresh_step_slider = gr.Slider(minimum=1, maximum=100, step=1, value=10, label="adapatativeThreshWinSizeStep")

|

| 116 |

+

thresh_const_slider = gr.Slider(minimum=0, maximum=50, step=1, value=7, label="adapatativeThreshConstant")

|

| 117 |

+

|

| 118 |

+

min_marker_p_slider = gr.Slider(minimum=0, maximum=1, step=0.01, value=0.03, label="minMarkerPerimeterRate")

|

| 119 |

+

max_marker_p_slider = gr.Slider(minimum=0, maximum=10, step=0.01, value=4.0, label="maxMarkerPerimeterRate")

|

| 120 |

+

poly_approx_acc_rate_slider = gr.Slider(minimum=0, maximum=1, step=0.01, value=0.05, label="polygonalApproxAccuracyRate")

|

| 121 |

+

min_corner_distance_rate_slider = gr.Slider(minimum=0, maximum=1, step=0.01, value=0.05, label="minCornerDistanceRate")

|

| 122 |

+

min_distance_to_border_slider = gr.Slider(minimum=0, maximum=25, step=1, value=3, label="minDistanceToBorder")

|

| 123 |

+

min_marker_distance_rate_slider = gr.Slider(minimum=0, maximum=1, step=0.01, value=0.05, label="minMarkerDistanceRate")

|

| 124 |

+

|

| 125 |

+

cornerRefinementMethod_radio = gr.Radio(

|

| 126 |

+

choices=cornerRefinementMethods,

|

| 127 |

+

label="cornerRefinementMethod", value=cornerRefinementMethods[0]

|

| 128 |

+

)

|

| 129 |

+

cornerRefinementWinSize_slider = gr.Slider(minimum=0, maximum=20, step=1, value=5, label="cornerRefinementWinSize")

|

| 130 |

+

cornerRefinementMaxIterations_slider = gr.Slider(minimum=1, maximum=50, step=1, value=30, label="cornerRefinementMaxIterations")

|

| 131 |

+

cornerRefinementMinAccuracy_slider = gr.Slider(minimum=0.01, maximum=2, step=0.01, value=0.1, label="cornerRefinementMinAccuracy")

|

| 132 |

+

markerBoderBits_slider = gr.Slider(minimum=1, maximum=100, step=1, value=1, label="markerBoderBits")

|

| 133 |

+

perspectiveRemovePixelPerCell_slider = gr.Slider(minimum=0, maximum=100, step=1, value=8, label="perspectiveRemovePixelPerCell")

|

| 134 |

+

perspectiveRemoveIgnoredMarginPerCell_slider = gr.Slider(minimum=0, maximum=1, step=0.01, value=0.13, label="perspectiveRemoveIgnoredMarginPerCell")

|

| 135 |

+

maxErroneousBitsInBorderRate_slider = gr.Slider(minimum=0, maximum=1, step=0.01, value=0.04, label="maxErroneousBitsInBorderRate")

|

| 136 |

+

minOtsuStdDev_slider = gr.Slider(minimum=0, maximum=10, step=0.1, value=5.0, label="minOtsuStdDev")

|

| 137 |

+

errorCorrectionRate_slider = gr.Slider(minimum=0, maximum=1, step=0.01, value=0.6, label="errorCorrectionRate")

|

| 138 |

+

|

| 139 |

+

|

| 140 |

+

submit_button = gr.Button("Submit")

|

| 141 |

|

| 142 |

+

with gr.Column():

|

| 143 |

+

output_image = gr.Image(type="numpy", label="Output Image")

|

| 144 |

+

thresh_image = gr.Image(type="numpy", label="Thresh Image")

|

| 145 |

+

|

| 146 |

+

examples = gr.Examples(examples=image_paths, inputs=[image_input, dict_dropdown, thresh_min_slider, thresh_max_slider, thresh_step_slider, thresh_const_slider], outputs=[output_image, thresh_image])

|

| 147 |

+

submit_button.click(

|

| 148 |

+

inference,

|

| 149 |

+

inputs=[image_input, dict_dropdown, rejects_radio, thresh_min_slider, thresh_max_slider, thresh_step_slider, thresh_const_slider, min_marker_p_slider, max_marker_p_slider, poly_approx_acc_rate_slider, min_corner_distance_rate_slider, min_distance_to_border_slider, min_marker_distance_rate_slider, cornerRefinementMethod_radio, cornerRefinementWinSize_slider, cornerRefinementMaxIterations_slider, cornerRefinementMinAccuracy_slider, markerBoderBits_slider, perspectiveRemovePixelPerCell_slider, perspectiveRemoveIgnoredMarginPerCell_slider, maxErroneousBitsInBorderRate_slider, minOtsuStdDev_slider, errorCorrectionRate_slider],

|

| 150 |

+

outputs=[output_image, thresh_image]

|

| 151 |

+

)

|

| 152 |

|

| 153 |

demo.launch()

|

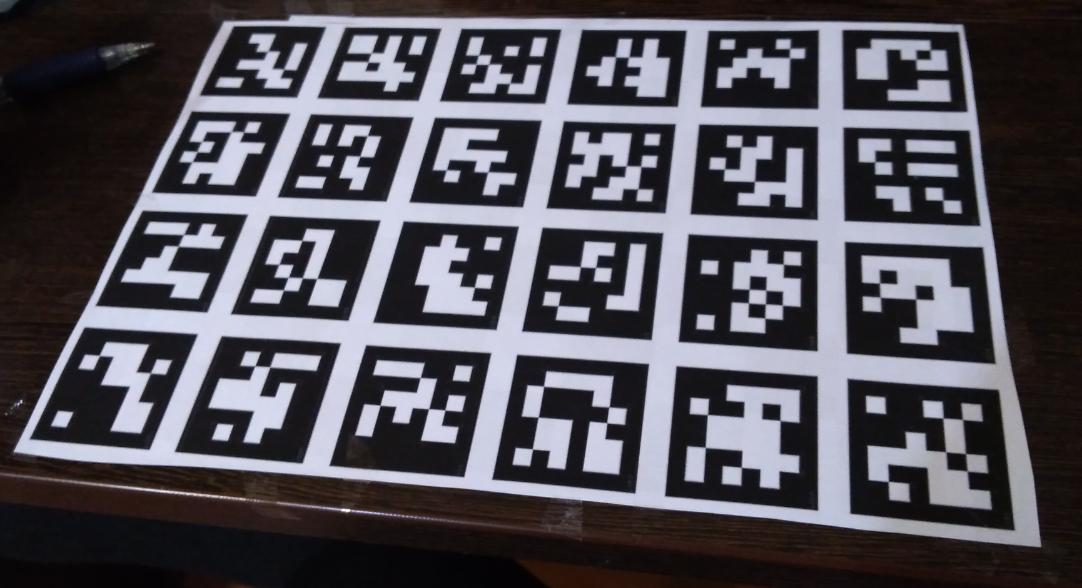
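The 23 positional inputs to `submit_button.click` must stay in exactly the same order as the parameters of `inference`, and each value is cast by hand. The argument is also spelled `markerBoderBits` while the OpenCV attribute is `markerBorderBits`. One way to keep the sliders, the casts and the assignments in sync is a single spec table keyed by the `DetectorParameters` attribute name. This is a sketch under the following assumptions. The ranges and defaults are copied from the sliders in this commit, except that `adaptiveThreshWinSizeMax` starts at 3. The int/float types follow OpenCV's field declarations as I understand them; for example, `minDistanceToBorder` is an int, yet the commit assigns it without a cast. The corner-refinement radio stays separate. `PARAM_SPECS`, `values_from_args` and `apply_params` are hypothetical names.

```python
from types import SimpleNamespace

# Hypothetical spec table: DetectorParameters attribute -> (type, min, max, step, default).
# ASSUMPTION: ranges/defaults copied from this commit's sliders (WinSizeMax min raised to 3);
# int/float types follow OpenCV's declarations as I understand them.
PARAM_SPECS = {
    "adaptiveThreshWinSizeMin": (int, 3, 100, 1, 3),
    "adaptiveThreshWinSizeMax": (int, 3, 100, 1, 23),
    "adaptiveThreshWinSizeStep": (int, 1, 100, 1, 10),
    "adaptiveThreshConstant": (float, 0, 50, 1, 7),
    "minMarkerPerimeterRate": (float, 0, 1, 0.01, 0.03),
    "maxMarkerPerimeterRate": (float, 0, 10, 0.01, 4.0),
    "polygonalApproxAccuracyRate": (float, 0, 1, 0.01, 0.05),
    "minCornerDistanceRate": (float, 0, 1, 0.01, 0.05),
    "minDistanceToBorder": (int, 0, 25, 1, 3),
    "minMarkerDistanceRate": (float, 0, 1, 0.01, 0.05),
    "cornerRefinementWinSize": (int, 0, 20, 1, 5),
    "cornerRefinementMaxIterations": (int, 1, 50, 1, 30),
    "cornerRefinementMinAccuracy": (float, 0.01, 2, 0.01, 0.1),
    "markerBorderBits": (int, 1, 100, 1, 1),
    "perspectiveRemovePixelPerCell": (int, 0, 100, 1, 8),
    "perspectiveRemoveIgnoredMarginPerCell": (float, 0, 1, 0.01, 0.13),
    "maxErroneousBitsInBorderRate": (float, 0, 1, 0.01, 0.04),
    "minOtsuStdDev": (float, 0, 10, 0.1, 5.0),
    "errorCorrectionRate": (float, 0, 1, 0.01, 0.6),
}


def values_from_args(args):
    """Gradio passes inputs positionally; pair them with PARAM_SPECS order."""
    names = list(PARAM_SPECS)
    if len(args) != len(names):
        raise ValueError(f"expected {len(names)} values, got {len(args)}")
    return dict(zip(names, args))


def apply_params(params, values):
    """Cast each value by its spec, range-check it, and set it on `params`."""
    for name, raw in values.items():
        kind, lo, hi, _step, _default = PARAM_SPECS[name]
        value = kind(raw)
        if not lo <= value <= hi:
            raise ValueError(f"{name}={value} is outside [{lo}, {hi}]")
        setattr(params, name, value)
    return params


# In the app (sketch, not executed here):
#   sliders = [gr.Slider(minimum=lo, maximum=hi, step=step, value=default, label=name)
#              for name, (_kind, lo, hi, step, default) in PARAM_SPECS.items()]
#   params = apply_params(cv2.aruco.DetectorParameters(), values_from_args(slider_values))

if __name__ == "__main__":
    defaults = [spec[-1] for spec in PARAM_SPECS.values()]
    demo_params = apply_params(SimpleNamespace(), values_from_args(defaults))
    print(demo_params.adaptiveThreshWinSizeMax)
```

A `SimpleNamespace` stands in for `DetectorParameters` in the demo so the table can be checked without OpenCV. The slider labels then match the attribute names automatically, which also removes the `adapatative` typos in the current labels.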

examples/bsZTs.jpg ADDED

examples/cans.png CHANGED (Git LFS)

examples/gboriginal.jpg ADDED

examples/pose.png ADDED (Git LFS)

examples/singlemarkerssource.jpg ADDED