initial commit

- .gitattributes +4 -0

- bee.JPG +3 -0

- bee_edited.jpg +3 -0

- dinov3_keypoint_demo.py +192 -0

- map.jpg +3 -0

- requirements.txt +6 -0

- street.jpg +3 -0

.gitattributes

CHANGED

@@ -33,3 +33,7 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+bee_edited.jpg filter=lfs diff=lfs merge=lfs -text
+bee.JPG filter=lfs diff=lfs merge=lfs -text
+map.jpg filter=lfs diff=lfs merge=lfs -text
+street.jpg filter=lfs diff=lfs merge=lfs -text

bee.JPG

ADDED

Git LFS Details

bee_edited.jpg

ADDED

Git LFS Details

dinov3_keypoint_demo.py

ADDED

@@ -0,0 +1,192 @@
+import gradio as gr
+import torch
+import numpy as np
+from PIL import Image
+import cv2
+from transformers import AutoImageProcessor, AutoModel
+import torch.nn.functional as F
+import spaces
+
+device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
+
+DINO_MODELS = {
+    "DINOv3 Base ViT": "facebook/dinov3-vitb16-pretrain-lvd1689m",
+    "DINOv3 Large ViT": "facebook/dinov3-vitl16-pretrain-lvd1689m",
+    "DINOv3 Large ConvNeXT": "facebook/dinov3-convnext-large-pretrain-lvd1689m"
+}
+
+current_processor = None
+current_model = None
+DEVICE = "cuda" if torch.cuda.is_available() else "cpu"
+
+def load_model(model_name):
+    global current_processor, current_model
+
+    model_path = DINO_MODELS[model_name]
+    # Replace the module-level processor and model with the selected checkpoint.
+    try:
+        current_processor = AutoImageProcessor.from_pretrained(model_path)
+        current_model = AutoModel.from_pretrained(model_path)
+        current_model = current_model.to(DEVICE)
+        return f"✅ Model '{model_name}' loaded successfully!"
+    except Exception as e:
+        return f"❌ Error loading model '{model_name}': {str(e)}"
+
+@spaces.GPU()
+def extract_features(image):
+    # Run one PIL image through the current model and return its token features.
+    original_size = image.size
+
+    inputs = current_processor(images=image, return_tensors="pt")
+    inputs = {k: v.to(DEVICE) for k, v in inputs.items()}
+
+    model_size = current_processor.size['height']
+
+    with torch.no_grad():
+        outputs = current_model(**inputs)
+        features = outputs.last_hidden_state
+
+    return features, original_size, model_size
+
+def find_correspondences(features1, features2, threshold=0.8):
+    B, N1, D = features1.shape
+    B, N2, D = features2.shape
+    # Cosine similarity between every patch in image 1 and every patch in image 2.
+    features1_norm = F.normalize(features1, dim=-1)
+    features2_norm = F.normalize(features2, dim=-1)
+
+    similarity = torch.matmul(features1_norm, features2_norm.transpose(-2, -1))
+    # Best match in each direction: image 1 -> image 2 and image 2 -> image 1.
+    matches1 = torch.argmax(similarity, dim=-1)
+    matches2 = torch.argmax(similarity, dim=-2)
+
+    max_sim1 = torch.max(similarity, dim=-1)[0]
+    max_sim2 = torch.max(similarity, dim=-2)[0]
+    # Keep only mutual nearest neighbours whose similarity exceeds the threshold.
+    mutual_matches = matches2[0, matches1[0]] == torch.arange(N1).to(DEVICE)
+    good_matches = (max_sim1[0] > threshold) & mutual_matches
+
+    return matches1[0][good_matches], torch.arange(N1).to(DEVICE)[good_matches], max_sim1[0][good_matches]
+
+def patch_to_image_coords(patch_idx, original_size, model_size, patch_size=16):
+    orig_w, orig_h = original_size
+    # The ViT checkpoints in DINO_MODELS use 16x16 patches ("vitb16", "vitl16").
+    patches_h = model_size // patch_size
+    patches_w = model_size // patch_size
+
+    if patch_idx >= patches_h * patches_w:
+        return None, None
+
+    patch_y = patch_idx // patches_w
+    patch_x = patch_idx % patches_w
+    # Centre of the patch in model-input pixels.
+    y_model = patch_y * patch_size + patch_size // 2
+    x_model = patch_x * patch_size + patch_size // 2
+    # Rescale to the original image resolution.
+    x = int(x_model * orig_w / model_size)
+    y = int(y_model * orig_h / model_size)
+
+    return x, y
+
+def match_keypoints(image1, image2, model_name):
+    if image1 is None or image2 is None:
+        raise gr.Error("Please upload both images")
+
+    load_model(model_name)
+
+    img1_pil = Image.fromarray(image1).convert('RGB')
+    img2_pil = Image.fromarray(image2).convert('RGB')
+
+
+    features1, original_size1, model_size1 = extract_features(img1_pil)
+    features2, original_size2, model_size2 = extract_features(img2_pil)
+    # Drop the CLS token and any register tokens so that only patch tokens remain.
+    features1 = features1[:, 1 + getattr(current_model.config, "num_register_tokens", 0):, :]
+    features2 = features2[:, 1 + getattr(current_model.config, "num_register_tokens", 0):, :]
+
+    matches2_idx, matches1_idx, similarities = find_correspondences(features1, features2, threshold=0.7)
+
+    img1_np = np.array(img1_pil)
+    img2_np = np.array(img2_pil)
+
+    h1, w1 = img1_np.shape[:2]
+    h2, w2 = img2_np.shape[:2]
+
+    result_img = np.zeros((max(h1, h2), w1 + w2, 3), dtype=np.uint8)
+    result_img[:h1, :w1] = img1_np
+    result_img[:h2, w1:w1+w2] = img2_np
+
+    colors = []
+    keypoints1 = []
+    keypoints2 = []
+
+    for i, (m1, m2, sim) in enumerate(zip(matches1_idx.cpu(), matches2_idx.cpu(), similarities.cpu())):
+        x1, y1 = patch_to_image_coords(m1.item(), original_size1, model_size1)
+        x2, y2 = patch_to_image_coords(m2.item(), original_size2, model_size2)
+
+        if x1 is not None and x2 is not None:
+            color = (np.random.randint(0, 255), np.random.randint(0, 255), np.random.randint(0, 255))
+            colors.append(color)
+            keypoints1.append((x1, y1))
+            keypoints2.append((x2 + w1, y2))
+
+            cv2.circle(result_img, (x1, y1), 15, color, -1)
+            cv2.circle(result_img, (x2 + w1, y2), 15, color, -1)
+            cv2.line(result_img, (x1, y1), (x2 + w1, y2), color, 10)
+
+
+
+    return result_img
+
| 141 |

+

load_model("DINOv3 Base ViT")

|

| 142 |

+

|

| 143 |

+

with gr.Blocks(title="DINOv3 Keypoint Matching") as demo:

|

| 144 |

+

gr.Markdown("# DINOv3 For Keypoint Matching")

|

| 145 |

+

gr.Markdown("DINOv3 can be used to find matching features between two images.")

|

| 146 |

+

gr.Markdown("Upload two images to find corresponding keypoints using DINOv3 features, switch between different DINOv3 checkpoints.")

|

| 147 |

+

|

| 148 |

+

with gr.Row():

|

| 149 |

+

image1 = gr.Image(label="Image 1", type="numpy")

|

| 150 |

+

image2 = gr.Image(label="Image 2", type="numpy")

|

| 151 |

+

with gr.Column(scale=1):

|

| 152 |

+

|

| 153 |

+

model_selector = gr.Dropdown(

|

| 154 |

+

choices=list(DINO_MODELS.keys()),

|

| 155 |

+

value="DINOv3 Base ViT",

|

| 156 |

+

label="Select DINOv3 Model",

|

| 157 |

+

info="Choose the model size. Larger models may provide better features but require more memory."

|

| 158 |

+

)

|

| 159 |

+

|

| 160 |

+

# Add status bar

|

| 161 |

+

status_bar = gr.Textbox(

|

| 162 |

+

value="✅ Model 'DINOv3 Base ViT' loaded successfully!",

|

| 163 |

+

label="Status",

|

| 164 |

+

interactive=False,

|

| 165 |

+

container=False

|

| 166 |

+

)

|

| 167 |

+

|

| 168 |

+

match_btn = gr.Button("Find Correspondences", variant="primary")

|

| 169 |

+

|

| 170 |

+

with gr.Column(scale=2):

|

| 171 |

+

output_image = gr.Image(label="Matched Keypoints")

|

| 172 |

+

|

| 173 |

+

# Connect model selector to status bar

|

| 174 |

+

model_selector.change(

|

| 175 |

+

fn=load_model,

|

| 176 |

+

inputs=[model_selector],

|

| 177 |

+

outputs=[status_bar]

|

| 178 |

+

)

|

| 179 |

+

|

| 180 |

+

match_btn.click(

|

| 181 |

+

fn=match_keypoints,

|

| 182 |

+

inputs=[image1, image2, model_selector],

|

| 183 |

+

outputs=[output_image]

|

| 184 |

+

)

|

| 185 |

+

|

| 186 |

+

gr.Examples(

|

| 187 |

+

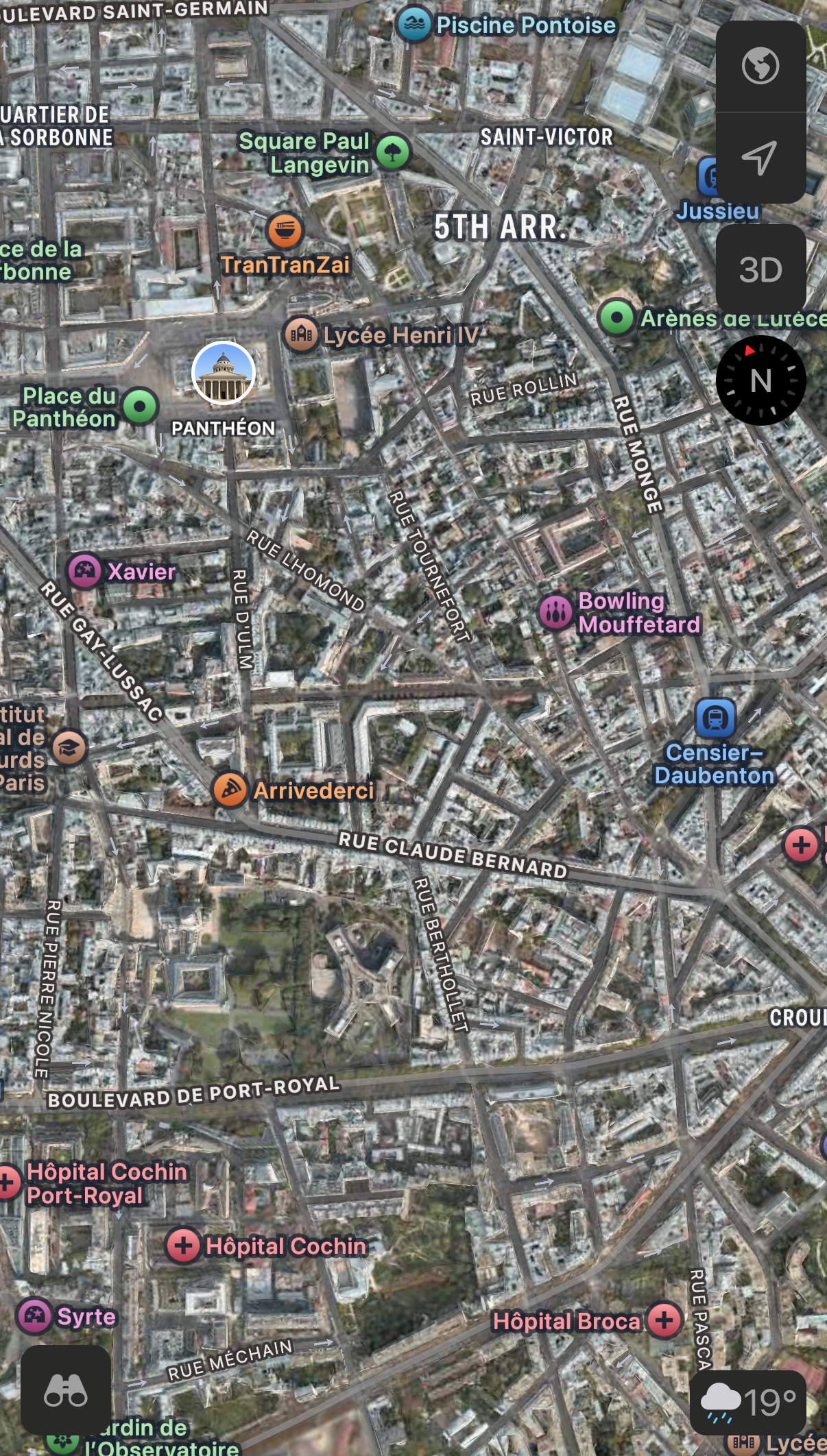

examples=[["map.jpg", "street.jpg"], ["bee.JPG", "bee_edited.jpg"]],

|

| 188 |

+

inputs=[image1, image2]

|

| 189 |

+

)

|

| 190 |

+

|

| 191 |

+

if __name__ == "__main__":

|

| 192 |

+

demo.launch(share=True)

|
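Reviewer note: the matching logic in `dinov3_keypoint_demo.py` can be checked without a GPU or a model download. The following is a minimal, dependency-free sketch of its two steps: mutual nearest-neighbour matching on cosine similarity, and mapping a flat patch index back to pixel coordinates. The helper names (`cosine`, `mutual_matches`, `patch_center`) and the toy descriptors are hypothetical. The defaults `model_size=224` and `patch_size=16` are assumptions: 16 is read off the `vitb16`/`vitl16` checkpoint names, and 224 is assumed for the processor's resize target. It is not the code in the commit.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm


def mutual_matches(feats1, feats2, threshold=0.7):
    """Return (i, j, similarity) for mutual nearest neighbours above threshold."""
    n1, n2 = len(feats1), len(feats2)
    sim = [[cosine(u, v) for v in feats2] for u in feats1]
    # Best partner in feats2 for every descriptor in feats1 (row-wise argmax).
    best12 = [max(range(n2), key=row.__getitem__) for row in sim]
    # Best partner in feats1 for every descriptor in feats2 (column-wise argmax).
    best21 = [max(range(n1), key=lambda i: sim[i][j]) for j in range(n2)]
    return [
        (i, j, sim[i][j])
        for i, j in enumerate(best12)
        if best21[j] == i and sim[i][j] > threshold
    ]


def patch_center(patch_idx, original_size, model_size=224, patch_size=16):
    """Map a flat patch index to the patch-centre pixel in the original image."""
    orig_w, orig_h = original_size
    grid = model_size // patch_size  # patches per side (assumed square input)
    if not 0 <= patch_idx < grid * grid:
        return None
    row, col = divmod(patch_idx, grid)
    x_model = col * patch_size + patch_size // 2
    y_model = row * patch_size + patch_size // 2
    # Rescale from model-input pixels to the original resolution.
    return int(x_model * orig_w / model_size), int(y_model * orig_h / model_size)


# Toy descriptors (hypothetical): feats1[0] resembles feats2[1],
# feats1[1] resembles feats2[0], and feats1[2] has no good partner.
feats1 = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
feats2 = [[0.0, 0.9, 0.1], [1.0, 0.1, 0.0]]
matches = mutual_matches(feats1, feats2)

for i, j, s in matches:
    print(f"feats1[{i}] <-> feats2[{j}] (similarity {s:.3f})")
print(patch_center(0, (448, 224)))
```

On the toy data, the third descriptor in `feats1` is dropped because its best partner in `feats2` prefers a different descriptor, which is the behaviour the mutual check is meant to enforce.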

map.jpg

ADDED

Git LFS Details

requirements.txt

ADDED

@@ -0,0 +1,6 @@
+spaces
+git+https://github.com/huggingface/transformers.git
+opencv-python
+torch
+torchvision
+pillow

street.jpg

ADDED

Git LFS Details