
initial commit

- app.py +181 -0

- c_data.json +0 -0

app.py

ADDED


@@ -0,0 +1,181 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

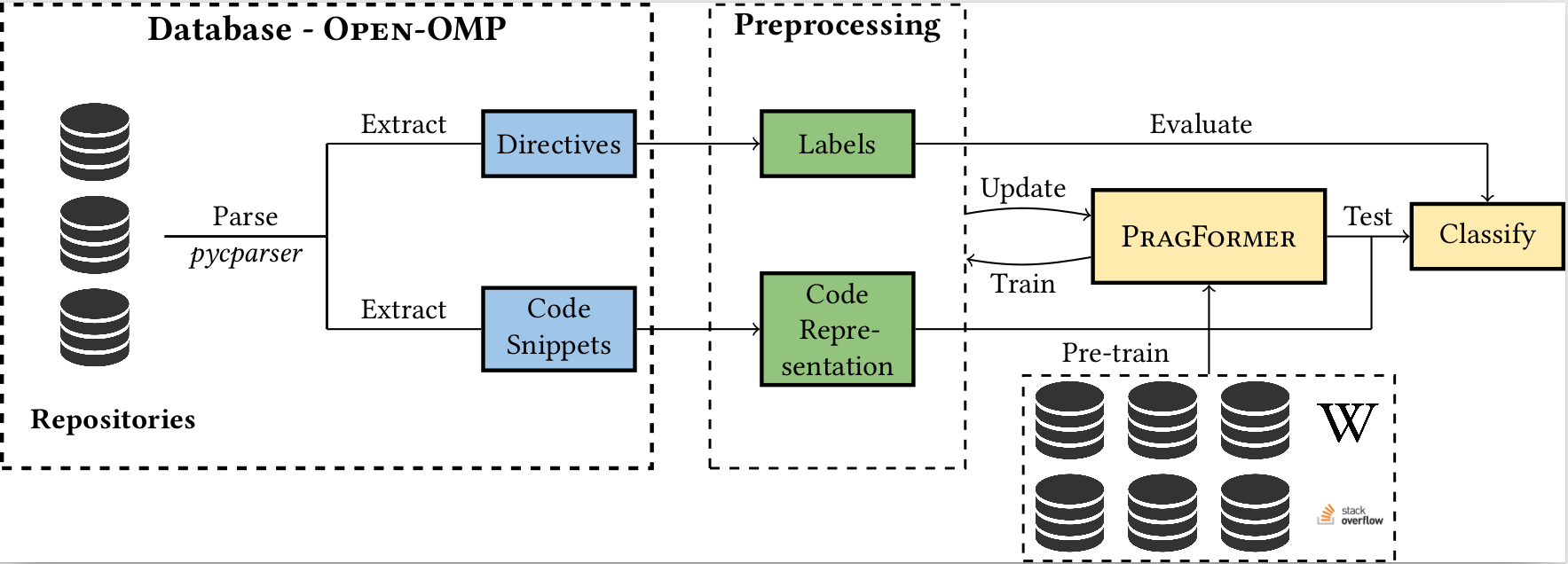

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

```python
import gradio as gr
import transformers
import torch
import json
# ClassificationArgs / ClassificationModel are provided by the simpletransformers package
from simpletransformers.classification import ClassificationArgs, ClassificationModel

# Load all models
# DeepSCC: RoBERTa classifier over 19 programming languages, run on CPU
deep_scc_model_args = ClassificationArgs(num_train_epochs=10, max_seq_length=300, use_multiprocessing=False)
deep_scc_model = ClassificationModel("roberta", "NTUYG/DeepSCC-RoBERTa", num_labels=19, args=deep_scc_model_args, use_cuda=False)

# PragFormer classifiers: parallel-for needed?, private clause needed?, reduction clause needed?
pragformer = transformers.AutoModel.from_pretrained("Pragformer/PragFormer", trust_remote_code=True)
pragformer_private = transformers.AutoModel.from_pretrained("Pragformer/PragFormer_private", trust_remote_code=True)
pragformer_reduction = transformers.AutoModel.from_pretrained("Pragformer/PragFormer_reduction", trust_remote_code=True)
```

```python
# Event listeners
with_omp_str = 'Should contain a parallel work-sharing loop construct'
without_omp_str = 'Should not contain a parallel work-sharing loop construct'
# DeepSCC label order: output index i corresponds to name_file[i]
name_file = ['bash', 'c', 'c#', 'c++', 'css', 'haskell', 'java', 'javascript', 'lua', 'objective-c', 'perl', 'php', 'python', 'r', 'ruby', 'scala', 'sql', 'swift', 'vb.net']

# Tokenizer used to prepare inputs for the three PragFormer models
tokenizer = transformers.AutoTokenizer.from_pretrained('NTUYG/DeepSCC-RoBERTa')
```
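`predict`, `is_private` and `is_reduction` all call this tokenizer with `max_length=150`, padding and truncation, so every snippet reaches PragFormer as exactly 150 token ids plus an attention mask. The stdlib-only sketch below approximates that fixed-length encoding for one snippet that is already split into sub-word ids. It assumes RoBERTa's default special-token ids (`<s>` = 0, `<pad>` = 1, `</s>` = 2) and uses made-up ids; the real tokenizer also performs the BPE splitting, which is not reproduced here, and `encode_fixed_length` is a hypothetical helper.

```python
from typing import List, Tuple

# Assumed RoBERTa special-token ids: <s> = 0, <pad> = 1, </s> = 2.
BOS_ID, PAD_ID, EOS_ID = 0, 1, 2


def encode_fixed_length(token_ids: List[int], max_length: int = 150) -> Tuple[List[int], List[int]]:
    """Hypothetical helper: wrap, truncate and pad one snippet to max_length ids."""
    body = token_ids[:max_length - 2]        # truncation: keep room for <s> and </s>
    input_ids = [BOS_ID] + body + [EOS_ID]
    attention_mask = [1] * len(input_ids)    # 1 = real token
    n_pad = max_length - len(input_ids)
    input_ids += [PAD_ID] * n_pad            # pad up to max_length
    attention_mask += [0] * n_pad            # 0 = padding, ignored by attention
    return input_ids, attention_mask


short_ids, short_mask = encode_fixed_length([4, 15, 8, 42])    # made-up sub-word ids
long_ids, long_mask = encode_fixed_length(list(range(3, 303)))  # 300 ids, gets truncated
print(len(short_ids), sum(short_mask), len(long_ids), sum(long_mask))
```

One consequence worth keeping in mind: anything beyond roughly 148 sub-word tokens is dropped, so a long loop is classified from its beginning only.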

```python
# c_data.json sits next to app.py in this Space; each key maps to
# {"pragma": ..., "code": ...}
with open('c_data.json', 'r') as f:
    data = json.load(f)


def fill_code(code_pth):
    """Return the original pragma (or 'None' if there was none) and the code of a sample."""
    pragma = data[code_pth]['pragma']
    code = data[code_pth]['code']
    return 'None' if len(pragma) == 0 else pragma, code
```
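`fill_code` relies on each key of `c_data.json` mapping to an object with `pragma` and `code` fields, where an empty `pragma` means the snippet has no OpenMP directive. The sketch below runs a standalone copy of that lookup against a small in-memory JSON document. The entries and keys are invented for illustration (real keys look like the dropdown default `Minyoung-Kim1110/OpenMP/Excercise/atomic/0`), and the exact format of the stored pragma string is an assumption.

```python
import json

# Invented entries; only the {"pragma": ..., "code": ...} shape follows app.py.
SAMPLE_JSON = """
{
  "example/parallel/0": {
    "pragma": "#pragma omp parallel for reduction(+:sum)",
    "code": "for (i = 0; i < n; i++) sum += a[i];"
  },
  "example/serial/0": {
    "pragma": "",
    "code": "for (i = 1; i < n; i++) a[i] = a[i - 1] + 1;"
  }
}
"""

data = json.loads(SAMPLE_JSON)


def fill_code(code_pth):
    """Standalone copy of app.py's fill_code, run against the invented data."""
    pragma = data[code_pth]["pragma"]
    code = data[code_pth]["code"]
    # An empty pragma marks a snippet without an OpenMP directive.
    return ("None" if len(pragma) == 0 else pragma), code


print(fill_code("example/serial/0"))
```

Because the dropdown is filled from `data.keys()`, any key in the file becomes a selectable sample without further code changes.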

```python
def predict(code_txt):
    """Classify whether a C for-loop should get an OpenMP parallel-for directive."""
    code = code_txt.strip()
    tokenized = tokenizer.batch_encode_plus(
        [code],
        max_length=150,
        padding='max_length',   # pad every snippet to exactly max_length tokens
        truncation=True,
        return_tensors='pt',
    )
    with torch.no_grad():       # inference only, no gradients needed
        pred = pragformer(tokenized['input_ids'], tokenized['attention_mask'])

    y_hat = torch.argmax(pred).item()
    confidence = torch.nn.Softmax(dim=1)(pred).squeeze()[y_hat].item()
    return with_omp_str if y_hat == 1 else without_omp_str, confidence
```
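`predict` turns PragFormer's output scores into a label with `torch.argmax` and a confidence with a softmax over the same row. The stdlib-only sketch below mirrors that arithmetic, assuming the model returns one row of two logits and that index 1 means "parallelize" (as the `y_hat == 1` check implies). The logit values are made up and `label_and_confidence` is a hypothetical name.

```python
import math
from typing import List, Tuple

WITH_OMP = "Should contain a parallel work-sharing loop construct"
WITHOUT_OMP = "Should not contain a parallel work-sharing loop construct"


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def label_and_confidence(logits: List[float]) -> Tuple[str, float]:
    """Mirror predict(): argmax picks the class, softmax gives its probability."""
    y_hat = max(range(len(logits)), key=lambda i: logits[i])
    # Class 1 is read as "parallelize", matching the y_hat == 1 check in predict().
    return (WITH_OMP if y_hat == 1 else WITHOUT_OMP), softmax(logits)[y_hat]


label, conf = label_and_confidence([-1.2, 2.3])  # made-up logits for one snippet
print(label, round(conf, 3))
```

With two classes the reported confidence is always at least 0.5, and it is the model's own probability estimate rather than a calibrated one.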

```python
def is_private(code_txt):
    """Predict whether a parallel loop needs a private clause; hidden for serial loops."""
    if predict(code_txt)[0] == without_omp_str:
        return gr.update(visible=False)

    code = code_txt.strip()
    tokenized = tokenizer.batch_encode_plus(
        [code],
        max_length=150,
        padding='max_length',
        truncation=True,
        return_tensors='pt',
    )
    with torch.no_grad():
        pred = pragformer_private(tokenized['input_ids'], tokenized['attention_mask'])

    y_hat = torch.argmax(pred).item()
    confidence = torch.nn.Softmax(dim=1)(pred).squeeze()[y_hat].item()
    return gr.update(value=f"Should {'not ' if y_hat == 0 else ''}contain private with confidence: {confidence}", visible=True)


def is_reduction(code_txt):
    """Predict whether a parallel loop needs a reduction clause; hidden for serial loops."""
    if predict(code_txt)[0] == without_omp_str:
        return gr.update(visible=False)

    code = code_txt.strip()
    tokenized = tokenizer.batch_encode_plus(
        [code],
        max_length=150,
        padding='max_length',
        truncation=True,
        return_tensors='pt',
    )
    with torch.no_grad():
        pred = pragformer_reduction(tokenized['input_ids'], tokenized['attention_mask'])

    y_hat = torch.argmax(pred).item()
    confidence = torch.nn.Softmax(dim=1)(pred).squeeze()[y_hat].item()
    return gr.update(value=f"Should {'not ' if y_hat == 0 else ''}contain reduction with confidence: {confidence}", visible=True)
```
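`is_private` and `is_reduction` repeat the same tokenize, classify and format steps, and each first calls `predict` as a gate so that clause suggestions appear only for loops predicted to be parallel. One way to factor that flow is a single helper parameterised by the clause model. The sketch below is hypothetical: `clause_message`, `stub_main` and `stub_reduction` are invented names, the stubs are string heuristics standing in for the PragFormer models, and the helper returns a string or `None` instead of `gr.update` so it runs without Gradio or torch.

```python
from typing import Callable, Optional, Tuple

# Any callable mapping code text to (class index, confidence) counts as a classifier here.
Classifier = Callable[[str], Tuple[int, float]]


def clause_message(code: str, main_model: Classifier,
                   clause_model: Classifier, clause: str) -> Optional[str]:
    """Return the clause suggestion text, or None when the loop should stay serial."""
    code = code.strip()
    main_label, _ = main_model(code)
    if main_label != 1:          # gate: only parallel loops get clause suggestions
        return None              # app.py hides the textbox in this case
    y_hat, confidence = clause_model(code)
    negation = "not " if y_hat == 0 else ""
    return f"Should {negation}contain {clause} with confidence: {confidence}"


# Hypothetical stand-ins for the PragFormer models (simple string heuristics).
def stub_main(code: str) -> Tuple[int, float]:
    return (1, 0.9) if code.startswith("for") else (0, 0.8)


def stub_reduction(code: str) -> Tuple[int, float]:
    return (1, 0.75) if "+=" in code else (0, 0.6)


print(clause_message("for (i = 0; i < n; i++) sum += a[i];",
                     stub_main, stub_reduction, "reduction"))
```

In `app.py` the gate costs an extra PragFormer forward pass per clause, because each click handler calls `predict` again; computing the main prediction once and passing it in, as the helper's signature suggests, would avoid that.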

```python
def lang_predict(code_txt):
    """Show DeepSCC's top-5 language guesses, marking C with 'V' and the rest with 'X'."""
    res = {}
    code = code_txt.replace('\n', ' ').replace('\r', ' ')
    predictions, raw_outputs = deep_scc_model.predict([code])
    softmax_vals = torch.nn.Softmax(dim=1)(torch.tensor(raw_outputs))
    top5 = torch.topk(softmax_vals, 5)

    for lang_idx, conf in zip(top5.indices.flatten(), top5.values.flatten()):
        res[name_file[lang_idx.item()]] = conf.item()

    return '\n'.join([f" {'V' if k == 'c' else 'X'} {k}: {v}" for k, v in res.items()])
```
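`lang_predict` applies a softmax to DeepSCC's 19 class scores, keeps the five most likely languages with `torch.topk`, and marks C with `V`. The stdlib-only sketch below reproduces that ranking on made-up scores, with no DeepSCC model involved. `top_k_languages` and `format_ranking` are hypothetical helpers, and printing four decimals is a choice of this sketch; `app.py` prints the raw float.

```python
import math
from typing import List, Tuple

# Label order used by app.py for the 19 DeepSCC classes.
NAME_FILE = ['bash', 'c', 'c#', 'c++', 'css', 'haskell', 'java', 'javascript',
             'lua', 'objective-c', 'perl', 'php', 'python', 'r', 'ruby',
             'scala', 'sql', 'swift', 'vb.net']


def top_k_languages(raw_scores: List[float], k: int = 5) -> List[Tuple[str, float]]:
    """Softmax the raw scores and return the k most likely (language, probability)."""
    m = max(raw_scores)
    exps = [math.exp(s - m) for s in raw_scores]
    total = sum(exps)
    probs = [e / total for e in exps]
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return [(NAME_FILE[i], probs[i]) for i in ranked[:k]]


def format_ranking(ranking: List[Tuple[str, float]]) -> str:
    """One line per language: 'V' for C (the expected input), 'X' otherwise."""
    return "\n".join(f" {'V' if lang == 'c' else 'X'} {lang}: {p:.4f}"
                     for lang, p in ranking)


# Made-up scores: C highest, C++ second, everything else tied.
scores = [0.0] * len(NAME_FILE)
scores[NAME_FILE.index('c')] = 5.0
scores[NAME_FILE.index('c++')] = 3.0
print(format_ranking(top_k_languages(scores)))
```

The `V`/`X` marker is a quick sanity check for the user: PragFormer expects C, so a top guess other than C hints that the input may be outside what the model was trained on.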

```python
# Define GUI
with gr.Blocks() as pragformer_gui:

    gr.Markdown(
"""
# PragFormer Pragma Classification

""")

    with gr.Column():
        gr.Markdown("## Input")
        with gr.Row():
            with gr.Column():
                drop = gr.Dropdown(list(data.keys()), label="Mix of parallel and not-parallel code snippets", value="Minyoung-Kim1110/OpenMP/Excercise/atomic/0")
                sample_btn = gr.Button("Sample")

            pragma = gr.Textbox(label="Original parallelization classification (if any)")
        with gr.Row():
            code_in = gr.Textbox(lines=5, label="Write some C code and see if it should contain a parallel work-sharing loop construct")
            lang_pred = gr.Textbox(lines=5, label="DeepScc programming language prediction")

        submit_btn = gr.Button("Submit")
    with gr.Column():
        gr.Markdown("## Results")

        with gr.Row():
            label_out = gr.Textbox(label="Label")
            confidence_out = gr.Textbox(label="Confidence")

        with gr.Row():
            private = gr.Textbox(label="Data-sharing attribute clause: private", visible=False)
            reduction = gr.Textbox(label="Data-sharing attribute clause: reduction", visible=False)

    # Re-run language detection whenever the code changes
    code_in.change(fn=lang_predict, inputs=code_in, outputs=lang_pred)

    # One click triggers the main classifier and both clause classifiers
    submit_btn.click(fn=predict, inputs=code_in, outputs=[label_out, confidence_out])
    submit_btn.click(fn=is_private, inputs=code_in, outputs=private)
    submit_btn.click(fn=is_reduction, inputs=code_in, outputs=reduction)
    sample_btn.click(fn=fill_code, inputs=drop, outputs=[pragma, code_in])

    gr.Markdown(
"""

## How It Works

To use the PragFormer tool, input a C for-loop. You can either write your own code or use one of the samples
in the dropdown menu, which were gathered from GitHub. Once you submit the code, the PragFormer model analyzes
it and predicts whether the for-loop should be parallelized using OpenMP. If PragFormer determines that parallelization
is needed, two additional models determine whether specific data-sharing attribute clauses, such as ***private*** or ***reduction***, should be added.

***private***: specifies that each thread should have its own instance of a variable.

***reduction***: specifies that one or more variables that are private to each thread are the subject of a reduction operation at
the end of the parallel region.


## Description

In recent years, the computing world has shifted to many-core and multi-core shared-memory architectures.
As a result, there is a growing need to exploit these architectures by introducing shared-memory parallelization schemes into software applications.
OpenMP is the most comprehensive API implementing such schemes and is characterized by a readable interface.
Nevertheless, introducing OpenMP into code, especially legacy code, is challenging due to pervasive pitfalls in the management of parallel shared memory.
To facilitate this task, many source-to-source (S2S) compilers have been created over the years, tasked with inserting OpenMP directives into
code automatically.
In addition to having limited robustness to their input format, these compilers still do not achieve satisfactory coverage and precision in locating parallelizable
code and generating appropriate directives.
In this work, we propose leveraging recent advances in machine learning techniques, specifically in natural language processing (NLP), to replace S2S compilers altogether.
We create a database (corpus), OpenMP-OMP, specifically for this goal.
OpenMP-OMP contains over 28,000 code snippets, half of which contain OpenMP directives, while the other half, with high probability, do not need parallelization at all.
We use the corpus to train systems to automatically classify code segments in need of parallelization, as well as to suggest individual OpenMP clauses.
We train several transformer models, named PragFormer, for these tasks, and show that they outperform statistically-trained baselines and automatic S2S parallelization
compilers in both classifying the overall need for an OpenMP directive and the introduction of private and reduction clauses.

""")


pragformer_gui.launch()
```
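The app's Markdown text defines the ***private*** and ***reduction*** clauses in one sentence each. To make those definitions concrete while staying in Python, the sketch below simulates what a loop annotated with `#pragma omp parallel for private(tmp) reduction(+:total)` would do. It is only an analogy built on `concurrent.futures` (Python threads, not OpenMP), and the function names are hypothetical. Each worker gets its own `tmp` and its own partial `total` initialized to 0, the identity of `+`, and the partial totals are combined once all workers finish.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List


def serial_sum_of_squares(a: List[int]) -> int:
    total = 0
    for x in a:
        tmp = x * x      # scratch variable: racy if shared between threads
        total += tmp     # every iteration updates one accumulator: a reduction
    return total


def simulated_omp_sum_of_squares(a: List[int], num_threads: int = 4) -> int:
    """Analogy for `parallel for private(tmp) reduction(+:total)` using threads."""
    chunk = max(1, -(-len(a) // num_threads))            # ceil division, at least 1
    chunks = [a[i:i + chunk] for i in range(0, len(a), chunk)]

    def worker(part: List[int]) -> int:
        total = 0            # private copy of the reduction variable, starts at 0
        for x in part:
            tmp = x * x      # private(tmp): each worker has its own tmp
            total += tmp
        return total

    with ThreadPoolExecutor(max_workers=num_threads) as pool:
        partials = list(pool.map(worker, chunks))
    return sum(partials)     # combine the private copies at the end of the region


values = list(range(1, 101))
print(serial_sum_of_squares(values), simulated_omp_sum_of_squares(values))
```

Note that the app only predicts whether such clauses are needed; its output does not name the variables that would go inside `private(...)` or `reduction(...)`.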

c_data.json

ADDED

The diff for this file is too large to render.