0311e3757b1b39d3d8e7d4d8e0dc1cdcb07865fc594aa861084a4ac58959ad5e

Browse files- README.md +1 -1

- model/optimized_model.pkl +1 -1

- model/smash_config.json +1 -1

- plots.png +0 -0

README.md

CHANGED

|

@@ -36,7 +36,7 @@ metrics:

|

|

| 36 |

|

| 37 |

|

| 38 |

**Frequently Asked Questions**

|

| 39 |

-

- ***How does the compression work?*** The model is compressed by combining

|

| 40 |

- ***How does the model quality change?*** The quality of the model output might slightly vary compared to the base model.

|

| 41 |

- ***How is the model efficiency evaluated?*** These results were obtained on NVIDIA A100-PCIE-40GB with configuration described in `model/smash_config.json` and are obtained after a hardware warmup. The smashed model is directly compared to the original base model. Efficiency results may vary in other settings (e.g. other hardware, image size, batch size, ...). We recommend to directly run them in the use-case conditions to know if the smashed model can benefit you.

|

| 42 |

- ***What is the model format?*** We used a custom Pruna model format based on pickle to make models compatible with the compression methods. We provide a tutorial to run models in dockers in the documentation [here](https://pruna-ai-pruna.readthedocs-hosted.com/en/latest/) if needed.

|

|

|

|

| 36 |

|

| 37 |

|

| 38 |

**Frequently Asked Questions**

|

| 39 |

+

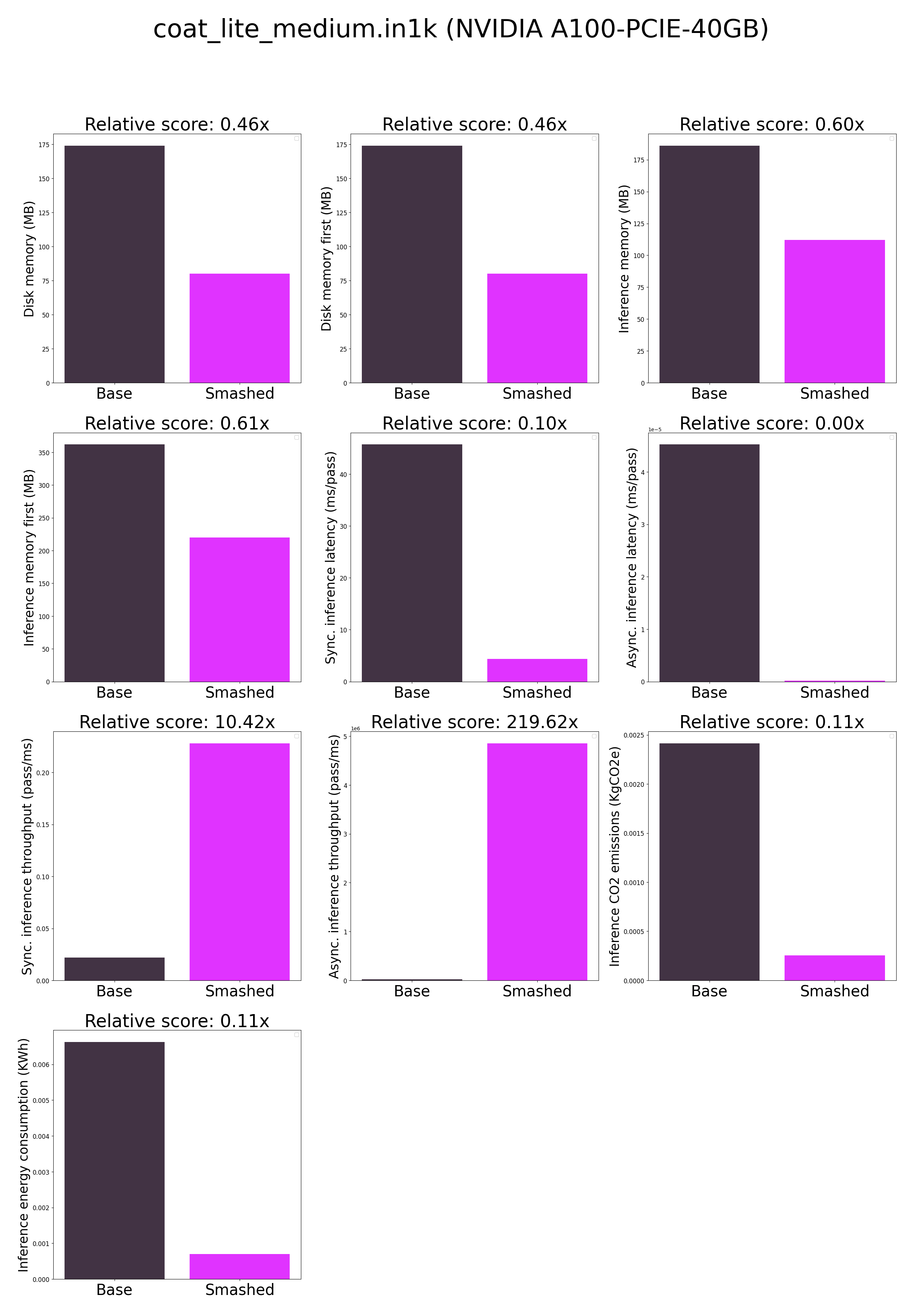

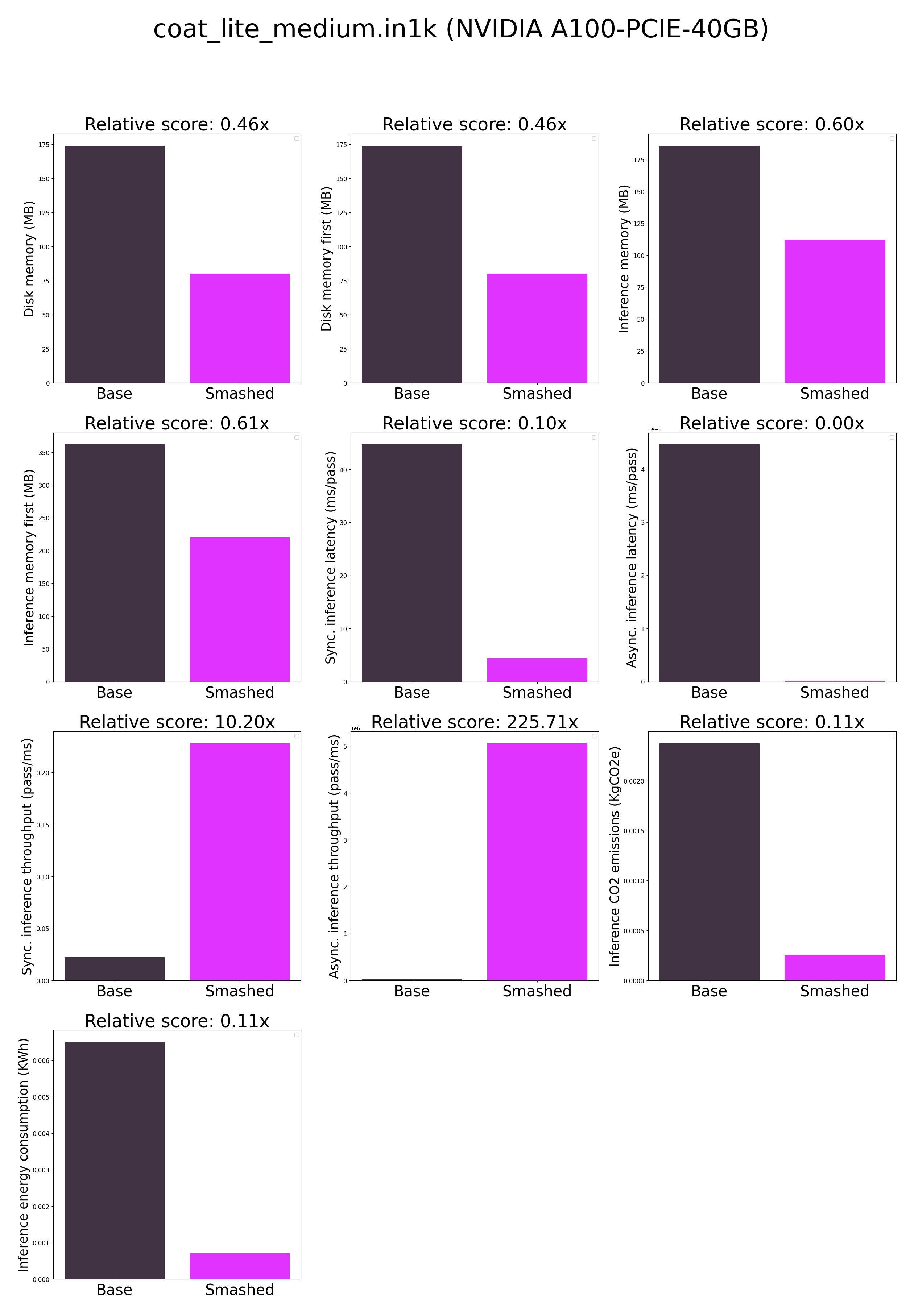

- ***How does the compression work?*** The model is compressed by combining quantization, xformers, jit, cuda graphs, triton.

|

| 40 |

- ***How does the model quality change?*** The quality of the model output might slightly vary compared to the base model.

|

| 41 |

- ***How is the model efficiency evaluated?*** These results were obtained on NVIDIA A100-PCIE-40GB with configuration described in `model/smash_config.json` and are obtained after a hardware warmup. The smashed model is directly compared to the original base model. Efficiency results may vary in other settings (e.g. other hardware, image size, batch size, ...). We recommend to directly run them in the use-case conditions to know if the smashed model can benefit you.

|

| 42 |

- ***What is the model format?*** We used a custom Pruna model format based on pickle to make models compatible with the compression methods. We provide a tutorial to run models in dockers in the documentation [here](https://pruna-ai-pruna.readthedocs-hosted.com/en/latest/) if needed.

|

model/optimized_model.pkl

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

size 89327576

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0a0e8179440e77b7a0483632258f91ff1898dd4d9f6b36373417b5d488f806be

|

| 3 |

size 89327576

|

model/smash_config.json

CHANGED

|

@@ -14,7 +14,7 @@

|

|

| 14 |

"controlnet": "None",

|

| 15 |

"unet_dim": 4,

|

| 16 |

"device": "cuda",

|

| 17 |

-

"cache_dir": "/ceph/hdd/staff/charpent/.cache/

|

| 18 |

"batch_size": 1,

|

| 19 |

"model_name": "coat_lite_medium.in1k",

|

| 20 |

"max_batch_size": 1,

|

|

|

|

| 14 |

"controlnet": "None",

|

| 15 |

"unet_dim": 4,

|

| 16 |

"device": "cuda",

|

| 17 |

+

"cache_dir": "/ceph/hdd/staff/charpent/.cache/modelsj_xz9k4p",

|

| 18 |

"batch_size": 1,

|

| 19 |

"model_name": "coat_lite_medium.in1k",

|

| 20 |

"max_batch_size": 1,

|

plots.png

CHANGED

|

|