Committed by spark-tts

Commit 6b4fcb8 · Parent(s): 5b69e7a

init

- .gitattributes +4 -0

- BiCodec/config.yaml +60 -0

- BiCodec/model.safetensors +3 -0

- LLM/added_tokens.json +0 -0

- LLM/config.json +27 -0

- LLM/merges.txt +0 -0

- LLM/model.safetensors +3 -0

- LLM/special_tokens_map.json +31 -0

- LLM/tokenizer.json +3 -0

- LLM/tokenizer_config.json +0 -0

- LLM/vocab.json +0 -0

- README.md +151 -0

- config.yaml +7 -0

- src/figures/gradio_TTS.png +0 -0

- src/figures/gradio_control.png +0 -0

- src/figures/infer_control.png +0 -0

- src/figures/infer_voice_cloning.png +0 -0

- src/logo/HKUST.jpg +0 -0

- src/logo/NPU.jpg +0 -0

- src/logo/NTU.jpg +0 -0

- src/logo/SJU.jpg +0 -0

- src/logo/SparkAudio.jpg +0 -0

- src/logo/SparkAudio2.jpg +0 -0

- src/logo/SparkTTS.png +0 -0

- src/logo/mobvoi.jpg +0 -0

- src/logo/mobvoi.png +0 -0

- wav2vec2-large-xlsr-53/README.md +29 -0

- wav2vec2-large-xlsr-53/config.json +83 -0

- wav2vec2-large-xlsr-53/preprocessor_config.json +9 -0

- wav2vec2-large-xlsr-53/pytorch_model.bin +3 -0

.gitattributes

CHANGED

@@ -33,3 +33,7 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+LLM/tokenizer.json filter=lfs diff=lfs merge=lfs -text
+wav2vec2-large-xlsr-53/pytorch_model.bin filter=lfs diff=lfs merge=lfs -text
+LLM/model.safetensors filter=lfs diff=lfs merge=lfs -text
+BiCodec/model.safetensors filter=lfs diff=lfs merge=lfs -text

BiCodec/config.yaml

ADDED

@@ -0,0 +1,60 @@
+audio_tokenizer:
+  mel_params:
+    sample_rate: 16000
+    n_fft: 1024
+    win_length: 640
+    hop_length: 320
+    mel_fmin: 10
+    mel_fmax: null
+    num_mels: 128
+
+  encoder:
+    input_channels: 1024
+    vocos_dim: 384
+    vocos_intermediate_dim: 2048
+    vocos_num_layers: 12
+    out_channels: 1024
+    sample_ratios: [1,1]
+
+  decoder:
+    input_channel: 1024
+    channels: 1536
+    rates: [8, 5, 4, 2]
+    kernel_sizes: [16,11,8,4]
+
+  quantizer:
+    input_dim: 1024
+    codebook_size: 8192
+    codebook_dim: 8
+    commitment: 0.25
+    codebook_loss_weight: 2.0
+    use_l2_normlize: True
+    threshold_ema_dead_code: 0.2
+
+  speaker_encoder:
+    input_dim: 128
+    out_dim: 1024
+    latent_dim: 128
+    token_num: 32
+    fsq_levels: [4, 4, 4, 4, 4, 4]
+    fsq_num_quantizers: 1
+
+  prenet:
+    input_channels: 1024
+    vocos_dim: 384
+    vocos_intermediate_dim: 2048
+    vocos_num_layers: 12
+    out_channels: 1024
+    condition_dim: 1024
+    sample_ratios: [1,1]
+    use_tanh_at_final: False
+
+  postnet:
+    input_channels: 1024
+    vocos_dim: 384
+    vocos_intermediate_dim: 2048
+    vocos_num_layers: 6
+    out_channels: 1024
+    use_tanh_at_final: False
+
+
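A few derived quantities follow directly from the values in this config. The sketch below recomputes them. It assumes (the diff does not say so) that `hop_length` is measured in samples, that the decoder `rates` are per-stage upsampling factors, and that each entry of `fsq_levels` is the number of levels of one FSQ dimension. The variable names are illustrative and are not taken from the Spark-TTS code.

```python
import math

# Values copied from BiCodec/config.yaml in this commit.
sample_rate = 16000
hop_length = 320
decoder_rates = [8, 5, 4, 2]
codebook_size = 8192
fsq_levels = [4, 4, 4, 4, 4, 4]

# Assumption: one token frame is produced per hop of `hop_length` samples.
frames_per_second = sample_rate / hop_length

# Assumption: the decoder upsamples by the product of its rates.
total_upsampling = math.prod(decoder_rates)

# Bits needed to index one entry of the quantizer codebook.
bits_per_codebook_index = math.log2(codebook_size)

# Assumption: the FSQ codebook size is the product of the per-dimension levels.
fsq_codebook_size = math.prod(fsq_levels)

print(frames_per_second, total_upsampling, bits_per_codebook_index, fsq_codebook_size)
```

Under these assumptions the product of the decoder rates matches `hop_length`, which is what one would expect if the decoder maps one token frame back to one hop of waveform samples.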

BiCodec/model.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:e9940cd48d4446e4340ced82d234bf5618350dd9f5db900ebe47a4fdb03867ec
+size 625518756
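The three added lines are a Git LFS pointer rather than the weights themselves: the repository stores only the spec version, a SHA-256 object id, and the byte size. As a minimal sketch, a pointer like this can be split into its fields as follows; `parse_lfs_pointer` is a hypothetical helper written for this illustration, not part of any library.

```python
def parse_lfs_pointer(text: str) -> dict:
    """Split a Git LFS pointer file into its key/value fields."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    # The oid field has the form "<hash algorithm>:<hex digest>".
    algorithm, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algorithm": algorithm,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


pointer = parse_lfs_pointer(
    "version https://git-lfs.github.com/spec/v1\n"
    "oid sha256:e9940cd48d4446e4340ced82d234bf5618350dd9f5db900ebe47a4fdb03867ec\n"
    "size 625518756\n"
)
print(pointer["hash_algorithm"], pointer["size_bytes"])
```

The same helper applies unchanged to the other LFS pointers in this commit (`LLM/model.safetensors`, `LLM/tokenizer.json`, `wav2vec2-large-xlsr-53/pytorch_model.bin`).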

LLM/added_tokens.json

ADDED

The diff for this file is too large to render.

LLM/config.json

ADDED

@@ -0,0 +1,27 @@
+{
+  "architectures": [
+    "Qwen2ForCausalLM"
+  ],
+  "attention_dropout": 0.0,
+  "bos_token_id": 151643,
+  "eos_token_id": 151645,
+  "hidden_act": "silu",
+  "hidden_size": 896,
+  "initializer_range": 0.02,
+  "intermediate_size": 4864,
+  "max_position_embeddings": 32768,
+  "max_window_layers": 21,
+  "model_type": "qwen2",
+  "num_attention_heads": 14,
+  "num_hidden_layers": 24,
+  "num_key_value_heads": 2,
+  "rms_norm_eps": 1e-06,
+  "rope_theta": 1000000.0,
+  "sliding_window": 32768,
+  "tie_word_embeddings": true,
+  "torch_dtype": "bfloat16",
+  "transformers_version": "4.43.1",
+  "use_cache": true,
+  "use_sliding_window": false,
+  "vocab_size": 166000
+}
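The attention geometry implied by this config can be worked out with a little arithmetic. The sketch below assumes the usual grouped-query attention layout, where the per-head dimension is `hidden_size / num_attention_heads` and several query heads share one key/value head, and it assumes a key/value cache stored in bfloat16 (2 bytes per value). The variable names are illustrative.

```python
# Values copied from LLM/config.json in this commit.
hidden_size = 896
num_attention_heads = 14
num_key_value_heads = 2
num_hidden_layers = 24
bytes_per_value = 2  # assumption: cache kept in bfloat16, matching torch_dtype

# Assumption: head_dim is hidden_size divided evenly across the query heads.
head_dim = hidden_size // num_attention_heads

# Number of query heads that share each key/value head.
queries_per_kv_head = num_attention_heads // num_key_value_heads

# Keys and values (factor 2) for every layer and every key/value head.
kv_cache_bytes_per_token = (
    2 * num_hidden_layers * num_key_value_heads * head_dim * bytes_per_value
)

print(head_dim, queries_per_kv_head, kv_cache_bytes_per_token)
```

This is only a back-of-the-envelope estimate; the actual memory use depends on the runtime and on how the cache is allocated.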

LLM/merges.txt

ADDED

The diff for this file is too large to render.

LLM/model.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:54825baf0a2f6076eb3c78fa1d22a95aee225f59070a8b295f8169db860eb109
+size 2026568968

LLM/special_tokens_map.json

ADDED

@@ -0,0 +1,31 @@
+{
+  "additional_special_tokens": [
+    "<|im_start|>",
+    "<|im_end|>",
+    "<|object_ref_start|>",
+    "<|object_ref_end|>",
+    "<|box_start|>",
+    "<|box_end|>",
+    "<|quad_start|>",
+    "<|quad_end|>",
+    "<|vision_start|>",
+    "<|vision_end|>",
+    "<|vision_pad|>",
+    "<|image_pad|>",
+    "<|video_pad|>"
+  ],
+  "eos_token": {
+    "content": "<|im_end|>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  },
+  "pad_token": {
+    "content": "<|endoftext|>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  }
+}

LLM/tokenizer.json

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:9c8b057d6ca205a429cc3428b9fc815f0d6ee1d53106dd5e5b129ef9db2ff057
+size 14129172

LLM/tokenizer_config.json

ADDED

The diff for this file is too large to render.

LLM/vocab.json

ADDED

The diff for this file is too large to render.

README.md

CHANGED

|

@@ -1,3 +1,154 @@

|

|

| 1 |

---

|

| 2 |

license: apache-2.0

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 3 |

---

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

---

|

| 2 |

license: apache-2.0

|

| 3 |

+

language:

|

| 4 |

+

- en

|

| 5 |

+

- zh

|

| 6 |

+

tags:

|

| 7 |

+

- text-to-speech

|

| 8 |

+

library_tag: spark-tts

|

| 9 |

---

|

| 10 |

+

|

| 11 |

+

|

| 12 |

+

<div align="center">

|

| 13 |

+

<h1>

|

| 14 |

+

Spark-TTS

|

| 15 |

+

</h1>

|

| 16 |

+

<p>

|

| 17 |

+

Official model for <br>

|

| 18 |

+

<b><em>Spark-TTS: An Efficient LLM-Based Text-to-Speech Model with Single-Stream Decoupled Speech Tokens</em></b>

|

| 19 |

+

</p>

|

| 20 |

+

<p>

|

| 21 |

+

<img src="src/logo/SparkTTS.png" alt="Spark-TTS Logo" style="width: 200px; height: 200px;">

|

| 22 |

+

</p>

|

| 23 |

+

</div>

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

## Spark-TTS 🔥

|

| 27 |

+

|

| 28 |

+

### 👉🏻 [Spark-TTS Demos](https://sparkaudio.github.io/spark-tts/) 👈🏻

|

| 29 |

+

|

| 30 |

+

### 👉🏻 [Github Repo](https://github.com/SparkAudio/Spark-TTS) 👈🏻

|

| 31 |

+

|

| 32 |

+

### 👉🏻 [Paper](https://arxiv.org/pdf/2503.01710) 👈🏻

|

| 33 |

+

|

| 34 |

+

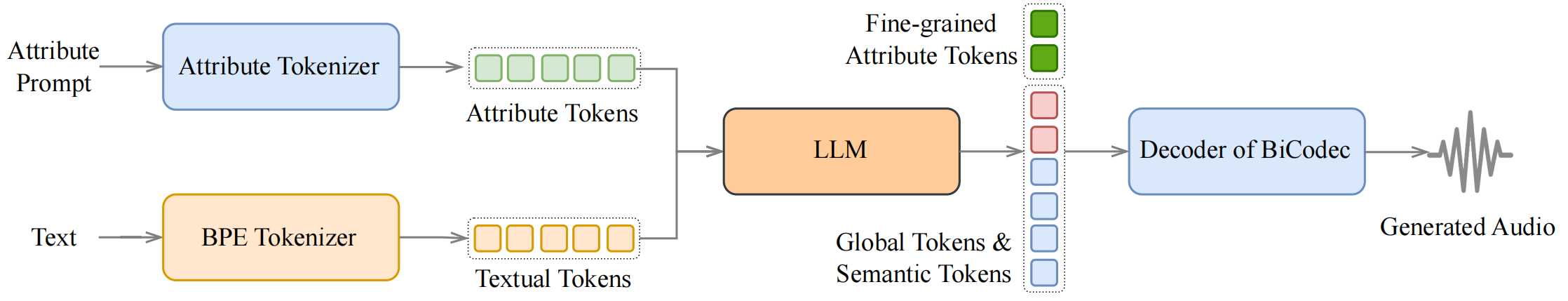

### Overview

|

| 35 |

+

|

| 36 |

+

Spark-TTS is an advanced text-to-speech system that uses the power of large language models (LLM) for highly accurate and natural-sounding voice synthesis. It is designed to be efficient, flexible, and powerful for both research and production use.

|

| 37 |

+

|

| 38 |

+

### Key Features

|

| 39 |

+

|

| 40 |

+

- **Simplicity and Efficiency**: Built entirely on Qwen2.5, Spark-TTS eliminates the need for additional generation models like flow matching. Instead of relying on separate models to generate acoustic features, it directly reconstructs audio from the code predicted by the LLM. This approach streamlines the process, improving efficiency and reducing complexity.

|

| 41 |

+

- **High-Quality Voice Cloning**: Supports zero-shot voice cloning, which means it can replicate a speaker's voice even without specific training data for that voice. This is ideal for cross-lingual and code-switching scenarios, allowing for seamless transitions between languages and voices without requiring separate training for each one.

|

| 42 |

+

- **Bilingual Support**: Supports both Chinese and English, and is capable of zero-shot voice cloning for cross-lingual and code-switching scenarios, enabling the model to synthesize speech in multiple languages with high naturalness and accuracy.

|

| 43 |

+

- **Controllable Speech Generation**: Supports creating virtual speakers by adjusting parameters such as gender, pitch, and speaking rate.

|

| 44 |

+

|

| 45 |

+

---

|

| 46 |

+

|

| 47 |

+

<table align="center">

|

| 48 |

+

<tr>

|

| 49 |

+

<td align="center"><b>Inference Overview of Voice Cloning</b><br><img src="src/figures/infer_voice_cloning.png" width="80%" /></td>

|

| 50 |

+

</tr>

|

| 51 |

+

<tr>

|

| 52 |

+

<td align="center"><b>Inference Overview of Controlled Generation</b><br><img src="src/figures/infer_control.png" width="80%" /></td>

|

| 53 |

+

</tr>

|

| 54 |

+

</table>

|

| 55 |

+

|

| 56 |

+

|

| 57 |

+

## Install

|

| 58 |

+

**Clone and Install**

|

| 59 |

+

|

| 60 |

+

- Clone the repo

|

| 61 |

+

``` sh

|

| 62 |

+

git clone https://github.com/SparkAudio/Spark-TTS.git

|

| 63 |

+

cd Spark-TTS

|

| 64 |

+

```

|

| 65 |

+

|

| 66 |

+

- Install Conda: please see https://docs.conda.io/en/latest/miniconda.html

|

| 67 |

+

- Create Conda env:

|

| 68 |

+

|

| 69 |

+

``` sh

|

| 70 |

+

conda create -n sparktts -y python=3.12

|

| 71 |

+

conda activate sparktts

|

| 72 |

+

pip install -r requirements.txt

|

| 73 |

+

# If you are in mainland China, you can set the mirror as follows:

|

| 74 |

+

pip install -r requirements.txt -i https://mirrors.aliyun.com/pypi/simple/ --trusted-host=mirrors.aliyun.com

|

| 75 |

+

```

|

| 76 |

+

|

| 77 |

+

**Model Download**

|

| 78 |

+

|

| 79 |

+

Download via python:

|

| 80 |

+

```python

|

| 81 |

+

from huggingface_hub import snapshot_download

|

| 82 |

+

|

| 83 |

+

snapshot_download("SparkAudio/Spark-TTS-0.5B", local_dir="pretrained_models/Spark-TTS-0.5B")

|

| 84 |

+

```

|

| 85 |

+

|

| 86 |

+

Download via git clone:

|

| 87 |

+

```sh

|

| 88 |

+

mkdir -p pretrained_models

|

| 89 |

+

|

| 90 |

+

# Make sure you have git-lfs installed (https://git-lfs.com)

|

| 91 |

+

git lfs install

|

| 92 |

+

|

| 93 |

+

git clone https://huggingface.co/SparkAudio/Spark-TTS-0.5B pretrained_models/Spark-TTS-0.5B

|

| 94 |

+

```

|

| 95 |

+

|

| 96 |

+

**Basic Usage**

|

| 97 |

+

|

| 98 |

+

You can simply run the demo with the following commands:

|

| 99 |

+

``` sh

|

| 100 |

+

cd example

|

| 101 |

+

bash infer.sh

|

| 102 |

+

```

|

| 103 |

+

|

| 104 |

+

Alternatively, you can directly execute the following command in the command line to perform inference:

|

| 105 |

+

|

| 106 |

+

``` sh

|

| 107 |

+

python -m cli.inference \

|

| 108 |

+

--text "text to synthesis." \

|

| 109 |

+

--device 0 \

|

| 110 |

+

--save_dir "path/to/save/audio" \

|

| 111 |

+

--model_dir pretrained_models/Spark-TTS-0.5B \

|

| 112 |

+

--prompt_text "transcript of the prompt audio" \

|

| 113 |

+

--prompt_speech_path "path/to/prompt_audio"

|

| 114 |

+

```

|

| 115 |

+

|

| 116 |

+

**UI Usage**

|

| 117 |

+

|

| 118 |

+

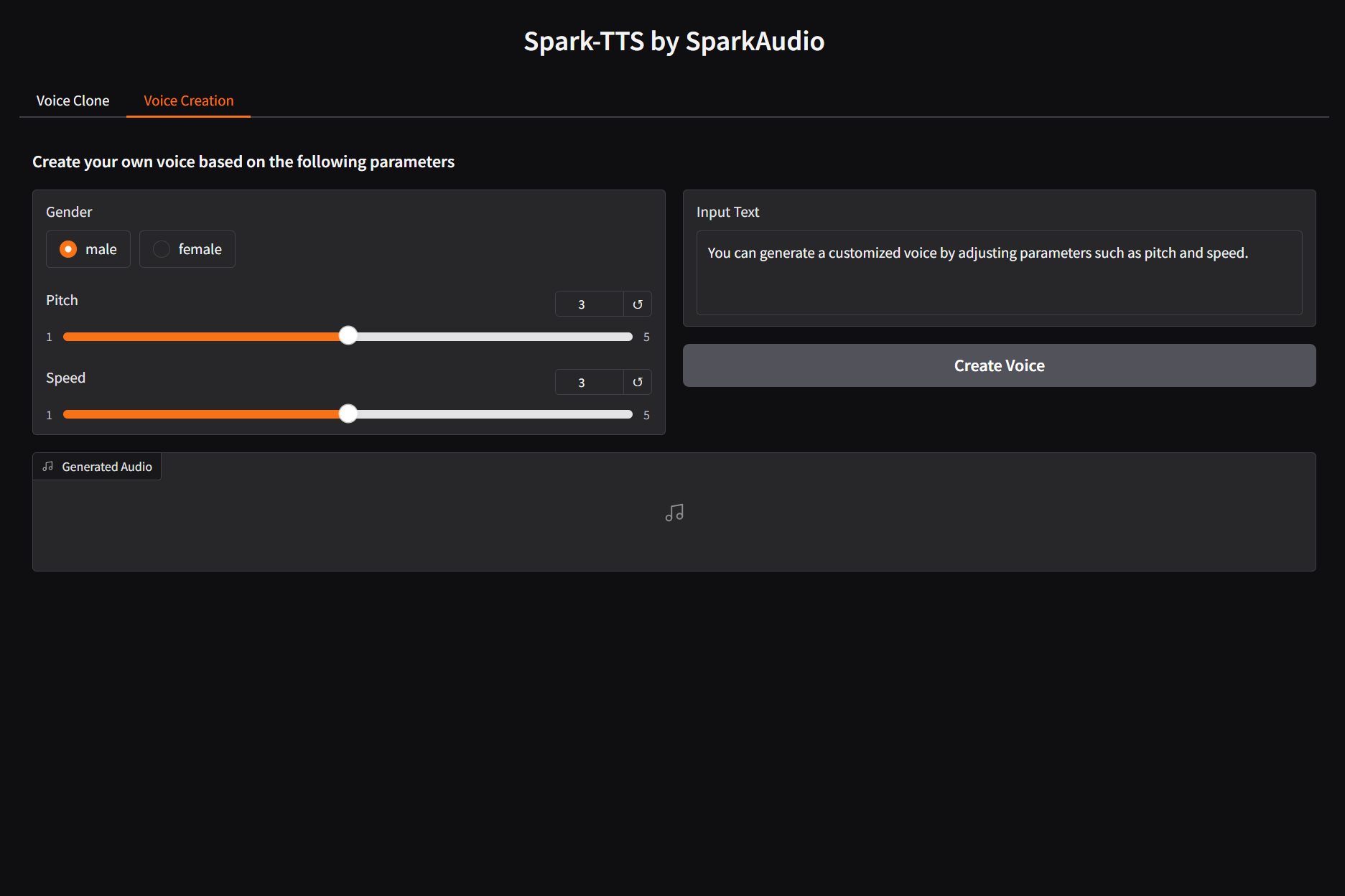

You can start the UI interface by running `python webui.py`, which allows you to perform Voice Cloning and Voice Creation. Voice Cloning supports uploading reference audio or directly recording the audio.

|

| 119 |

+

|

| 120 |

+

|

| 121 |

+

| **Voice Cloning** | **Voice Creation** |

|

| 122 |

+

|:-------------------:|:-------------------:|

|

| 123 |

+

|  |  |

|

| 124 |

+

|

| 125 |

+

|

| 126 |

+

|

| 127 |

+

## Citation

|

| 128 |

+

|

| 129 |

+

```

|

| 130 |

+

@misc{wang2025sparktts,

|

| 131 |

+

title={Spark-TTS: An Efficient LLM-Based Text-to-Speech Model with Single-Stream Decoupled Speech Tokens},

|

| 132 |

+

author={Xinsheng Wang and Mingqi Jiang and Ziyang Ma and Ziyu Zhang and Songxiang Liu and Linqin Li and Zheng Liang and Qixi Zheng and Rui Wang and Xiaoqin Feng and Weizhen Bian and Zhen Ye and Sitong Cheng and Ruibin Yuan and Zhixian Zhao and Xinfa Zhu and Jiahao Pan and Liumeng Xue and Pengcheng Zhu and Yunlin Chen and Zhifei Li and Xie Chen and Lei Xie and Yike Guo and Wei Xue},

|

| 133 |

+

year={2025},

|

| 134 |

+

eprint={2503.01710},

|

| 135 |

+

archivePrefix={arXiv},

|

| 136 |

+

primaryClass={cs.SD},

|

| 137 |

+

url={https://arxiv.org/abs/2503.01710},

|

| 138 |

+

}

|

| 139 |

+

```

|

| 140 |

+

|

| 141 |

+

|

| 142 |

+

## ⚠️ Usage Disclaimer

|

| 143 |

+

|

| 144 |

+

This project provides a zero-shot voice cloning TTS model intended for academic research, educational purposes, and legitimate applications, such as personalized speech synthesis, assistive technologies, and linguistic research.

|

| 145 |

+

|

| 146 |

+

Please note:

|

| 147 |

+

|

| 148 |

+

- Do not use this model for unauthorized voice cloning, impersonation, fraud, scams, deepfakes, or any illegal activities.

|

| 149 |

+

|

| 150 |

+

- Ensure compliance with local laws and regulations when using this model and uphold ethical standards.

|

| 151 |

+

|

| 152 |

+

- The developers assume no liability for any misuse of this model.

|

| 153 |

+

|

| 154 |

+

We advocate for the responsible development and use of AI and encourage the community to uphold safety and ethical principles in AI research and applications. If you have any concerns regarding ethics or misuse, please contact us.

|
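The command-line invocation shown in the README can also be assembled programmatically, for example when scripting several syntheses. The sketch below only builds and prints the argument list; it uses exactly the flags that appear in the README and nothing else. `build_inference_command` is a hypothetical helper introduced for this illustration, and the paths are the README's own placeholders.

```python
import shlex


def build_inference_command(text, save_dir, model_dir, prompt_text,
                            prompt_speech_path, device=0):
    """Return the argv list for the README's `python -m cli.inference` call."""
    return [
        "python", "-m", "cli.inference",
        "--text", text,
        "--device", str(device),
        "--save_dir", save_dir,
        "--model_dir", model_dir,
        "--prompt_text", prompt_text,
        "--prompt_speech_path", prompt_speech_path,
    ]


command = build_inference_command(
    text="text to synthesis.",
    save_dir="path/to/save/audio",
    model_dir="pretrained_models/Spark-TTS-0.5B",
    prompt_text="transcript of the prompt audio",
    prompt_speech_path="path/to/prompt_audio",
)

# Print a shell-safe version of the command for inspection.
print(shlex.join(command))
```

Passing the list to `subprocess.run(command, check=True)` from the root of the cloned Spark-TTS repository would be one way to execute it. Keeping the arguments as a list avoids shell quoting problems when the text contains spaces or quotes.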

config.yaml

ADDED

@@ -0,0 +1,7 @@
+highpass_cutoff_freq: 40
+sample_rate: 16000
+segment_duration: 2.4 # (s)
+max_val_duration: 12 # (s)
+latent_hop_length: 320
+ref_segment_duration: 6
+volume_normalize: true
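The durations in this config translate into sample and frame counts once combined with `sample_rate` and `latent_hop_length`. The sketch below does that conversion. It assumes that `ref_segment_duration` is in seconds like the two annotated fields, and that `latent_hop_length` is the number of waveform samples per latent frame; neither is stated in the diff.

```python
# Values copied from config.yaml in this commit.
sample_rate = 16000
segment_duration = 2.4       # seconds, per the "(s)" comment
max_val_duration = 12        # seconds, per the "(s)" comment
ref_segment_duration = 6     # assumption: also seconds
latent_hop_length = 320      # assumption: samples per latent frame


def duration_to_samples(seconds: float) -> int:
    """Convert a duration in seconds to a whole number of samples."""
    return round(seconds * sample_rate)


segment_samples = duration_to_samples(segment_duration)
segment_frames = segment_samples // latent_hop_length
ref_segment_samples = duration_to_samples(ref_segment_duration)
max_val_samples = duration_to_samples(max_val_duration)

print(segment_samples, segment_frames, ref_segment_samples, max_val_samples)
```

`round` is used instead of `int` so that a floating-point product such as `2.4 * 16000` cannot be truncated to one sample short.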

src/figures/gradio_TTS.png

ADDED

src/figures/gradio_control.png

ADDED

src/figures/infer_control.png

ADDED

src/figures/infer_voice_cloning.png

ADDED

src/logo/HKUST.jpg

ADDED

src/logo/NPU.jpg

ADDED

src/logo/NTU.jpg

ADDED

src/logo/SJU.jpg

ADDED

src/logo/SparkAudio.jpg

ADDED

src/logo/SparkAudio2.jpg

ADDED

src/logo/SparkTTS.png

ADDED

src/logo/mobvoi.jpg

ADDED

src/logo/mobvoi.png

ADDED

wav2vec2-large-xlsr-53/README.md

ADDED

|

@@ -0,0 +1,29 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

language: multilingual

|

| 3 |

+

datasets:

|

| 4 |

+

- common_voice

|

| 5 |

+

tags:

|

| 6 |

+

- speech

|

| 7 |

+

license: apache-2.0

|

| 8 |

+

---

|

| 9 |

+

|

| 10 |

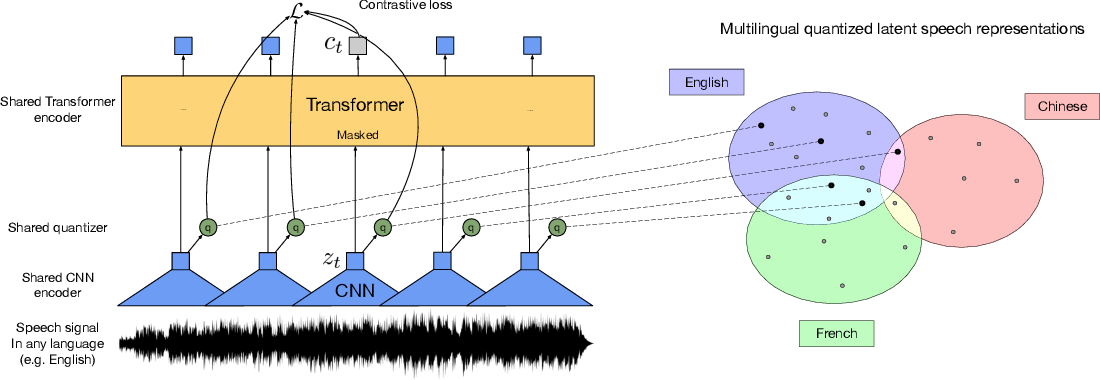

+

# Wav2Vec2-XLSR-53

|

| 11 |

+

|

| 12 |

+

[Facebook's XLSR-Wav2Vec2](https://ai.facebook.com/blog/wav2vec-20-learning-the-structure-of-speech-from-raw-audio/)

|

| 13 |

+

|

| 14 |

+

The base model pretrained on 16kHz sampled speech audio. When using the model make sure that your speech input is also sampled at 16Khz. Note that this model should be fine-tuned on a downstream task, like Automatic Speech Recognition. Check out [this blog](https://huggingface.co/blog/fine-tune-wav2vec2-english) for more information.

|

| 15 |

+

|

| 16 |

+

[Paper](https://arxiv.org/abs/2006.13979)

|

| 17 |

+

|

| 18 |

+

Authors: Alexis Conneau, Alexei Baevski, Ronan Collobert, Abdelrahman Mohamed, Michael Auli

|

| 19 |

+

|

| 20 |

+

**Abstract**

|

| 21 |

+

This paper presents XLSR which learns cross-lingual speech representations by pretraining a single model from the raw waveform of speech in multiple languages. We build on wav2vec 2.0 which is trained by solving a contrastive task over masked latent speech representations and jointly learns a quantization of the latents shared across languages. The resulting model is fine-tuned on labeled data and experiments show that cross-lingual pretraining significantly outperforms monolingual pretraining. On the CommonVoice benchmark, XLSR shows a relative phoneme error rate reduction of 72% compared to the best known results. On BABEL, our approach improves word error rate by 16% relative compared to a comparable system. Our approach enables a single multilingual speech recognition model which is competitive to strong individual models. Analysis shows that the latent discrete speech representations are shared across languages with increased sharing for related languages. We hope to catalyze research in low-resource speech understanding by releasing XLSR-53, a large model pretrained in 53 languages.

|

| 22 |

+

|

| 23 |

+

The original model can be found under https://github.com/pytorch/fairseq/tree/master/examples/wav2vec#wav2vec-20.

|

| 24 |

+

|

| 25 |

+

# Usage

|

| 26 |

+

|

| 27 |

+

See [this notebook](https://colab.research.google.com/github/patrickvonplaten/notebooks/blob/master/Fine_Tune_XLSR_Wav2Vec2_on_Turkish_ASR_with_%F0%9F%A4%97_Transformers.ipynb) for more information on how to fine-tune the model.

|

| 28 |

+

|

| 29 |

+

|

wav2vec2-large-xlsr-53/config.json

ADDED

@@ -0,0 +1,83 @@
+{
+  "activation_dropout": 0.0,
+  "apply_spec_augment": true,
+  "architectures": [
+    "Wav2Vec2ForPreTraining"
+  ],
+  "attention_dropout": 0.1,
+  "bos_token_id": 1,
+  "codevector_dim": 768,
+  "contrastive_logits_temperature": 0.1,
+  "conv_bias": true,
+  "conv_dim": [
+    512,
+    512,
+    512,
+    512,
+    512,
+    512,
+    512
+  ],
+  "conv_kernel": [
+    10,
+    3,
+    3,
+    3,
+    3,
+    2,
+    2
+  ],
+  "conv_stride": [
+    5,
+    2,
+    2,
+    2,
+    2,
+    2,
+    2
+  ],
+  "ctc_loss_reduction": "sum",
+  "ctc_zero_infinity": false,
+  "diversity_loss_weight": 0.1,
+  "do_stable_layer_norm": true,
+  "eos_token_id": 2,
+  "feat_extract_activation": "gelu",
+  "feat_extract_dropout": 0.0,
+  "feat_extract_norm": "layer",
+  "feat_proj_dropout": 0.1,
+  "feat_quantizer_dropout": 0.0,
+  "final_dropout": 0.0,
+  "gradient_checkpointing": false,
+  "hidden_act": "gelu",
+  "hidden_dropout": 0.1,
+  "hidden_size": 1024,
+  "initializer_range": 0.02,
+  "intermediate_size": 4096,
+  "layer_norm_eps": 1e-05,
+  "layerdrop": 0.1,
+  "mask_channel_length": 10,
+  "mask_channel_min_space": 1,
+  "mask_channel_other": 0.0,
+  "mask_channel_prob": 0.0,
+  "mask_channel_selection": "static",
+  "mask_feature_length": 10,
+  "mask_feature_prob": 0.0,
+  "mask_time_length": 10,
+  "mask_time_min_space": 1,
+  "mask_time_other": 0.0,
+  "mask_time_prob": 0.075,
+  "mask_time_selection": "static",
+  "model_type": "wav2vec2",
+  "num_attention_heads": 16,
+  "num_codevector_groups": 2,
+  "num_codevectors_per_group": 320,
+  "num_conv_pos_embedding_groups": 16,
+  "num_conv_pos_embeddings": 128,
+  "num_feat_extract_layers": 7,
+  "num_hidden_layers": 24,
+  "num_negatives": 100,
+  "pad_token_id": 0,
+  "proj_codevector_dim": 768,
+  "transformers_version": "4.7.0.dev0",
+  "vocab_size": 32
+}
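The `conv_kernel` and `conv_stride` lists determine how many feature frames the convolutional front end produces for a given waveform. The sketch below works this out, assuming seven stacked 1-D convolutions without padding and a 16 kHz input (the rate given in the model's README). The function names are illustrative and do not come from the Transformers library.

```python
import math

# Values copied from wav2vec2-large-xlsr-53/config.json in this commit.
conv_kernel = [10, 3, 3, 3, 3, 2, 2]
conv_stride = [5, 2, 2, 2, 2, 2, 2]
sampling_rate = 16000


def num_output_frames(num_samples: int) -> int:
    """Frames left after applying every unpadded convolution in turn."""
    length = num_samples
    for kernel, stride in zip(conv_kernel, conv_stride):
        length = (length - kernel) // stride + 1
    return length


def receptive_field() -> int:
    """Number of input samples that influence a single output frame."""
    field, jump = 1, 1
    for kernel, stride in zip(conv_kernel, conv_stride):
        field += (kernel - 1) * jump
        jump *= stride
    return field


total_stride = math.prod(conv_stride)
frame_shift_ms = 1000 * total_stride / sampling_rate

print(total_stride, frame_shift_ms, receptive_field(), num_output_frames(sampling_rate))
```

Under these assumptions the total stride of the feature extractor equals the 320-sample hop used in the BiCodec and top-level configs of this commit, so both run at the same frame rate.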

wav2vec2-large-xlsr-53/preprocessor_config.json

ADDED

@@ -0,0 +1,9 @@
+{
+  "do_normalize": true,
+  "feature_extractor_type": "Wav2Vec2FeatureExtractor",
+  "feature_size": 1,
+  "padding_side": "right",
+  "padding_value": 0,
+  "return_attention_mask": true,
+  "sampling_rate": 16000
+}
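To illustrate what `do_normalize`, `padding_side`, and `padding_value` plausibly mean for a raw waveform, here is a small pure-Python sketch. It assumes that normalization means scaling each utterance to zero mean and unit variance, and that padding appends `padding_value` on the right. The epsilon of `1e-7` and both function names are assumptions made for this illustration, not a copy of the library's implementation.

```python
import math


def normalize_waveform(samples, eps=1e-7):
    """Scale one utterance to zero mean and (approximately) unit variance."""
    mean = sum(samples) / len(samples)
    variance = sum((s - mean) ** 2 for s in samples) / len(samples)
    return [(s - mean) / math.sqrt(variance + eps) for s in samples]


def pad_right(samples, target_length, padding_value=0.0):
    """Append padding_value until the sequence reaches target_length."""
    missing = max(0, target_length - len(samples))
    return list(samples) + [padding_value] * missing


waveform = [0.1, -0.2, 0.3, 0.0]
normalized = normalize_waveform(waveform)
padded = pad_right(normalized, target_length=6)

print(len(padded), sum(normalized) / len(normalized))
```

In practice one would load this file through the Transformers feature extractor rather than reimplementing it; the sketch is only meant to make the config fields concrete.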

wav2vec2-large-xlsr-53/pytorch_model.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:314340227371a608f71adcd5f0de5933824fe77e55822aa4b24dba9c1c364dcb
+size 1269737156